
Repository Summary

| Field | Value |
|---|---|
| Checkout URI | https://github.com/manankharwar/fusioncore.git |
| VCS Type | git |
| VCS Version | main |
| Last Updated | 2026-05-26 |
| Dev Status | MAINTAINED |
| Released | RELEASED |


Packages

| Name | Version |
|---|---|
| compass_msgs | 0.2.4 |
| fusioncore_core | 0.2.4 |
| fusioncore_datasets | 0.2.4 |
| fusioncore_gazebo | 0.2.4 |
| fusioncore_ros | 0.2.5 |

README

FusionCore

ROS 2 UKF sensor fusion for robots that run in the real world: IMU + wheel encoders + GPS at 100 Hz. Handles bad calibration, timestamp jitter, delayed GPS, wheel slip, and ARM hardware out of the box. Licensed under Apache 2.0.

Install

Option A: From source (ROS 2 Jazzy on Ubuntu 24.04 or Humble on Ubuntu 22.04):

```bash
mkdir -p ~/ros2_ws/src && cd ~/ros2_ws/src
git clone https://github.com/manankharwar/fusioncore.git
cd ~/ros2_ws
source /opt/ros/jazzy/setup.bash  # or /opt/ros/humble/setup.bash
rosdep install --from-paths src --ignore-src -r -y
colcon build --packages-up-to fusioncore_ros
source install/setup.bash
```

Verify it works (single command, replaces the 4-terminal manual test):

```bash
bash tools/quick_test.sh
```

Starts FusionCore with fake sensors and checks all outputs in about 15 seconds. Prints [PASS] / [FAIL] for each check.

Option B: Docker (no ROS install required)

```bash
docker pull ghcr.io/manankharwar/fusioncore:latest
docker run --rm -it ghcr.io/manankharwar/fusioncore:latest bash
```

Inside the container, verify everything works:

```bash
bash tools/quick_test.sh
```

Works on the hardware you actually have

Most sensor fusion tutorials assume clean data. Real robots don’t have clean data. FusionCore was built around the problems you actually run into.

| The problem | How FusionCore handles it |
|---|---|
| IMU calibration is approximate | Gyro and accel bias are filter states, estimated continuously. init.stationary_window: 2.0 estimates startup bias before motion begins, reducing startup drift from ~10 cm to under 1 cm. |
| Extrinsic calibration is never exact | Reads frame_id from every IMU message and looks up the TF rotation to base_link automatically. Set imu.frame_id to override broken frame names from drivers (e.g. Gazebo TurtleBot3). No manual rotation matrices. |
| Timestamp jitter and zero-stamped drivers | dt is clamped to prevent divergence from missed timer ticks. Falls back to the wall clock for drivers that publish stamp={sec=0}. |
| GPS arrives late (50–200 ms) | The IMU ring buffer replays 1 second of buffered updates when a delayed fix arrives, so the state at the GPS timestamp is reconstructed exactly rather than approximated. |
| Wheel odometry is noisy or slipping | Noise covariance adapts from the innovation sequence. Optional GPS velocity fusion compares GPS-reported speed against wheel speed every cycle: the innovation reveals slip, and the Kalman gain down-weights the slipping wheel automatically. |
| Noise parameters require days of tuning | Two numbers from your IMU datasheet: imu.gyro_noise (ARW) and imu.accel_noise (VRW). Everything else adapts within the first minute of operation. |
| Robot runs on Raspberry Pi or Jetson | Under 0.2 ms per cycle on an i7; under 1 ms on a Raspberry Pi 4. The same source builds for ARM and x86, and Eigen vectorizes with NEON or AVX when the build target enables them. No code or parameter changes. |
| Two IMUs on the platform | Set imu2.topic to fuse a second IMU as an independent measurement. No pre-merging with imu_filter_madgwick needed. |
| GPS drops out in tunnels or canopy | Inertial coast mode maintains position integrity during sustained GPS dropout. The outlier gate relaxes automatically so GPS is reacquired when it returns. |
| Robot sits still for minutes | ZUPT (zero-velocity update) fuses a zero-velocity pseudo-measurement when encoder speed and angular rate are both below threshold. This prevents IMU noise from integrating into position drift during idle periods. |
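The timestamp-jitter row describes two guards: clamping the prediction step dt, and falling back to the wall clock when a driver publishes a zero stamp. The sketch below is one plausible reading of that logic, not FusionCore's code. The function names and the `max_dt` limit of 0.05 s are hypothetical, and timestamps are assumed to be in seconds.

```python
import time
from typing import Callable, Optional


def effective_stamp(stamp_sec: float, wall_clock: Callable[[], float] = time.time) -> float:
    """Use the message stamp, or fall back to the wall clock for zero-stamped messages."""
    # Hypothetical rule: a stamp of exactly zero means the driver never set it.
    if stamp_sec == 0.0:
        return wall_clock()
    return stamp_sec


def clamped_dt(t_now: float, t_prev: Optional[float], max_dt: float = 0.05) -> float:
    """Prediction step size, capped so a missed timer tick cannot cause one huge step."""
    if t_prev is None:
        return 0.0  # first sample: nothing to propagate yet
    dt = t_now - t_prev
    # Out-of-order stamps (dt < 0) are treated as zero; long gaps are capped at max_dt.
    return min(max(dt, 0.0), max_dt)
```

With this sketch, `clamped_dt(10.30, 10.00)` returns 0.05 instead of 0.30. Capping is the simplest reading of "dt is clamped". Whether FusionCore caps, splits, or otherwise handles long gaps is not specified here.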

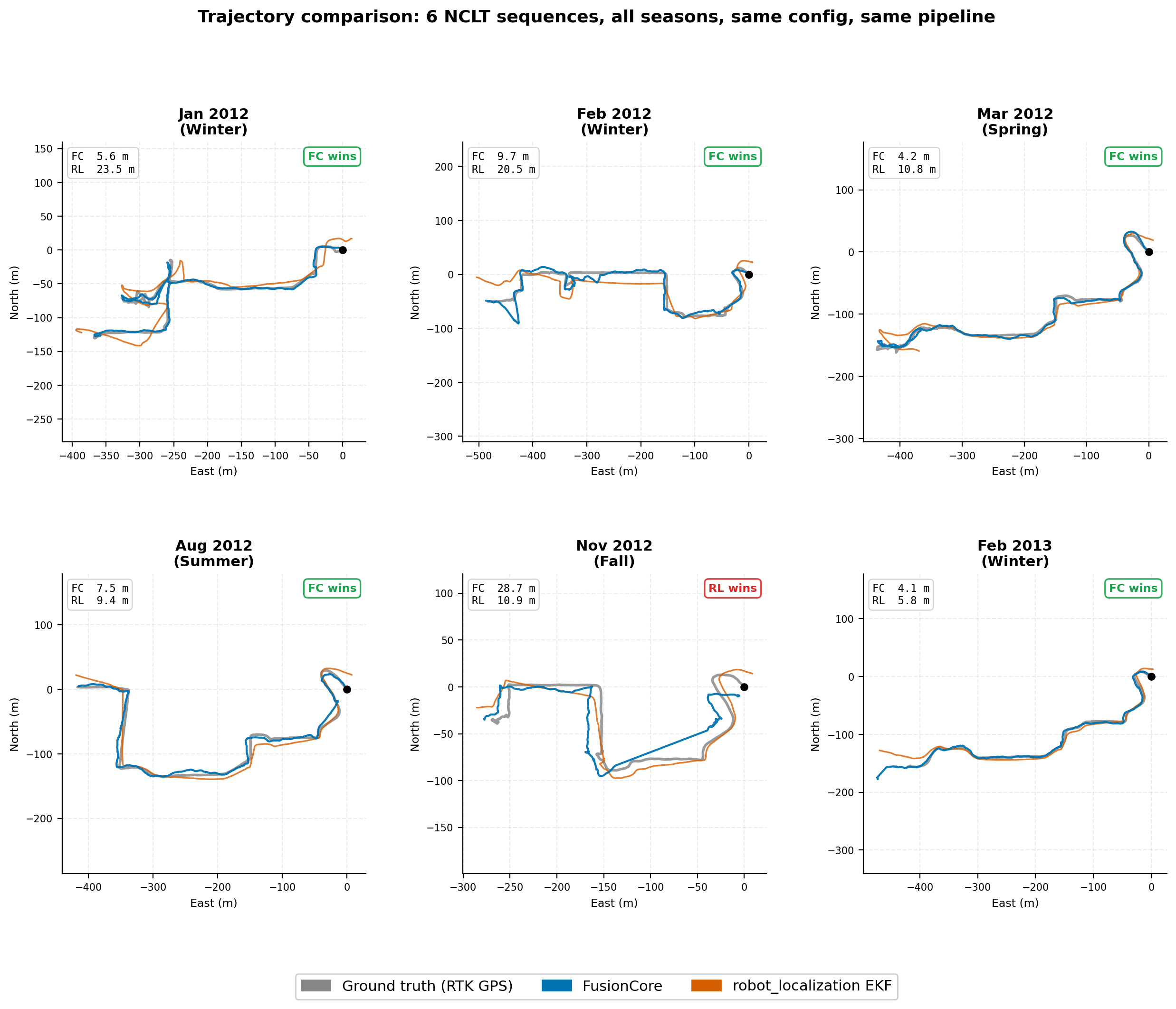

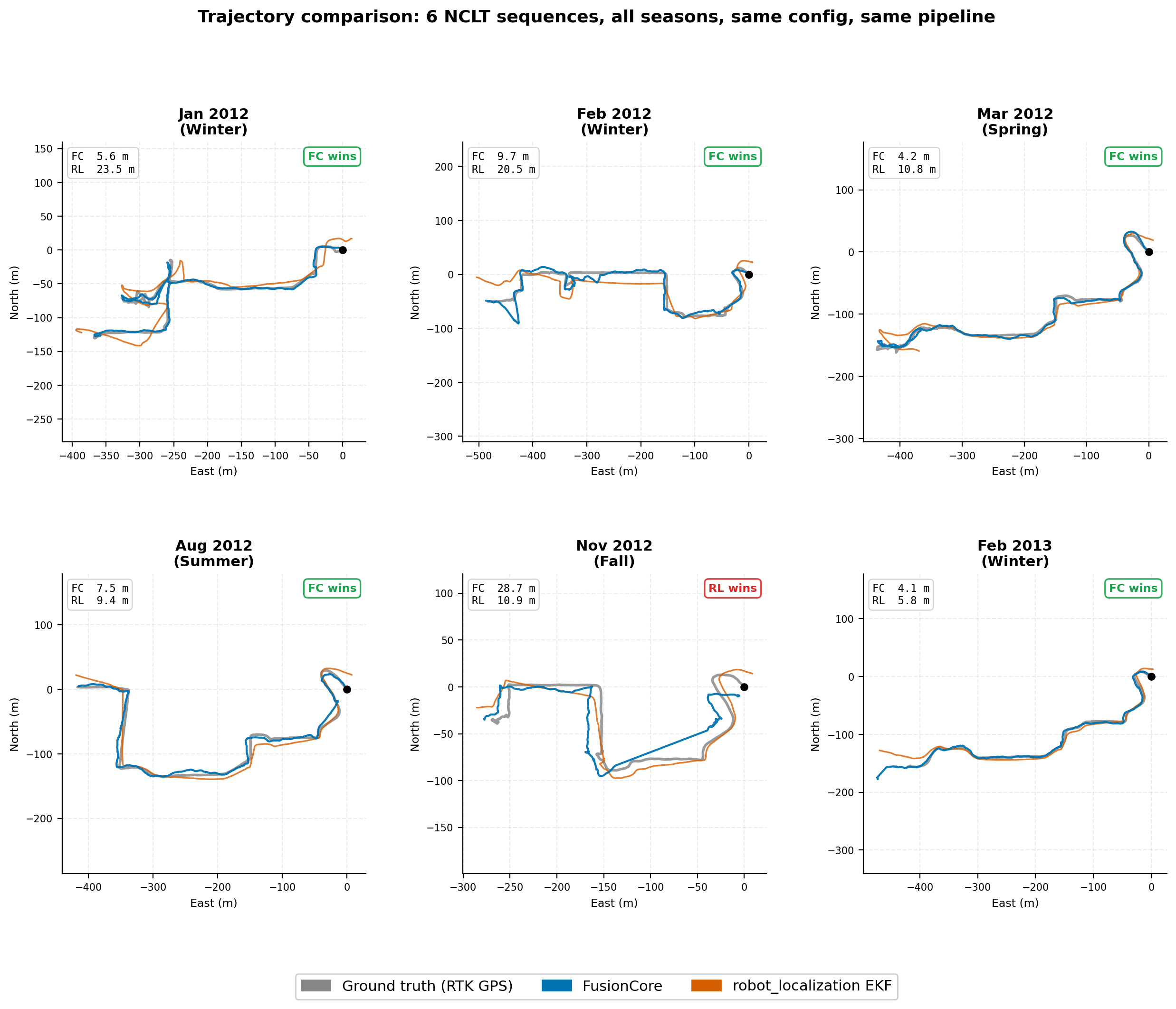

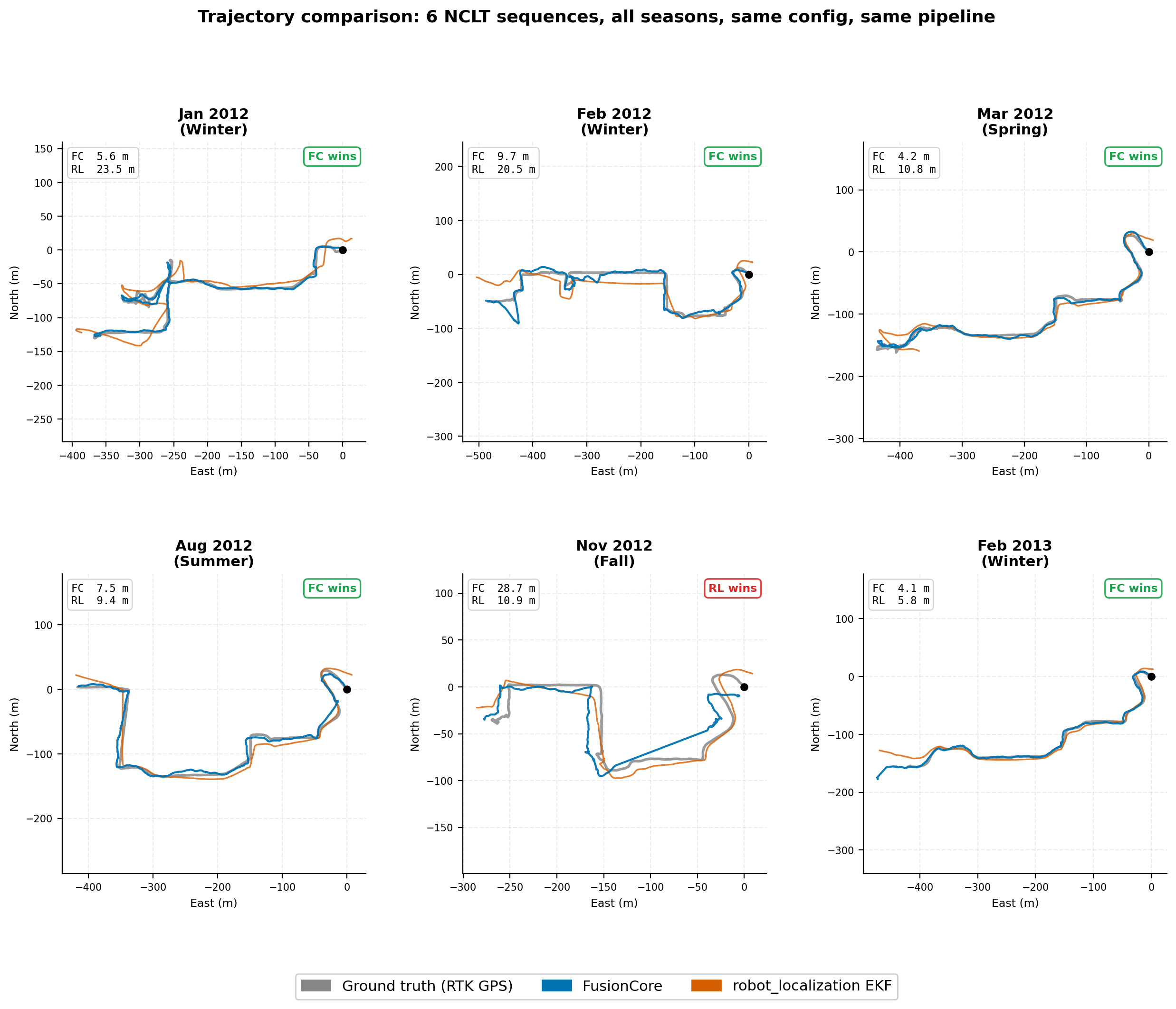

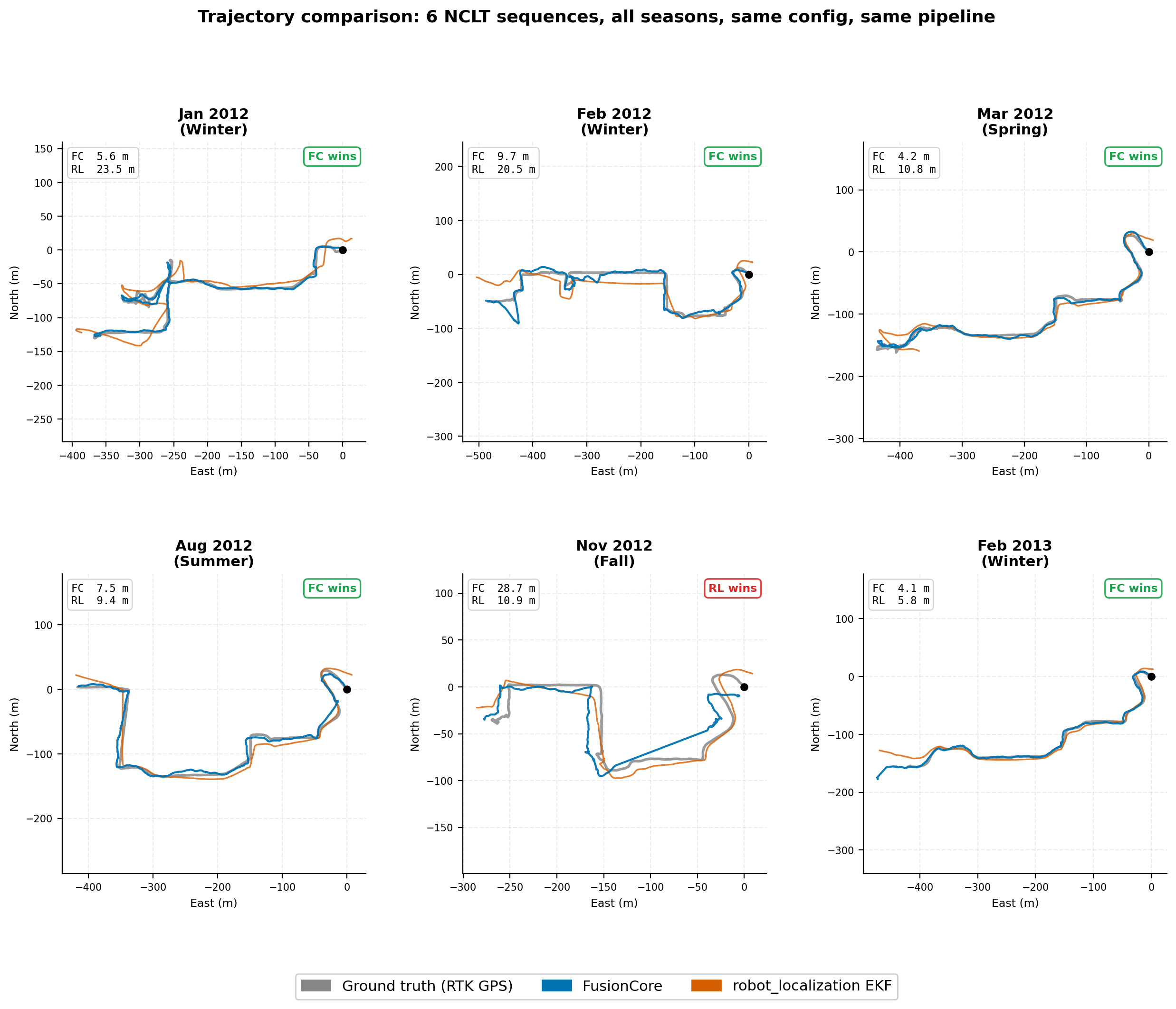

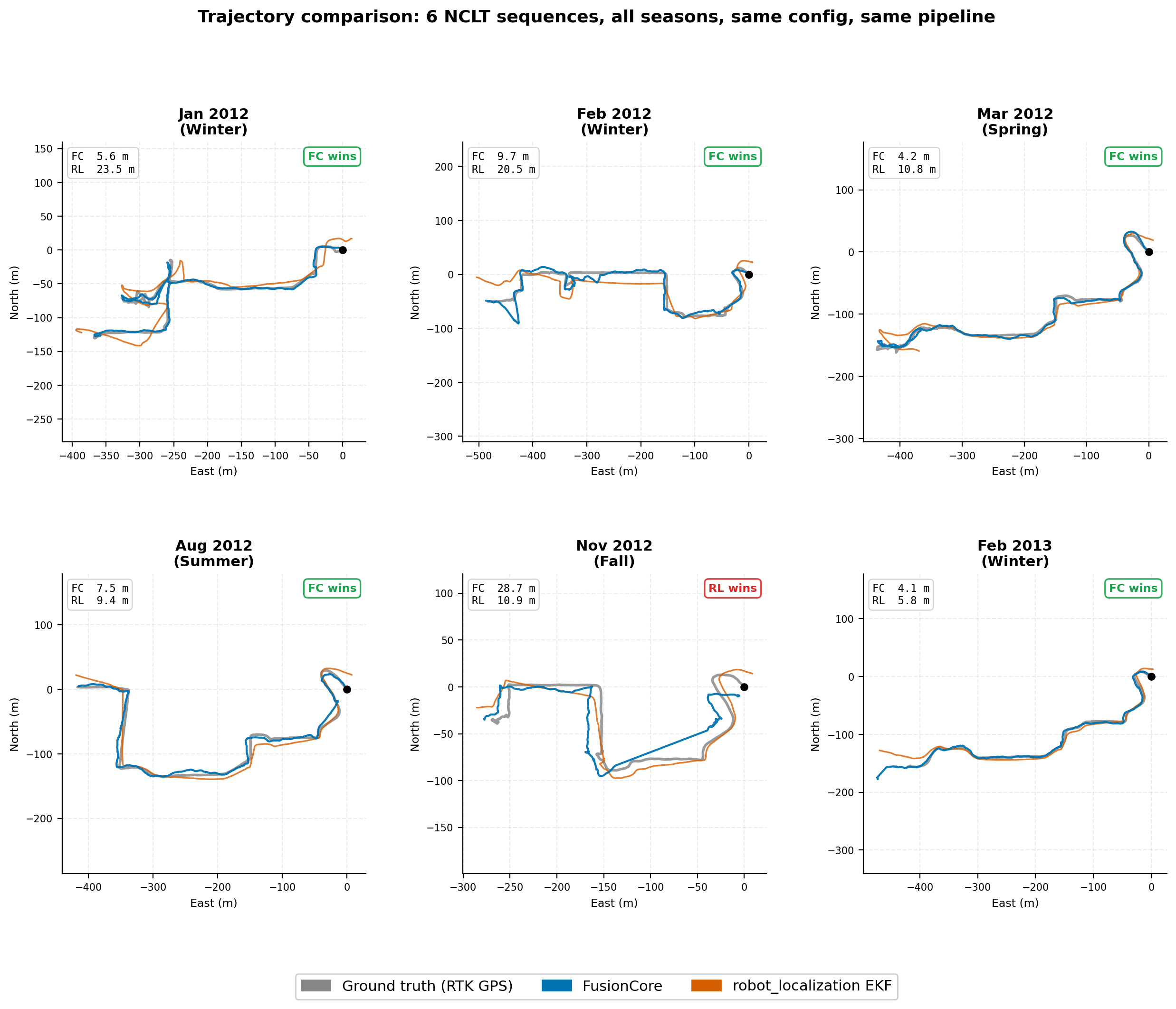
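The ZUPT row amounts to a gate on two signals, followed by a velocity measurement of exactly zero with small but nonzero noise. The sketch below uses hypothetical thresholds (0.01 m/s, 0.01 rad/s) and a hypothetical pseudo-measurement standard deviation. FusionCore's actual parameter names and values may differ.

```python
import math
from typing import Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def zupt_pseudo_measurement(
    wheel_speed: float,
    gyro_rad_s: Sequence[float],
    speed_thresh: float = 0.01,  # m/s, hypothetical
    rate_thresh: float = 0.01,   # rad/s, hypothetical
    sigma_zero: float = 0.001,   # m/s, hypothetical pseudo-measurement std dev
) -> Optional[Tuple[Vec3, Vec3]]:
    """Return (z, R_diag) for a zero-velocity update, or None if the robot is moving."""
    angular_rate = math.sqrt(sum(w * w for w in gyro_rad_s))
    if abs(wheel_speed) < speed_thresh and angular_rate < rate_thresh:
        z = (0.0, 0.0, 0.0)           # measured body velocity: exactly zero
        r = (sigma_zero ** 2,) * 3    # tight, but nonzero, measurement variance
        return z, r
    return None
```

Both conditions must hold. A robot that spins in place (zero wheel speed, nonzero yaw rate) does not trigger the update.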

Benchmark

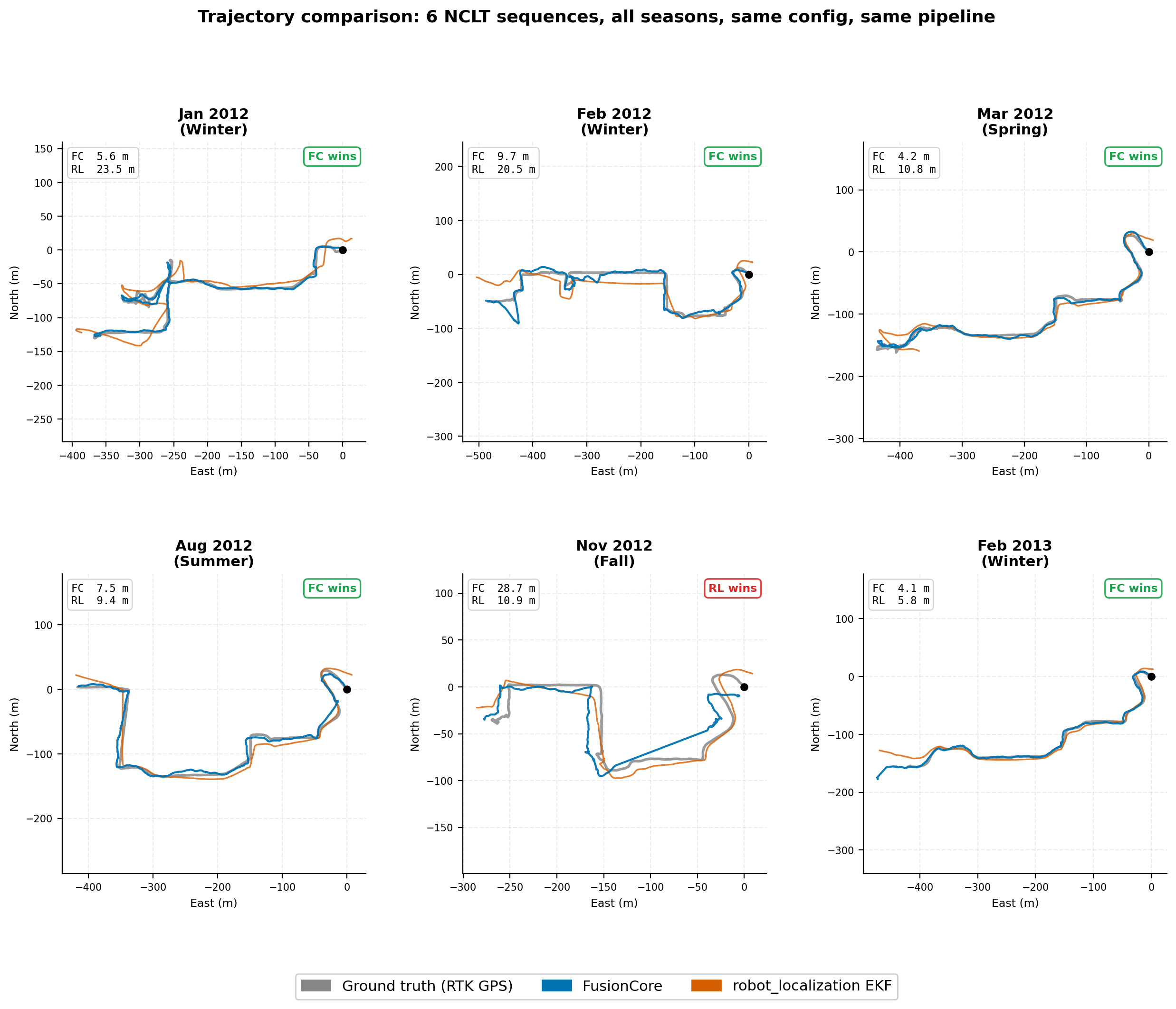

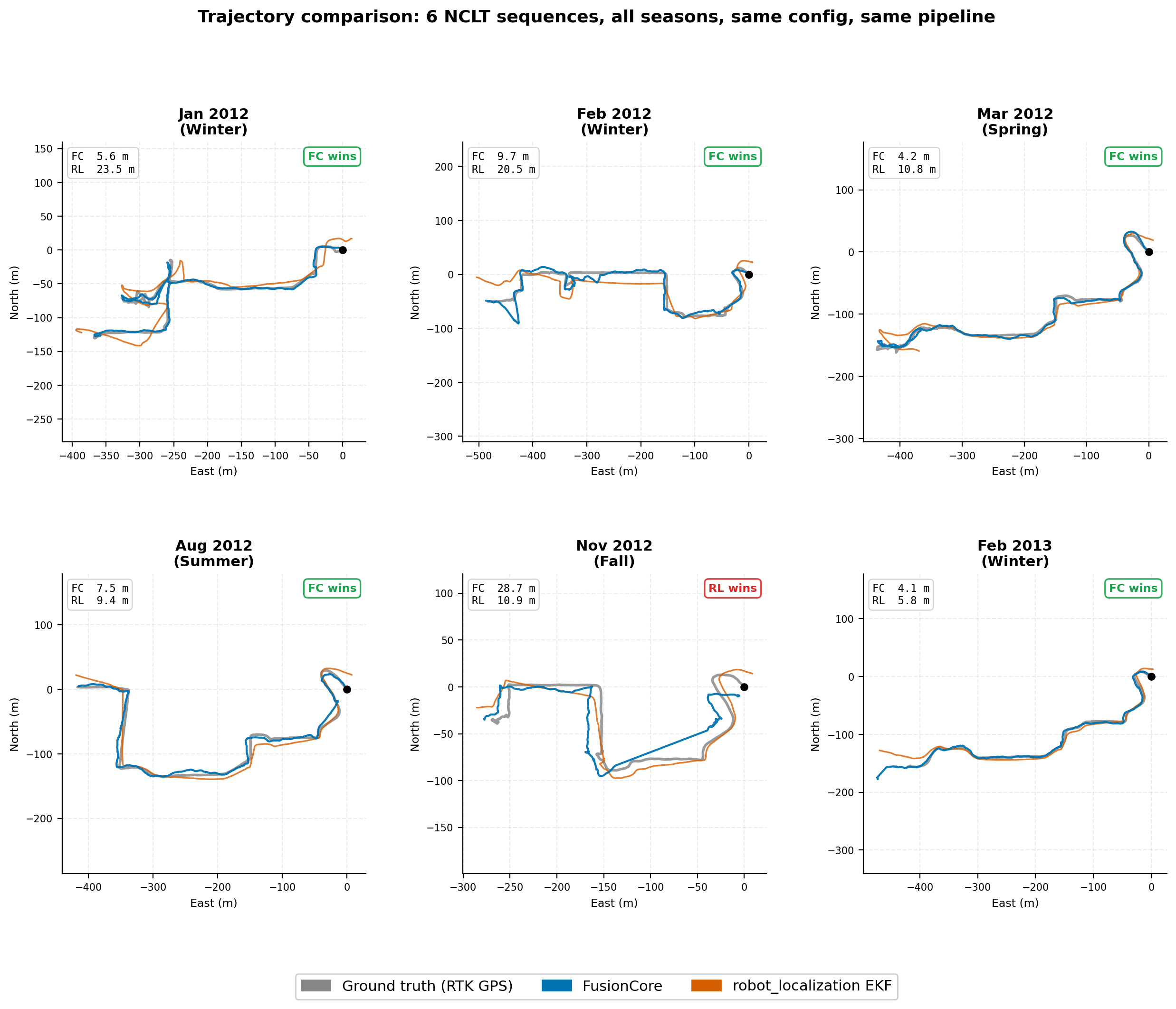

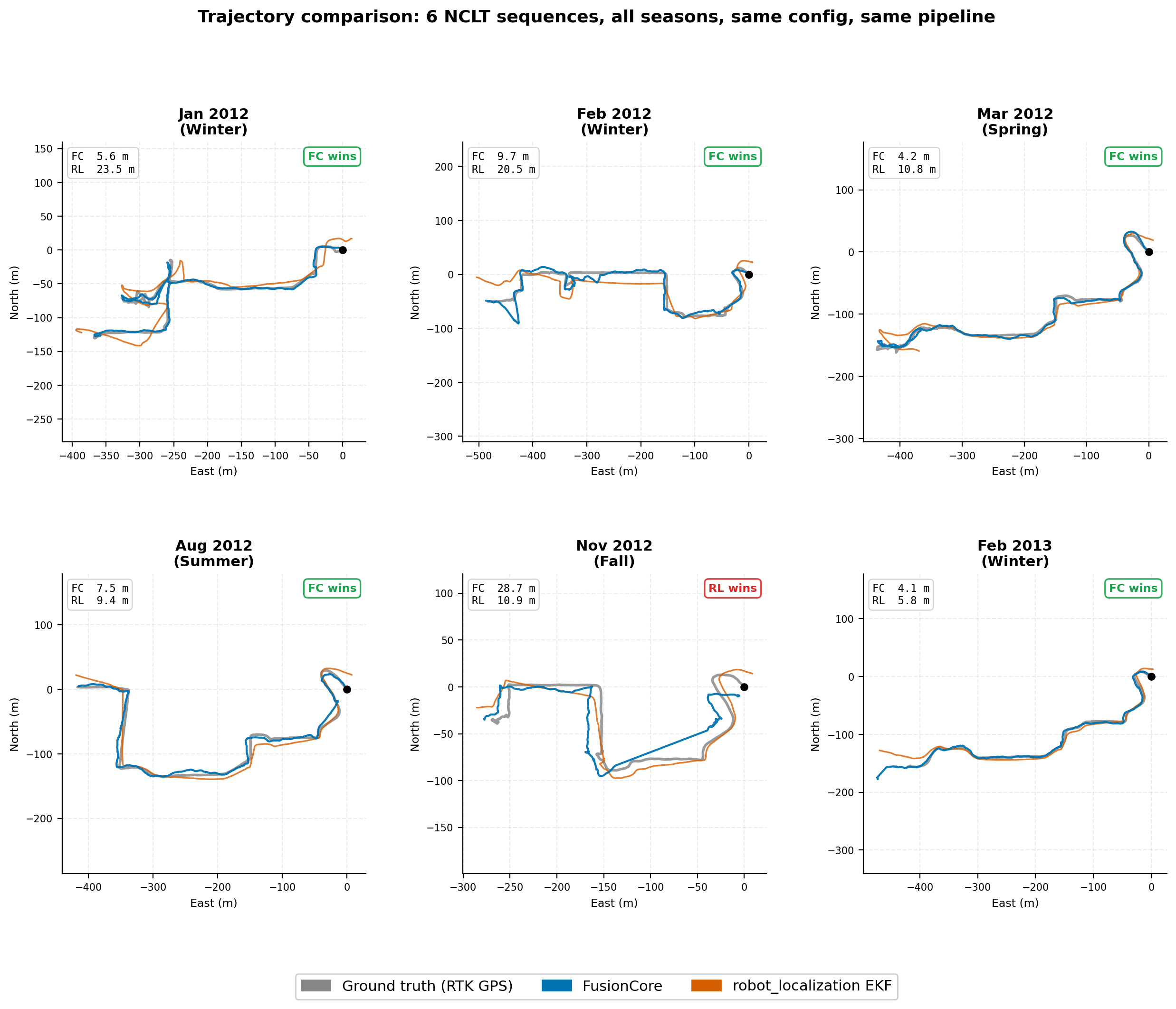

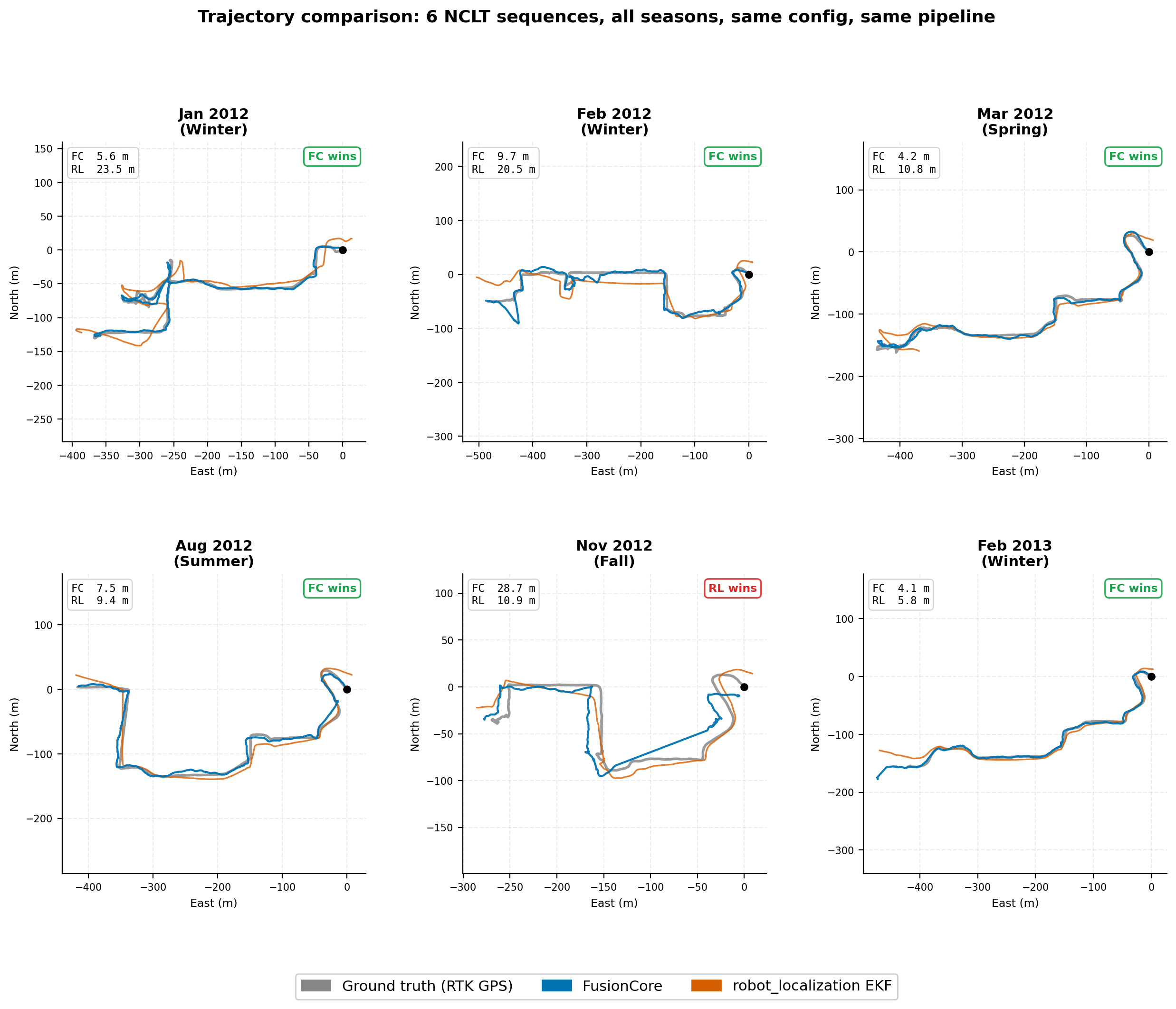

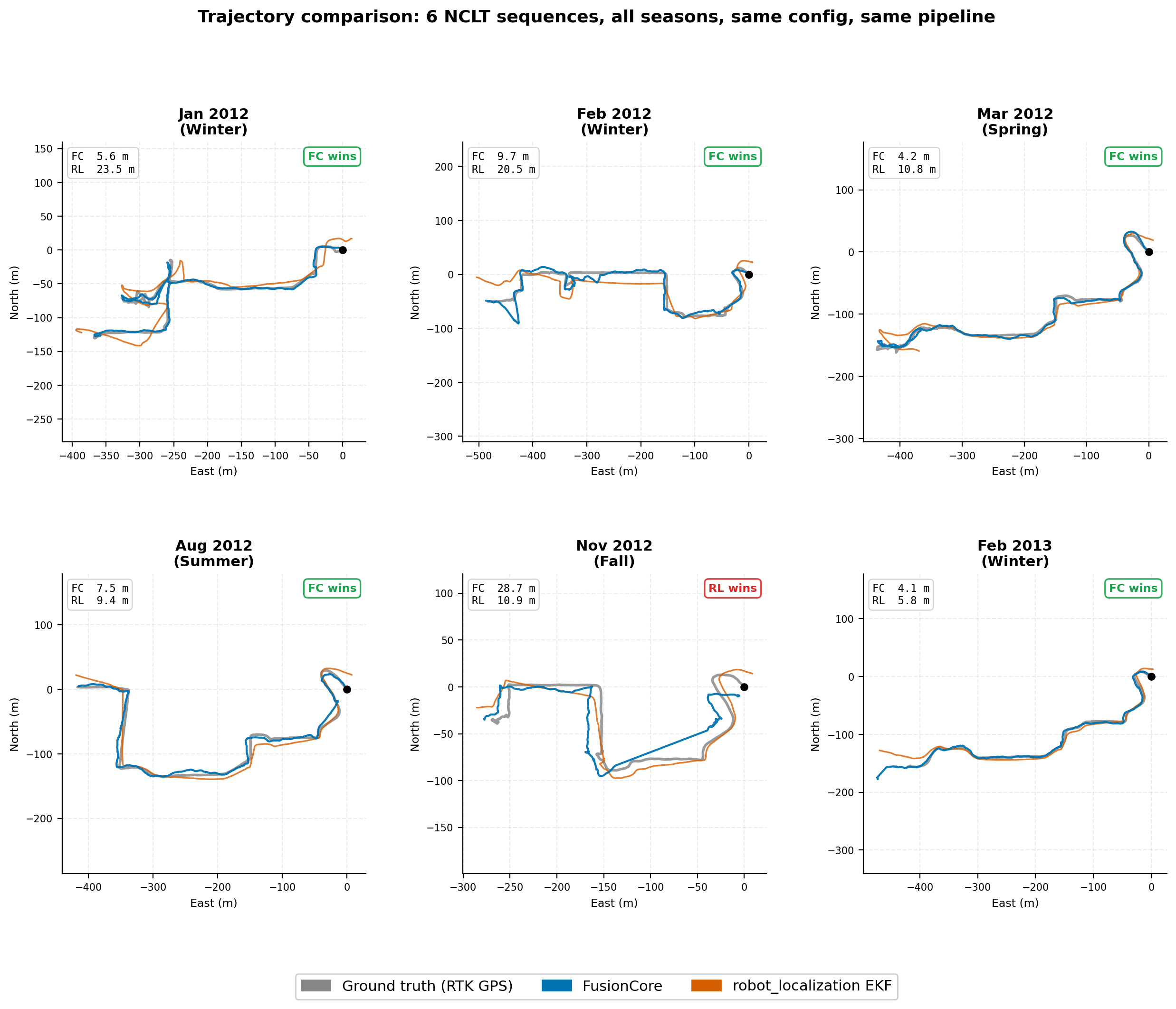

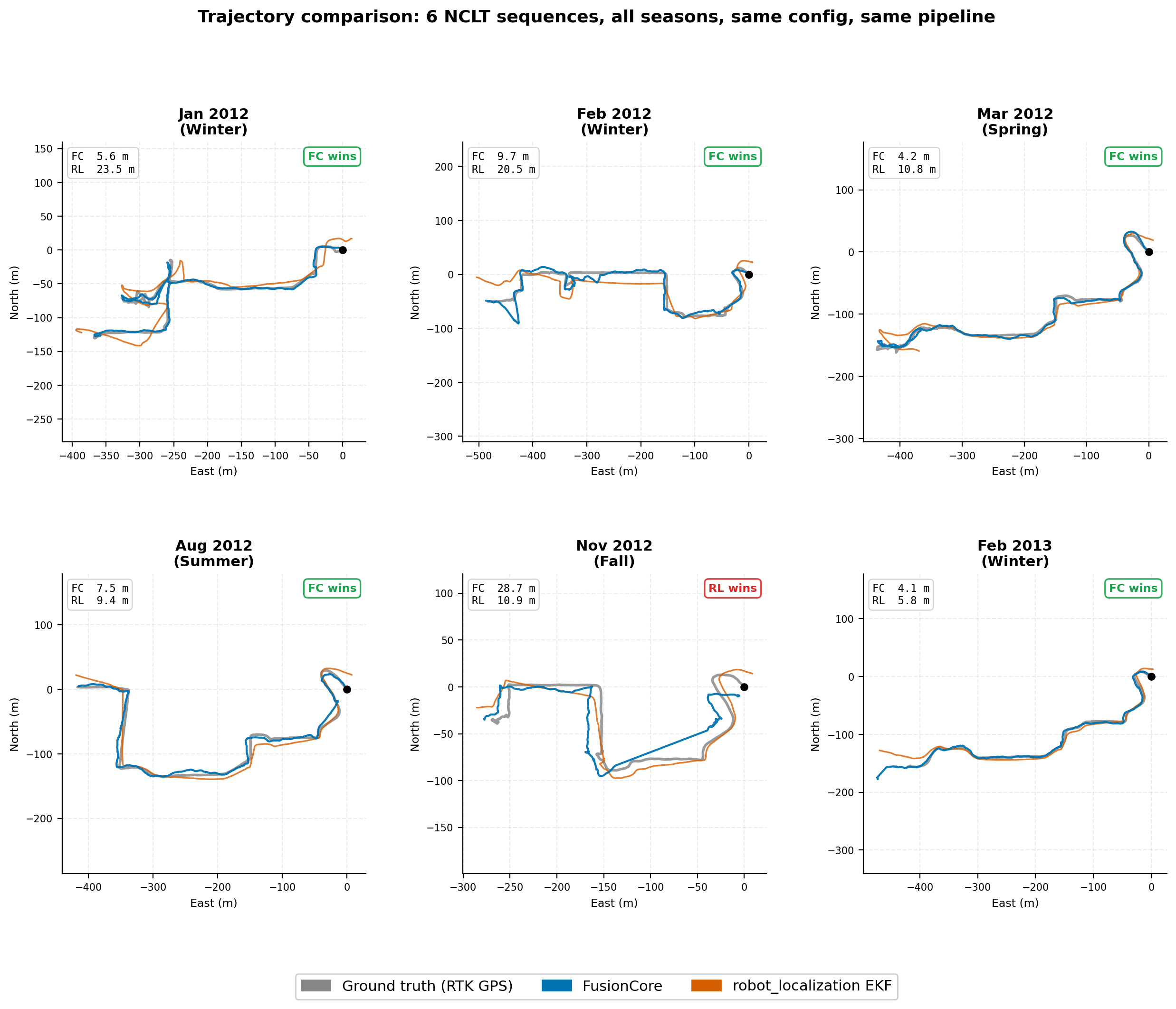

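FusionCore vs. robot_localization (RL) on the NCLT dataset: the same IMU + wheel odometry + GPS inputs, no manual tuning, and twelve full-length sequences spanning all seasons. RL-EKF was run with chi-squared-equivalent gating thresholds at 99.9% confidence. ATE RMSE is the root-mean-square absolute trajectory error. The Winner column gives the winner's ATE RMSE reduction relative to the other filter.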

| Sequence | Season | Duration | FC ATE RMSE | RL-EKF ATE RMSE | Winner |
|---|---|---|---|---|---|
| 2012-01-08 | Winter | 92 min | 18.6 m | 41.2 m | FC +55% |
| 2012-02-04 | Winter | 77 min | 49.7 m | 265.5 m | FC +81% |
| 2012-03-31 | Spring | 87 min | 22.0 m | 156.5 m | FC +86% |
| 2012-05-11 | Spring | 84 min | 9.7 m | 11.5 m | FC +16% |
| 2012-06-15 | Summer | 55 min | 49.2 m | 18.2 m | RL +63% |
| 2012-08-20 | Summer | 83 min | 98.3 m | 10.6 m | RL +89% |
| 2012-09-28 | Fall | 77 min | 10.8 m | 55.7 m | FC +81% |
| 2012-10-28 | Fall | 85 min | 29.9 m | 60.0 m | FC +50% |
| 2012-11-04 | Fall | 79 min | 60.1 m | 122.0 m | FC +51% |
| 2012-12-01 | Winter | 75 min | 21.0 m | 90.7 m | FC +77% |
| 2013-02-23 | Winter | 78 min | 59.4 m | 82.2 m | FC +28% |
| 2013-04-05 | Spring | 68 min | 12.1 m | 268.9 m | FC +96% |
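The Winner percentages follow from (loser − winner) / loser on the two ATE RMSE columns. The short script below recomputes them from the table and tallies the wins. It is only a consistency check on the published numbers, not part of the project's benchmark tooling.

```python
from typing import List, Tuple

# (sequence, FusionCore ATE RMSE [m], RL-EKF ATE RMSE [m]), copied from the table
RESULTS: List[Tuple[str, float, float]] = [
    ("2012-01-08", 18.6, 41.2),
    ("2012-02-04", 49.7, 265.5),
    ("2012-03-31", 22.0, 156.5),
    ("2012-05-11", 9.7, 11.5),
    ("2012-06-15", 49.2, 18.2),
    ("2012-08-20", 98.3, 10.6),
    ("2012-09-28", 10.8, 55.7),
    ("2012-10-28", 29.9, 60.0),
    ("2012-11-04", 60.1, 122.0),
    ("2012-12-01", 21.0, 90.7),
    ("2013-02-23", 59.4, 82.2),
    ("2013-04-05", 12.1, 268.9),
]


def winner_and_reduction(fc: float, rl: float) -> Tuple[str, int]:
    """Winner label and its ATE RMSE reduction vs. the loser, in whole percent."""
    winner, best, worst = ("FC", fc, rl) if fc < rl else ("RL", rl, fc)
    return winner, round(100.0 * (worst - best) / worst)


if __name__ == "__main__":
    wins = {"FC": 0, "RL": 0}
    for seq, fc, rl in RESULTS:
        winner, pct = winner_and_reduction(fc, rl)
        wins[winner] += 1
        print(f"{seq}: {winner} +{pct}%")
    print(wins)  # {'FC': 10, 'RL': 2}
```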

RL-UKF diverges with NaN on all twelve sequences, so only RL-EKF appears in the table. FusionCore wins 10 of 12 sequences. RL-EKF's losses trace to a single root cause. The GPS driver reports a 3 m sigma, but measured against RTK ground truth, the actual p95 error is 9.7–53.1 m depending on the day. RL's gate is calibrated to the stated 3 m, so on bad-GPS days it rejects valid fixes. FusionCore's adaptive noise estimation (adaptive.gnss: true) keeps the chi-squared statistics calibrated in real time.

FusionCore's two losses have separate causes. On 2012-08-20, a GPS data-quality issue produced 105 corrupt mode-3 fixes within a 24-second window at a blackout boundary. On 2012-06-15, heading error accumulated during a 462-second GPS blackout. See benchmarks/README.md for the full per-sequence analysis, including root causes and the path to a fix.
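To see why a gate calibrated to a stated 3 m sigma rejects valid fixes, consider a 2-D position innovation gated by its squared Mahalanobis distance at 99.9% confidence. This sketch makes three simplifications. It assumes isotropic noise. It ignores the filter's predicted covariance (the full innovation covariance is H P Hᵀ + R). And it uses a generic exponential-moving-average estimate of R from the innovations, shown for illustration only. That estimator is an assumption, not necessarily how adaptive.gnss works.

```python
import math
from typing import Sequence

# For 2 degrees of freedom the chi-squared CDF is 1 - exp(-x/2),
# so the p-quantile has the closed form -2 ln(1 - p).
CHI2_2DOF_999 = -2.0 * math.log(1.0 - 0.999)  # about 13.82


def passes_gate(innov_xy: Sequence[float], sigma_m: float,
                threshold: float = CHI2_2DOF_999) -> bool:
    """Squared Mahalanobis gate for a 2-D innovation with variance sigma_m**2 per axis."""
    d2 = (innov_xy[0] ** 2 + innov_xy[1] ** 2) / sigma_m ** 2
    return d2 <= threshold


class AdaptiveSigma:
    """Illustrative innovation-based noise estimate: an exponential moving
    average of the per-axis squared innovation (not FusionCore's algorithm)."""

    def __init__(self, sigma0_m: float, alpha: float = 0.05):
        self.var = sigma0_m ** 2
        self.alpha = alpha

    def update(self, innov_xy: Sequence[float]) -> float:
        # Update from every innovation, including gated-out ones; otherwise
        # the estimate could never grow once the gate starts rejecting fixes.
        per_axis_sq = 0.5 * (innov_xy[0] ** 2 + innov_xy[1] ** 2)
        self.var = (1.0 - self.alpha) * self.var + self.alpha * per_axis_sq
        return math.sqrt(self.var)
```

A fix 12 m off fails the fixed 3 m gate, since d² = 144 / 9 = 16 > 13.82. Once the estimated sigma has grown to match the observed innovations, the same kind of fix passes.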


CONTRIBUTING

Contributing to FusionCore

Thanks for your interest. Contributions are welcome: hardware configs, bug fixes, tests, and documentation all help.

The fastest way to contribute

The most impactful contributions right now are hardware configs. If you have FusionCore running on a robot, platform, or IMU that isn’t in the repo yet, open a PR adding a YAML under fusioncore_ros/config/. See the hardware config section below.

Before you start

- Check open issues: the bug may already be reported

- Check Discussions: the question may already be answered

- For anything bigger than a typo fix, open an issue or Discussion first so we can align before you write code

Development setup

```bash
# Clone and build
git clone https://github.com/manankharwar/fusioncore.git
cd fusioncore
source /opt/ros/jazzy/setup.sh  # replace jazzy with humble on Ubuntu 22.04
rosdep install -r --from-paths . --ignore-src --rosdistro jazzy -y  # replace jazzy with humble on Ubuntu 22.04
colcon build --packages-up-to compass_msgs fusioncore_core fusioncore_ros --cmake-args -DBUILD_TESTING=ON

# Run all tests before and after your change
colcon test --packages-select compass_msgs fusioncore_core fusioncore_ros
colcon test-result --verbose
```

All tests must pass; CI will flag any failures.

Hardware configs

A hardware config is a YAML file under fusioncore_ros/config/ named after the platform (e.g. clearpath_husky.yaml, ublox_f9p.yaml).

Copy fusioncore_ros/config/fusioncore.yaml as the starting point and adjust:

- imu.gyro_noise / imu.accel_noise: pull from your IMU's datasheet
- gnss.base_noise_xy: your GPS receiver's CEP spec
- Any topic remaps specific to your platform

Add a comment at the top with: platform name, IMU model, GPS receiver model, and whether it was field-tested or tuned from datasheet only. Field-tested configs get merged faster.
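Datasheets usually quote angle random walk (ARW) in °/√h and velocity random walk (VRW) in m/s/√h, or accelerometer noise density in µg/√Hz. The helpers below convert these to SI continuous-time noise densities (rad/s/√Hz and m/s²/√Hz). That FusionCore expects these units is an assumption; check the comments in fusioncore.yaml before using the values. The helper names are hypothetical.

```python
import math

G0 = 9.80665  # standard gravity, m/s^2


def arw_deg_per_sqrt_hr_to_si(arw: float) -> float:
    """Angle random walk: deg/sqrt(h) -> rad/s/sqrt(Hz) (equivalently rad/sqrt(s))."""
    # 1 deg/sqrt(h) = (pi/180) rad / sqrt(3600 s) = (pi/180)/60 rad/sqrt(s)
    return math.radians(arw) / 60.0


def vrw_m_per_s_sqrt_hr_to_si(vrw: float) -> float:
    """Velocity random walk: m/s/sqrt(h) -> m/s^2/sqrt(Hz) (equivalently m/s/sqrt(s))."""
    return vrw / 60.0


def accel_density_ug_to_si(density_ug: float) -> float:
    """Accelerometer noise density: micro-g/sqrt(Hz) -> m/s^2/sqrt(Hz)."""
    return density_ug * 1e-6 * G0
```

For example, a gyro with ARW 0.2 °/√h converts to about 5.82e-5 rad/s/√Hz. An accelerometer rated at 100 µg/√Hz converts to about 9.81e-4 m/s²/√Hz.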

Pull request checklist

- All tests pass (colcon test-result --verbose shows 0 failures)
- For new features: tests added in fusioncore_core/tests/
- For hardware configs: YAML includes a comment with platform + sensor details
- Commit message describes why, not just what

Code style

C++17. Follow the style of the surrounding code: no reformatting unrelated lines. clang-format is not enforced but is appreciated.

Reporting bugs

Use the Bug Report issue template. Include the output of colcon test-result --verbose if tests are involved.

Questions

Open a Discussion rather than an issue. Issues are for bugs and tracked work; Discussions are for questions, configs, and ideas.

Response time: typically within 24 hours.

|

fusioncore repositorycompass_msgs fusioncore_core fusioncore_datasets fusioncore_gazebo fusioncore_ros |

ROS Distro

|

Repository Summary

| Checkout URI | https://github.com/manankharwar/fusioncore.git |

| VCS Type | git |

| VCS Version | main |

| Last Updated | 2026-05-26 |

| Dev Status | MAINTAINED |

| Released | RELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| compass_msgs | 0.2.4 |

| fusioncore_core | 0.2.4 |

| fusioncore_datasets | 0.2.4 |

| fusioncore_gazebo | 0.2.4 |

| fusioncore_ros | 0.2.5 |

README

FusionCore

ROS 2 UKF sensor fusion for robots that run in the real world. IMU + wheel encoders + GPS at 100 Hz. Handles bad calibration, timestamp jitter, delayed GPS, wheel slip, and ARM hardware out of the box. Apache 2.0.

Install

Option A: From source (ROS 2 Jazzy on Ubuntu 24.04 or Humble on Ubuntu 22.04):

mkdir -p ~/ros2_ws/src && cd ~/ros2_ws/src

git clone https://github.com/manankharwar/fusioncore.git

cd ~/ros2_ws

source /opt/ros/jazzy/setup.bash # or /opt/ros/humble/setup.bash

rosdep install --from-paths src --ignore-src -r -y

colcon build --packages-up-to fusioncore_ros

source install/setup.bash

Verify it works (single command, replaces the 4-terminal manual test):

bash tools/quick_test.sh

Starts FusionCore with fake sensors and checks all outputs in about 15 seconds. Prints [PASS] / [FAIL] for each check.

Option B: Docker (no ROS install required)

docker pull ghcr.io/manankharwar/fusioncore:latest

docker run --rm -it ghcr.io/manankharwar/fusioncore:latest bash

Inside the container, verify everything works:

bash tools/quick_test.sh

Works on the hardware you actually have

Most sensor fusion tutorials assume clean data. Real robots don’t have clean data. FusionCore was built around the problems you actually run into.

| The problem | How FusionCore handles it |

|---|---|

| IMU calibration is approximate | Gyro and accel bias are filter states, estimated continuously. init.stationary_window: 2.0 estimates startup bias before motion begins, dropping startup drift from ~10 cm to under 1 cm. |

| Extrinsic calibration is never exact | Reads frame_id from every IMU message and looks up the TF rotation to base_link automatically. Set imu.frame_id to override broken frame names from drivers (e.g. Gazebo TurtleBot3). No manual rotation matrices. |

| Timestamp jitter and zero-stamped drivers |

dt is clamped to prevent divergence from missed timer ticks. Wall clock fallback for drivers that publish stamp={sec=0}. |

| GPS arrives late (50–200 ms) | IMU ring buffer replays 1 second of buffered updates when a delayed fix arrives. The state at the GPS timestamp is reconstructed exactly, not approximated. |

| Wheel odometry is noisy or slipping | Adaptive noise covariance updates from the innovation sequence. GPS velocity fusion (optional) compares GPS-reported speed against wheel speed every cycle: the innovation reveals slip and the Kalman gain down-weights the slipping wheel automatically. |

| Noise parameters require days of tuning | Two numbers from your IMU datasheet: imu.gyro_noise (ARW) and imu.accel_noise (VRW). Everything else adapts within the first minute of operation. |

| Robot runs on Raspberry Pi or Jetson | Under 0.2 ms per cycle on i7. Under 1 ms on Raspberry Pi 4. Same binary on ARM (NEON auto-detected) and x86 (AVX auto-detected) via Eigen. No recompilation, no parameter changes. |

| Two IMUs on the platform | Set imu2.topic to fuse a second IMU as an independent measurement. No pre-merging with imu_filter_madgwick needed. |

| GPS drops out in tunnels or canopy | Inertial coast mode maintains position integrity during sustained GPS dropout. Outlier gate relaxes automatically to reacquire when GPS returns. |

| Robot sits still for minutes | ZUPT (zero velocity update) fuses a zero-velocity pseudo-measurement when encoder speed and angular rate are both below threshold. Prevents IMU noise from integrating into position drift during idle periods. |

Benchmark

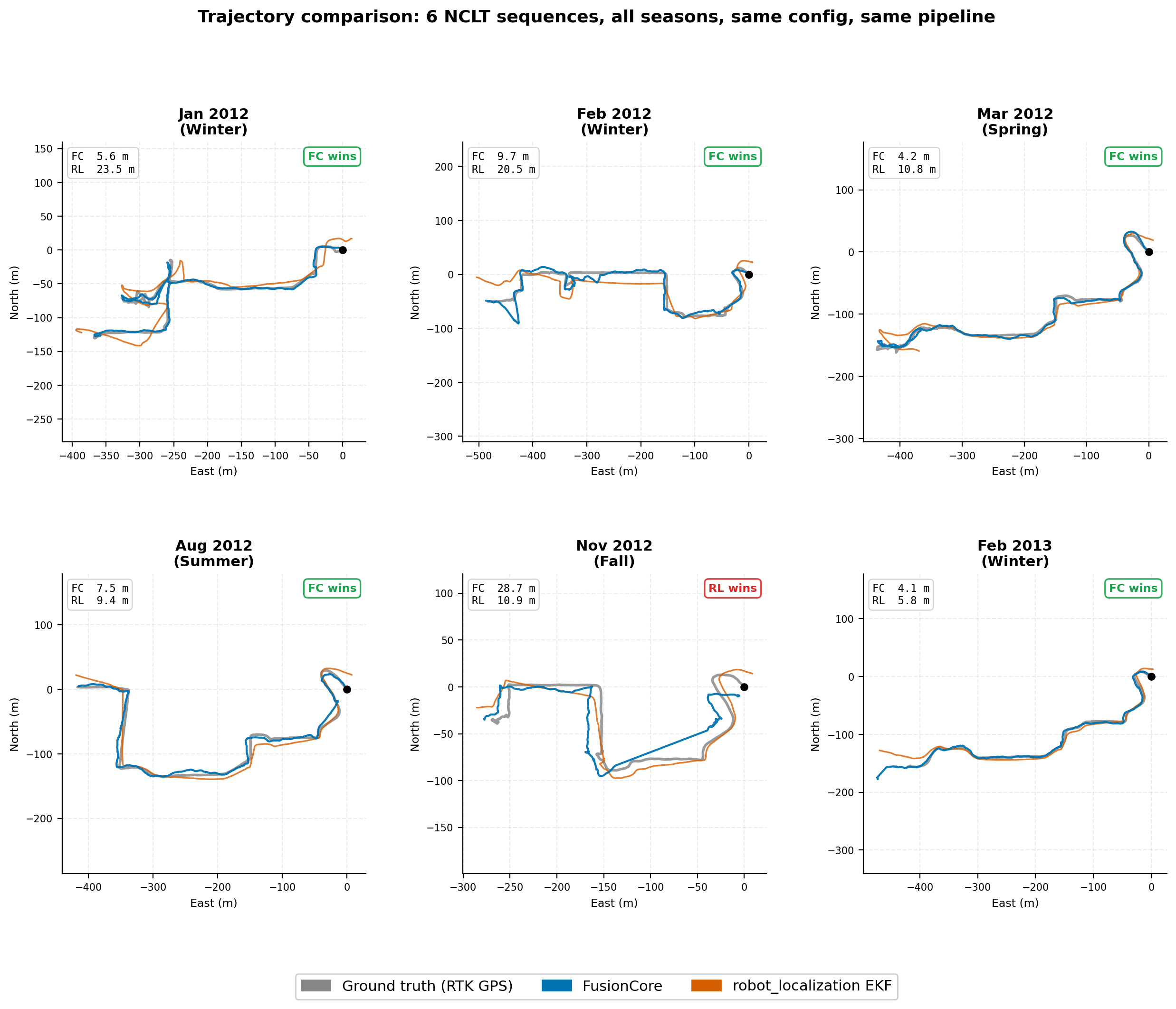

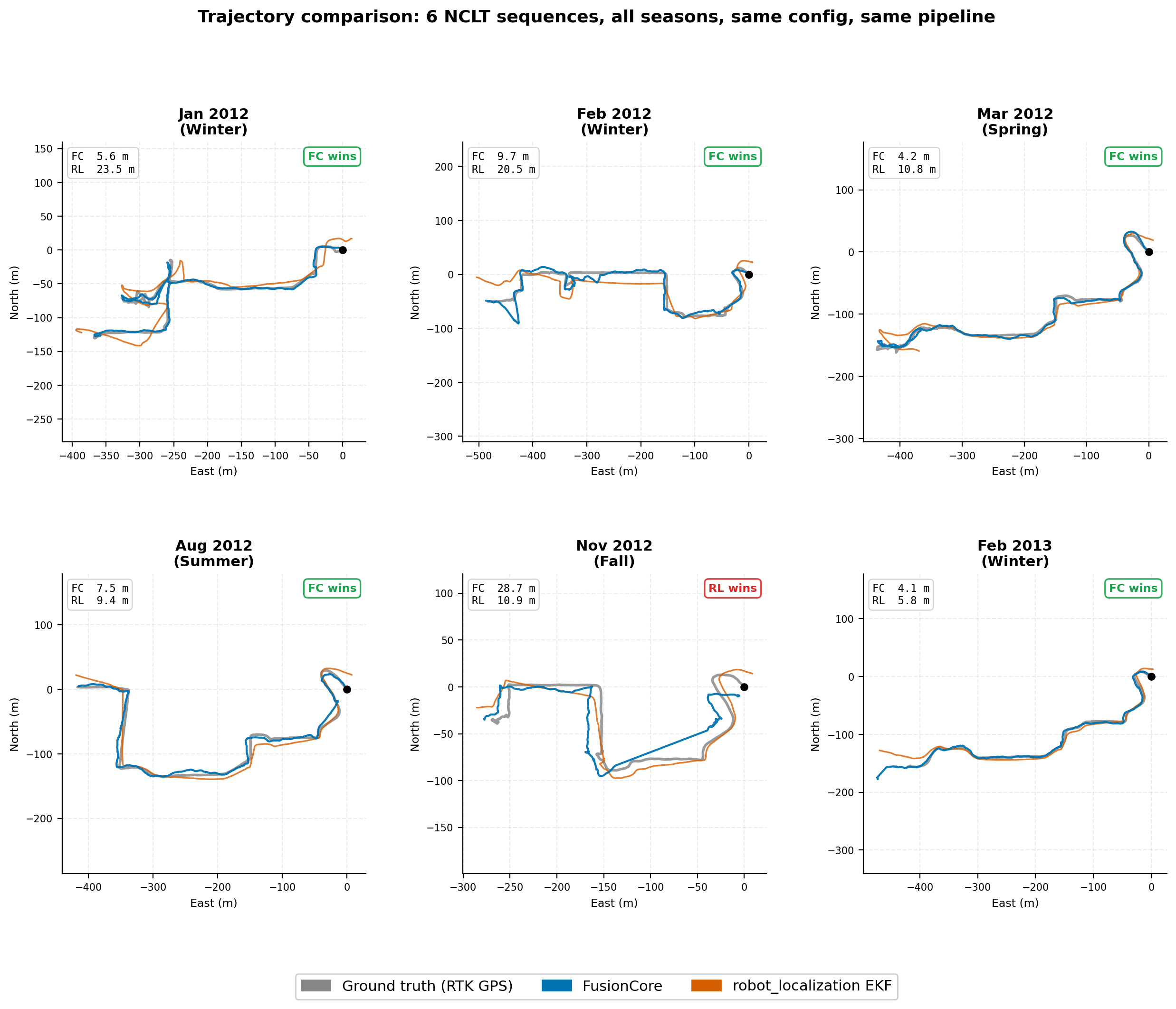

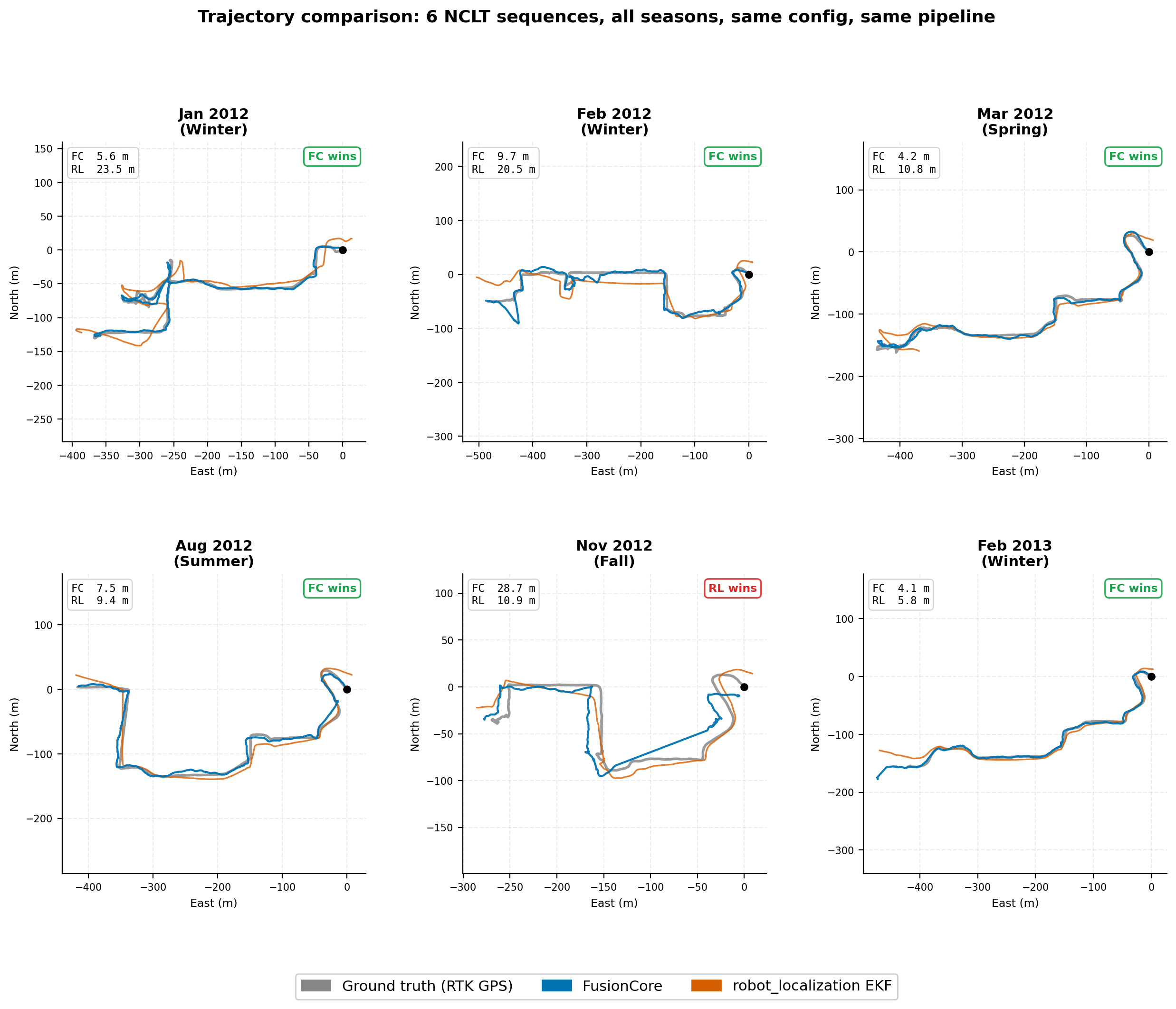

FusionCore vs robot_localization on the NCLT dataset: same IMU + wheel odometry + GPS, no manual tuning. Twelve full-length sequences across all seasons. RL-EKF run with chi-squared-equivalent thresholds at 99.9% confidence.

| Sequence | Season | Duration | FC ATE RMSE | RL-EKF ATE RMSE | Winner |

|---|---|---|---|---|---|

| 2012-01-08 | Winter | 92 min | 18.6 m | 41.2 m | FC +55% |

| 2012-02-04 | Winter | 77 min | 49.7 m | 265.5 m | FC +81% |

| 2012-03-31 | Spring | 87 min | 22.0 m | 156.5 m | FC +86% |

| 2012-05-11 | Spring | 84 min | 9.7 m | 11.5 m | FC +16% |

| 2012-06-15 | Summer | 55 min | 49.2 m | 18.2 m | RL +63% |

| 2012-08-20 | Summer | 83 min | 98.3 m | 10.6 m | RL +89% |

| 2012-09-28 | Fall | 77 min | 10.8 m | 55.7 m | FC +81% |

| 2012-10-28 | Fall | 85 min | 29.9 m | 60.0 m | FC +50% |

| 2012-11-04 | Fall | 79 min | 60.1 m | 122.0 m | FC +51% |

| 2012-12-01 | Winter | 75 min | 21.0 m | 90.7 m | FC +77% |

| 2013-02-23 | Winter | 78 min | 59.4 m | 82.2 m | FC +28% |

| 2013-04-05 | Spring | 68 min | 12.1 m | 268.9 m | FC +96% |

RL-UKF diverges with NaN on all twelve sequences. FusionCore wins 10/12 sequences. RL-EKF’s losses trace to a single root cause: the GPS driver reports 3m sigma, but measured against RTK ground truth, actual p95 noise is 9.7-53.1m depending on the day. RL’s gate is calibrated to the stated 3m and rejects valid fixes on bad-GPS days. FusionCore’s adaptive noise estimation (adaptive.gnss: true) keeps chi2 statistics calibrated in real time.

The two FC losses are driven by a GPS data quality issue on 2012-08-20 (105 corrupt mode-3 fixes in a 24-second window at a blackout boundary) and accumulated heading error during a 462-second GPS blackout on 2012-06-15. See benchmarks/README.md for full per-sequence analysis including root causes and path-to-fix.

File truncated at 100 lines see the full file

CONTRIBUTING

Contributing to FusionCore

Thanks for your interest. Contributions are welcome: hardware configs, bug fixes, tests, and documentation all help.

The fastest way to contribute

The most impactful contributions right now are hardware configs. If you have FusionCore running on a robot, platform, or IMU that isn’t in the repo yet, open a PR adding a YAML under fusioncore_ros/config/. See the hardware config section below.

Before you start

- Check open issues: the bug may already be reported

- Check Discussions: the question may already be answered

- For anything bigger than a typo fix, open an issue or Discussion first so we can align before you write code

Development setup

# Clone and build

git clone https://github.com/manankharwar/fusioncore.git

cd fusioncore

source /opt/ros/jazzy/setup.sh # replace jazzy with humble on Ubuntu 22.04

rosdep install -r --from-paths . --ignore-src --rosdistro jazzy -y # replace jazzy with humble on Ubuntu 22.04

colcon build --packages-up-to compass_msgs fusioncore_core fusioncore_ros --cmake-args -DBUILD_TESTING=ON

# Run all tests before and after your change

colcon test --packages-select compass_msgs fusioncore_core fusioncore_ros

colcon test-result --verbose

All 45 tests must pass. CI will catch it if they don’t.

Hardware configs

A hardware config is a YAML file under fusioncore_ros/config/ named after the platform (e.g. clearpath_husky.yaml, ublox_f9p.yaml).

Copy fusioncore_ros/config/fusioncore.yaml as the starting point and adjust:

-

imu.gyro_noise/imu.accel_noise: pull from your IMU’s datasheet -

gnss.base_noise_xy: your GPS receiver’s CEP spec - Any topic remaps specific to your platform

Add a comment at the top with: platform name, IMU model, GPS receiver model, and whether it was field-tested or tuned from datasheet only. Field-tested configs get merged faster.

Pull request checklist

-

All 49 tests pass (

colcon test-result --verboseshows 0 failures) -

For new features: tests added in

fusioncore_core/tests/ - For hardware configs: YAML includes a comment with platform + sensor details

- Commit message describes why, not just what

Code style

C++17. Follow the style of the surrounding code: no reformatting unrelated lines. clang-format is not enforced but is appreciated.

Reporting bugs

Use the Bug Report issue template. Include the output of colcon test-result --verbose if tests are involved.

Questions

Open a Discussion rather than an issue. Issues are for bugs and tracked work; Discussions are for questions, configs, and ideas.

Response time: typically within 24 hours.

|

fusioncore repositorycompass_msgs fusioncore_core fusioncore_datasets fusioncore_gazebo fusioncore_ros |

ROS Distro

|

Repository Summary

| Checkout URI | https://github.com/manankharwar/fusioncore.git |

| VCS Type | git |

| VCS Version | main |

| Last Updated | 2026-05-26 |

| Dev Status | MAINTAINED |

| Released | RELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| compass_msgs | 0.2.4 |

| fusioncore_core | 0.2.4 |

| fusioncore_datasets | 0.2.4 |

| fusioncore_gazebo | 0.2.4 |

| fusioncore_ros | 0.2.5 |

README

FusionCore

ROS 2 UKF sensor fusion for robots that run in the real world. IMU + wheel encoders + GPS at 100 Hz. Handles bad calibration, timestamp jitter, delayed GPS, wheel slip, and ARM hardware out of the box. Apache 2.0.

Install

Option A: From source (ROS 2 Jazzy on Ubuntu 24.04 or Humble on Ubuntu 22.04):

mkdir -p ~/ros2_ws/src && cd ~/ros2_ws/src

git clone https://github.com/manankharwar/fusioncore.git

cd ~/ros2_ws

source /opt/ros/jazzy/setup.bash # or /opt/ros/humble/setup.bash

rosdep install --from-paths src --ignore-src -r -y

colcon build --packages-up-to fusioncore_ros

source install/setup.bash

Verify it works (single command, replaces the 4-terminal manual test):

bash tools/quick_test.sh

Starts FusionCore with fake sensors and checks all outputs in about 15 seconds. Prints [PASS] / [FAIL] for each check.

Option B: Docker (no ROS install required)

docker pull ghcr.io/manankharwar/fusioncore:latest

docker run --rm -it ghcr.io/manankharwar/fusioncore:latest bash

Inside the container, verify everything works:

bash tools/quick_test.sh

Works on the hardware you actually have

Most sensor fusion tutorials assume clean data. Real robots don’t have clean data. FusionCore was built around the problems you actually run into.

| The problem | How FusionCore handles it |

|---|---|

| IMU calibration is approximate | Gyro and accel bias are filter states, estimated continuously. init.stationary_window: 2.0 estimates startup bias before motion begins, dropping startup drift from ~10 cm to under 1 cm. |

| Extrinsic calibration is never exact | Reads frame_id from every IMU message and looks up the TF rotation to base_link automatically. Set imu.frame_id to override broken frame names from drivers (e.g. Gazebo TurtleBot3). No manual rotation matrices. |

| Timestamp jitter and zero-stamped drivers |

dt is clamped to prevent divergence from missed timer ticks. Wall clock fallback for drivers that publish stamp={sec=0}. |

| GPS arrives late (50–200 ms) | IMU ring buffer replays 1 second of buffered updates when a delayed fix arrives. The state at the GPS timestamp is reconstructed exactly, not approximated. |

| Wheel odometry is noisy or slipping | Adaptive noise covariance updates from the innovation sequence. GPS velocity fusion (optional) compares GPS-reported speed against wheel speed every cycle: the innovation reveals slip and the Kalman gain down-weights the slipping wheel automatically. |

| Noise parameters require days of tuning | Two numbers from your IMU datasheet: imu.gyro_noise (ARW) and imu.accel_noise (VRW). Everything else adapts within the first minute of operation. |

| Robot runs on Raspberry Pi or Jetson | Under 0.2 ms per cycle on i7. Under 1 ms on Raspberry Pi 4. Same binary on ARM (NEON auto-detected) and x86 (AVX auto-detected) via Eigen. No recompilation, no parameter changes. |

| Two IMUs on the platform | Set imu2.topic to fuse a second IMU as an independent measurement. No pre-merging with imu_filter_madgwick needed. |

| GPS drops out in tunnels or canopy | Inertial coast mode maintains position integrity during sustained GPS dropout. Outlier gate relaxes automatically to reacquire when GPS returns. |

| Robot sits still for minutes | ZUPT (zero velocity update) fuses a zero-velocity pseudo-measurement when encoder speed and angular rate are both below threshold. Prevents IMU noise from integrating into position drift during idle periods. |

Benchmark

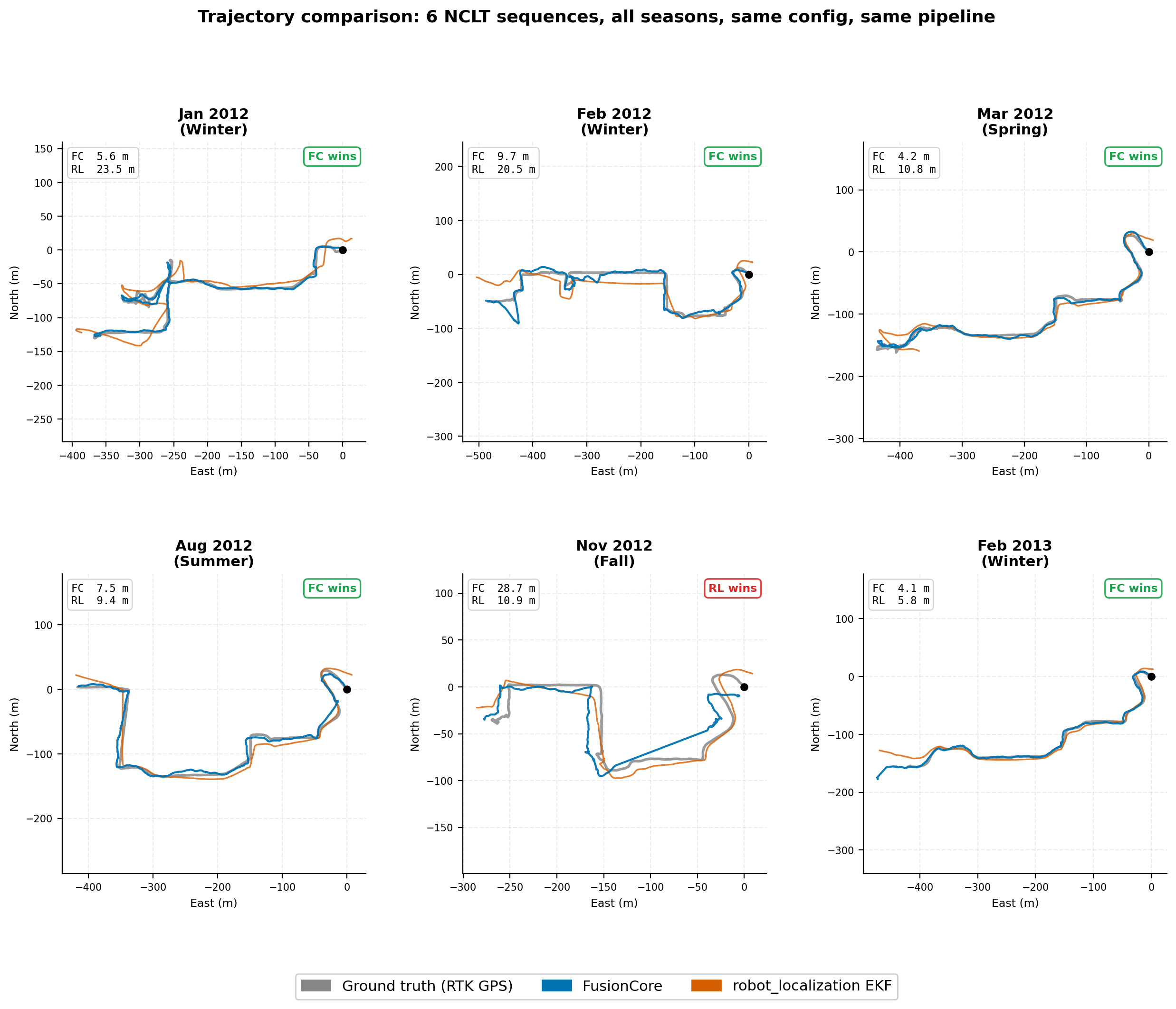

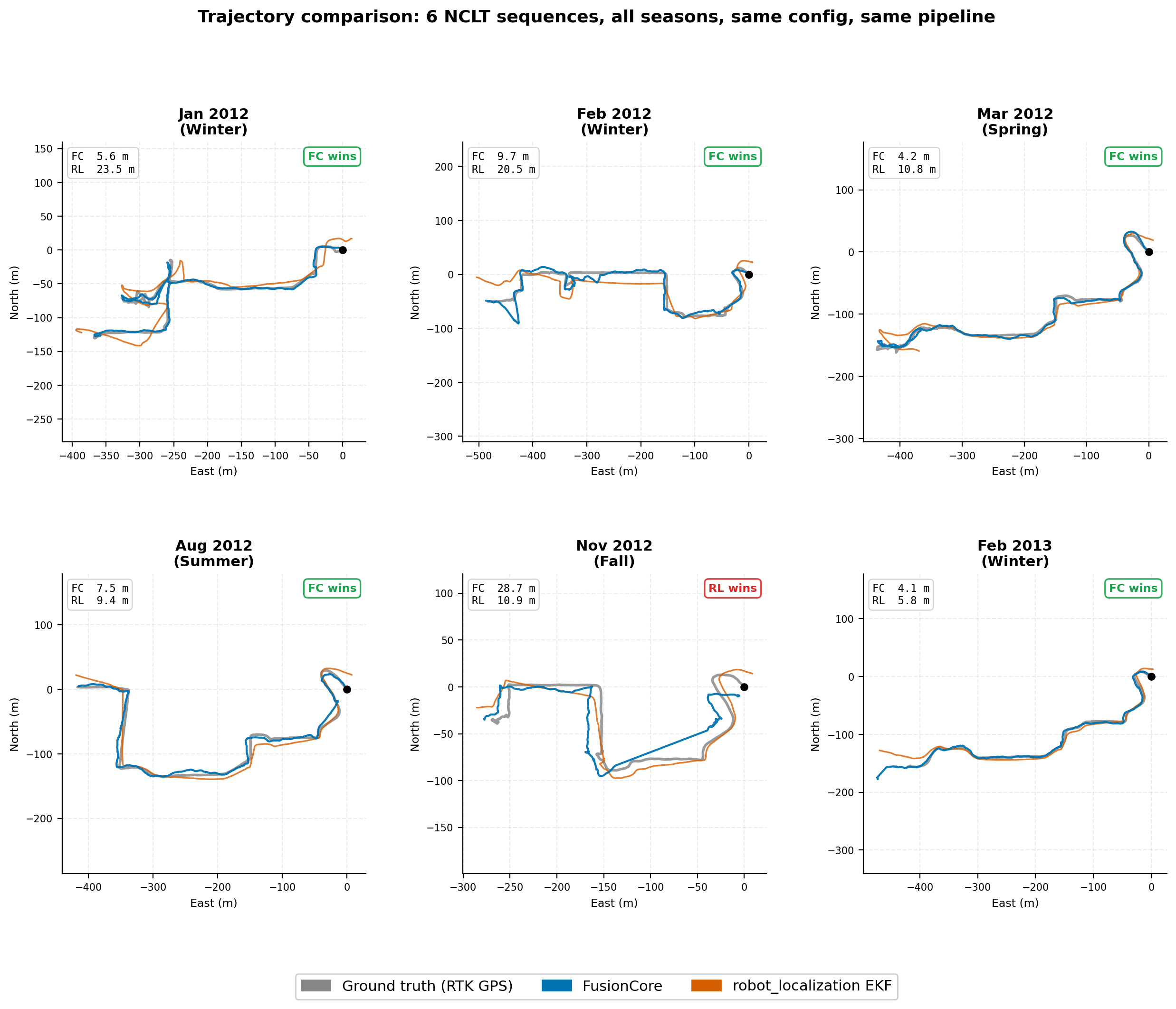

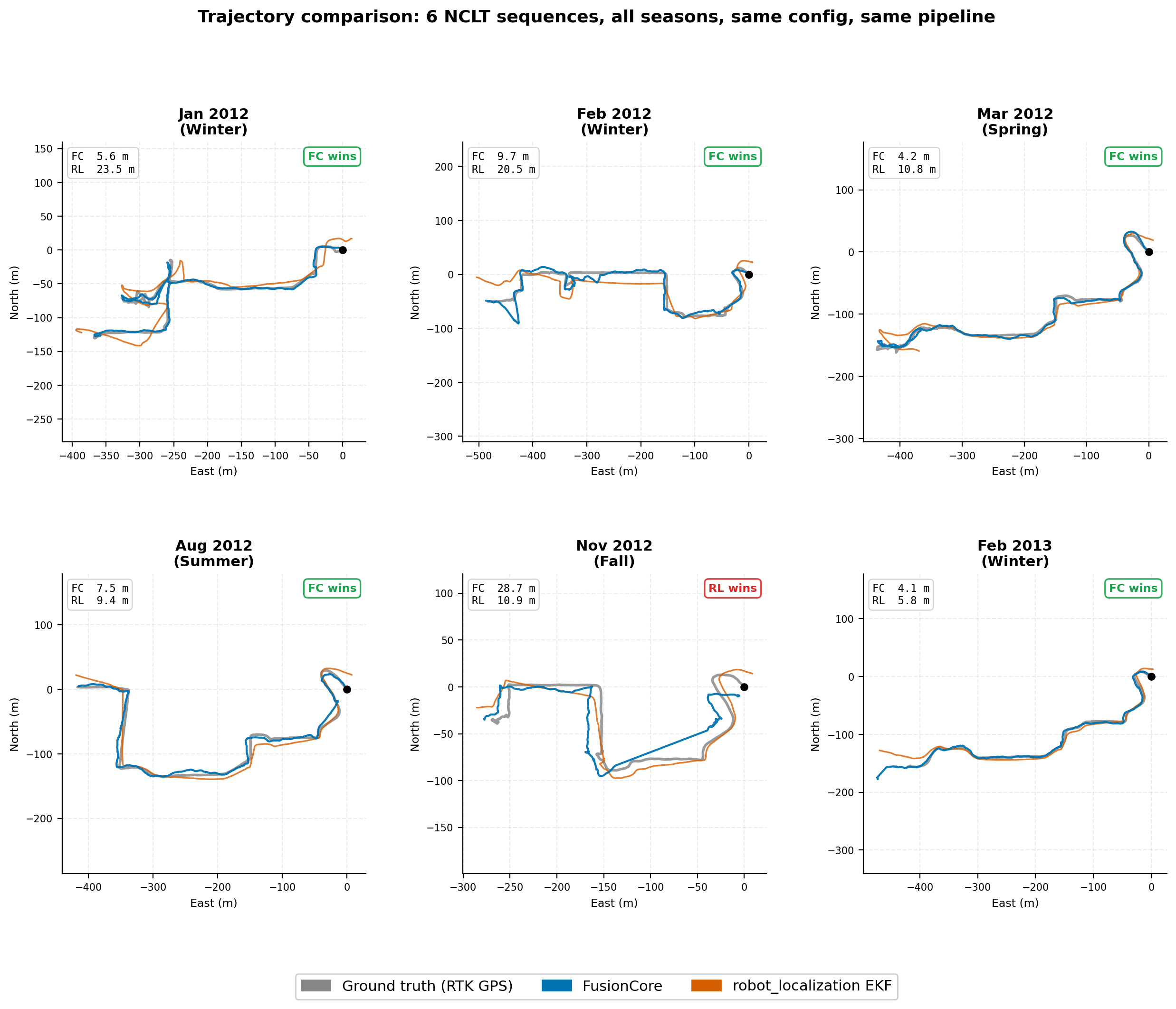

FusionCore vs robot_localization on the NCLT dataset: same IMU + wheel odometry + GPS, no manual tuning. Twelve full-length sequences across all seasons. RL-EKF run with chi-squared-equivalent thresholds at 99.9% confidence.

| Sequence | Season | Duration | FC ATE RMSE | RL-EKF ATE RMSE | Winner |

|---|---|---|---|---|---|

| 2012-01-08 | Winter | 92 min | 18.6 m | 41.2 m | FC +55% |

| 2012-02-04 | Winter | 77 min | 49.7 m | 265.5 m | FC +81% |

| 2012-03-31 | Spring | 87 min | 22.0 m | 156.5 m | FC +86% |

| 2012-05-11 | Spring | 84 min | 9.7 m | 11.5 m | FC +16% |

| 2012-06-15 | Summer | 55 min | 49.2 m | 18.2 m | RL +63% |

| 2012-08-20 | Summer | 83 min | 98.3 m | 10.6 m | RL +89% |

| 2012-09-28 | Fall | 77 min | 10.8 m | 55.7 m | FC +81% |

| 2012-10-28 | Fall | 85 min | 29.9 m | 60.0 m | FC +50% |

| 2012-11-04 | Fall | 79 min | 60.1 m | 122.0 m | FC +51% |

| 2012-12-01 | Winter | 75 min | 21.0 m | 90.7 m | FC +77% |

| 2013-02-23 | Winter | 78 min | 59.4 m | 82.2 m | FC +28% |

| 2013-04-05 | Spring | 68 min | 12.1 m | 268.9 m | FC +96% |

RL-UKF diverges with NaN on all twelve sequences. FusionCore wins 10/12 sequences. RL-EKF’s losses trace to a single root cause: the GPS driver reports 3m sigma, but measured against RTK ground truth, actual p95 noise is 9.7-53.1m depending on the day. RL’s gate is calibrated to the stated 3m and rejects valid fixes on bad-GPS days. FusionCore’s adaptive noise estimation (adaptive.gnss: true) keeps chi2 statistics calibrated in real time.

The two FC losses are driven by a GPS data quality issue on 2012-08-20 (105 corrupt mode-3 fixes in a 24-second window at a blackout boundary) and accumulated heading error during a 462-second GPS blackout on 2012-06-15. See benchmarks/README.md for full per-sequence analysis including root causes and path-to-fix.

File truncated at 100 lines see the full file

CONTRIBUTING

Contributing to FusionCore

Thanks for your interest. Contributions are welcome: hardware configs, bug fixes, tests, and documentation all help.

The fastest way to contribute

The most impactful contributions right now are hardware configs. If you have FusionCore running on a robot, platform, or IMU that isn’t in the repo yet, open a PR adding a YAML under fusioncore_ros/config/. See the hardware config section below.

Before you start

- Check open issues: the bug may already be reported

- Check Discussions: the question may already be answered

- For anything bigger than a typo fix, open an issue or Discussion first so we can align before you write code

Development setup

# Clone and build

git clone https://github.com/manankharwar/fusioncore.git

cd fusioncore

source /opt/ros/jazzy/setup.sh # replace jazzy with humble on Ubuntu 22.04

rosdep install -r --from-paths . --ignore-src --rosdistro jazzy -y # replace jazzy with humble on Ubuntu 22.04

colcon build --packages-up-to compass_msgs fusioncore_core fusioncore_ros --cmake-args -DBUILD_TESTING=ON

# Run all tests before and after your change

colcon test --packages-select compass_msgs fusioncore_core fusioncore_ros

colcon test-result --verbose

All 45 tests must pass. CI will catch it if they don’t.

Hardware configs

A hardware config is a YAML file under fusioncore_ros/config/ named after the platform (e.g. clearpath_husky.yaml, ublox_f9p.yaml).

Copy fusioncore_ros/config/fusioncore.yaml as the starting point and adjust:

-

imu.gyro_noise/imu.accel_noise: pull from your IMU’s datasheet -

gnss.base_noise_xy: your GPS receiver’s CEP spec - Any topic remaps specific to your platform

Add a comment at the top with: platform name, IMU model, GPS receiver model, and whether it was field-tested or tuned from datasheet only. Field-tested configs get merged faster.

Pull request checklist

-

All 49 tests pass (

colcon test-result --verboseshows 0 failures) -

For new features: tests added in

fusioncore_core/tests/ - For hardware configs: YAML includes a comment with platform + sensor details

- Commit message describes why, not just what

Code style

C++17. Follow the style of the surrounding code: no reformatting unrelated lines. clang-format is not enforced but is appreciated.

Reporting bugs

Use the Bug Report issue template. Include the output of colcon test-result --verbose if tests are involved.

Questions

Open a Discussion rather than an issue. Issues are for bugs and tracked work; Discussions are for questions, configs, and ideas.

Response time: typically within 24 hours.

|

fusioncore repositorycompass_msgs fusioncore_core fusioncore_datasets fusioncore_gazebo fusioncore_ros |

ROS Distro

|

Repository Summary

| Checkout URI | https://github.com/manankharwar/fusioncore.git |

| VCS Type | git |

| VCS Version | main |

| Last Updated | 2026-05-26 |

| Dev Status | MAINTAINED |

| Released | RELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| compass_msgs | 0.2.4 |

| fusioncore_core | 0.2.4 |

| fusioncore_datasets | 0.2.4 |

| fusioncore_gazebo | 0.2.4 |

| fusioncore_ros | 0.2.5 |

README

FusionCore

ROS 2 UKF sensor fusion for robots that run in the real world. IMU + wheel encoders + GPS at 100 Hz. Handles bad calibration, timestamp jitter, delayed GPS, wheel slip, and ARM hardware out of the box. Apache 2.0.

Install

Option A: From source (ROS 2 Jazzy on Ubuntu 24.04 or Humble on Ubuntu 22.04):

mkdir -p ~/ros2_ws/src && cd ~/ros2_ws/src

git clone https://github.com/manankharwar/fusioncore.git

cd ~/ros2_ws

source /opt/ros/jazzy/setup.bash # or /opt/ros/humble/setup.bash

rosdep install --from-paths src --ignore-src -r -y

colcon build --packages-up-to fusioncore_ros

source install/setup.bash

Verify it works (single command, replaces the 4-terminal manual test):

bash tools/quick_test.sh

Starts FusionCore with fake sensors and checks all outputs in about 15 seconds. Prints [PASS] / [FAIL] for each check.

Option B: Docker (no ROS install required)

docker pull ghcr.io/manankharwar/fusioncore:latest

docker run --rm -it ghcr.io/manankharwar/fusioncore:latest bash

Inside the container, verify everything works:

bash tools/quick_test.sh

Works on the hardware you actually have

Most sensor fusion tutorials assume clean data. Real robots don’t have clean data. FusionCore was built around the problems you actually run into.

| The problem | How FusionCore handles it |

|---|---|

| IMU calibration is approximate | Gyro and accel bias are filter states, estimated continuously. init.stationary_window: 2.0 estimates startup bias before motion begins, dropping startup drift from ~10 cm to under 1 cm. |

| Extrinsic calibration is never exact | Reads frame_id from every IMU message and looks up the TF rotation to base_link automatically. Set imu.frame_id to override broken frame names from drivers (e.g. Gazebo TurtleBot3). No manual rotation matrices. |

| Timestamp jitter and zero-stamped drivers |

dt is clamped to prevent divergence from missed timer ticks. Wall clock fallback for drivers that publish stamp={sec=0}. |

| GPS arrives late (50–200 ms) | IMU ring buffer replays 1 second of buffered updates when a delayed fix arrives. The state at the GPS timestamp is reconstructed exactly, not approximated. |

| Wheel odometry is noisy or slipping | Adaptive noise covariance updates from the innovation sequence. GPS velocity fusion (optional) compares GPS-reported speed against wheel speed every cycle: the innovation reveals slip and the Kalman gain down-weights the slipping wheel automatically. |

| Noise parameters require days of tuning | Two numbers from your IMU datasheet: imu.gyro_noise (ARW) and imu.accel_noise (VRW). Everything else adapts within the first minute of operation. |

| Robot runs on Raspberry Pi or Jetson | Under 0.2 ms per cycle on i7. Under 1 ms on Raspberry Pi 4. Same binary on ARM (NEON auto-detected) and x86 (AVX auto-detected) via Eigen. No recompilation, no parameter changes. |

| Two IMUs on the platform | Set imu2.topic to fuse a second IMU as an independent measurement. No pre-merging with imu_filter_madgwick needed. |

| GPS drops out in tunnels or canopy | Inertial coast mode maintains position integrity during sustained GPS dropout. Outlier gate relaxes automatically to reacquire when GPS returns. |

| Robot sits still for minutes | ZUPT (zero velocity update) fuses a zero-velocity pseudo-measurement when encoder speed and angular rate are both below threshold. Prevents IMU noise from integrating into position drift during idle periods. |

Benchmark

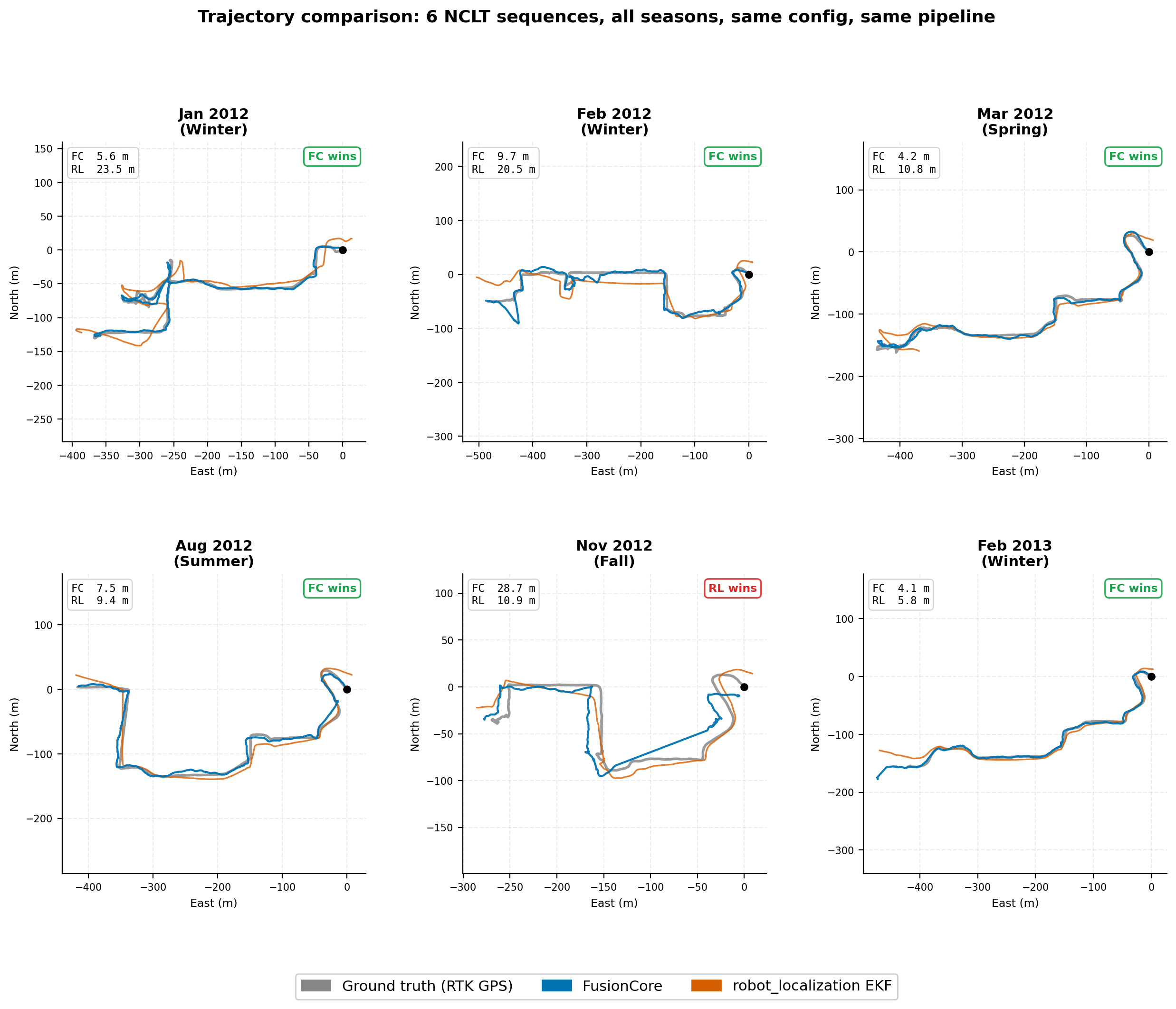

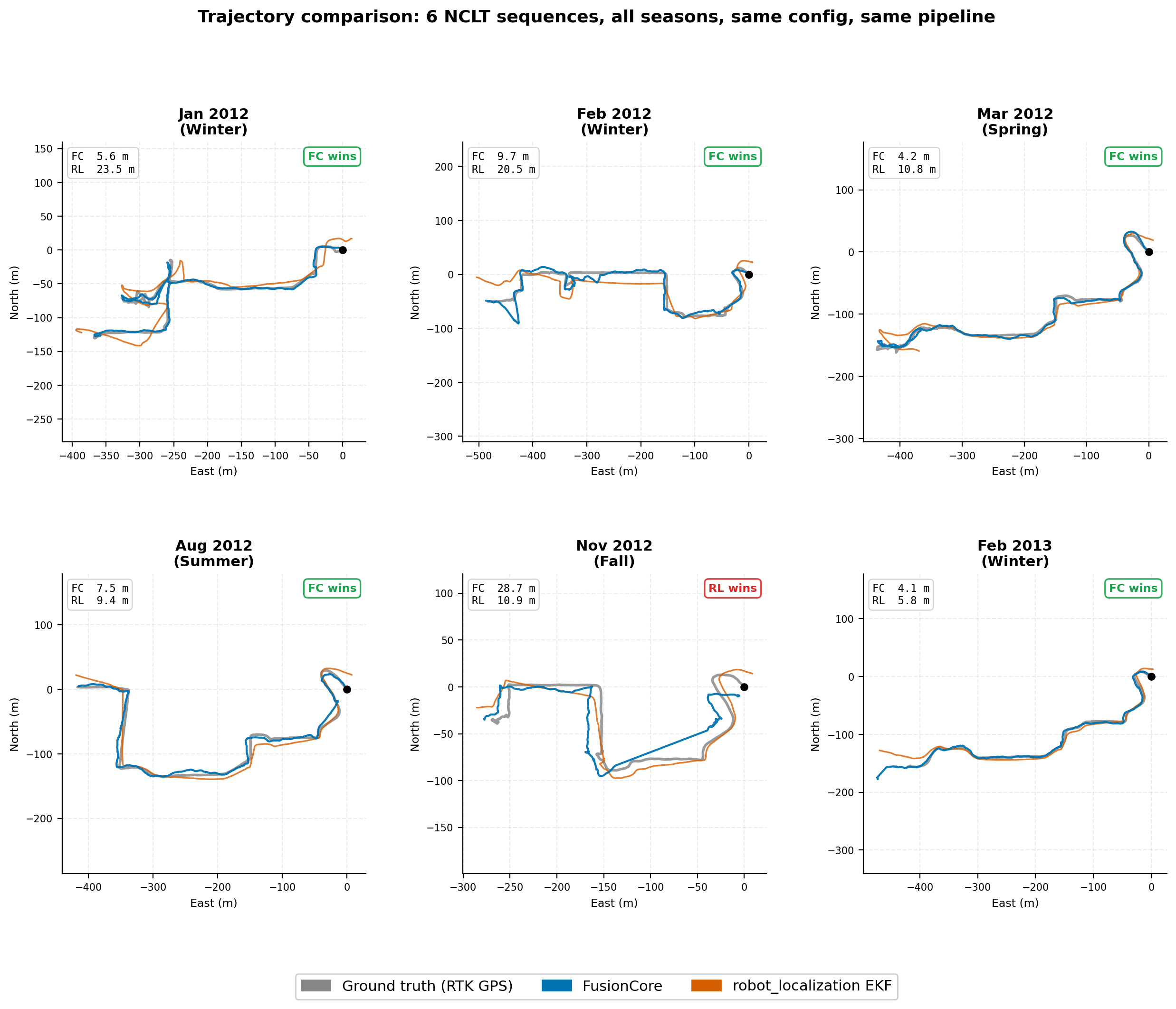

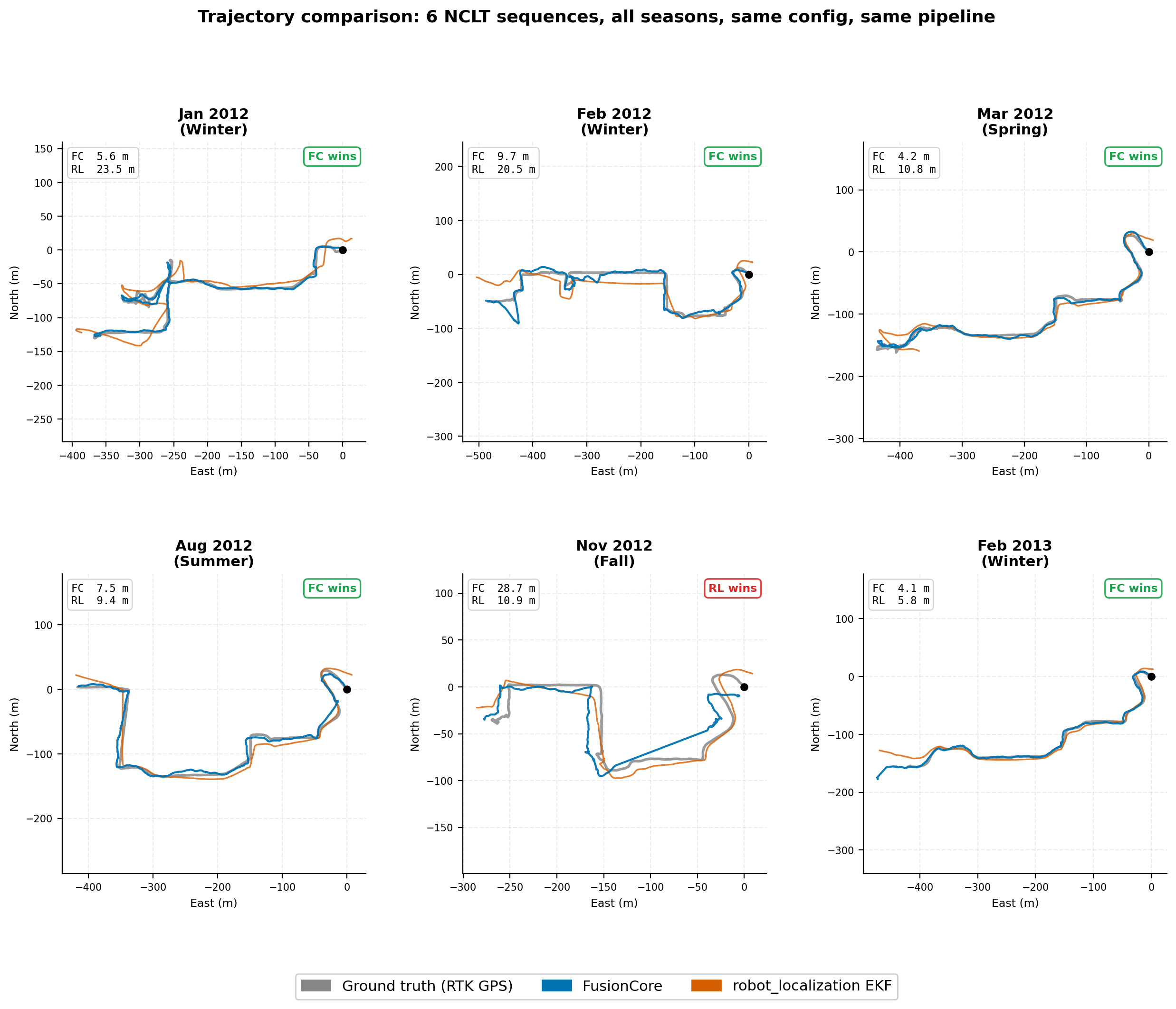

FusionCore vs robot_localization on the NCLT dataset: same IMU + wheel odometry + GPS, no manual tuning. Twelve full-length sequences across all seasons. RL-EKF run with chi-squared-equivalent thresholds at 99.9% confidence.

| Sequence | Season | Duration | FC ATE RMSE | RL-EKF ATE RMSE | Winner |

|---|---|---|---|---|---|

| 2012-01-08 | Winter | 92 min | 18.6 m | 41.2 m | FC +55% |

| 2012-02-04 | Winter | 77 min | 49.7 m | 265.5 m | FC +81% |

| 2012-03-31 | Spring | 87 min | 22.0 m | 156.5 m | FC +86% |

| 2012-05-11 | Spring | 84 min | 9.7 m | 11.5 m | FC +16% |

| 2012-06-15 | Summer | 55 min | 49.2 m | 18.2 m | RL +63% |

| 2012-08-20 | Summer | 83 min | 98.3 m | 10.6 m | RL +89% |

| 2012-09-28 | Fall | 77 min | 10.8 m | 55.7 m | FC +81% |

| 2012-10-28 | Fall | 85 min | 29.9 m | 60.0 m | FC +50% |

| 2012-11-04 | Fall | 79 min | 60.1 m | 122.0 m | FC +51% |

| 2012-12-01 | Winter | 75 min | 21.0 m | 90.7 m | FC +77% |

| 2013-02-23 | Winter | 78 min | 59.4 m | 82.2 m | FC +28% |

| 2013-04-05 | Spring | 68 min | 12.1 m | 268.9 m | FC +96% |

RL-UKF diverges with NaN on all twelve sequences. FusionCore wins 10/12 sequences. RL-EKF’s losses trace to a single root cause: the GPS driver reports 3m sigma, but measured against RTK ground truth, actual p95 noise is 9.7-53.1m depending on the day. RL’s gate is calibrated to the stated 3m and rejects valid fixes on bad-GPS days. FusionCore’s adaptive noise estimation (adaptive.gnss: true) keeps chi2 statistics calibrated in real time.

The two FC losses are driven by a GPS data quality issue on 2012-08-20 (105 corrupt mode-3 fixes in a 24-second window at a blackout boundary) and accumulated heading error during a 462-second GPS blackout on 2012-06-15. See benchmarks/README.md for full per-sequence analysis including root causes and path-to-fix.


CONTRIBUTING

Contributing to FusionCore

Thanks for your interest. Contributions are welcome: hardware configs, bug fixes, tests, and documentation all help.

The fastest way to contribute

The most impactful contributions right now are hardware configs. If you have FusionCore running on a robot, platform, or IMU that isn’t in the repo yet, open a PR adding a YAML under fusioncore_ros/config/. See the hardware config section below.

Before you start

- Check open issues: the bug may already be reported

- Check Discussions: the question may already be answered

- For anything bigger than a typo fix, open an issue or Discussion first so we can align before you write code

Development setup

# Clone and build

git clone https://github.com/manankharwar/fusioncore.git

cd fusioncore

source /opt/ros/jazzy/setup.sh # replace jazzy with humble on Ubuntu 22.04

rosdep install -r --from-paths . --ignore-src --rosdistro jazzy -y # replace jazzy with humble on Ubuntu 22.04

colcon build --packages-up-to compass_msgs fusioncore_core fusioncore_ros --cmake-args -DBUILD_TESTING=ON

# Run all tests before and after your change

colcon test --packages-select compass_msgs fusioncore_core fusioncore_ros

colcon test-result --verbose

All tests must pass; CI will flag any failures.

Hardware configs

A hardware config is a YAML file under fusioncore_ros/config/ named after the platform (e.g. clearpath_husky.yaml, ublox_f9p.yaml).

Copy fusioncore_ros/config/fusioncore.yaml as the starting point and adjust:

- imu.gyro_noise / imu.accel_noise: pull from your IMU's datasheet

- gnss.base_noise_xy: your GPS receiver's CEP spec

- Any topic remaps specific to your platform

Add a comment at the top with: platform name, IMU model, GPS receiver model, and whether it was field-tested or tuned from datasheet only. Field-tested configs get merged faster.
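Datasheets usually quote IMU noise as angle random walk in deg/√h and velocity random walk in (m/s)/√h, and GPS accuracy as CEP50. The helpers below convert those to SI noise densities and a per-axis 1-sigma. Whether imu.gyro_noise, imu.accel_noise, and gnss.base_noise_xy expect exactly these units is an assumption here; check the comments in fusioncore_ros/config/fusioncore.yaml before copying values. The function names are hypothetical.

```cpp
#include <cassert>
#include <cmath>

constexpr double kPi = 3.14159265358979323846;

// Angle random walk: deg/sqrt(h) -> rad/s/sqrt(Hz).
// sqrt(1 h) = 60 sqrt(s), and rad/sqrt(s) is the same unit as rad/s/sqrt(Hz).
double arwDegPerRootHourToSi(double arw) {
  return arw * (kPi / 180.0) / 60.0;
}

// Velocity random walk: (m/s)/sqrt(h) -> m/s^2/sqrt(Hz).
double vrwPerRootHourToSi(double vrw) {
  return vrw / 60.0;
}

// CEP50 -> per-axis 1-sigma, assuming a circular Gaussian horizontal error:
// CEP50 = sigma * sqrt(2 ln 2), i.e. about 1.1774 * sigma.
double cep50ToSigma(double cep50) {
  assert(cep50 >= 0.0);
  return cep50 / std::sqrt(2.0 * std::log(2.0));
}
```

For example, a gyro ARW of 0.3 deg/√h corresponds to about 8.73e-5 rad/s/√Hz, and a receiver spec of 2.5 m CEP50 corresponds to a per-axis sigma of about 2.12 m. These values are illustrative and not taken from a specific part.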

Pull request checklist

- All tests pass (colcon test-result --verbose shows 0 failures)

- For new features: tests added in fusioncore_core/tests/

- For hardware configs: YAML includes a comment with platform + sensor details

- Commit message describes why, not just what

Code style

C++17. Follow the style of the surrounding code: no reformatting unrelated lines. clang-format is not enforced but is appreciated.

Reporting bugs

Use the Bug Report issue template. Include the output of colcon test-result --verbose if tests are involved.

Questions

Open a Discussion rather than an issue. Issues are for bugs and tracked work; Discussions are for questions, configs, and ideas.

Response time: typically within 24 hours.

|

fusioncore repositorycompass_msgs fusioncore_core fusioncore_datasets fusioncore_gazebo fusioncore_ros |

ROS Distro

|

Repository Summary

| Checkout URI | https://github.com/manankharwar/fusioncore.git |

| VCS Type | git |

| VCS Version | main |

| Last Updated | 2026-05-26 |

| Dev Status | MAINTAINED |

| Released | RELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| compass_msgs | 0.2.4 |

| fusioncore_core | 0.2.4 |

| fusioncore_datasets | 0.2.4 |

| fusioncore_gazebo | 0.2.4 |

| fusioncore_ros | 0.2.5 |

README

FusionCore

ROS 2 UKF sensor fusion for robots that run in the real world. IMU + wheel encoders + GPS at 100 Hz. Handles bad calibration, timestamp jitter, delayed GPS, wheel slip, and ARM hardware out of the box. Apache 2.0.

Install

Option A: From source (ROS 2 Jazzy on Ubuntu 24.04 or Humble on Ubuntu 22.04):

mkdir -p ~/ros2_ws/src && cd ~/ros2_ws/src

git clone https://github.com/manankharwar/fusioncore.git

cd ~/ros2_ws

source /opt/ros/jazzy/setup.bash # or /opt/ros/humble/setup.bash

rosdep install --from-paths src --ignore-src -r -y

colcon build --packages-up-to fusioncore_ros

source install/setup.bash

Verify it works (single command, replaces the 4-terminal manual test):

bash tools/quick_test.sh

Starts FusionCore with fake sensors and checks all outputs in about 15 seconds. Prints [PASS] / [FAIL] for each check.

Option B: Docker (no ROS install required)

docker pull ghcr.io/manankharwar/fusioncore:latest

docker run --rm -it ghcr.io/manankharwar/fusioncore:latest bash

Inside the container, verify everything works:

bash tools/quick_test.sh

Works on the hardware you actually have

Most sensor fusion tutorials assume clean data. Real robots don’t have clean data. FusionCore was built around the problems you actually run into.

| The problem | How FusionCore handles it |
|---|---|
| IMU calibration is approximate | Gyro and accel bias are filter states, estimated continuously. init.stationary_window: 2.0 estimates startup bias before motion begins, dropping startup drift from ~10 cm to under 1 cm. |
| Extrinsic calibration is never exact | Reads frame_id from every IMU message and looks up the TF rotation to base_link automatically. Set imu.frame_id to override broken frame names from drivers (e.g. Gazebo TurtleBot3). No manual rotation matrices. |
| Timestamp jitter and zero-stamped drivers | dt is clamped to prevent divergence from missed timer ticks. Wall-clock fallback for drivers that publish stamp={sec=0}. |
| GPS arrives late (50–200 ms) | IMU ring buffer replays 1 second of buffered updates when a delayed fix arrives. The state at the GPS timestamp is reconstructed exactly, not approximated. |
| Wheel odometry is noisy or slipping | Adaptive noise covariance updates from the innovation sequence. GPS velocity fusion (optional) compares GPS-reported speed against wheel speed every cycle: the innovation reveals slip, and the Kalman gain down-weights the slipping wheel automatically. |
| Noise parameters require days of tuning | Two numbers from your IMU datasheet: imu.gyro_noise (ARW) and imu.accel_noise (VRW). Everything else adapts within the first minute of operation. |
| Robot runs on Raspberry Pi or Jetson | Under 0.2 ms per cycle on an i7; under 1 ms on a Raspberry Pi 4. The same source builds on ARM (NEON) and x86 (AVX) through Eigen's vectorization. No code or parameter changes. |
| Two IMUs on the platform | Set imu2.topic to fuse a second IMU as an independent measurement. No pre-merging with imu_filter_madgwick needed. |
| GPS drops out in tunnels or canopy | Inertial coast mode maintains position integrity during sustained GPS dropout. The outlier gate relaxes automatically to reacquire when GPS returns. |
| Robot sits still for minutes | ZUPT (zero velocity update) fuses a zero-velocity pseudo-measurement when encoder speed and angular rate are both below threshold. This prevents IMU noise from integrating into position drift during idle periods. |
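The delayed-GPS row (buffer IMU samples, rewind to the fix time, replay) and the dt clamp can be illustrated with a one-dimensional toy filter. This is a minimal sketch under stated assumptions, not FusionCore's implementation: the state is 1-D position and velocity, the covariance is reduced to a single position variance, and `MAX_DT`, `State`, `ReplayFilter`, and the process-noise value are hypothetical names and numbers chosen for illustration. Only the 1-second buffer span comes from the table above.

```python
from collections import deque
from dataclasses import dataclass

MAX_DT = 0.05      # hypothetical dt clamp in seconds (not a documented FusionCore parameter)
BUFFER_SPAN = 1.0  # seconds of IMU history kept for replay


@dataclass(frozen=True)
class State:
    t: float    # time of the estimate (s)
    pos: float  # 1-D position (m)
    vel: float  # 1-D velocity (m/s)
    var: float  # position variance (m^2), a toy stand-in for the full covariance


def predict(s: State, t: float, accel: float, q: float = 0.01) -> State:
    """Propagate to time t; dt is clamped so a missed tick cannot cause one huge step."""
    dt = min(max(t - s.t, 0.0), MAX_DT)
    return State(t, s.pos + s.vel * dt + 0.5 * accel * dt * dt,
                 s.vel + accel * dt, s.var + q * dt)


def update(s: State, z: float, r: float) -> State:
    """Scalar Kalman update of position with a measurement z of variance r."""
    k = s.var / (s.var + r)
    return State(s.t, s.pos + k * (z - s.pos), s.vel, (1.0 - k) * s.var)


class ReplayFilter:
    def __init__(self, s0: State):
        self.state = s0
        # Each entry: (IMU time, accel, state *before* that sample was applied).
        self.history = deque()

    def on_imu(self, t: float, accel: float) -> None:
        self.history.append((t, accel, self.state))
        while t - self.history[0][0] > BUFFER_SPAN:  # drop samples older than the span
            self.history.popleft()
        self.state = predict(self.state, t, accel)

    def on_delayed_fix(self, t_fix: float, z: float, r: float) -> None:
        samples = list(self.history)
        # Index of the first buffered IMU sample newer than the fix.
        idx = next((i for i, (t, _, _) in enumerate(samples) if t > t_fix), len(samples))
        if idx == 0:
            return  # fix predates the buffer (or buffer is empty): dropped in this sketch
        t_prev, a_prev, before = samples[idx - 1]
        s = predict(before, t_prev, a_prev)  # state right after the last sample <= t_fix
        # Bridge to t_fix with the accel of the sample whose interval contains t_fix.
        a_bridge = samples[idx][1] if idx < len(samples) else a_prev
        s = update(predict(s, t_fix, a_bridge), z, r)
        # Re-apply every IMU sample newer than the fix, refreshing the stored snapshots.
        self.history = deque(samples[:idx])
        self.state = s
        for t, a, _ in samples[idx:]:
            self.history.append((t, a, self.state))
            self.state = predict(self.state, t, a)
```

Storing the pre-sample state with each IMU entry means a late fix costs one update plus re-propagation of at most one buffer's worth of samples. In this toy, a fix that arrives late ends in the same state as if it had arrived on time.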

Benchmark

FusionCore vs robot_localization on the NCLT dataset: same IMU + wheel odometry + GPS inputs, no manual tuning. Twelve full-length sequences across all seasons. RL-EKF was run with chi-squared-equivalent outlier rejection thresholds at 99.9% confidence.
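A 99.9% chi-squared gate has a closed form when the innovation is 2-D, for example a horizontal GPS position (the measurement dimension used in the benchmark is an assumption here). robot_localization's `*_rejection_threshold` parameters are expressed as a Mahalanobis distance, so the equivalent threshold is the square root of the chi-squared quantile. The function names below are hypothetical; this is a sketch of the standard gating test, not code from either package.

```python
import math


def chi2_quantile_2dof(p: float) -> float:
    """Chi-squared quantile for 2 degrees of freedom, where CDF(x) = 1 - exp(-x / 2)."""
    return -2.0 * math.log(1.0 - p)


def mahalanobis_sq_2d(dx: float, dy: float, sxx: float, syy: float, sxy: float = 0.0) -> float:
    """Squared Mahalanobis distance of innovation (dx, dy) under 2x2 covariance S."""
    det = sxx * syy - sxy * sxy
    if det <= 0.0:
        raise ValueError("innovation covariance must be positive definite")
    # (dx, dy) S^-1 (dx, dy)^T, using the closed-form 2x2 inverse
    return (syy * dx * dx - 2.0 * sxy * dx * dy + sxx * dy * dy) / det


GATE_SQ = chi2_quantile_2dof(0.999)  # about 13.82
GATE_SIGMA = math.sqrt(GATE_SQ)      # about 3.72 when expressed as a Mahalanobis distance


def accept(dx: float, dy: float, sxx: float, syy: float, sxy: float = 0.0) -> bool:
    """True if the innovation passes the 99.9% gate."""
    return mahalanobis_sq_2d(dx, dy, sxx, syy, sxy) <= GATE_SQ
```

If S is taken as the driver's stated 3 m sigma alone (9 m² per axis, ignoring state covariance for simplicity), a 10 m error on one axis gives d² ≈ 11.1 and passes, while a 15 m error gives d² = 25 and is rejected.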

| Sequence | Season | Duration | FC ATE RMSE | RL-EKF ATE RMSE | Winner (ATE reduction) |
|---|---|---|---|---|---|
| 2012-01-08 | Winter | 92 min | 18.6 m | 41.2 m | FC +55% |
| 2012-02-04 | Winter | 77 min | 49.7 m | 265.5 m | FC +81% |
| 2012-03-31 | Spring | 87 min | 22.0 m | 156.5 m | FC +86% |
| 2012-05-11 | Spring | 84 min | 9.7 m | 11.5 m | FC +16% |
| 2012-06-15 | Summer | 55 min | 49.2 m | 18.2 m | RL +63% |
| 2012-08-20 | Summer | 83 min | 98.3 m | 10.6 m | RL +89% |
| 2012-09-28 | Fall | 77 min | 10.8 m | 55.7 m | FC +81% |
| 2012-10-28 | Fall | 85 min | 29.9 m | 60.0 m | FC +50% |
| 2012-11-04 | Fall | 79 min | 60.1 m | 122.0 m | FC +51% |
| 2012-12-01 | Winter | 75 min | 21.0 m | 90.7 m | FC +77% |
| 2013-02-23 | Winter | 78 min | 59.4 m | 82.2 m | FC +28% |
| 2013-04-05 | Spring | 68 min | 12.1 m | 268.9 m | FC +96% |
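The Winner column is the relative reduction in ATE RMSE of the better filter versus the worse one; for 2012-01-08 that is (41.2 - 18.6) / 41.2 ≈ 55%. The helpers below reproduce the column from the two error columns. `ate_rmse` is a sketch that assumes the trajectories are already time-associated and aligned; the association and alignment procedure used for the table is not stated in this section, and both function names are hypothetical.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]


def ate_rmse(est: Sequence[Point], gt: Sequence[Point]) -> float:
    """RMSE of 2-D position error over poses that are already time-associated and aligned."""
    if len(est) != len(gt) or not est:
        raise ValueError("need two equal-length, non-empty trajectories")
    total = sum((ex - gx) ** 2 + (ey - gy) ** 2 for (ex, ey), (gx, gy) in zip(est, gt))
    return math.sqrt(total / len(est))


def winner(fc_ate: float, rl_ate: float) -> str:
    """Label the lower-error filter with its relative ATE reduction versus the other."""
    if fc_ate <= rl_ate:
        return f"FC +{round(100 * (rl_ate - fc_ate) / rl_ate)}%"
    return f"RL +{round(100 * (fc_ate - rl_ate) / fc_ate)}%"
```

Because the percentage is relative to the loser's error, a large margin can come from either a small winning error or a very large losing one (compare 2012-05-11 with 2013-04-05).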

RL-UKF diverges (NaN) on all twelve sequences. FusionCore wins 10 of 12 sequences. RL-EKF's losses trace to a single root cause: the GPS driver reports a 3 m sigma, but measured against RTK ground truth, the actual p95 noise is 9.7 to 53.1 m depending on the day. RL's gate is calibrated to the stated 3 m, so on bad-GPS days it rejects valid fixes. FusionCore's adaptive noise estimation (adaptive.gnss: true) keeps the chi-squared statistics calibrated in real time.
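One common way to keep a gate calibrated when the driver's stated sigma is wrong is innovation-based adaptive estimation: since E[ν²] = HPHᵀ + R, the mean squared innovation over a recent window, minus the predicted part HPHᵀ, estimates the true measurement variance R. The sketch below is a scalar illustration of that generic technique, not FusionCore's adaptive.gnss implementation; the class name, window length, and floor are hypothetical.

```python
from collections import deque

CHI2_1DOF_999 = 10.828  # 99.9% chi-squared quantile, 1 degree of freedom


class InnovationNoiseEstimator:
    """Windowed estimate of a scalar measurement variance R from innovations.

    Uses E[nu^2] = H P H^T + R, so R_hat = mean(nu^2) - mean(H P H^T), floored at r_min.
    """

    def __init__(self, window: int = 50, r_min: float = 1.0):
        self.samples = deque(maxlen=window)  # (innovation, H P H^T) pairs
        self.r_min = r_min

    def observe(self, nu: float, hph: float) -> None:
        self.samples.append((nu, hph))

    def estimate(self, r_default: float) -> float:
        if not self.samples:
            return r_default  # no evidence yet: fall back to the stated variance
        n = len(self.samples)
        mean_sq = sum(nu * nu for nu, _ in self.samples) / n
        mean_hph = sum(hph for _, hph in self.samples) / n
        return max(mean_sq - mean_hph, self.r_min)


def passes_gate(nu: float, hph: float, r: float, gate: float = CHI2_1DOF_999) -> bool:
    """Normalized innovation squared test: nu^2 / (H P H^T + R) <= gate."""
    return nu * nu / (hph + r) <= gate
```

With a stated 3 m sigma (R = 9 m²), a 15 m innovation fails the 99.9% gate. Once the estimator has seen innovations of about 10 m, R rises to about 99 m² and the same fix passes. A real implementation also has to decide whether gated-out innovations feed the estimator; otherwise an underestimated R can lock itself in.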

The two FC losses are driven by a GPS data quality issue on 2012-08-20 (105 corrupt mode-3 fixes in a 24-second window at a blackout boundary) and accumulated heading error during a 462-second GPS blackout on 2012-06-15. See benchmarks/README.md for full per-sequence analysis including root causes and path-to-fix.


CONTRIBUTING

Contributing to FusionCore

Thanks for your interest. Contributions are welcome: hardware configs, bug fixes, tests, and documentation all help.

The fastest way to contribute

The most impactful contributions right now are hardware configs. If you have FusionCore running on a robot, platform, or IMU that isn’t in the repo yet, open a PR adding a YAML under fusioncore_ros/config/. See the hardware config section below.

Before you start

- Check open issues: the bug may already be reported

- Check Discussions: the question may already be answered

- For anything bigger than a typo fix, open an issue or Discussion first so we can align before you write code

Development setup

# Clone and build

git clone https://github.com/manankharwar/fusioncore.git

cd fusioncore

source /opt/ros/jazzy/setup.sh # replace jazzy with humble on Ubuntu 22.04

rosdep install -r --from-paths . --ignore-src --rosdistro jazzy -y # replace jazzy with humble on Ubuntu 22.04

colcon build --packages-up-to compass_msgs fusioncore_core fusioncore_ros --cmake-args -DBUILD_TESTING=ON

# Run all tests before and after your change

colcon test --packages-select compass_msgs fusioncore_core fusioncore_ros

colcon test-result --verbose

All tests must pass. CI will catch it if they don't.

Hardware configs

A hardware config is a YAML file under fusioncore_ros/config/ named after the platform (e.g. clearpath_husky.yaml, ublox_f9p.yaml).

Copy fusioncore_ros/config/fusioncore.yaml as the starting point and adjust:

- imu.gyro_noise / imu.accel_noise: pull from your IMU's datasheet

- gnss.base_noise_xy: your GPS receiver's CEP spec

- Any topic remaps specific to your platform

Add a comment at the top with: platform name, IMU model, GPS receiver model, and whether it was field-tested or tuned from datasheet only. Field-tested configs get merged faster.
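Datasheets quote these numbers in mixed units. The helpers below convert common datasheet units to SI. This section does not state which units imu.gyro_noise, imu.accel_noise, and gnss.base_noise_xy expect, so check the comments in fusioncore_ros/config/fusioncore.yaml before using any of them. The CEP conversion additionally assumes a per-axis 1-sigma value is wanted and that horizontal error is a circular Gaussian; both are assumptions, and the function names are hypothetical.

```python
import math

G0 = 9.80665  # standard gravity, m/s^2


def arw_deg_per_sqrt_hr_to_si(arw: float) -> float:
    """Angle random walk: deg/sqrt(h) -> rad/s/sqrt(Hz) (equivalently rad/sqrt(s))."""
    return math.radians(arw) / 60.0  # sqrt(1 h) = sqrt(3600 s) = 60 sqrt(s)


def vrw_m_per_s_per_sqrt_hr_to_si(vrw: float) -> float:
    """Velocity random walk: m/s/sqrt(h) -> m/s^2/sqrt(Hz)."""
    return vrw / 60.0


def accel_density_ug_per_sqrt_hz_to_si(density: float) -> float:
    """Accelerometer noise density: micro-g/sqrt(Hz) -> m/s^2/sqrt(Hz)."""
    return density * 1e-6 * G0


def cep50_to_sigma(cep50: float) -> float:
    """Per-axis sigma of a circular Gaussian whose 50% circle has radius cep50."""
    return cep50 / math.sqrt(2.0 * math.log(2.0))  # CEP50 is about 1.1774 sigma
```

For example, a gyro with 0.1 deg/√h ARW works out to roughly 2.9e-5 rad/s/√Hz, and a receiver spec of 2.5 m CEP50 corresponds to about 2.12 m per-axis sigma.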

Pull request checklist

- All tests pass (colcon test-result --verbose shows 0 failures)

- For new features: tests added in fusioncore_core/tests/

- For hardware configs: YAML includes a comment with platform + sensor details

- Commit message describes why, not just what

Code style

C++17. Follow the style of the surrounding code: no reformatting unrelated lines. clang-format is not enforced but is appreciated.

Reporting bugs

Use the Bug Report issue template. Include the output of colcon test-result --verbose if tests are involved.

Questions

Open a Discussion rather than an issue. Issues are for bugs and tracked work; Discussions are for questions, configs, and ideas.

Response time: typically within 24 hours.
