Repository Summary

| Field | Value |
|---|---|
| Checkout URI | https://github.com/vtalpaert/ros2-phone-sensors.git |
| VCS Type | git |
| VCS Version | main |
| Last Updated | 2026-03-18 |
| Dev Status | DEVELOPED |
| Released | UNRELEASED |


Packages

| Name | Version |
|---|---|
| phone_sensors_bridge | 0.0.0 |
| phone_sensors_bridge_examples | 0.0.0 |

README

Use your phone as a sensor bridge in ROS2

Turn your phone’s GPS, IMU and cameras into ROS2 sensors. Connect an Arduino over USB to close the control loop. No custom app, just a browser.

Sensor-only use case (e.g. VSLAM, localization): mount the phone on a robot or handheld rig. It streams camera, IMU and GPS into ROS2 topics, ready for robot_localization, ORB-SLAM3, or any visual-inertial pipeline.

Full mobile robot use case: connect an Arduino or Teensy to the phone over USB. The phone relays commands from ROS2 down to the microcontroller (motor drivers, servos, …) while simultaneously streaming sensors upward. Your robot only needs firmware for low-level actuation; the phone handles perception and communication.

The bridge relies on the mobile browser rather than a dedicated app. A webpage is served from the ROS2 node; opening it on the phone prompts for permissions and starts streaming over WebSockets.

This repository is inspired by phone-imu, a project I did with students as a TA.

Build

```bash
source /opt/ros/humble/setup.bash
rosdep install -i --from-path src --rosdistro humble -y --ignore-src
colcon build --packages-up-to phone_sensors_bridge
# Or build for development, but remember to clean and rebuild every time the JS files are changed
# colcon build --symlink-install --packages-up-to phone_sensors_bridge_examples --event-handlers console_direct+
```

phone_sensors_bridge usage

Quickstart

Run the following in a different terminal from the one used for building. Otherwise the server might start from inside the build folder, which does not contain the template and static folders for the webpage.

```bash
source install/setup.bash
# Ubuntu IP
EXTRA_IP=$(ip route get 8.8.8.8 | grep -oP 'src \K[^ ]+')
echo "My IP is $EXTRA_IP"
# Generate SSL certificates for local webserver
ros2 run phone_sensors_bridge generate_dev_certificates.sh $EXTRA_IP
# Start server
ros2 run phone_sensors_bridge server --ros-args -p camera1_video_width:=720 -p camera1_video_height:=720
```
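If you script the startup in Python rather than bash, the IP lookup above can be mirrored by parsing the output of the same `ip route get 8.8.8.8` command. This is a minimal sketch. `source_ip` and `bridge_url` are hypothetical helpers, not part of phone_sensors_bridge, and the default port 2000 is taken from the URL given in this README.

```python
import re

# Same idea as the shell one-liner: capture the address that follows "src".
_SRC_RE = re.compile(r"\bsrc\s+(\S+)")


def source_ip(ip_route_output: str) -> str:
    """Extract the source IP from the output of `ip route get 8.8.8.8`."""
    match = _SRC_RE.search(ip_route_output)
    if match is None:
        raise ValueError("no 'src' field in ip route output")
    return match.group(1)


def bridge_url(ip: str, port: int = 2000) -> str:
    """URL to open on the phone (port 2000 assumed, as in this README)."""
    return f"https://{ip}:{port}"
```

For example, feed it `subprocess.run(["ip", "route", "get", "8.8.8.8"], capture_output=True, text=True).stdout` and print `bridge_url(...)` so the URL is ready to type on the phone.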

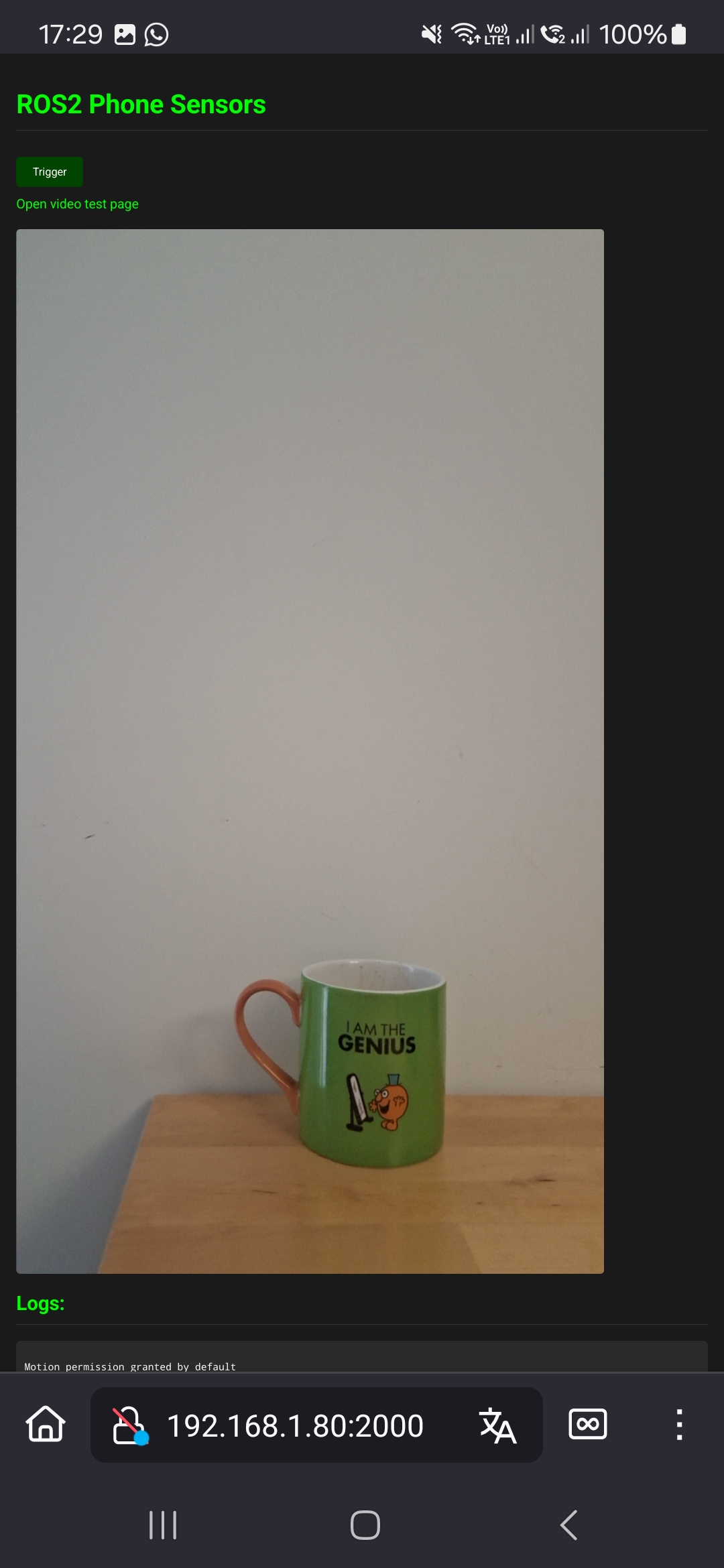

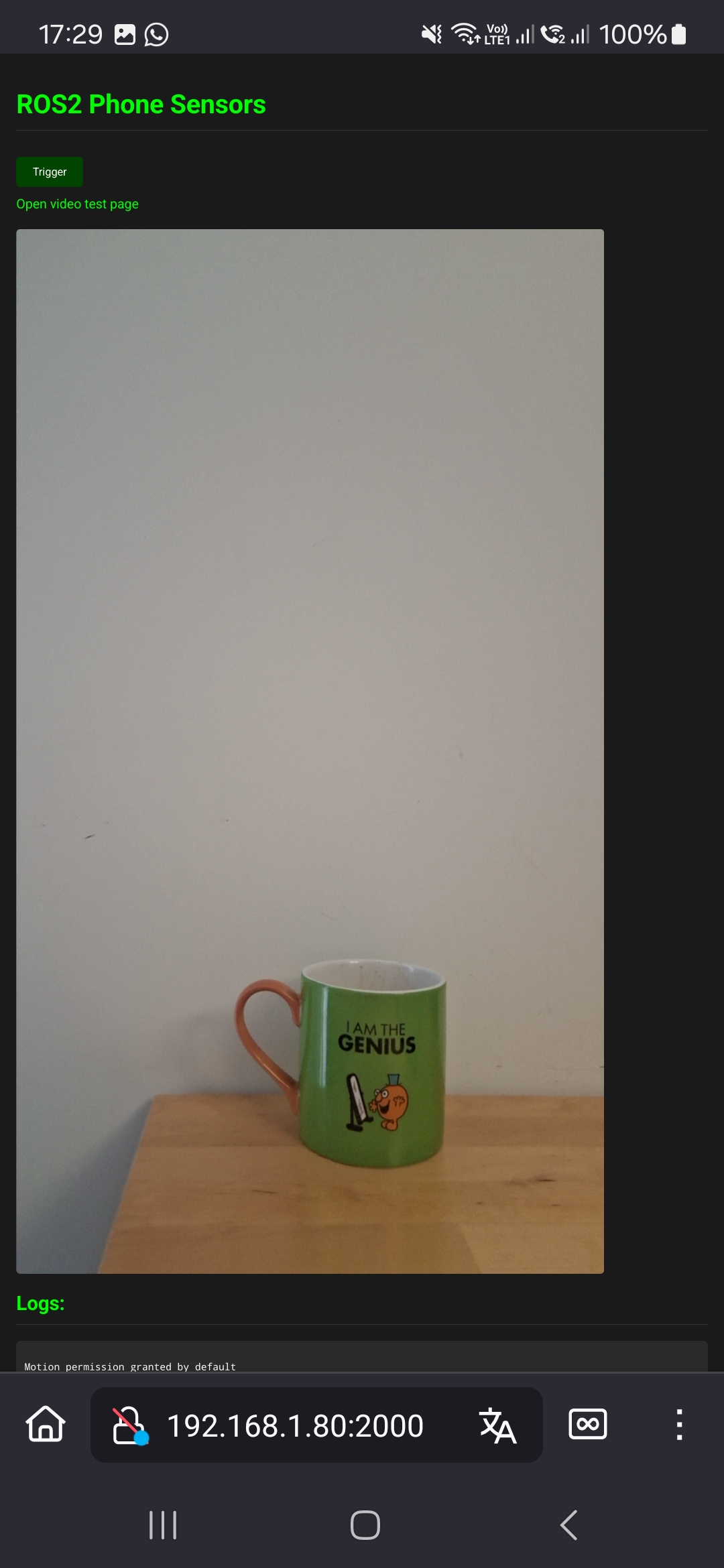

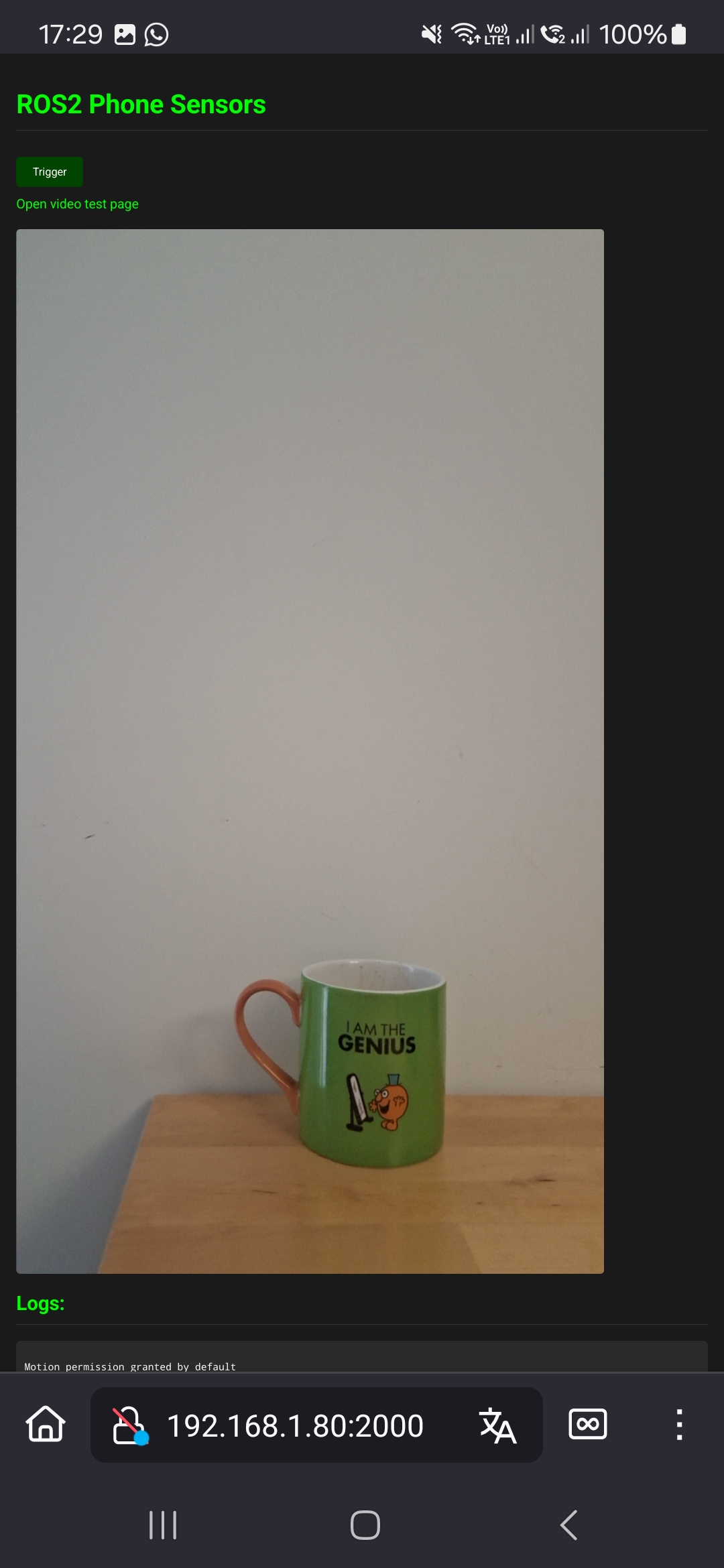

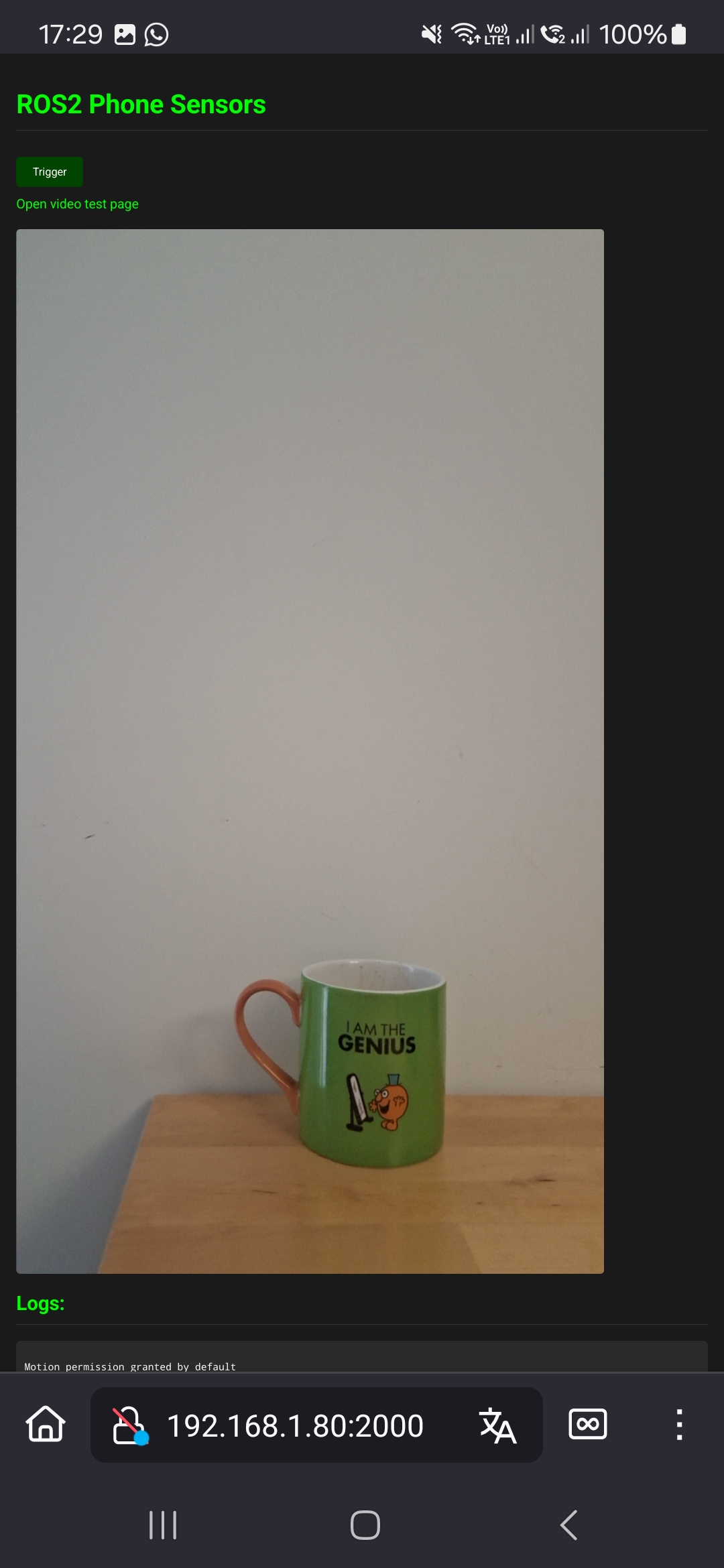

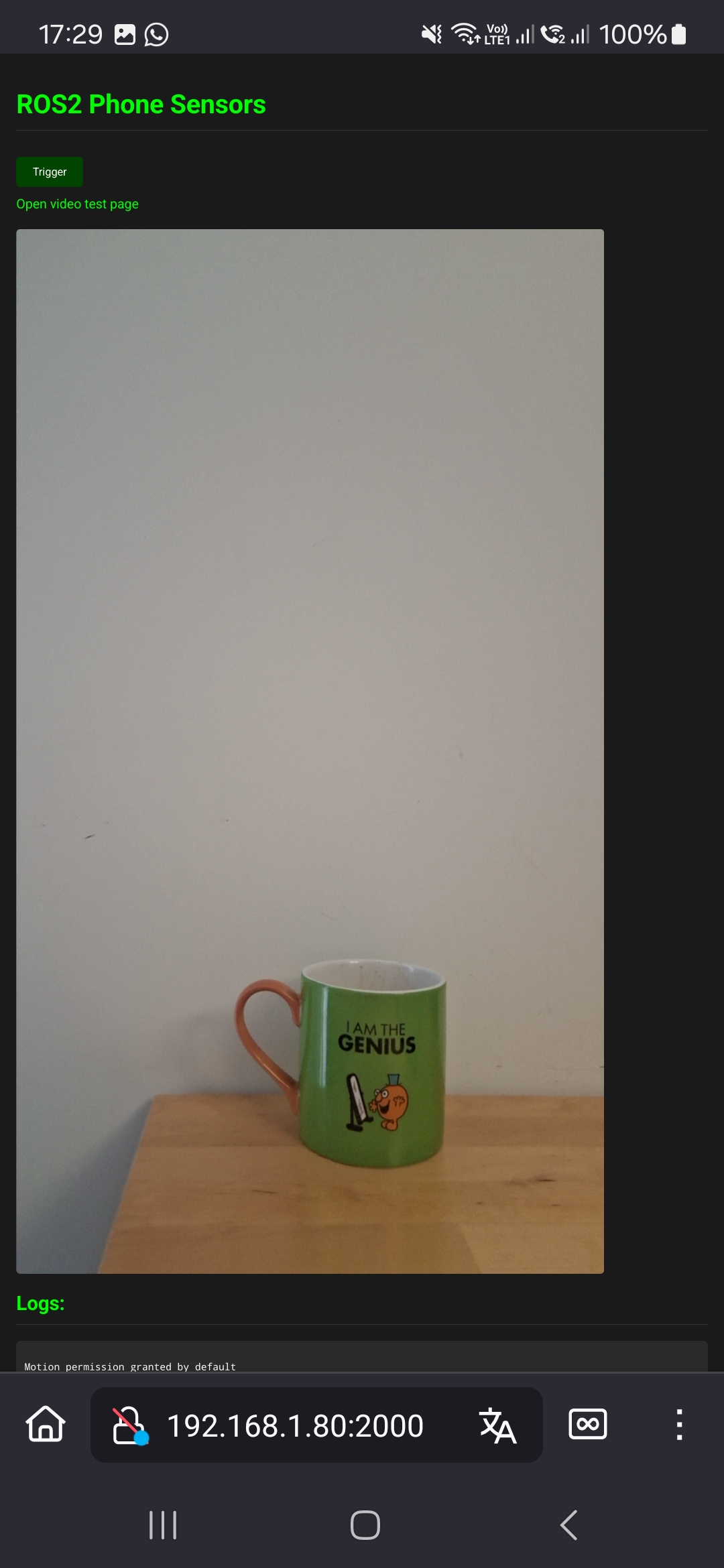

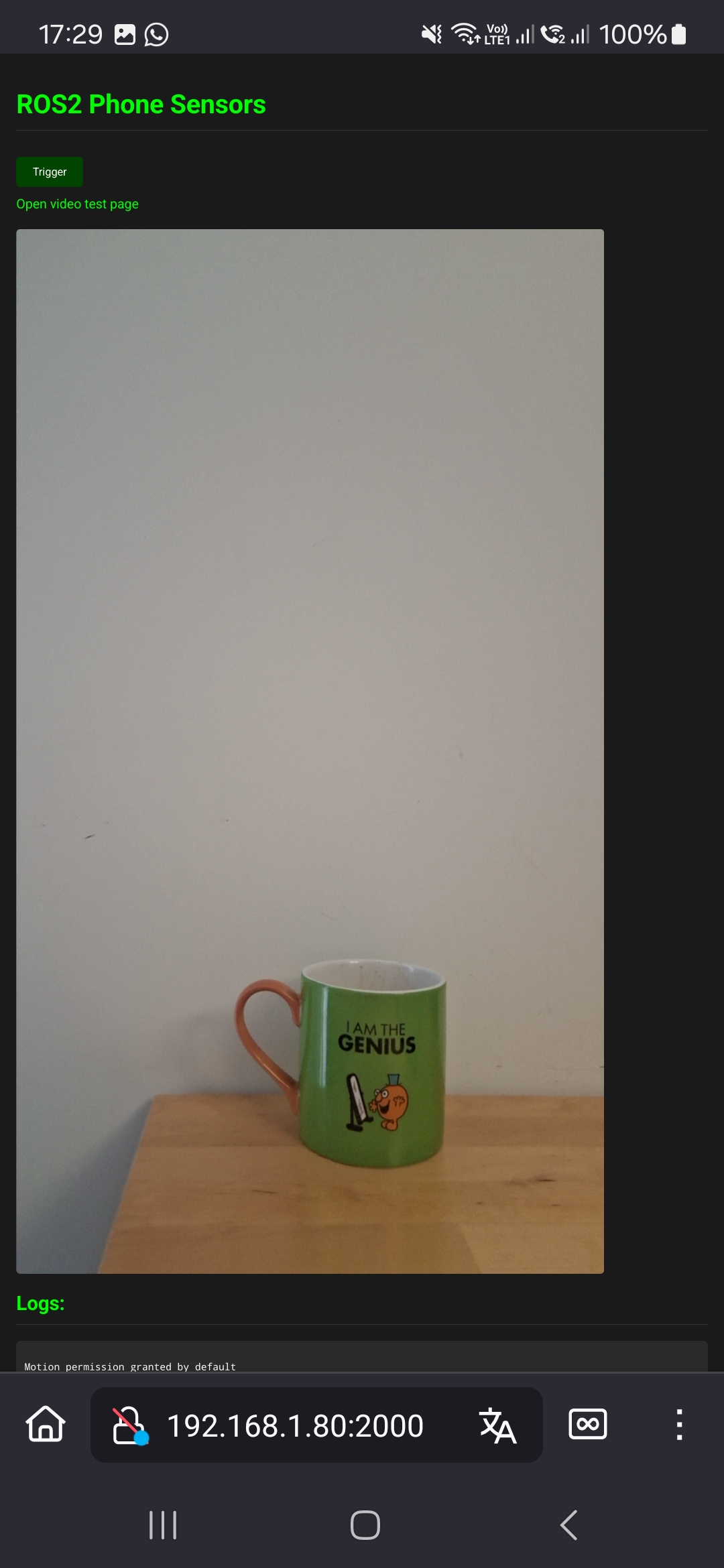

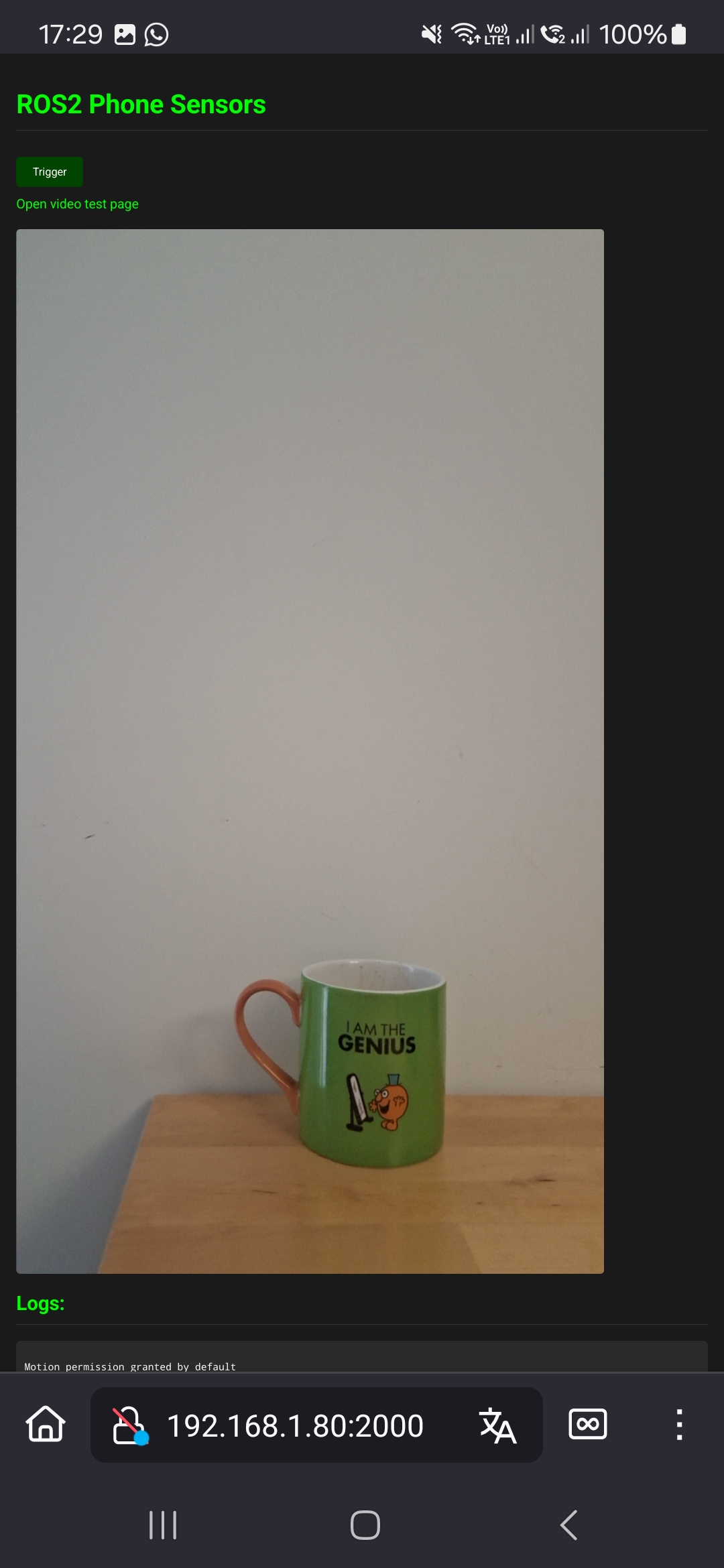

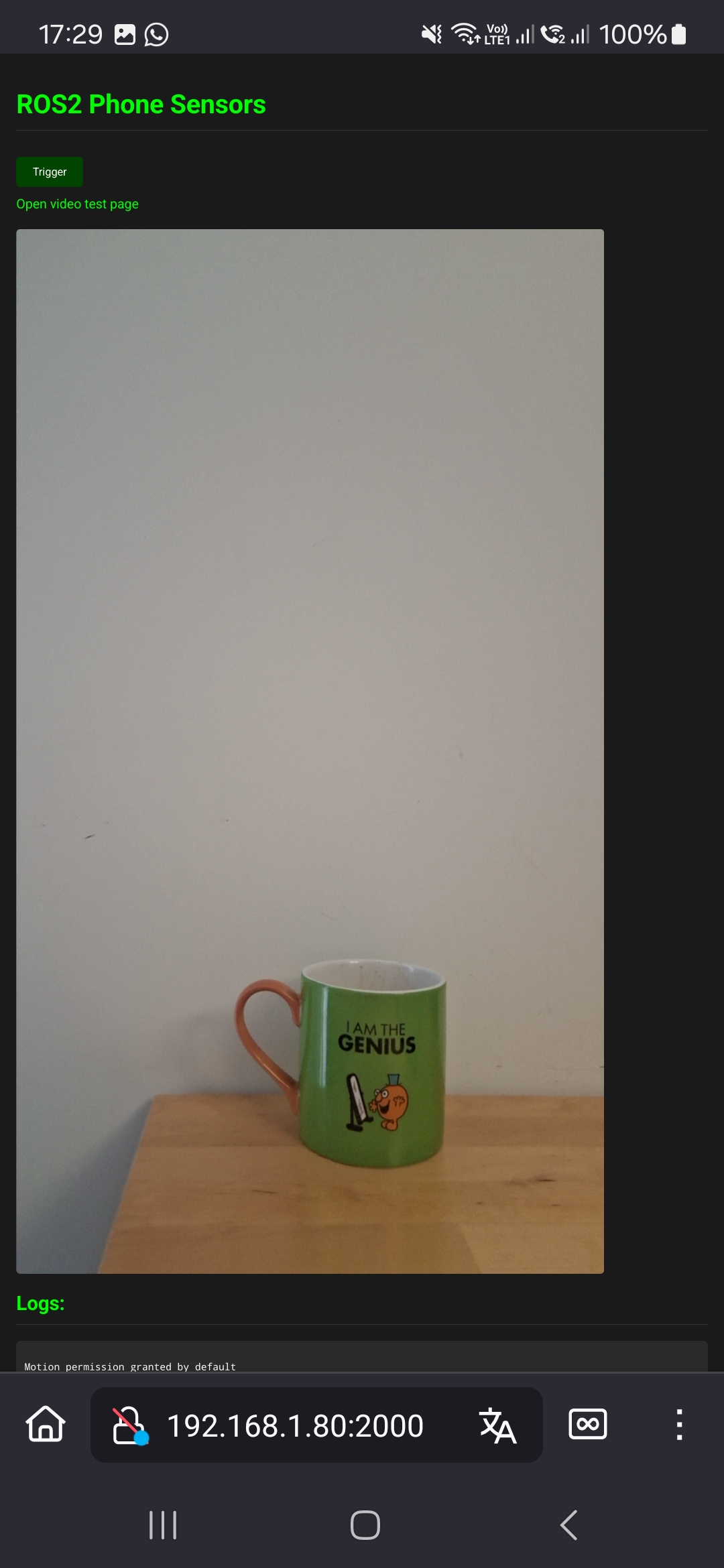

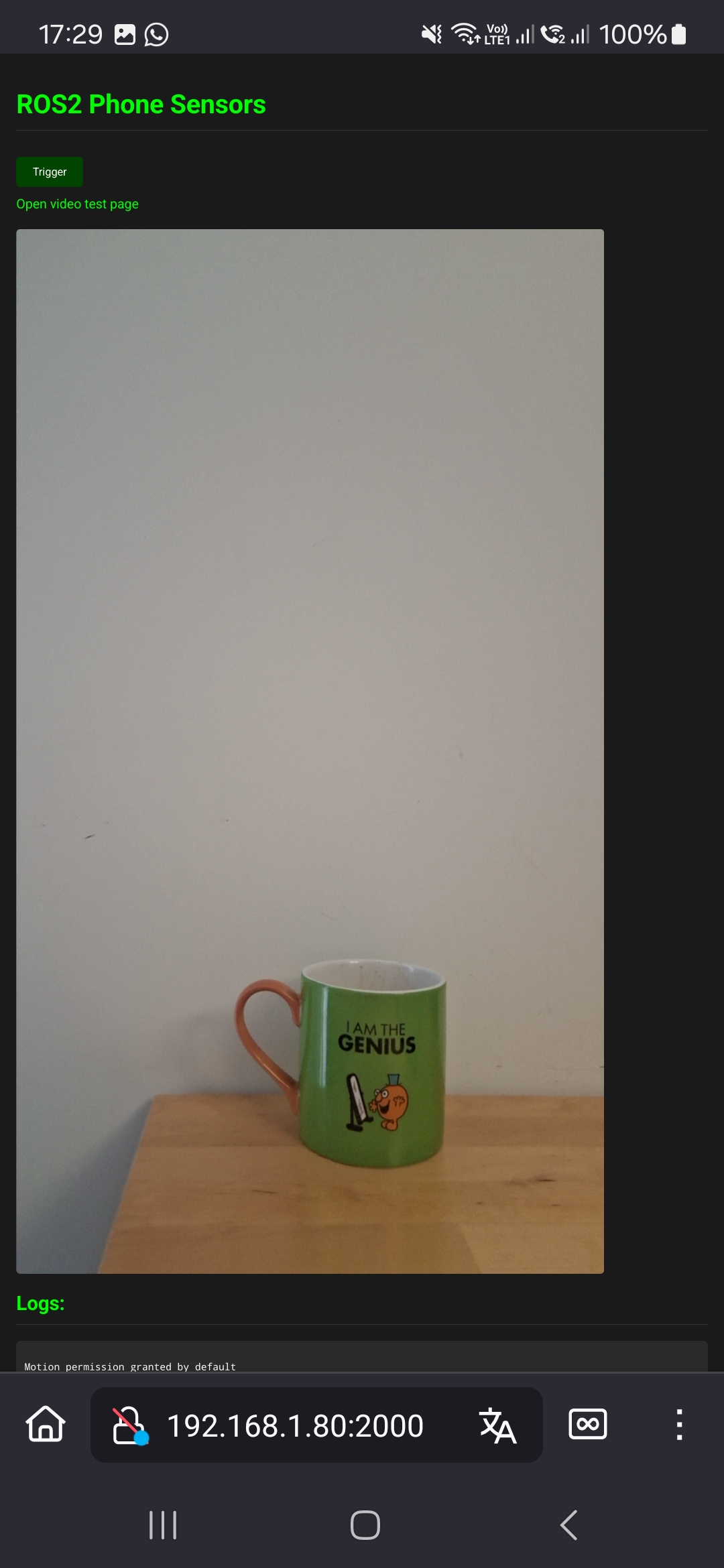

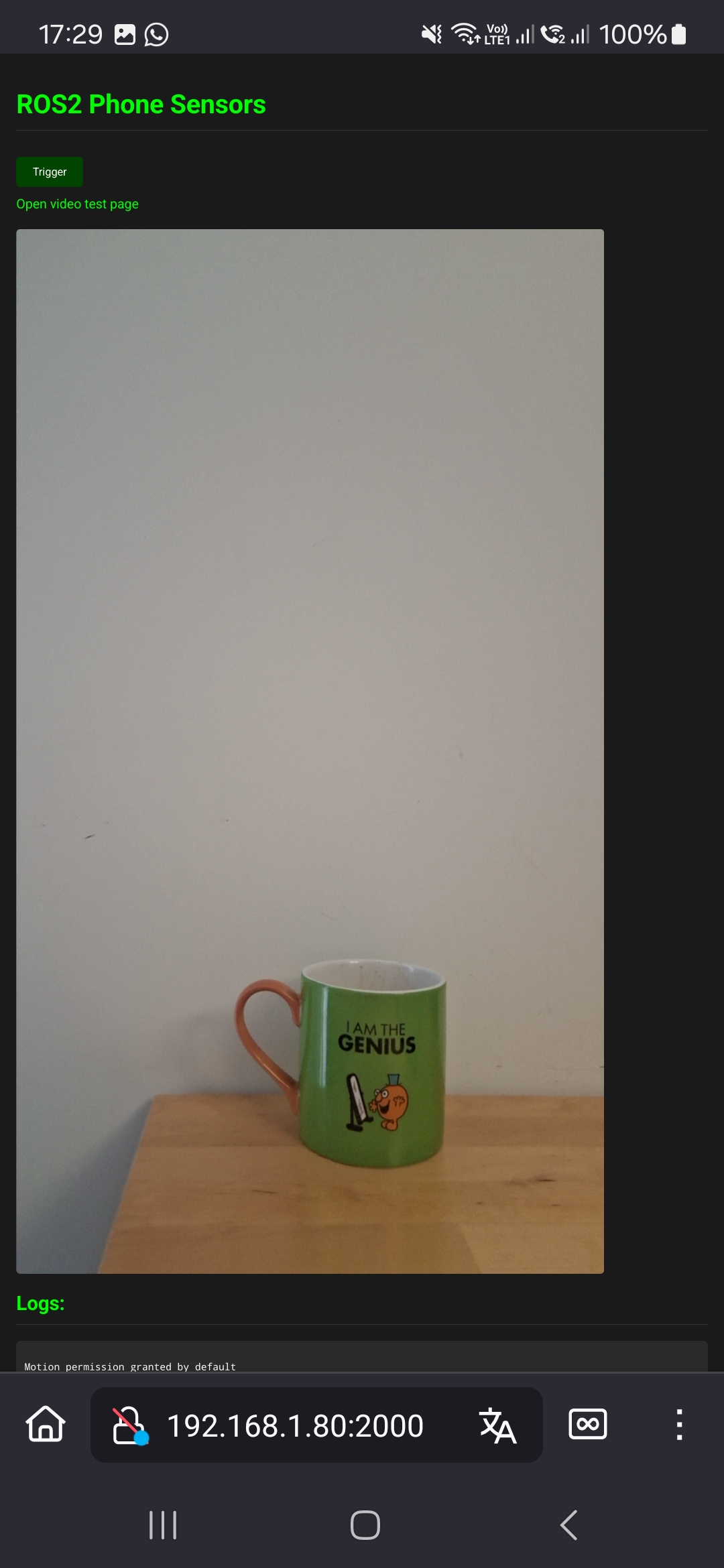

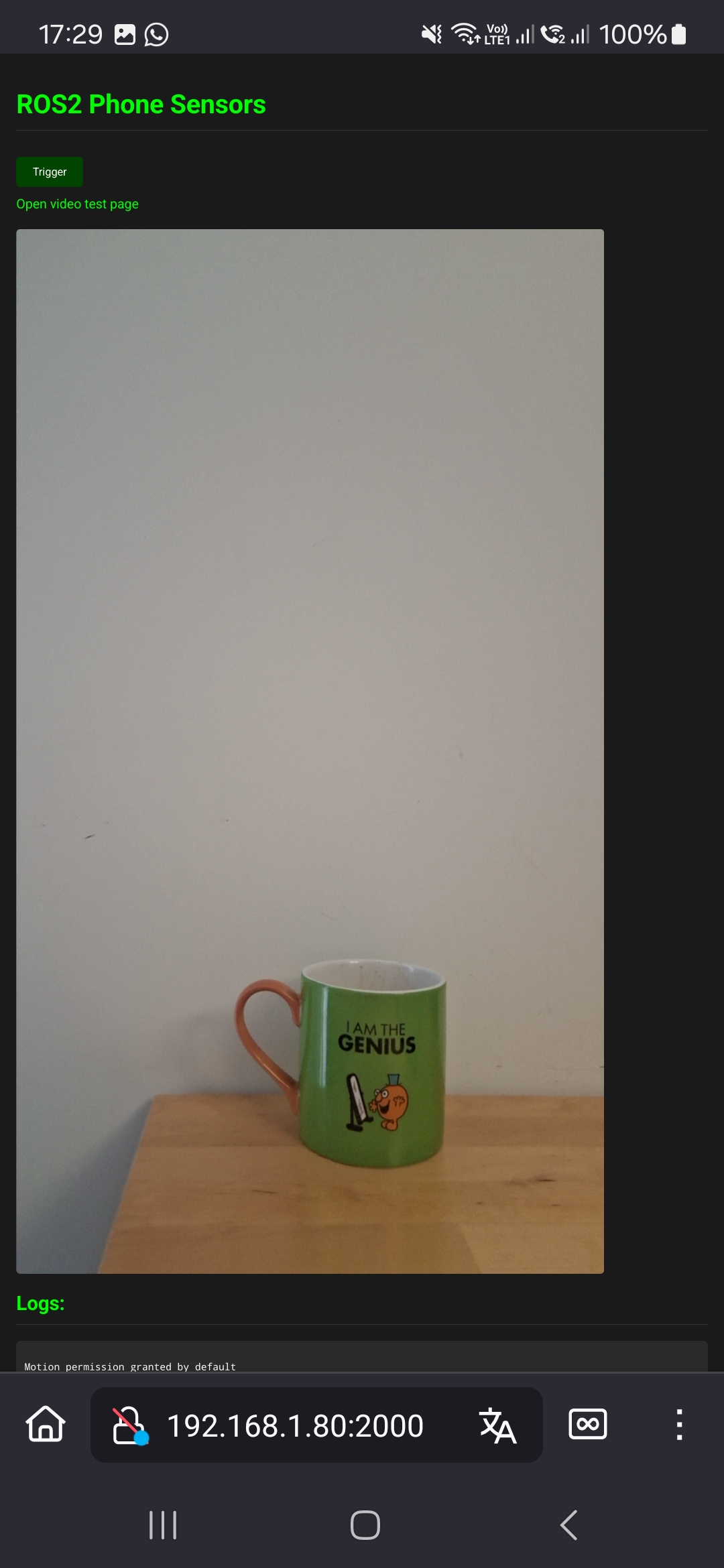

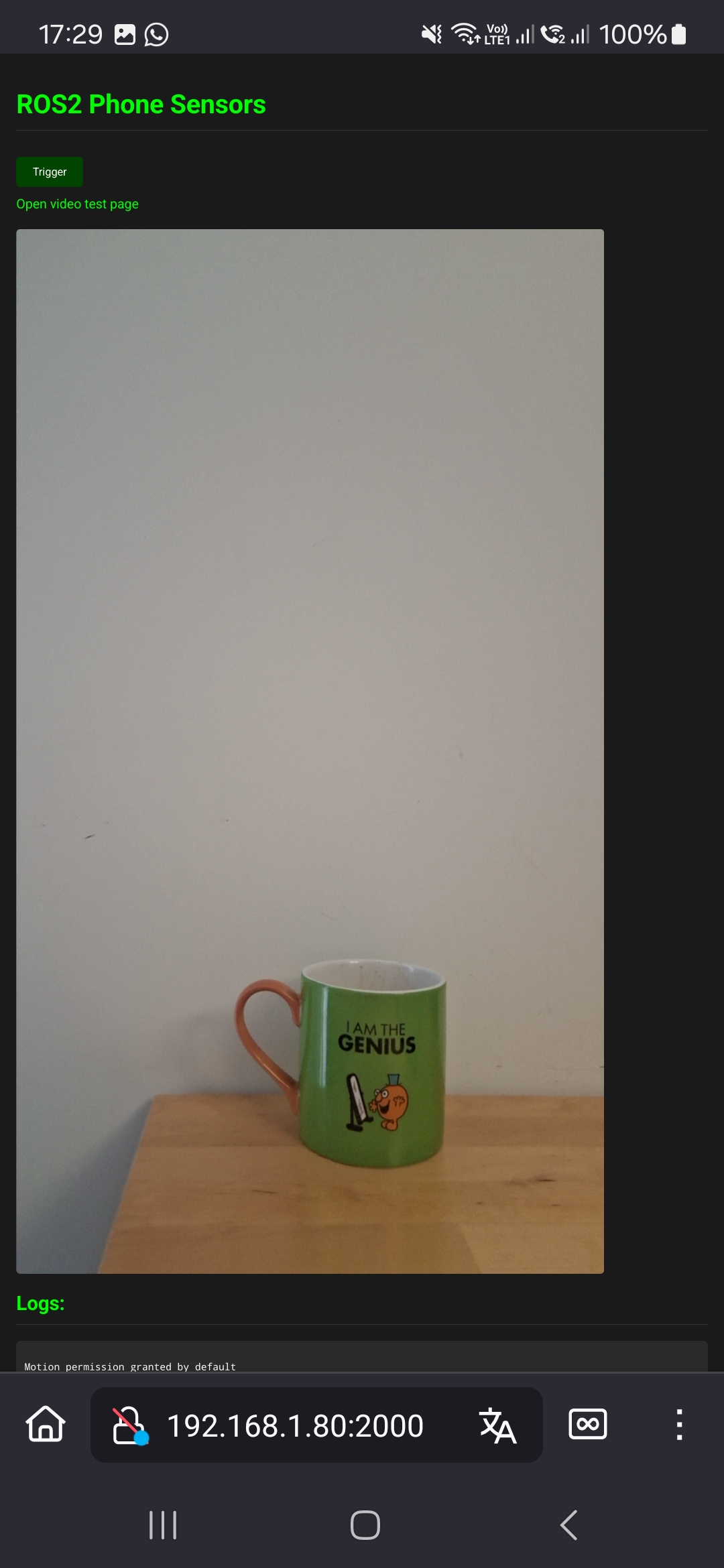

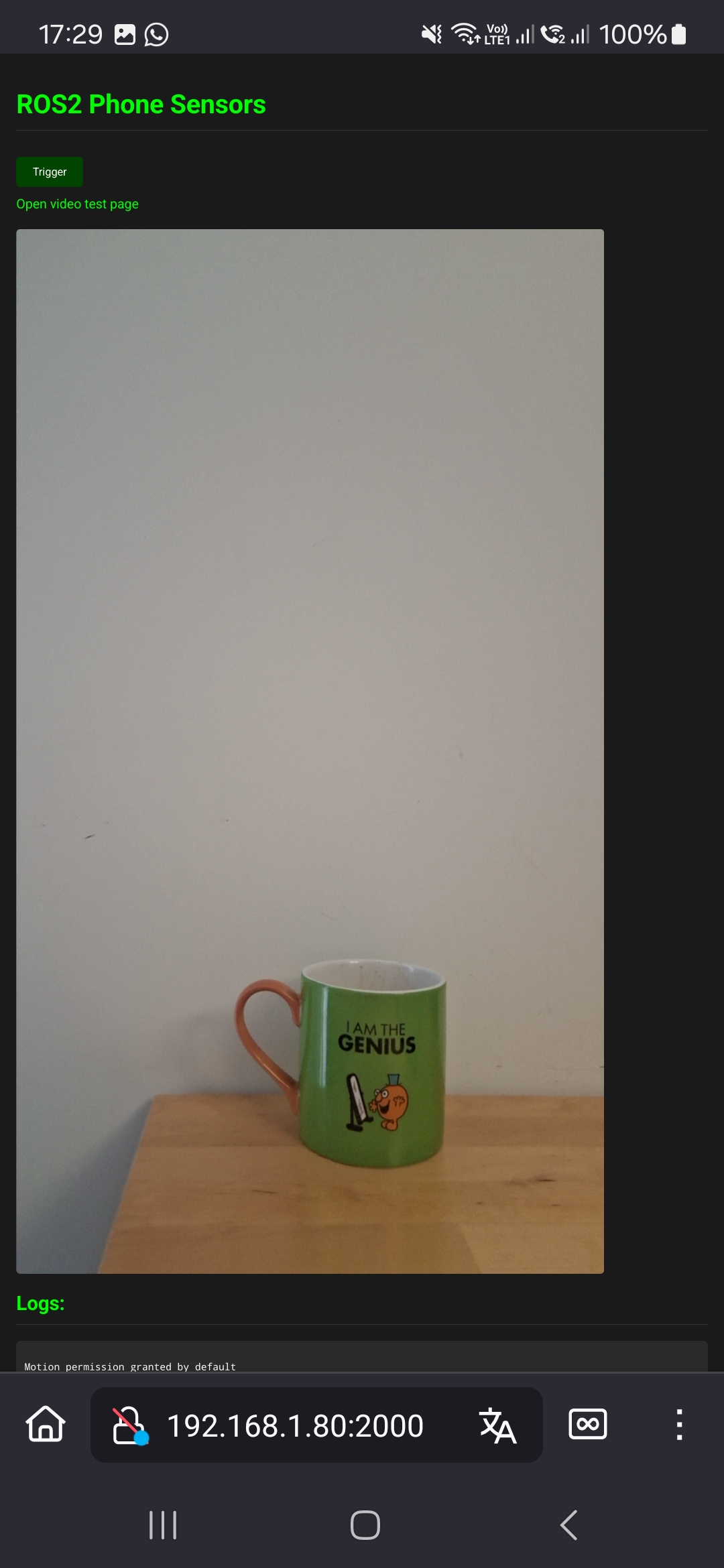

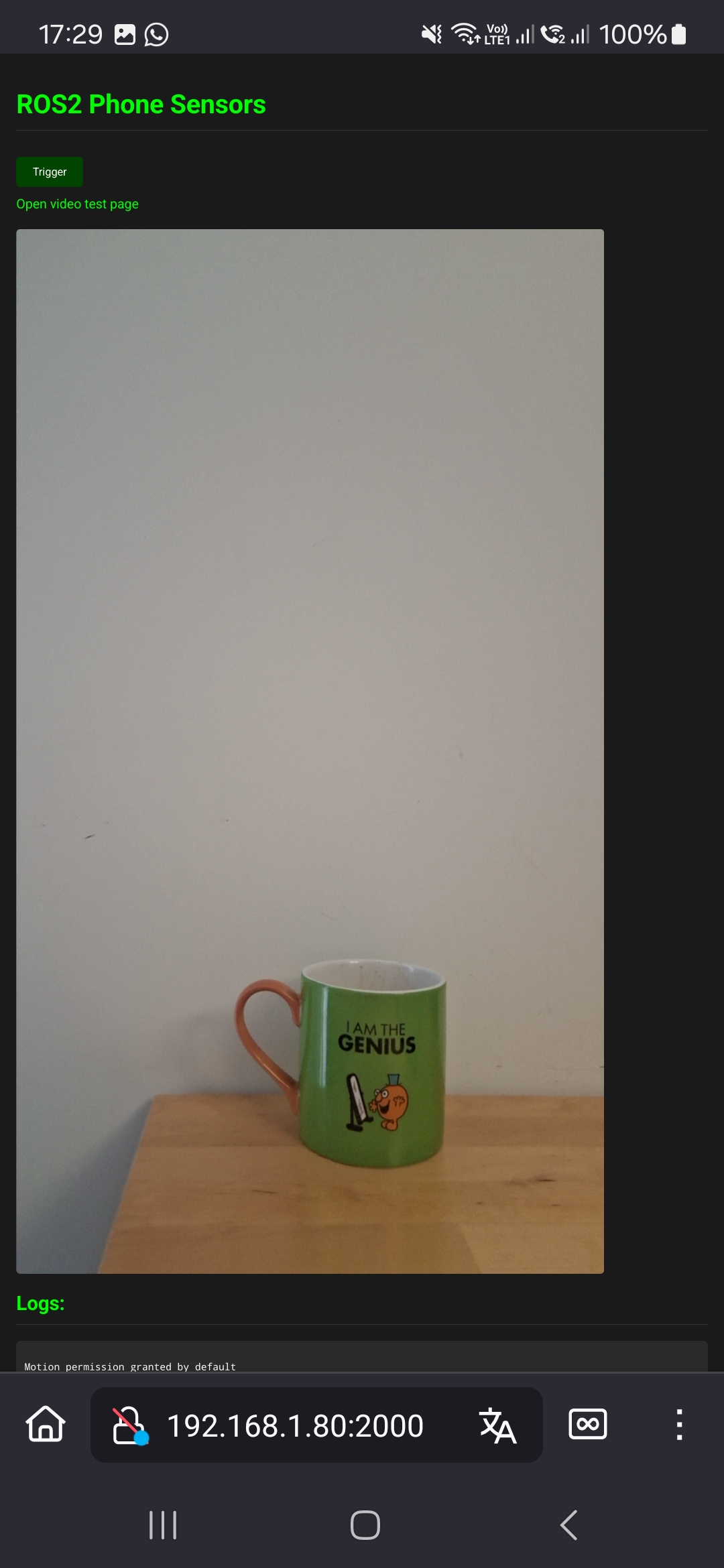

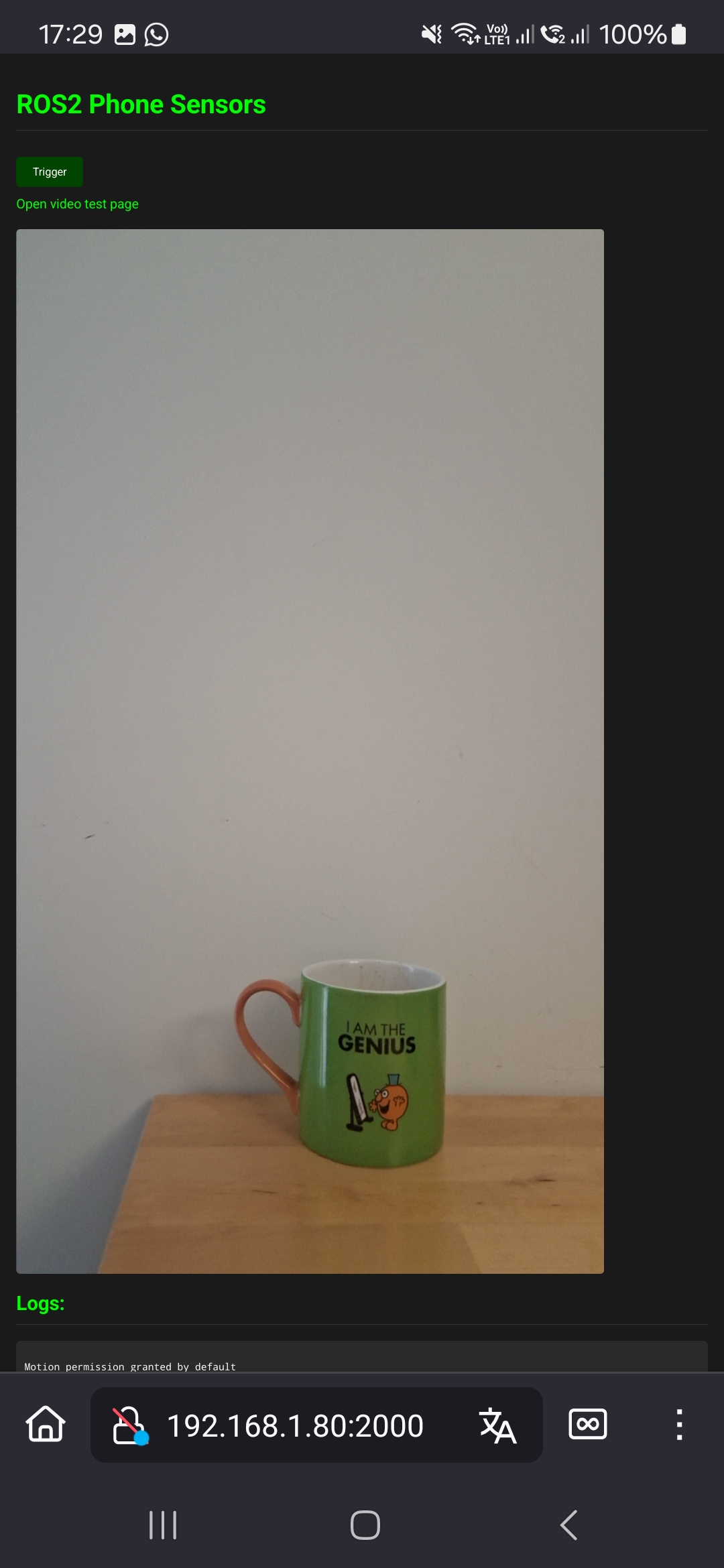

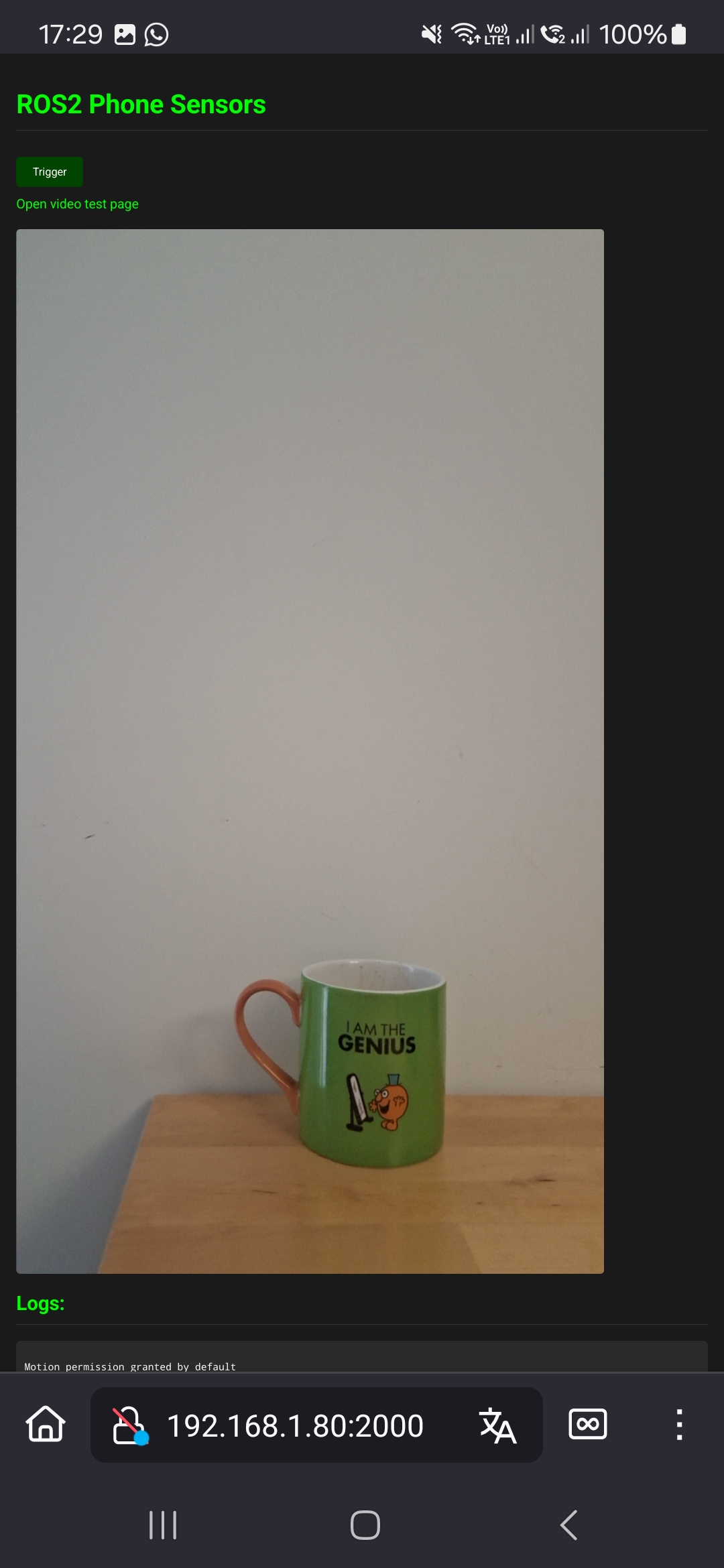

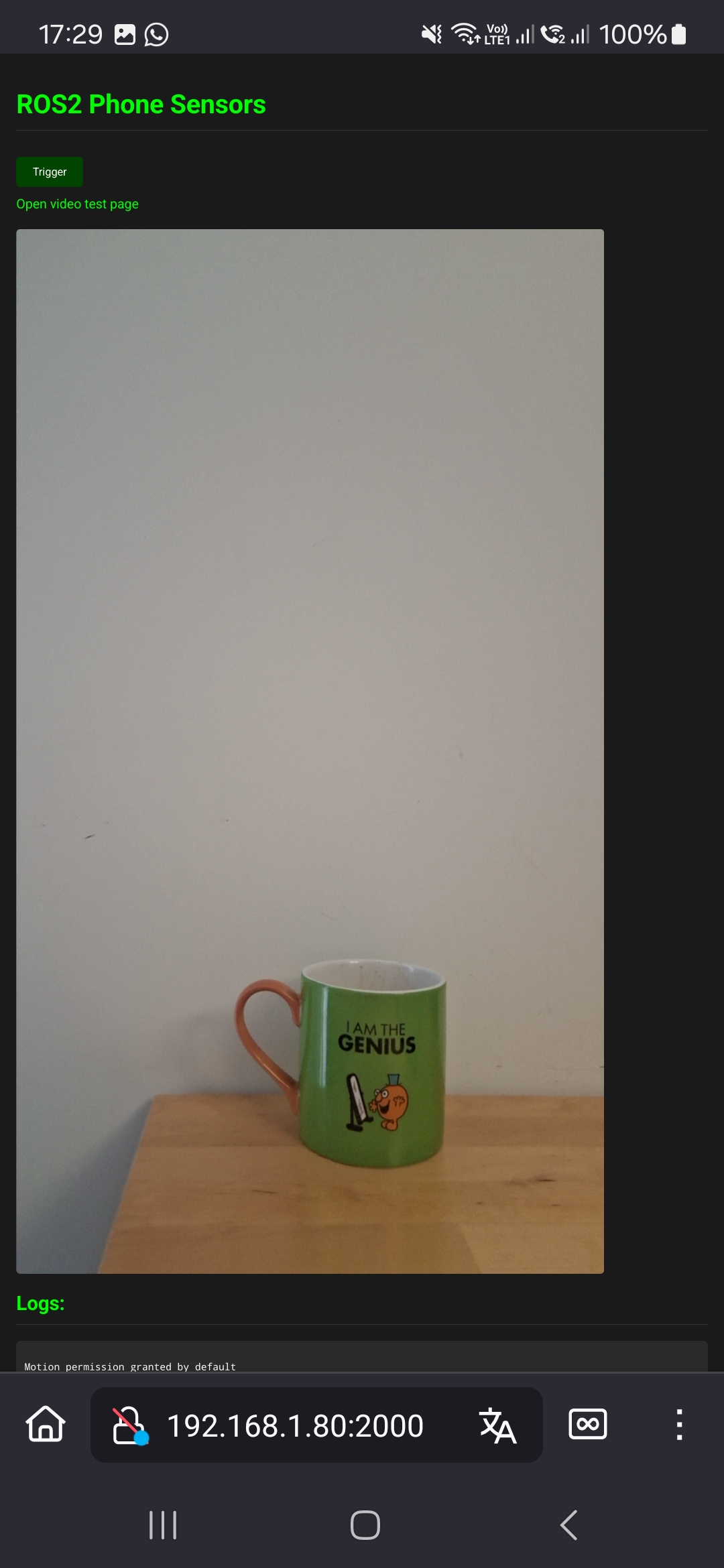

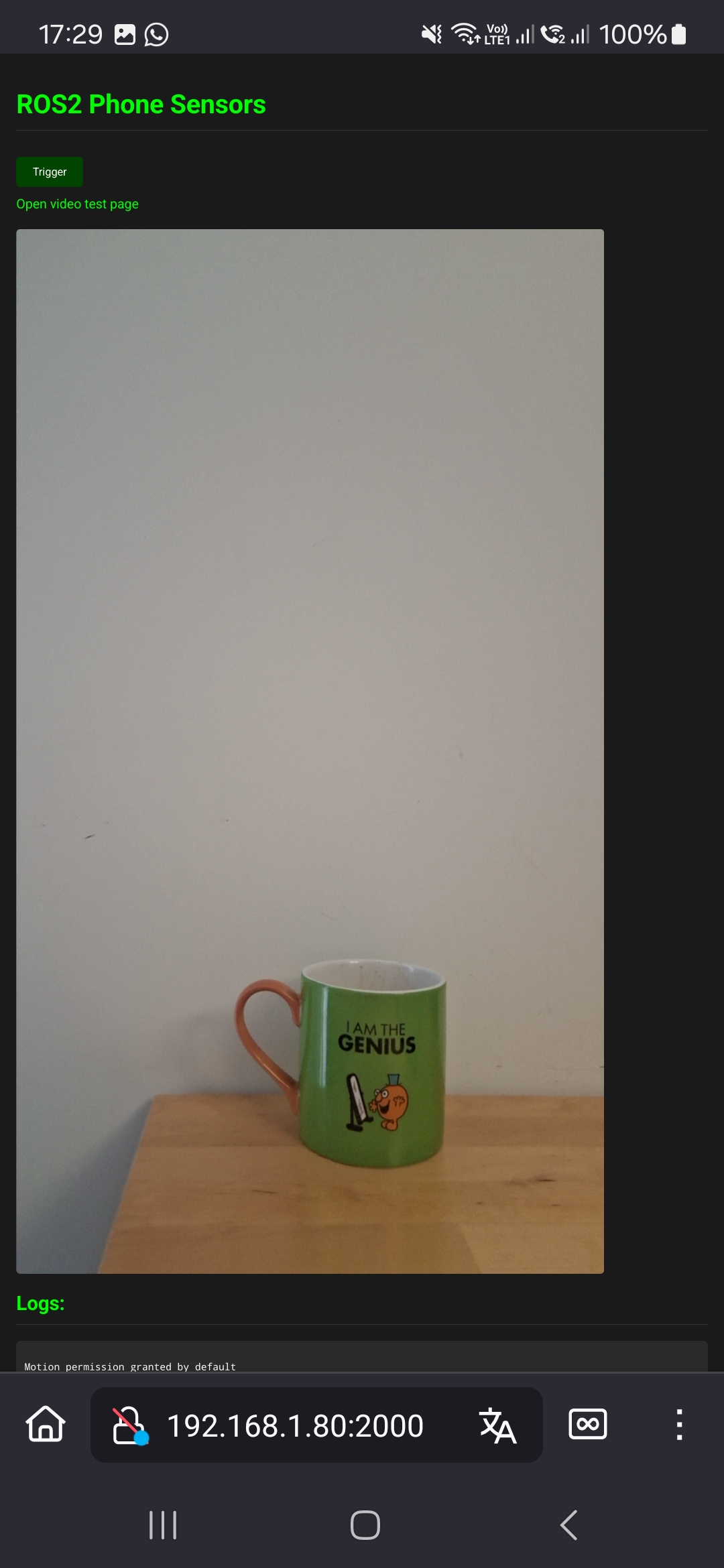

Open the webpage on your mobile device. The URL uses the IP of the host running the node. It depends on your network, but it is most likely https://<EXTRA_IP>:2000.

If the host has multiple network interfaces, favour the fastest one, e.g. Ethernet over Wi-Fi.

The page will prompt for permissions, then display the chosen camera.

Published topics

| Topic | Type | Description |
|---|---|---|
| time/device | sensor_msgs/TimeReference | Device system clock versus ROS time |
| time/gnss | sensor_msgs/TimeReference | GNSS fix acquisition time versus device clock |
| imu | sensor_msgs/Imu | Orientation (ENU quaternion), angular velocity and linear acceleration in the device frame |
| gnss | sensor_msgs/NavSatFix | GPS fix: latitude, longitude, altitude with horizontal/vertical accuracy covariance |
| gnss/odometry | nav_msgs/Odometry | GPS-derived velocity in the ENU frame; pose covariance is 1e9 (fuse twist only) |
| camera1/image_raw/compressed | sensor_msgs/CompressedImage | Camera 1 JPEG stream |
| camera2/image_raw/compressed | sensor_msgs/CompressedImage | Camera 2 JPEG stream |
| camera1/camera_info | sensor_msgs/CameraInfo | Camera 1 calibration (only published when camera1_calibration_file is set) |
| camera2/camera_info | sensor_msgs/CameraInfo | Camera 2 calibration (only published when camera2_calibration_file is set) |
| usb/rx | std_msgs/UInt8MultiArray | Raw bytes received from the USB device (only active when usb_enabled is True) |
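The GNSS rows above raise two practical questions. How does an accuracy in metres become a NavSatFix covariance, and what does "fuse twist only" look like in robot_localization? The sketch below answers both under stated assumptions and is not the bridge's actual code. The accuracies are treated as 1-sigma values. The helper names are hypothetical. Leaving out `vz` is also an assumption, because which velocity components are meaningful depends on what the phone reports. `COVARIANCE_TYPE_DIAGONAL_KNOWN = 2` and the 15-field state order are the standard values from sensor_msgs/NavSatFix and robot_localization.

```python
from typing import List

# Constant from sensor_msgs/NavSatFix.
COVARIANCE_TYPE_DIAGONAL_KNOWN = 2

# robot_localization's 15-element state order for odomN_config / imuN_config.
STATE_FIELDS = (
    "x", "y", "z", "roll", "pitch", "yaw",
    "vx", "vy", "vz", "vroll", "vpitch", "vyaw",
    "ax", "ay", "az",
)


def navsat_position_covariance(horizontal_m: float, vertical_m: float) -> List[float]:
    """Row-major 3x3 ENU covariance, treating accuracies as 1-sigma (assumption)."""
    h2, v2 = horizontal_m ** 2, vertical_m ** 2
    return [h2, 0.0, 0.0,
            0.0, h2, 0.0,
            0.0, 0.0, v2]


def fuse_mask(*fields: str) -> List[bool]:
    """15-element boolean list that enables only the named state fields."""
    unknown = set(fields) - set(STATE_FIELDS)
    if unknown:
        raise ValueError(f"unknown fields: {sorted(unknown)}")
    return [name in fields for name in STATE_FIELDS]


# gnss/odometry carries a 1e9 pose covariance, so fuse only horizontal velocity.
GNSS_ODOM_CONFIG = fuse_mask("vx", "vy")
```

In a launch file you might pass `{"odom0": "gnss/odometry", "odom0_config": GNSS_ODOM_CONFIG}` to the EKF node, adjusting the topic name to your namespace.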

Subscribed topics

| Topic | Type | Description |
|---|---|---|
| usb/tx | std_msgs/UInt8MultiArray | Raw bytes to forward to the USB device (only active when usb_enabled is True) |
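Because usb/tx and usb/rx carry raw bytes, the byte layout is entirely up to your firmware. As an illustration only, here is a hypothetical two-motor frame: a 0xAA header, two little-endian int16 speeds and an 8-bit additive checksum. This layout is not defined by phone_sensors_bridge. Swap in whatever your Arduino or Teensy code actually parses.

```python
import struct
from typing import List, Tuple

# Hypothetical frame layout, NOT part of phone_sensors_bridge:
#   [0xAA header][int16 left][int16 right][8-bit additive checksum]
HEADER = 0xAA
FRAME_LEN = 6


def encode_motor_command(left: int, right: int) -> List[int]:
    """Build the byte list to publish as UInt8MultiArray.data on usb/tx."""
    payload = struct.pack("<hh", left, right)  # two little-endian signed 16-bit ints
    checksum = sum(payload) & 0xFF
    return [HEADER, *payload, checksum]


def decode_motor_command(frame: List[int]) -> Tuple[int, int]:
    """Parse one complete frame, e.g. if the firmware echoes it back on usb/rx."""
    if len(frame) != FRAME_LEN or frame[0] != HEADER:
        raise ValueError("malformed frame")
    payload = bytes(frame[1:5])
    if sum(payload) & 0xFF != frame[5]:
        raise ValueError("checksum mismatch")
    return struct.unpack("<hh", payload)
```

With rclpy, assign the returned list to `msg.data` of a `std_msgs.msg.UInt8MultiArray` and publish it on usb/tx. Bytes coming back on usb/rx may be split across or merged into messages, so a real receiver would buffer until a full frame is available.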

Configuration

See the server configuration guide.

Results

The server has been tested with Firefox and Chrome on Android.

Latency

The webpage includes a “Measure latency” button that runs 100 sequential WebSocket ping-pong round trips and reports one-way latency and clock offset using the NTP formula:

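```
RTT          = t2_client - t1_client
latency      = RTT / 2
clock_offset = t_server - (t1_client + t2_client) / 2
```

Here t1_client is the phone's timestamp when the ping is sent, t2_client is its timestamp when the pong arrives, and t_server is the server's ROS timestamp carried in the pong. The latency estimate assumes that uplink and downlink delays are equal.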
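The formulas above can be sketched in Python to post-process recorded exchanges offline. This is a sketch, not the webpage's own JavaScript. It assumes all three timestamps are in milliseconds on comparable epoch-based clocks. `PingSample` and `summarize` are hypothetical names, and the report string copies the layout of the example output shown in this section.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Iterable, Tuple


@dataclass
class PingSample:
    """One ping-pong exchange; times in milliseconds (assumed epoch-based)."""
    t1_client: float  # phone clock when the ping is sent
    t_server: float   # ROS clock stamped by the server into the pong
    t2_client: float  # phone clock when the pong is received


def latency_and_offset(s: PingSample) -> Tuple[float, float]:
    """Apply the RTT / latency / clock_offset formulas to one exchange."""
    rtt = s.t2_client - s.t1_client
    latency = rtt / 2  # assumes symmetric uplink/downlink delay
    clock_offset = s.t_server - (s.t1_client + s.t2_client) / 2
    return latency, clock_offset


def summarize(samples: Iterable[PingSample]) -> str:
    """Aggregate many exchanges into a one-line report."""
    results = [latency_and_offset(s) for s in samples]
    if not results:
        raise ValueError("need at least one sample")
    lat = [r[0] for r in results]
    off = [r[1] for r in results]
    return (
        f"Latency (N={len(results)}): mean={mean(lat):.2f} ms, "
        f"min={min(lat):.2f} ms, max={max(lat):.2f} ms | "
        f"Clock offset (ROS-client): mean={mean(off):.2f} ms, "
        f"min={min(off):.2f} ms, max={max(off):.2f} ms"
    )
```

Running the exchanges sequentially, as the page does, keeps one slow reply from delaying the next ping's timestamps.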

Note: browser timestamps use the Performance API for sub-millisecond resolution, but precision is still reduced by browsers (100 µs floor in Firefox, up to 100 ms with fingerprinting resistance).

Example measurement over a local WiFi network when running two video feeds, IMU and GNSS:

```
Latency (N=100): mean=5.19 ms, min=1.50 ms, max=12.50 ms | Clock offset (ROS-client): mean=-293.10 ms, min=-300.00 ms, max=-285.00 ms
```

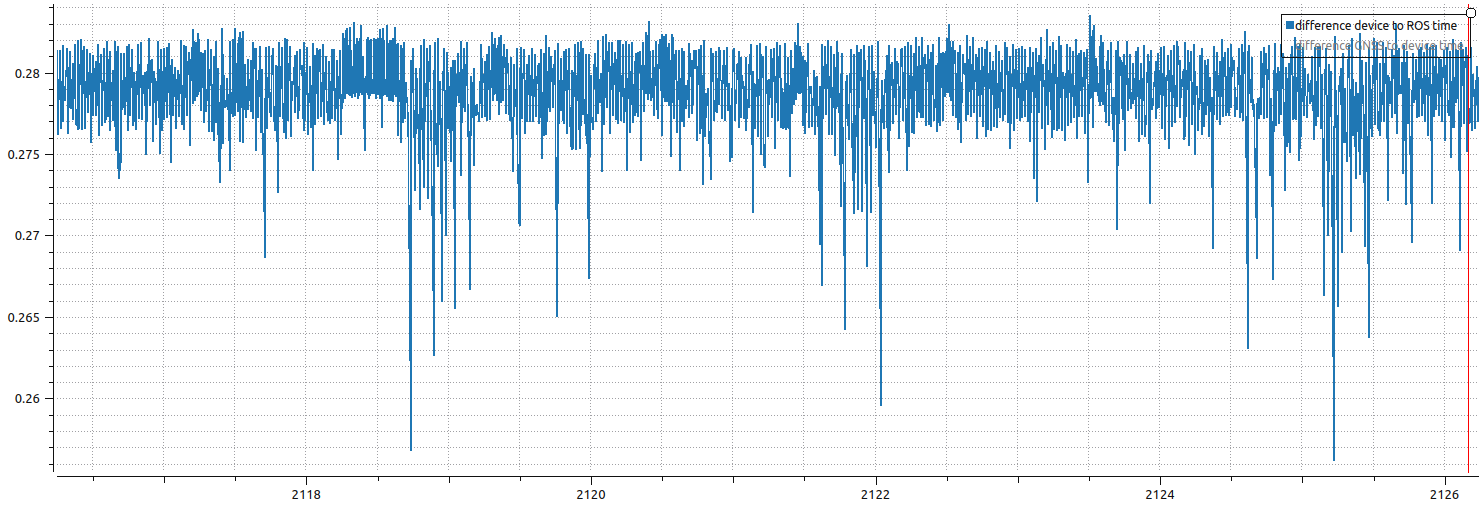

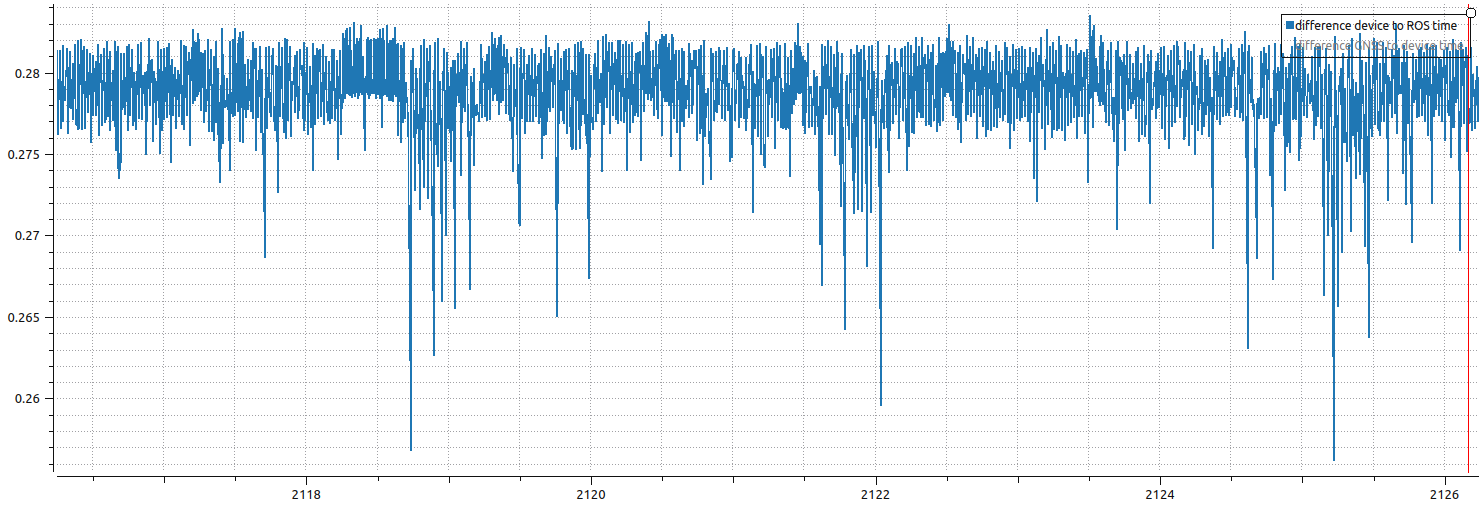

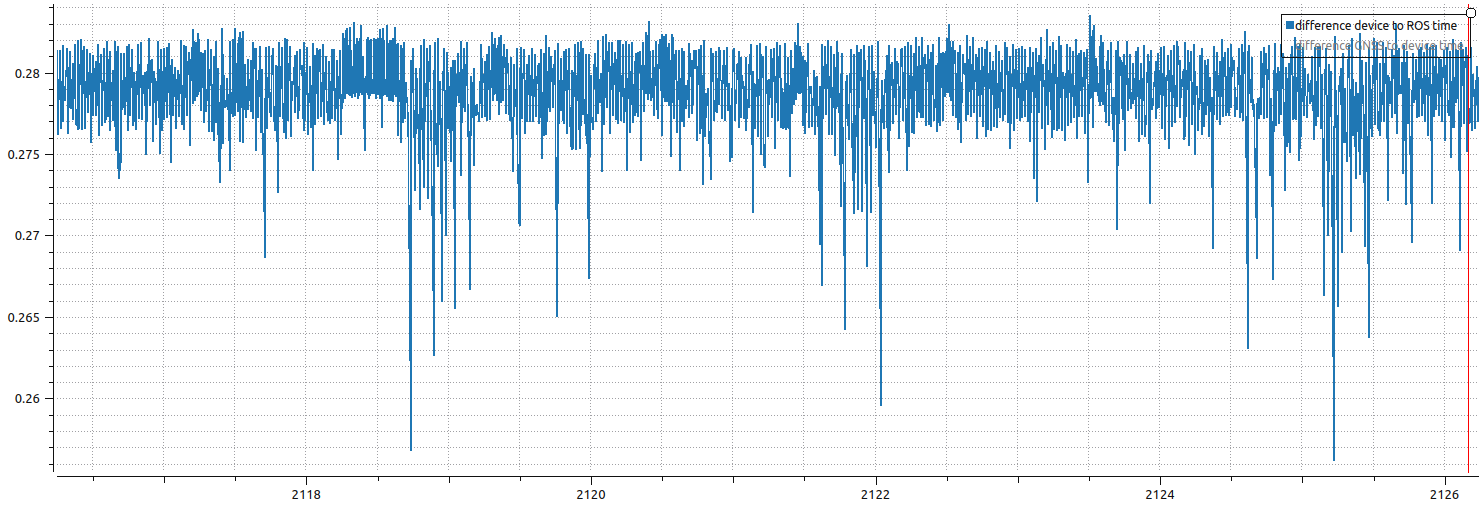

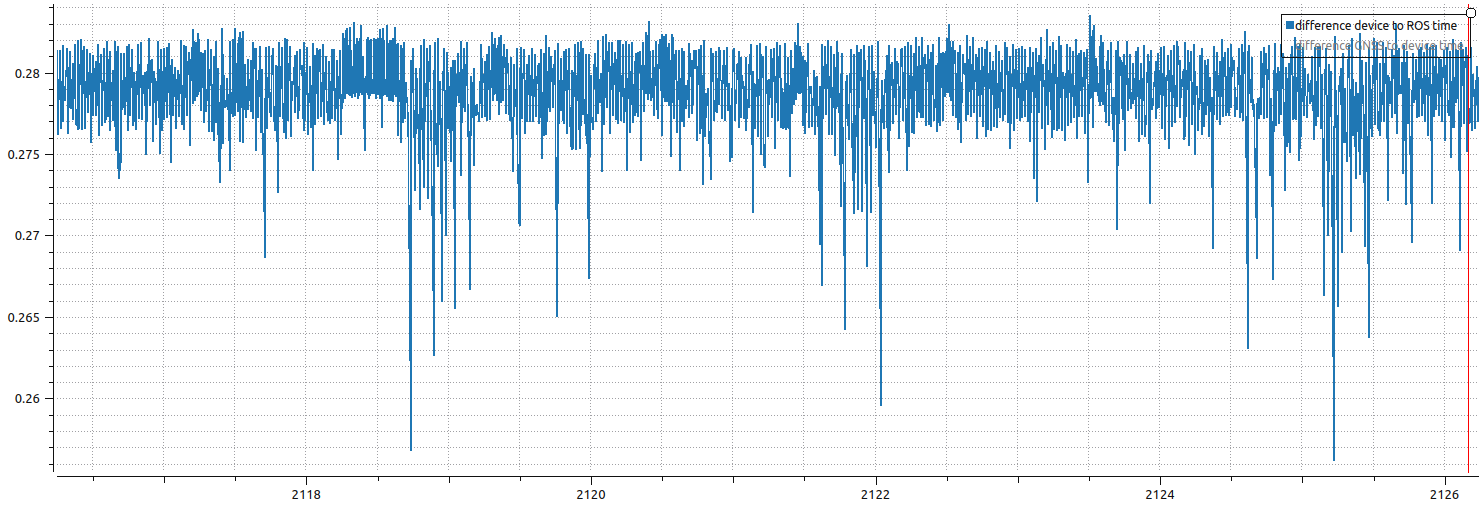

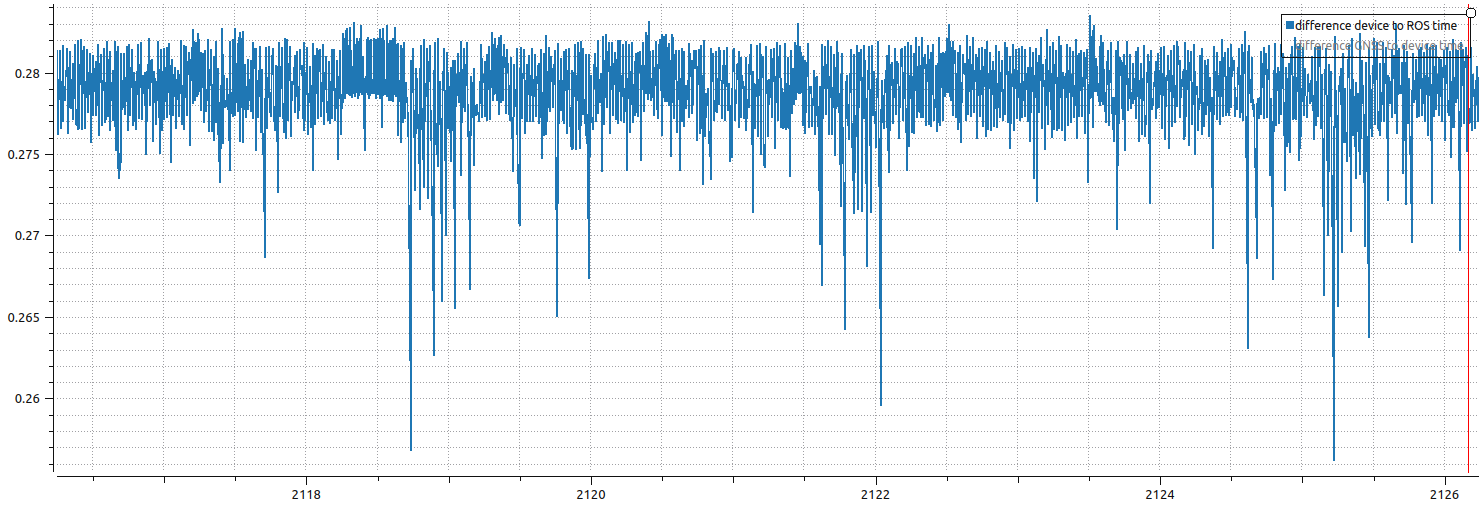

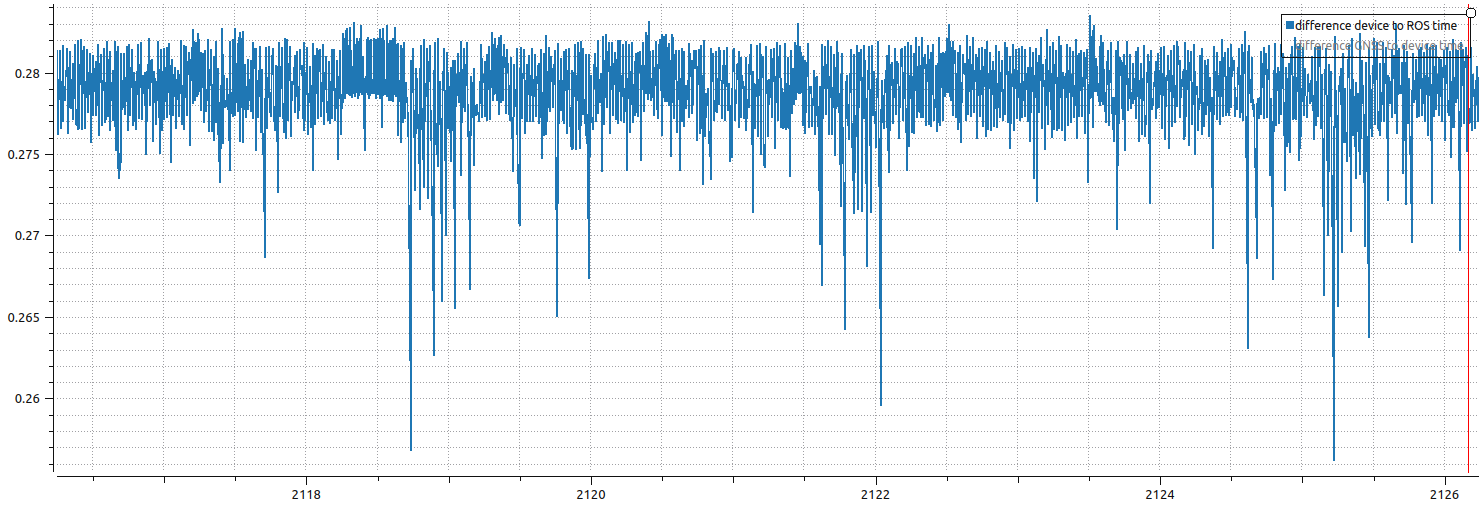

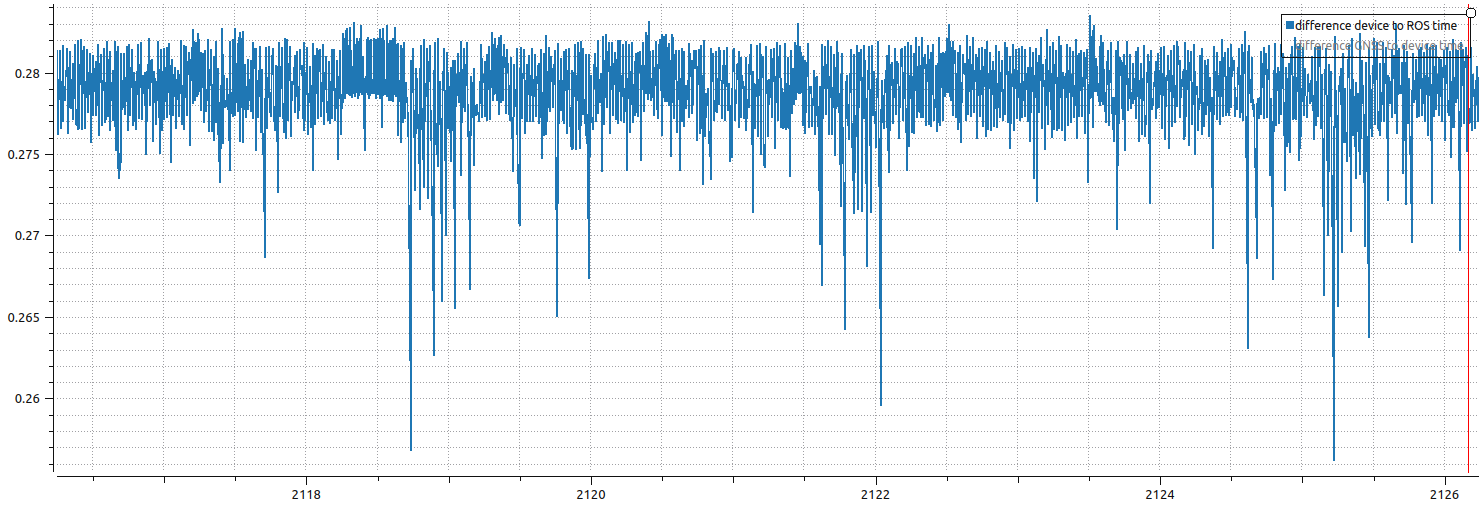

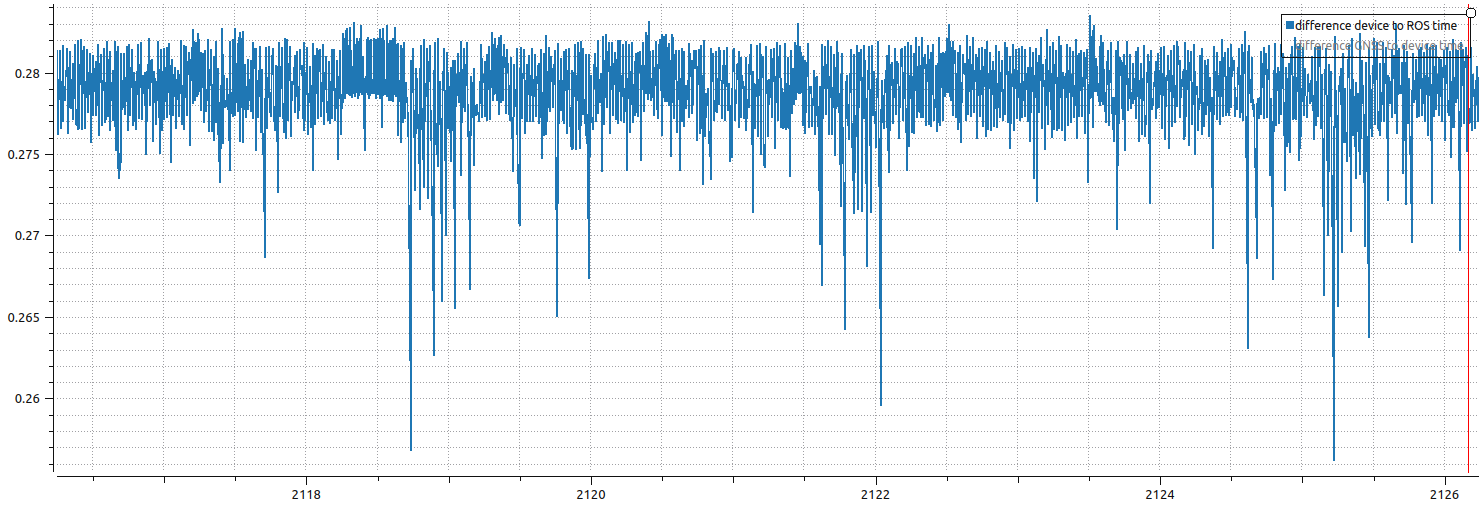

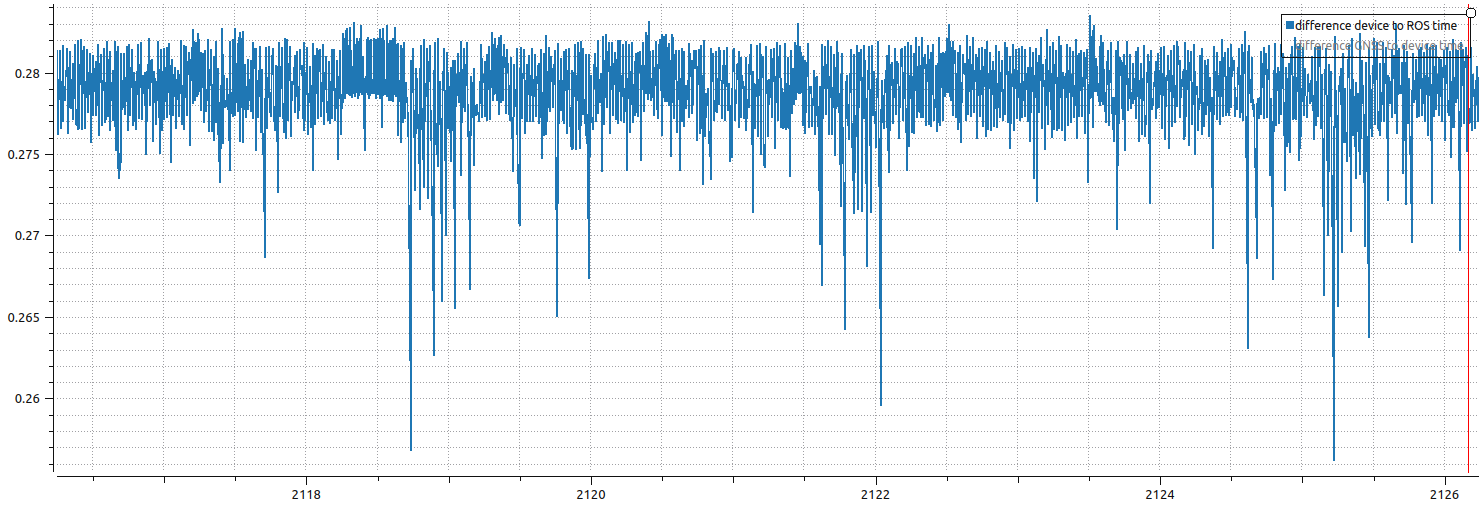

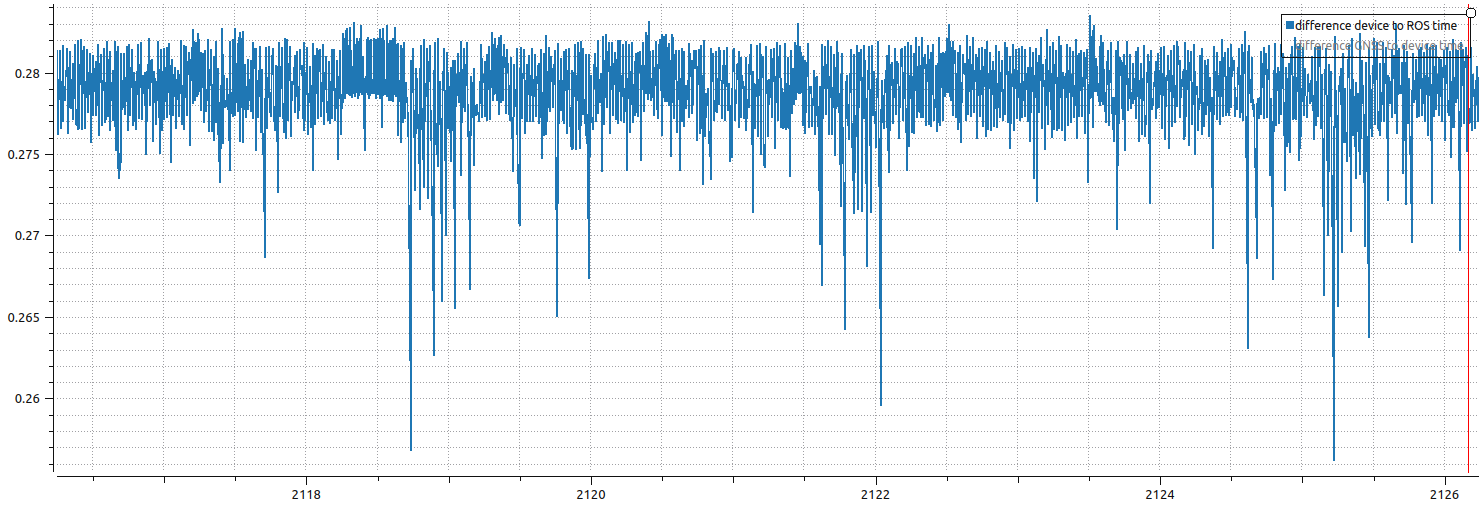

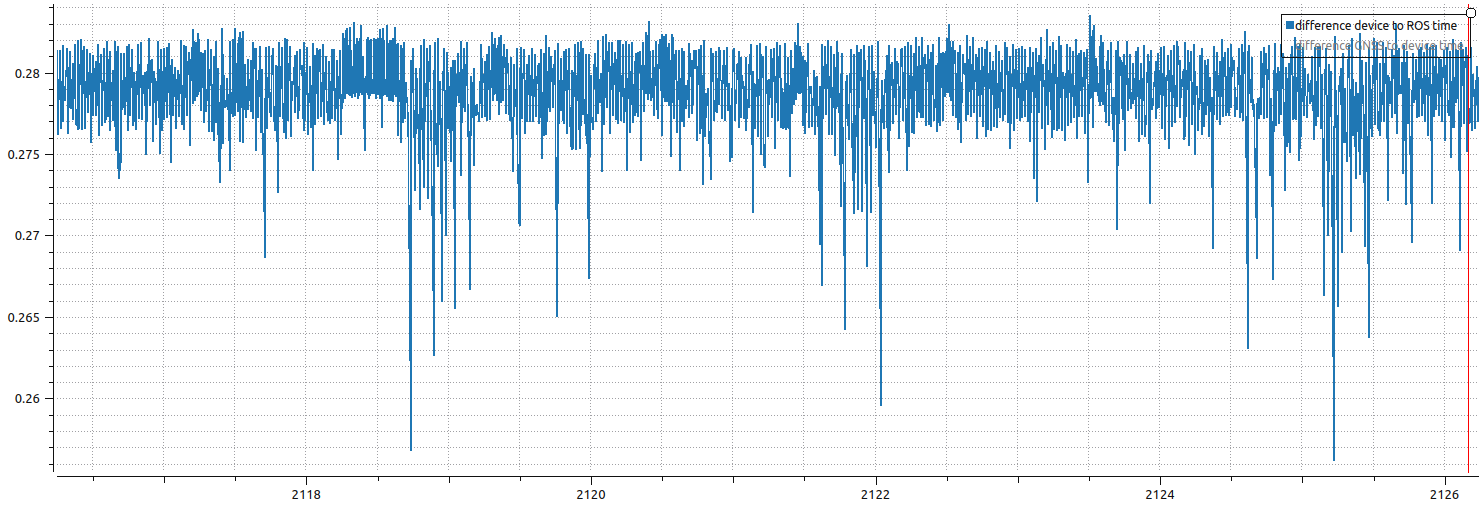

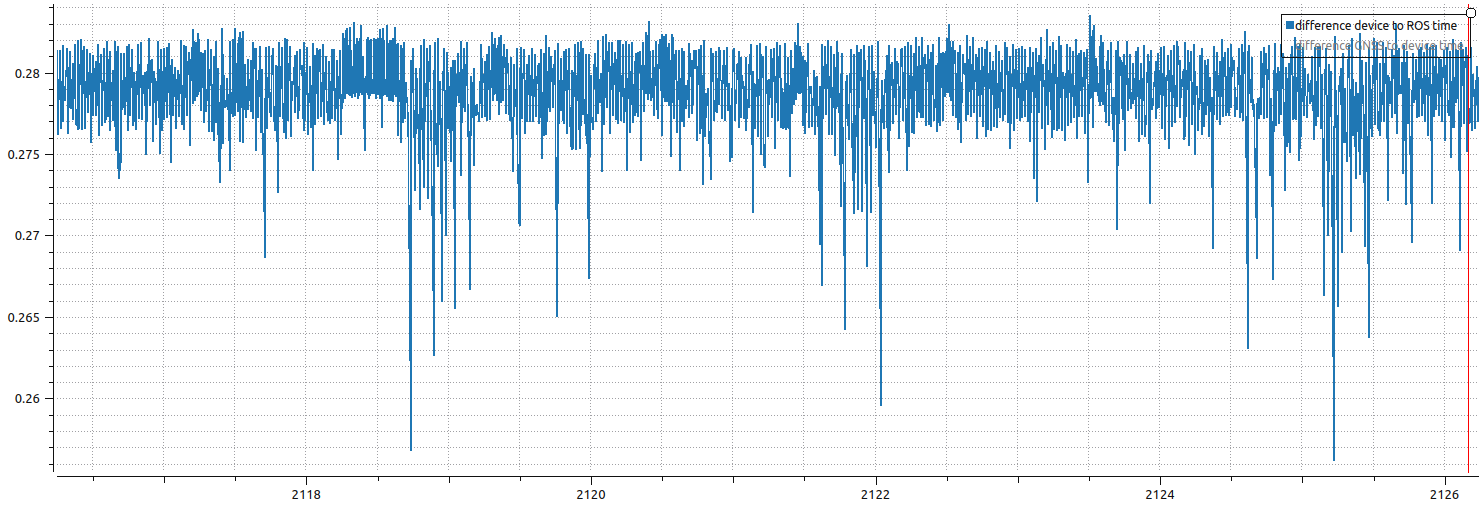

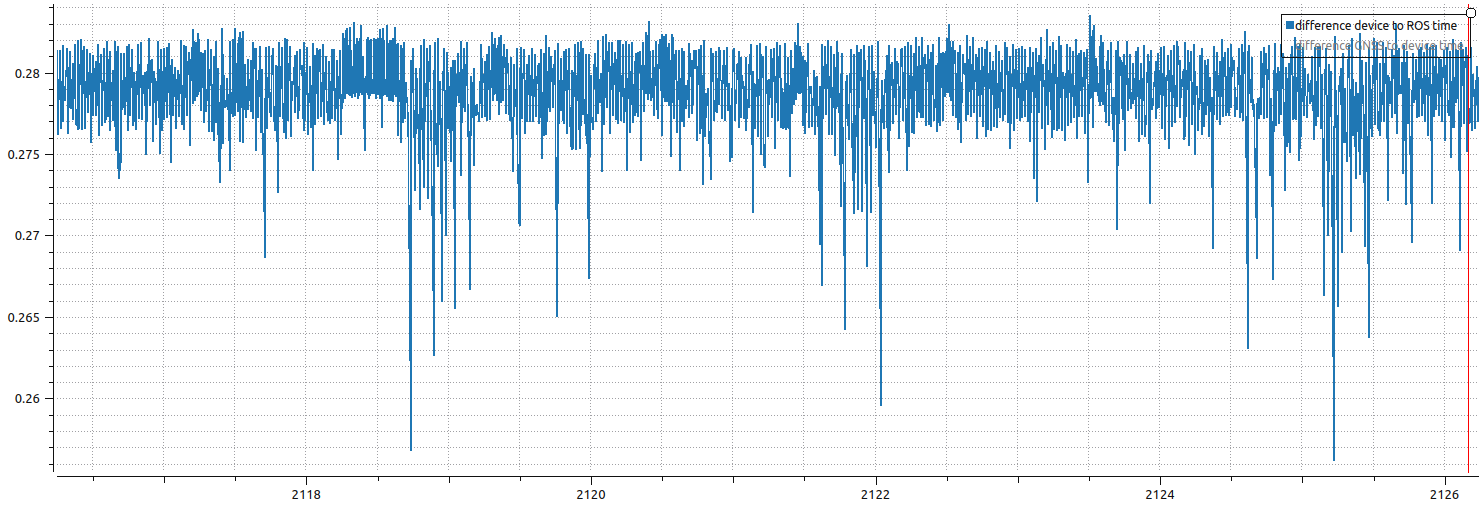

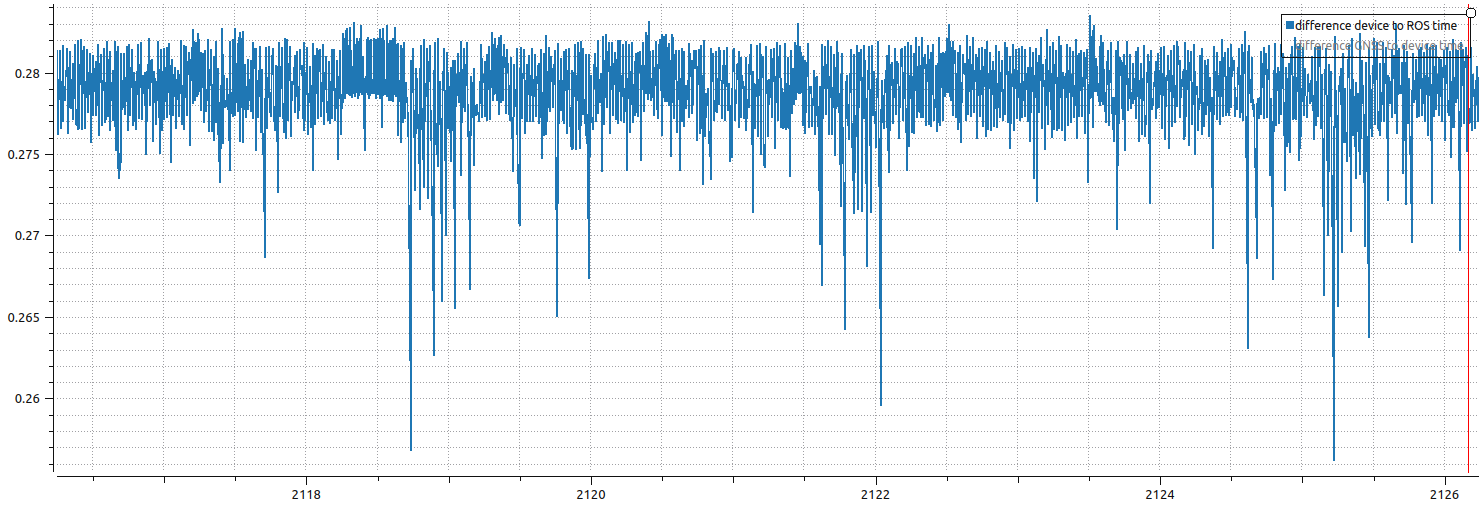

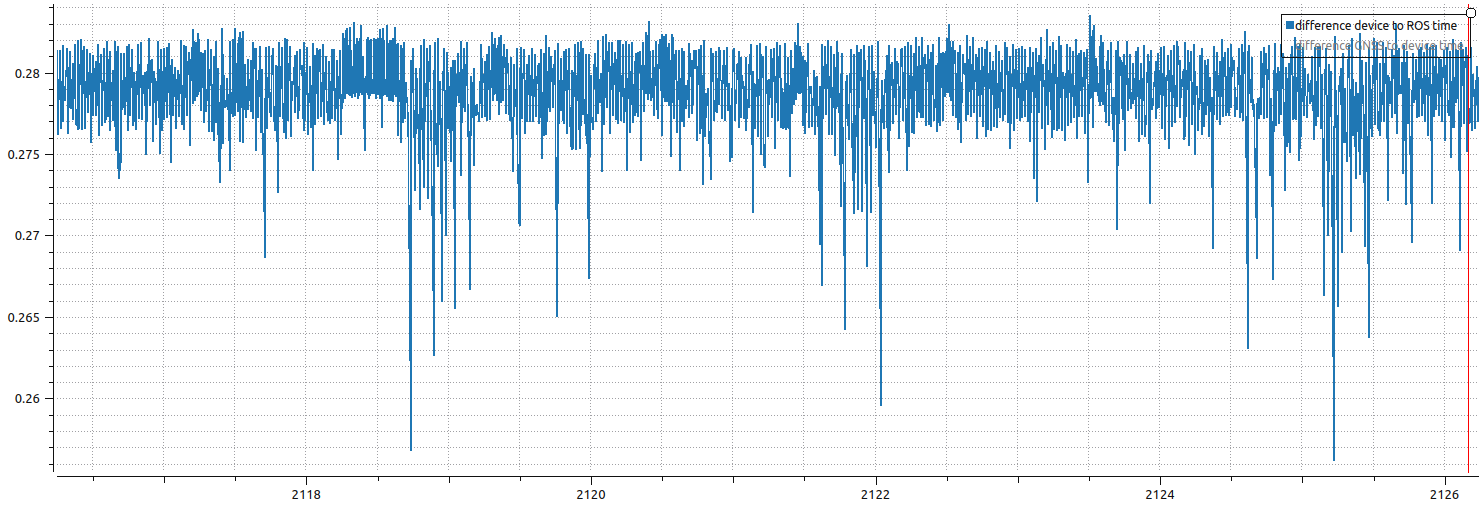

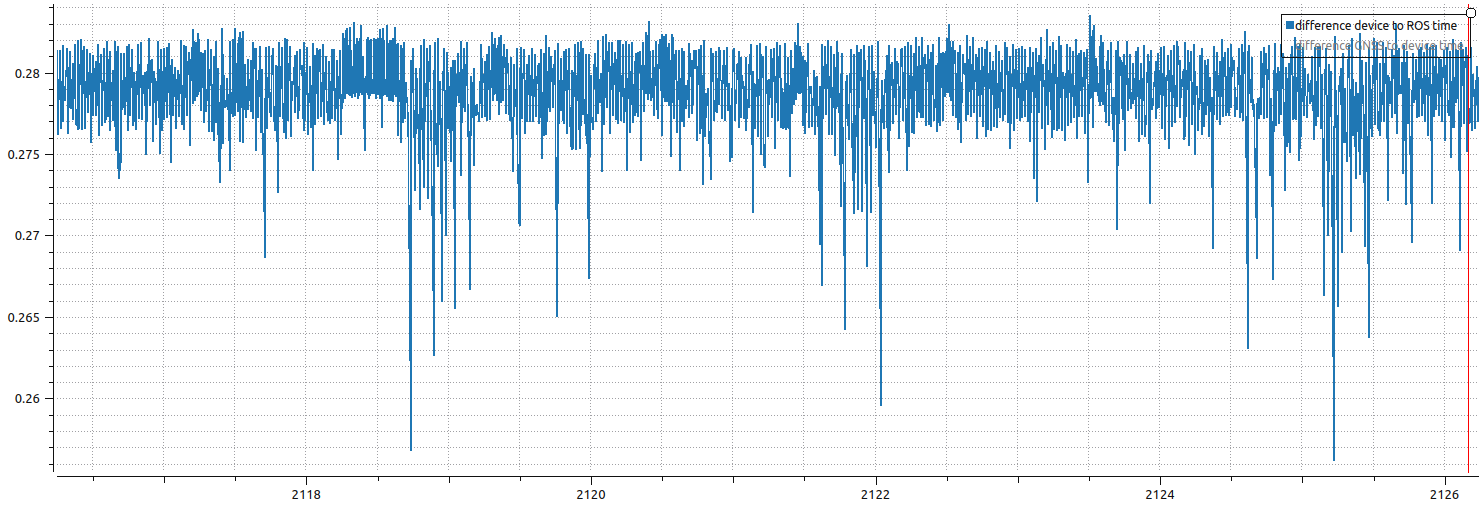

A negative clock offset means the ROS clock is behind the client (phone) clock, in this case by ~293 ms; a positive offset would mean the ROS clock is ahead. The offset is stable across samples (spread of ~15 ms), which indicates a constant offset between the two clocks rather than jitter. If the parameter time_reference_frequency is strictly positive, the time/device TimeReference topic reports this clock difference, including network latency.
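Since the offset is roughly constant, a consumer could shift phone-clock timestamps onto the ROS clock by adding it, using the sign convention above (offset = ROS time minus phone time). This is a minimal sketch with hypothetical helper names. The 20 ms stability threshold is an arbitrary assumption, not a value from the bridge.

```python
from typing import Sequence


def device_to_ros_time(device_time_s: float, clock_offset_s: float) -> float:
    """Shift a phone-clock timestamp onto the ROS clock.

    clock_offset = t_ros - t_device, so a negative offset moves the stamp earlier.
    """
    return device_time_s + clock_offset_s


def offset_is_stable(offsets_ms: Sequence[float], max_spread_ms: float = 20.0) -> bool:
    """True when max - min of the measured offsets stays within a tolerance.

    The 20 ms default is a hypothetical threshold; tune it for your network.
    """
    if not offsets_ms:
        raise ValueError("need at least one offset")
    return (max(offsets_ms) - min(offsets_ms)) <= max_spread_ms
```

With the example measurement, a phone timestamp of 10.000 s maps to roughly 9.707 s on the ROS clock. The min/max offsets (-300 ms and -285 ms) pass the stability check with a 15 ms spread.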

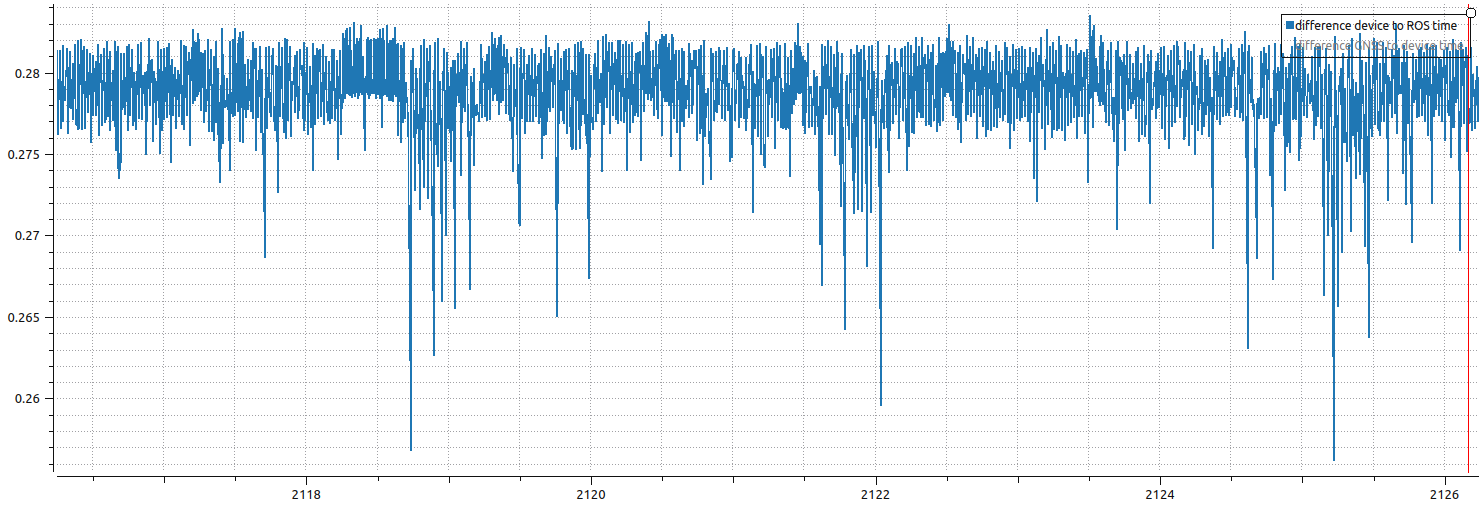

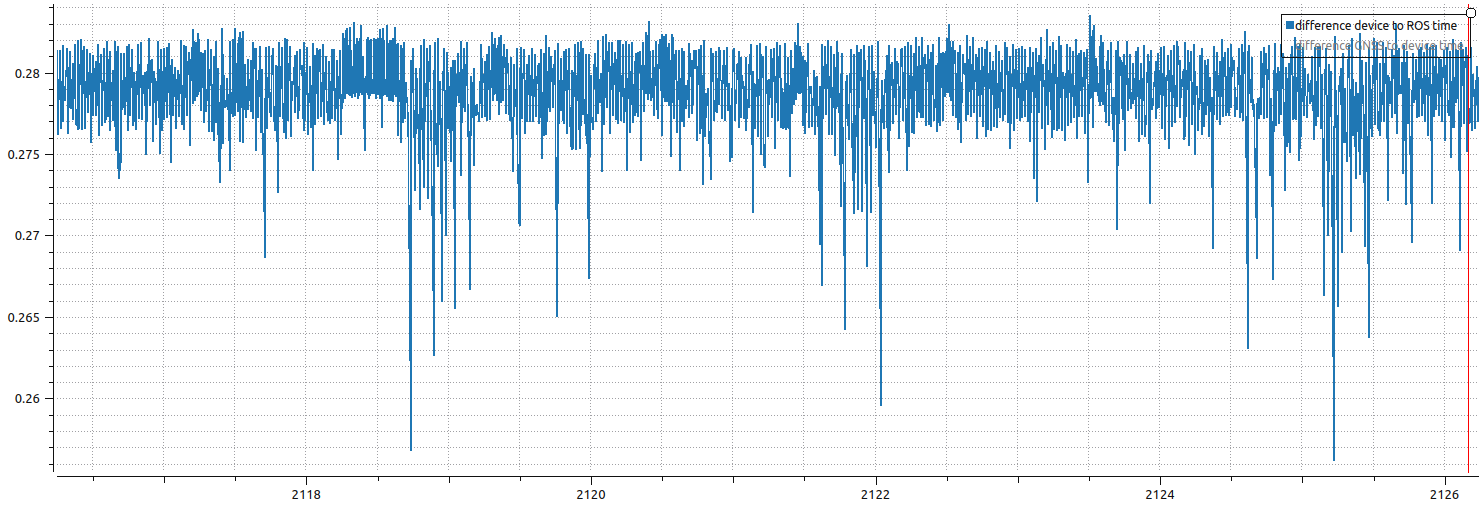

We observe the same clock offset in PlotJuggler expressed in seconds, in this case measured running only

PlotJuggler shows the same clock offset, expressed in seconds, measured with only time_reference_frequency:=100.0 and no video. Here the offset is the clock difference plus network latency.
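To compute the same quantity outside PlotJuggler, subtract the two stamps of each sensor_msgs/TimeReference message. This sketch assumes the bridge fills `header.stamp` with ROS time and `time_ref` with the phone clock, which matches the "Device system clock versus ROS time" description but is not confirmed here. `Stamp` is a hypothetical stand-in with the same `sec`/`nanosec` fields as builtin_interfaces/Time, so real messages can be passed directly.

```python
from typing import NamedTuple


class Stamp(NamedTuple):
    """Stand-in for builtin_interfaces/Time (same field names)."""
    sec: int
    nanosec: int


def to_nanoseconds(stamp) -> int:
    """Integer nanoseconds avoid float rounding on large epoch values."""
    return stamp.sec * 1_000_000_000 + stamp.nanosec


def time_reference_offset_s(header_stamp, time_ref) -> float:
    """ROS time minus device time for one TimeReference message, in seconds.

    Assumes header.stamp carries ROS time and time_ref the phone clock, so the
    result includes network latency, as described above.
    """
    return (to_nanoseconds(header_stamp) - to_nanoseconds(time_ref)) / 1e9
```

In an rclpy callback this would be `time_reference_offset_s(msg.header.stamp, msg.time_ref)`.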

File truncated at 100 lines see the full file

CONTRIBUTING

Repository Summary

| Checkout URI | https://github.com/vtalpaert/ros2-phone-sensors.git |

| VCS Type | git |

| VCS Version | main |

| Last Updated | 2026-03-18 |

| Dev Status | DEVELOPED |

| Released | UNRELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| phone_sensors_bridge | 0.0.0 |

| phone_sensors_bridge_examples | 0.0.0 |

README

Use your phone as a sensor bridge in ROS2

Turn your phone’s GPS, IMU and cameras into ROS2 sensors. Connect an Arduino over USB to close the control loop. No custom app, just a browser.

Sensor-only use case (e.g. VSLAM, localization): mount the phone on a robot or handheld rig. It streams camera, IMU and GPS into ROS2 topics, ready for robot_localization, ORB-SLAM3, or any visual-inertial pipeline.

Full mobile robot use case: connect an Arduino or Teensy to the phone over USB. The phone relays commands from ROS2 down to the microcontroller (motor drivers, servos, …) while simultaneously streaming sensors upward. Your robot only needs firmware for low-level actuation, the phone handles perception and communication.

The bridge relies on the mobile browser rather than a dedicated app. A webpage is served from the ROS2 node; opening it on the phone prompts for permissions and starts streaming over WebSockets.

This repository is inspired by a project I did with students as a TA called phone-imu.

Build

source /opt/ros/humble/setup.bash

rosdep install -i --from-path src --rosdistro humble -y --ignore-src

colcon build --packages-up-to phone_sensors_bridge

# Or build for development, but remember to clean and rebuild every time the JS files are changed

# colcon build --symlink-install --packages-up-to phone_sensors_bridge_examples --event-handlers console_direct+

phone_sensors_bridge usage

Quickstart

Run the following in a different terminal than the one used for building, otherwise the server might start from inside the build folder which will not contain the template and static folders for the webpage.

source install/setup.bash

# Ubuntu IP

EXTRA_IP=$(ip route get 8.8.8.8 | grep -oP 'src \K[^ ]+')

echo "My IP is $EXTRA_IP"

# Generate SSL certificates for local webserver

ros2 run phone_sensors_bridge generate_dev_certificates.sh $EXTRA_IP

# Start server

ros2 run phone_sensors_bridge server --ros-args -p camera1_video_width:=720 -p camera1_video_height:=720

Open the webpage from your mobile device. The URL contains the server host IP where the node is running. It depends on your network, but most likely is https://<EXTRA_IP>:2000.

If you have multiple network interfaces, favour the fastest such as ethernet over wifi.

The page will prompt for permissions, then display the chosen camera

Published topics

| Topic | Type | Description |

|---|---|---|

time/device |

sensor_msgs/TimeReference |

Device system clock versus ROS time |

time/gnss |

sensor_msgs/TimeReference |

GNSS fix acquisition time versus device clock |

imu |

sensor_msgs/Imu |

Orientation (ENU quaternion), angular velocity and linear acceleration in the device frame |

gnss |

sensor_msgs/NavSatFix |

GPS fix: latitude, longitude, altitude with horizontal/vertical accuracy covariance |

gnss/odometry |

nav_msgs/Odometry |

GPS-derived velocity in the ENU frame; pose covariance is 1e9 (fuse twist only) |

camera1/image_raw/compressed |

sensor_msgs/CompressedImage |

Camera 1 JPEG stream |

camera2/image_raw/compressed |

sensor_msgs/CompressedImage |

Camera 2 JPEG stream |

camera1/camera_info |

sensor_msgs/CameraInfo |

Camera 1 calibration (only published when camera1_calibration_file is set) |

camera2/camera_info |

sensor_msgs/CameraInfo |

Camera 2 calibration (only published when camera2_calibration_file is set) |

usb/rx |

std_msgs/UInt8MultiArray |

Raw bytes received from the USB device (only active when usb_enabled is True) |

Subscribed topics

| Topic | Type | Description |

|---|---|---|

usb/tx |

std_msgs/UInt8MultiArray |

Raw bytes to forward to the USB device (only active when usb_enabled is True) |

Configuration

See the server configuration guide

Results

The current server is tested with Firefox and Chrome on Android.

Latency

The webpage includes a “Measure latency” button that runs 100 sequential WebSocket ping-pong round trips and reports one-way latency and clock offset using the NTP formula:

RTT = t2_client - t1_client

latency = RTT / 2

clock_offset = t_server - (t1_client + t2_client) / 2

Note: browser timestamps use the Performance API for sub-millisecond resolution, but precision is still reduced by browsers (100 µs floor in Firefox, up to 100 ms with fingerprinting resistance).

Example measurement over a local WiFi network when running two video feeds, IMU and GNSS:

Latency (N=100): mean=5.19 ms, min=1.50 ms, max=12.50 ms | Clock offset (ROS-client): mean=-293.10 ms, min=-300.00 ms, max=-285.00 ms

A negative clock offset means the ROS clock is behind the client (phone) clock, in this case by ~293 ms. A positive offset would mean the ROS clock is ahead. This offset is stable across samples (spread of ~15 ms), which indicates a consistent skew rather than jitter. If the parameter time_reference_frequency is strictly positive, then the time/device TimeReference topic reports the clock difference including network latency.

We observe the same clock offset in PlotJuggler expressed in seconds, in this case measured running only

We observe the same clock offset in PlotJuggler expressed in seconds, in this case measured running only time_reference_frequency:=100.0 and no video. The offset here is the difference in clock time plus network latency

File truncated at 100 lines see the full file

CONTRIBUTING

Repository Summary

| Checkout URI | https://github.com/vtalpaert/ros2-phone-sensors.git |

| VCS Type | git |

| VCS Version | main |

| Last Updated | 2026-03-18 |

| Dev Status | DEVELOPED |

| Released | UNRELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| phone_sensors_bridge | 0.0.0 |

| phone_sensors_bridge_examples | 0.0.0 |

README

Use your phone as a sensor bridge in ROS2

Turn your phone’s GPS, IMU and cameras into ROS2 sensors. Connect an Arduino over USB to close the control loop. No custom app, just a browser.

Sensor-only use case (e.g. VSLAM, localization): mount the phone on a robot or handheld rig. It streams camera, IMU and GPS into ROS2 topics, ready for robot_localization, ORB-SLAM3, or any visual-inertial pipeline.

Full mobile robot use case: connect an Arduino or Teensy to the phone over USB. The phone relays commands from ROS2 down to the microcontroller (motor drivers, servos, …) while simultaneously streaming sensors upward. Your robot only needs firmware for low-level actuation, the phone handles perception and communication.

The bridge relies on the mobile browser rather than a dedicated app. A webpage is served from the ROS2 node; opening it on the phone prompts for permissions and starts streaming over WebSockets.

This repository is inspired by a project I did with students as a TA called phone-imu.

Build

source /opt/ros/humble/setup.bash

rosdep install -i --from-path src --rosdistro humble -y --ignore-src

colcon build --packages-up-to phone_sensors_bridge

# Or build for development, but remember to clean and rebuild every time the JS files are changed

# colcon build --symlink-install --packages-up-to phone_sensors_bridge_examples --event-handlers console_direct+

phone_sensors_bridge usage

Quickstart

Run the following in a different terminal than the one used for building, otherwise the server might start from inside the build folder which will not contain the template and static folders for the webpage.

source install/setup.bash

# Ubuntu IP

EXTRA_IP=$(ip route get 8.8.8.8 | grep -oP 'src \K[^ ]+')

echo "My IP is $EXTRA_IP"

# Generate SSL certificates for local webserver

ros2 run phone_sensors_bridge generate_dev_certificates.sh $EXTRA_IP

# Start server

ros2 run phone_sensors_bridge server --ros-args -p camera1_video_width:=720 -p camera1_video_height:=720

Open the webpage from your mobile device. The URL contains the server host IP where the node is running. It depends on your network, but most likely is https://<EXTRA_IP>:2000.

If you have multiple network interfaces, favour the fastest such as ethernet over wifi.

The page will prompt for permissions, then display the chosen camera

Published topics

| Topic | Type | Description |

|---|---|---|

time/device |

sensor_msgs/TimeReference |

Device system clock versus ROS time |

time/gnss |

sensor_msgs/TimeReference |

GNSS fix acquisition time versus device clock |

imu |

sensor_msgs/Imu |

Orientation (ENU quaternion), angular velocity and linear acceleration in the device frame |

gnss |

sensor_msgs/NavSatFix |

GPS fix: latitude, longitude, altitude with horizontal/vertical accuracy covariance |

gnss/odometry |

nav_msgs/Odometry |

GPS-derived velocity in the ENU frame; pose covariance is 1e9 (fuse twist only) |

camera1/image_raw/compressed |

sensor_msgs/CompressedImage |

Camera 1 JPEG stream |

camera2/image_raw/compressed |

sensor_msgs/CompressedImage |

Camera 2 JPEG stream |

camera1/camera_info |

sensor_msgs/CameraInfo |

Camera 1 calibration (only published when camera1_calibration_file is set) |

camera2/camera_info |

sensor_msgs/CameraInfo |

Camera 2 calibration (only published when camera2_calibration_file is set) |

usb/rx |

std_msgs/UInt8MultiArray |

Raw bytes received from the USB device (only active when usb_enabled is True) |

Subscribed topics

| Topic | Type | Description |

|---|---|---|

usb/tx |

std_msgs/UInt8MultiArray |

Raw bytes to forward to the USB device (only active when usb_enabled is True) |

Configuration

See the server configuration guide

Results

The current server is tested with Firefox and Chrome on Android.

Latency

The webpage includes a “Measure latency” button that runs 100 sequential WebSocket ping-pong round trips and reports one-way latency and clock offset using the NTP formula:

RTT = t2_client - t1_client

latency = RTT / 2

clock_offset = t_server - (t1_client + t2_client) / 2

Note: browser timestamps use the Performance API for sub-millisecond resolution, but precision is still reduced by browsers (100 µs floor in Firefox, up to 100 ms with fingerprinting resistance).

Example measurement over a local WiFi network when running two video feeds, IMU and GNSS:

Latency (N=100): mean=5.19 ms, min=1.50 ms, max=12.50 ms | Clock offset (ROS-client): mean=-293.10 ms, min=-300.00 ms, max=-285.00 ms

A negative clock offset means the ROS clock is behind the client (phone) clock, in this case by ~293 ms. A positive offset would mean the ROS clock is ahead. This offset is stable across samples (spread of ~15 ms), which indicates a consistent skew rather than jitter. If the parameter time_reference_frequency is strictly positive, then the time/device TimeReference topic reports the clock difference including network latency.

We observe the same clock offset in PlotJuggler expressed in seconds, in this case measured running only

We observe the same clock offset in PlotJuggler expressed in seconds, in this case measured running only time_reference_frequency:=100.0 and no video. The offset here is the difference in clock time plus network latency

File truncated at 100 lines see the full file

CONTRIBUTING

|

phone_sensors_bridge repositoryphone_sensors_bridge phone_sensors_bridge_examples |

ROS Distro

|

Repository Summary

| Checkout URI | https://github.com/vtalpaert/ros2-phone-sensors.git |

| VCS Type | git |

| VCS Version | main |

| Last Updated | 2026-03-18 |

| Dev Status | DEVELOPED |

| Released | UNRELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| phone_sensors_bridge | 0.0.0 |

| phone_sensors_bridge_examples | 0.0.0 |

README

Use your phone as a sensor bridge in ROS2

Turn your phone’s GPS, IMU and cameras into ROS2 sensors. Connect an Arduino over USB to close the control loop. No custom app, just a browser.

Sensor-only use case (e.g. VSLAM, localization): mount the phone on a robot or handheld rig. It streams camera, IMU and GPS into ROS2 topics, ready for robot_localization, ORB-SLAM3, or any visual-inertial pipeline.

Full mobile robot use case: connect an Arduino or Teensy to the phone over USB. The phone relays commands from ROS2 down to the microcontroller (motor drivers, servos, …) while simultaneously streaming sensors upward. Your robot only needs firmware for low-level actuation, the phone handles perception and communication.

The bridge relies on the mobile browser rather than a dedicated app. A webpage is served from the ROS2 node; opening it on the phone prompts for permissions and starts streaming over WebSockets.

This repository is inspired by a project I did with students as a TA called phone-imu.

Build

source /opt/ros/humble/setup.bash

rosdep install -i --from-path src --rosdistro humble -y --ignore-src

colcon build --packages-up-to phone_sensors_bridge

# Or build for development, but remember to clean and rebuild every time the JS files are changed

# colcon build --symlink-install --packages-up-to phone_sensors_bridge_examples --event-handlers console_direct+

phone_sensors_bridge usage

Quickstart

Run the following in a different terminal than the one used for building, otherwise the server might start from inside the build folder which will not contain the template and static folders for the webpage.

source install/setup.bash

# Ubuntu IP

EXTRA_IP=$(ip route get 8.8.8.8 | grep -oP 'src \K[^ ]+')

echo "My IP is $EXTRA_IP"

# Generate SSL certificates for local webserver

ros2 run phone_sensors_bridge generate_dev_certificates.sh $EXTRA_IP

# Start server

ros2 run phone_sensors_bridge server --ros-args -p camera1_video_width:=720 -p camera1_video_height:=720

Open the webpage from your mobile device. The URL contains the server host IP where the node is running. It depends on your network, but most likely is https://<EXTRA_IP>:2000.

If you have multiple network interfaces, favour the fastest such as ethernet over wifi.

The page will prompt for permissions, then display the chosen camera

Published topics

| Topic | Type | Description |

|---|---|---|

time/device |

sensor_msgs/TimeReference |

Device system clock versus ROS time |

time/gnss |

sensor_msgs/TimeReference |

GNSS fix acquisition time versus device clock |

imu |

sensor_msgs/Imu |

Orientation (ENU quaternion), angular velocity and linear acceleration in the device frame |

gnss |

sensor_msgs/NavSatFix |

GPS fix: latitude, longitude, altitude with horizontal/vertical accuracy covariance |

gnss/odometry |

nav_msgs/Odometry |

GPS-derived velocity in the ENU frame; pose covariance is 1e9 (fuse twist only) |

camera1/image_raw/compressed |

sensor_msgs/CompressedImage |

Camera 1 JPEG stream |

camera2/image_raw/compressed |

sensor_msgs/CompressedImage |

Camera 2 JPEG stream |

camera1/camera_info |

sensor_msgs/CameraInfo |

Camera 1 calibration (only published when camera1_calibration_file is set) |

camera2/camera_info |

sensor_msgs/CameraInfo |

Camera 2 calibration (only published when camera2_calibration_file is set) |

usb/rx |

std_msgs/UInt8MultiArray |

Raw bytes received from the USB device (only active when usb_enabled is True) |

Subscribed topics

| Topic | Type | Description |

|---|---|---|

usb/tx |

std_msgs/UInt8MultiArray |

Raw bytes to forward to the USB device (only active when usb_enabled is True) |

Configuration

See the server configuration guide

Results

The current server is tested with Firefox and Chrome on Android.

Latency

The webpage includes a “Measure latency” button that runs 100 sequential WebSocket ping-pong round trips and reports one-way latency and clock offset using the NTP formula:

RTT = t2_client - t1_client

latency = RTT / 2

clock_offset = t_server - (t1_client + t2_client) / 2

Note: browser timestamps use the Performance API for sub-millisecond resolution, but precision is still reduced by browsers (100 µs floor in Firefox, up to 100 ms with fingerprinting resistance).

Example measurement over a local WiFi network when running two video feeds, IMU and GNSS:

Latency (N=100): mean=5.19 ms, min=1.50 ms, max=12.50 ms | Clock offset (ROS-client): mean=-293.10 ms, min=-300.00 ms, max=-285.00 ms

A negative clock offset means the ROS clock is behind the client (phone) clock, in this case by ~293 ms. A positive offset would mean the ROS clock is ahead. This offset is stable across samples (spread of ~15 ms), which indicates a consistent skew rather than jitter. If the parameter time_reference_frequency is strictly positive, then the time/device TimeReference topic reports the clock difference including network latency.

We observe the same clock offset in PlotJuggler expressed in seconds, in this case measured running only

We observe the same clock offset in PlotJuggler expressed in seconds, in this case measured running only time_reference_frequency:=100.0 and no video. The offset here is the difference in clock time plus network latency

File truncated at 100 lines see the full file

CONTRIBUTING

Repository Summary

| Checkout URI | https://github.com/vtalpaert/ros2-phone-sensors.git |

| VCS Type | git |

| VCS Version | main |

| Last Updated | 2026-03-18 |

| Dev Status | DEVELOPED |

| Released | UNRELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| phone_sensors_bridge | 0.0.0 |

| phone_sensors_bridge_examples | 0.0.0 |

README

Use your phone as a sensor bridge in ROS2

Turn your phone’s GPS, IMU and cameras into ROS2 sensors. Connect an Arduino over USB to close the control loop. No custom app, just a browser.

Sensor-only use case (e.g. VSLAM, localization): mount the phone on a robot or handheld rig. It streams camera, IMU and GPS into ROS2 topics, ready for robot_localization, ORB-SLAM3, or any visual-inertial pipeline.

Full mobile robot use case: connect an Arduino or Teensy to the phone over USB. The phone relays commands from ROS2 down to the microcontroller (motor drivers, servos, …) while simultaneously streaming sensors upward. Your robot only needs firmware for low-level actuation, the phone handles perception and communication.

The bridge relies on the mobile browser rather than a dedicated app. A webpage is served from the ROS2 node; opening it on the phone prompts for permissions and starts streaming over WebSockets.

This repository is inspired by a project I did with students as a TA called phone-imu.

Build

source /opt/ros/humble/setup.bash

rosdep install -i --from-path src --rosdistro humble -y --ignore-src

colcon build --packages-up-to phone_sensors_bridge

# Or build for development, but remember to clean and rebuild every time the JS files are changed

# colcon build --symlink-install --packages-up-to phone_sensors_bridge_examples --event-handlers console_direct+

phone_sensors_bridge usage

Quickstart

Run the following in a different terminal than the one used for building, otherwise the server might start from inside the build folder which will not contain the template and static folders for the webpage.

source install/setup.bash

# Ubuntu IP

EXTRA_IP=$(ip route get 8.8.8.8 | grep -oP 'src \K[^ ]+')

echo "My IP is $EXTRA_IP"

# Generate SSL certificates for local webserver

ros2 run phone_sensors_bridge generate_dev_certificates.sh $EXTRA_IP

# Start server

ros2 run phone_sensors_bridge server --ros-args -p camera1_video_width:=720 -p camera1_video_height:=720

Open the webpage from your mobile device. The URL contains the server host IP where the node is running. It depends on your network, but most likely is https://<EXTRA_IP>:2000.

If you have multiple network interfaces, favour the fastest such as ethernet over wifi.

The page will prompt for permissions, then display the chosen camera

Published topics

| Topic | Type | Description |

|---|---|---|

time/device |

sensor_msgs/TimeReference |

Device system clock versus ROS time |

time/gnss |

sensor_msgs/TimeReference |

GNSS fix acquisition time versus device clock |

imu |

sensor_msgs/Imu |

Orientation (ENU quaternion), angular velocity and linear acceleration in the device frame |

gnss |

sensor_msgs/NavSatFix |

GPS fix: latitude, longitude, altitude with horizontal/vertical accuracy covariance |

gnss/odometry |

nav_msgs/Odometry |

GPS-derived velocity in the ENU frame; pose covariance is 1e9 (fuse twist only) |

camera1/image_raw/compressed |

sensor_msgs/CompressedImage |

Camera 1 JPEG stream |

camera2/image_raw/compressed |

sensor_msgs/CompressedImage |

Camera 2 JPEG stream |

camera1/camera_info |

sensor_msgs/CameraInfo |

Camera 1 calibration (only published when camera1_calibration_file is set) |

camera2/camera_info |

sensor_msgs/CameraInfo |

Camera 2 calibration (only published when camera2_calibration_file is set) |

usb/rx |

std_msgs/UInt8MultiArray |

Raw bytes received from the USB device (only active when usb_enabled is True) |

Subscribed topics

| Topic | Type | Description |

|---|---|---|

usb/tx |

std_msgs/UInt8MultiArray |

Raw bytes to forward to the USB device (only active when usb_enabled is True) |

Configuration

See the server configuration guide

Results

The current server is tested with Firefox and Chrome on Android.

Latency

The webpage includes a “Measure latency” button that runs 100 sequential WebSocket ping-pong round trips and reports one-way latency and clock offset using the NTP formula:

RTT = t2_client - t1_client

latency = RTT / 2

clock_offset = t_server - (t1_client + t2_client) / 2

Note: browser timestamps use the Performance API for sub-millisecond resolution, but precision is still reduced by browsers (100 µs floor in Firefox, up to 100 ms with fingerprinting resistance).

Example measurement over a local WiFi network when running two video feeds, IMU and GNSS:

Latency (N=100): mean=5.19 ms, min=1.50 ms, max=12.50 ms | Clock offset (ROS-client): mean=-293.10 ms, min=-300.00 ms, max=-285.00 ms

A negative clock offset means the ROS clock is behind the client (phone) clock, in this case by ~293 ms. A positive offset would mean the ROS clock is ahead. This offset is stable across samples (spread of ~15 ms), which indicates a consistent skew rather than jitter. If the parameter time_reference_frequency is strictly positive, then the time/device TimeReference topic reports the clock difference including network latency.

We observe the same clock offset in PlotJuggler expressed in seconds, in this case measured running only

We observe the same clock offset in PlotJuggler expressed in seconds, in this case measured running only time_reference_frequency:=100.0 and no video. The offset here is the difference in clock time plus network latency

File truncated at 100 lines see the full file

CONTRIBUTING

Repository Summary

| Checkout URI | https://github.com/vtalpaert/ros2-phone-sensors.git |

| VCS Type | git |

| VCS Version | main |

| Last Updated | 2026-03-18 |

| Dev Status | DEVELOPED |

| Released | UNRELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| phone_sensors_bridge | 0.0.0 |

| phone_sensors_bridge_examples | 0.0.0 |

README

Use your phone as a sensor bridge in ROS2

Turn your phone’s GPS, IMU and cameras into ROS2 sensors. Connect an Arduino over USB to close the control loop. No custom app, just a browser.

Sensor-only use case (e.g. VSLAM, localization): mount the phone on a robot or handheld rig. It streams camera, IMU and GPS into ROS2 topics, ready for robot_localization, ORB-SLAM3, or any visual-inertial pipeline.

Full mobile robot use case: connect an Arduino or Teensy to the phone over USB. The phone relays commands from ROS2 down to the microcontroller (motor drivers, servos, …) while simultaneously streaming sensors upward. Your robot only needs firmware for low-level actuation, the phone handles perception and communication.

The bridge relies on the mobile browser rather than a dedicated app. A webpage is served from the ROS2 node; opening it on the phone prompts for permissions and starts streaming over WebSockets.

This repository is inspired by a project I did with students as a TA called phone-imu.

Build

source /opt/ros/humble/setup.bash

rosdep install -i --from-path src --rosdistro humble -y --ignore-src

colcon build --packages-up-to phone_sensors_bridge

# Or build for development, but remember to clean and rebuild every time the JS files are changed

# colcon build --symlink-install --packages-up-to phone_sensors_bridge_examples --event-handlers console_direct+

phone_sensors_bridge usage

Quickstart

Run the following in a different terminal than the one used for building, otherwise the server might start from inside the build folder which will not contain the template and static folders for the webpage.

```bash
source install/setup.bash

# Ubuntu IP
EXTRA_IP=$(ip route get 8.8.8.8 | grep -oP 'src \K[^ ]+')
echo "My IP is $EXTRA_IP"

# Generate SSL certificates for local webserver
ros2 run phone_sensors_bridge generate_dev_certificates.sh $EXTRA_IP

# Start server
ros2 run phone_sensors_bridge server --ros-args -p camera1_video_width:=720 -p camera1_video_height:=720
```

Open the webpage on your mobile device. The URL uses the IP address of the host where the node is running. It depends on your network, but it is most likely https://<EXTRA_IP>:2000.
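The same address can also be found from Python, for example on a host without `ip` or `grep -P`. This is a sketch and not part of phone_sensors_bridge. `guess_host_ip` and `bridge_url` are hypothetical helpers, and port 2000 is assumed from the URL given above. Connecting a UDP socket sends no packets. It only makes the kernel pick a route and a source address, much like `ip route get 8.8.8.8`.

```python
import ipaddress
import socket


def guess_host_ip(probe_host: str = "8.8.8.8", probe_port: int = 80) -> str:
    """Return the local IP the kernel would use to reach probe_host.

    Connecting a UDP socket does not send anything; it only selects a route
    and source address. Falls back to loopback when no route exists.
    """
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        try:
            sock.connect((probe_host, probe_port))
            return sock.getsockname()[0]
        except OSError:
            return "127.0.0.1"


def bridge_url(host_ip: str, port: int = 2000) -> str:
    """Build the HTTPS URL to open on the phone (port 2000 is an assumption)."""
    ipaddress.ip_address(host_ip)  # raises ValueError for a malformed address
    return f"https://{host_ip}:{port}"


if __name__ == "__main__":
    print(bridge_url(guess_host_ip()))
```

The printed address is whatever the routing table selects. On a host with several interfaces, check that it belongs to the network the phone is on.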

If the host has multiple network interfaces, favour the fastest one, e.g. Ethernet over Wi-Fi.

The page will prompt for permissions, then display the chosen camera.

Published topics

| Topic | Type | Description |
|---|---|---|
| time/device | sensor_msgs/TimeReference | Device system clock versus ROS time |
| time/gnss | sensor_msgs/TimeReference | GNSS fix acquisition time versus device clock |
| imu | sensor_msgs/Imu | Orientation (ENU quaternion), angular velocity and linear acceleration in the device frame |
| gnss | sensor_msgs/NavSatFix | GPS fix: latitude, longitude, altitude with horizontal/vertical accuracy covariance |
| gnss/odometry | nav_msgs/Odometry | GPS-derived velocity in the ENU frame; pose covariance is 1e9 (fuse twist only) |
| camera1/image_raw/compressed | sensor_msgs/CompressedImage | Camera 1 JPEG stream |
| camera2/image_raw/compressed | sensor_msgs/CompressedImage | Camera 2 JPEG stream |
| camera1/camera_info | sensor_msgs/CameraInfo | Camera 1 calibration (only published when camera1_calibration_file is set) |
| camera2/camera_info | sensor_msgs/CameraInfo | Camera 2 calibration (only published when camera2_calibration_file is set) |
| usb/rx | std_msgs/UInt8MultiArray | Raw bytes received from the USB device (only active when usb_enabled is True) |
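The table only states that `imu` carries an ENU quaternion and that `gnss/odometry` carries ENU velocity. As a sketch, assume standard REP 103 axes (x East, y North, z Up) and assume the quaternion's yaw describes the device x axis. Under those assumptions, downstream code could derive a compass heading and a course over ground as below. These helpers are hypothetical and are not part of the package.

```python
import math


def enu_yaw(qx: float, qy: float, qz: float, qw: float) -> float:
    """Yaw (rad) of a unit quaternion about the ENU z (Up) axis.

    0 means the rotated x axis points East; pi/2 means it points North.
    """
    siny_cosp = 2.0 * (qw * qz + qx * qy)
    cosy_cosp = 1.0 - 2.0 * (qy * qy + qz * qz)
    return math.atan2(siny_cosp, cosy_cosp)


def compass_heading_deg(yaw_enu: float) -> float:
    """ENU yaw (rad, counter-clockwise from East) -> compass heading
    (deg, clockwise from North) in [0, 360)."""
    return (90.0 - math.degrees(yaw_enu)) % 360.0


def ground_speed_and_course(v_east: float, v_north: float) -> tuple[float, float]:
    """Speed (m/s) and course over ground (deg clockwise from North).

    Assumes twist.twist.linear.x is East and .y is North, as in REP 103.
    """
    speed = math.hypot(v_east, v_north)
    course = math.degrees(math.atan2(v_east, v_north)) % 360.0
    return speed, course
```

Using only the twist part of `gnss/odometry` matches the table's note that the pose covariance is 1e9.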

Subscribed topics

| Topic | Type | Description |
|---|---|---|
| usb/tx | std_msgs/UInt8MultiArray | Raw bytes to forward to the USB device (only active when usb_enabled is True) |
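The bridge forwards raw bytes, so the framing is whatever your microcontroller firmware expects. The frame below is purely illustrative and hypothetical:

- a start byte;
- two little-endian int16 motor commands;
- an 8-bit additive checksum.

`START_BYTE`, `encode_motor_command` and `decode_motor_command` are invented names for this sketch and are not part of the package.

```python
import struct
from collections.abc import Sequence

START_BYTE = 0xAA  # hypothetical frame marker; use whatever your firmware expects


def encode_motor_command(left: int, right: int) -> list[int]:
    """Pack two int16 motor commands into a hypothetical 6-byte frame:
    START_BYTE, left (int16 LE), right (int16 LE), checksum of the 4 payload bytes."""
    payload = struct.pack("<hh", left, right)
    checksum = sum(payload) & 0xFF
    return [START_BYTE, *payload, checksum]


def decode_motor_command(frame: Sequence[int]) -> tuple[int, int]:
    """Inverse of encode_motor_command; raises ValueError on a malformed frame."""
    data = bytes(frame)
    if len(data) != 6 or data[0] != START_BYTE:
        raise ValueError("not a motor command frame")
    payload, checksum = data[1:5], data[5]
    if sum(payload) & 0xFF != checksum:
        raise ValueError("checksum mismatch")
    left, right = struct.unpack("<hh", payload)
    return left, right
```

The returned list of ints should be assignable to the `data` field of a `std_msgs/UInt8MultiArray` published on `usb/tx`. The firmware then has to parse the same layout.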

Configuration

See the server configuration guide

Results

The current server has been tested with Firefox and Chrome on Android.

Latency

The webpage includes a “Measure latency” button that runs 100 sequential WebSocket ping-pong round trips and reports one-way latency and clock offset using the NTP formula:

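```
RTT          = t2_client - t1_client
latency      = RTT / 2
clock_offset = t_server - (t1_client + t2_client) / 2
```

Here t1_client and t2_client are the phone's timestamps when the ping is sent and when the pong is received, and t_server is the ROS timestamp recorded by the server in between.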
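Below is a minimal Python sketch of these formulas, with every timestamp in milliseconds. `PingSample` and the helper names are hypothetical. `best_offset` uses the sample with the smallest RTT, which is a common NTP-style refinement and not necessarily what the page does.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class PingSample:
    t1_client: float  # ms, phone clock when the ping was sent
    t_server: float   # ms, ROS clock when the server handled the ping
    t2_client: float  # ms, phone clock when the pong was received


def rtt(s: PingSample) -> float:
    return s.t2_client - s.t1_client


def latency(s: PingSample) -> float:
    # Assumes the forward and return paths take the same time.
    return rtt(s) / 2.0


def clock_offset(s: PingSample) -> float:
    # Positive: ROS clock ahead of the phone; negative: ROS clock behind.
    return s.t_server - (s.t1_client + s.t2_client) / 2.0


def summarize(samples: list[PingSample]) -> dict[str, tuple[float, float, float]]:
    """(mean, min, max) of latency and clock offset over all samples."""
    lat = [latency(s) for s in samples]
    off = [clock_offset(s) for s in samples]
    return {
        "latency": (mean(lat), min(lat), max(lat)),
        "clock_offset": (mean(off), min(off), max(off)),
    }


def best_offset(samples: list[PingSample]) -> float:
    """Offset of the lowest-RTT sample, the least affected by queuing delay."""
    return clock_offset(min(samples, key=rtt))
```

For example, suppose the phone sends at 1000 ms on its clock, the server stamps 705 ms, and the pong arrives at 1010 ms. That gives an RTT of 10 ms, a latency of 5 ms and an offset of -300 ms (ROS clock behind the phone).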

Note: browser timestamps use the Performance API for sub-millisecond resolution, but precision is still reduced by browsers (100 µs floor in Firefox, up to 100 ms with fingerprinting resistance).
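Coarse timers distort individual measurements. The sketch below uses a simplified model in which each timestamp is floored to the timer resolution. This is an assumption: real browsers may also add jitter. `coarsen` and `measured_rtt` are hypothetical helpers.

```python
import math


def coarsen(t_ms: float, resolution_ms: float) -> float:
    """Simplified model of a browser clamping a timestamp down to its timer resolution."""
    return math.floor(t_ms / resolution_ms) * resolution_ms


def measured_rtt(t1_ms: float, t2_ms: float, resolution_ms: float) -> float:
    """RTT as seen through coarsened timestamps."""
    return coarsen(t2_ms, resolution_ms) - coarsen(t1_ms, resolution_ms)
```

With a 100 ms resolution, a true round trip of about 10 ms reads as 0 ms for `measured_rtt(1003.2, 1013.7, 100.0)` but as 100 ms for `measured_rtt(1095.0, 1105.0, 100.0)`. Individual readings are therefore unreliable at that resolution.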

Example measurement over a local WiFi network when running two video feeds, IMU and GNSS:

```
Latency (N=100): mean=5.19 ms, min=1.50 ms, max=12.50 ms | Clock offset (ROS-client): mean=-293.10 ms, min=-300.00 ms, max=-285.00 ms
```

A negative clock offset means the ROS clock is behind the client (phone) clock, in this case by ~293 ms. A positive offset would mean the ROS clock is ahead. This offset is stable across samples (spread of ~15 ms), which indicates a consistent skew rather than jitter. If the parameter time_reference_frequency is strictly positive, then the time/device TimeReference topic reports the clock difference including network latency.
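Given a mean offset, a phone-side timestamp can be approximately mapped onto the ROS clock by adding the offset, since clock_offset = t_ros - t_device under the formula above. This is a minimal sketch with hypothetical helper names that ignores clock drift between measurements.

```python
def device_to_ros_ms(t_device_ms: float, clock_offset_ms: float) -> float:
    """Map a phone timestamp onto the ROS clock (clock_offset = t_ros - t_device)."""
    return t_device_ms + clock_offset_ms


def spread_ms(offsets_ms: list[float]) -> float:
    """Max minus min of offset samples; a small spread suggests a stable skew."""
    return max(offsets_ms) - min(offsets_ms)
```

With the example's mean offset of -293.10 ms, a phone timestamp of 10000 ms maps to about 9706.9 ms of ROS time. The reported min and max (-300 ms and -285 ms) give the 15 ms spread mentioned above.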


We observe the same clock offset in PlotJuggler (expressed in seconds), here measured with only time_reference_frequency:=100.0 enabled and no video. In this case the offset is the clock difference plus network latency.

File truncated at 100 lines see the full file

CONTRIBUTING

Repository Summary

| Checkout URI | https://github.com/vtalpaert/ros2-phone-sensors.git |

| VCS Type | git |

| VCS Version | main |

| Last Updated | 2026-03-18 |

| Dev Status | DEVELOPED |

| Released | UNRELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| phone_sensors_bridge | 0.0.0 |

| phone_sensors_bridge_examples | 0.0.0 |

README

Use your phone as a sensor bridge in ROS2

Turn your phone’s GPS, IMU and cameras into ROS2 sensors. Connect an Arduino over USB to close the control loop. No custom app, just a browser.

Sensor-only use case (e.g. VSLAM, localization): mount the phone on a robot or handheld rig. It streams camera, IMU and GPS into ROS2 topics, ready for robot_localization, ORB-SLAM3, or any visual-inertial pipeline.

Full mobile robot use case: connect an Arduino or Teensy to the phone over USB. The phone relays commands from ROS2 down to the microcontroller (motor drivers, servos, …) while simultaneously streaming sensors upward. Your robot only needs firmware for low-level actuation, the phone handles perception and communication.

The bridge relies on the mobile browser rather than a dedicated app. A webpage is served from the ROS2 node; opening it on the phone prompts for permissions and starts streaming over WebSockets.

This repository is inspired by a project I did with students as a TA called phone-imu.

Build

source /opt/ros/humble/setup.bash

rosdep install -i --from-path src --rosdistro humble -y --ignore-src

colcon build --packages-up-to phone_sensors_bridge

# Or build for development, but remember to clean and rebuild every time the JS files are changed

# colcon build --symlink-install --packages-up-to phone_sensors_bridge_examples --event-handlers console_direct+

phone_sensors_bridge usage

Quickstart

Run the following in a different terminal than the one used for building, otherwise the server might start from inside the build folder which will not contain the template and static folders for the webpage.

source install/setup.bash

# Ubuntu IP

EXTRA_IP=$(ip route get 8.8.8.8 | grep -oP 'src \K[^ ]+')

echo "My IP is $EXTRA_IP"

# Generate SSL certificates for local webserver

ros2 run phone_sensors_bridge generate_dev_certificates.sh $EXTRA_IP

# Start server

ros2 run phone_sensors_bridge server --ros-args -p camera1_video_width:=720 -p camera1_video_height:=720

Open the webpage from your mobile device. The URL contains the server host IP where the node is running. It depends on your network, but most likely is https://<EXTRA_IP>:2000.

If you have multiple network interfaces, favour the fastest such as ethernet over wifi.

The page will prompt for permissions, then display the chosen camera

Published topics

| Topic | Type | Description |

|---|---|---|

time/device |

sensor_msgs/TimeReference |

Device system clock versus ROS time |

time/gnss |

sensor_msgs/TimeReference |

GNSS fix acquisition time versus device clock |

imu |

sensor_msgs/Imu |

Orientation (ENU quaternion), angular velocity and linear acceleration in the device frame |

gnss |

sensor_msgs/NavSatFix |

GPS fix: latitude, longitude, altitude with horizontal/vertical accuracy covariance |

gnss/odometry |

nav_msgs/Odometry |

GPS-derived velocity in the ENU frame; pose covariance is 1e9 (fuse twist only) |

camera1/image_raw/compressed |

sensor_msgs/CompressedImage |

Camera 1 JPEG stream |

camera2/image_raw/compressed |

sensor_msgs/CompressedImage |

Camera 2 JPEG stream |

camera1/camera_info |

sensor_msgs/CameraInfo |

Camera 1 calibration (only published when camera1_calibration_file is set) |

camera2/camera_info |

sensor_msgs/CameraInfo |

Camera 2 calibration (only published when camera2_calibration_file is set) |

usb/rx |

std_msgs/UInt8MultiArray |

Raw bytes received from the USB device (only active when usb_enabled is True) |

Subscribed topics

| Topic | Type | Description |

|---|---|---|

usb/tx |

std_msgs/UInt8MultiArray |

Raw bytes to forward to the USB device (only active when usb_enabled is True) |

Configuration

See the server configuration guide

Results

The current server is tested with Firefox and Chrome on Android.

Latency

The webpage includes a “Measure latency” button that runs 100 sequential WebSocket ping-pong round trips and reports one-way latency and clock offset using the NTP formula:

RTT = t2_client - t1_client

latency = RTT / 2

clock_offset = t_server - (t1_client + t2_client) / 2

Note: browser timestamps use the Performance API for sub-millisecond resolution, but precision is still reduced by browsers (100 µs floor in Firefox, up to 100 ms with fingerprinting resistance).

Example measurement over a local WiFi network when running two video feeds, IMU and GNSS:

Latency (N=100): mean=5.19 ms, min=1.50 ms, max=12.50 ms | Clock offset (ROS-client): mean=-293.10 ms, min=-300.00 ms, max=-285.00 ms

A negative clock offset means the ROS clock is behind the client (phone) clock, in this case by ~293 ms. A positive offset would mean the ROS clock is ahead. This offset is stable across samples (spread of ~15 ms), which indicates a consistent skew rather than jitter. If the parameter time_reference_frequency is strictly positive, then the time/device TimeReference topic reports the clock difference including network latency.

We observe the same clock offset in PlotJuggler expressed in seconds, in this case measured running only

We observe the same clock offset in PlotJuggler expressed in seconds, in this case measured running only time_reference_frequency:=100.0 and no video. The offset here is the difference in clock time plus network latency

File truncated at 100 lines see the full file

CONTRIBUTING

Repository Summary

| Checkout URI | https://github.com/vtalpaert/ros2-phone-sensors.git |

| VCS Type | git |

| VCS Version | main |

| Last Updated | 2026-03-18 |

| Dev Status | DEVELOPED |

| Released | UNRELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| phone_sensors_bridge | 0.0.0 |

| phone_sensors_bridge_examples | 0.0.0 |

README

Use your phone as a sensor bridge in ROS2

Turn your phone’s GPS, IMU and cameras into ROS2 sensors. Connect an Arduino over USB to close the control loop. No custom app, just a browser.

Sensor-only use case (e.g. VSLAM, localization): mount the phone on a robot or handheld rig. It streams camera, IMU and GPS into ROS2 topics, ready for robot_localization, ORB-SLAM3, or any visual-inertial pipeline.

Full mobile robot use case: connect an Arduino or Teensy to the phone over USB. The phone relays commands from ROS2 down to the microcontroller (motor drivers, servos, …) while simultaneously streaming sensors upward. Your robot only needs firmware for low-level actuation, the phone handles perception and communication.

The bridge relies on the mobile browser rather than a dedicated app. A webpage is served from the ROS2 node; opening it on the phone prompts for permissions and starts streaming over WebSockets.

This repository is inspired by a project I did with students as a TA called phone-imu.

Build

source /opt/ros/humble/setup.bash

rosdep install -i --from-path src --rosdistro humble -y --ignore-src

colcon build --packages-up-to phone_sensors_bridge

# Or build for development, but remember to clean and rebuild every time the JS files are changed

# colcon build --symlink-install --packages-up-to phone_sensors_bridge_examples --event-handlers console_direct+

phone_sensors_bridge usage

Quickstart

Run the following in a different terminal than the one used for building, otherwise the server might start from inside the build folder which will not contain the template and static folders for the webpage.

source install/setup.bash

# Ubuntu IP

EXTRA_IP=$(ip route get 8.8.8.8 | grep -oP 'src \K[^ ]+')

echo "My IP is $EXTRA_IP"

# Generate SSL certificates for local webserver

ros2 run phone_sensors_bridge generate_dev_certificates.sh $EXTRA_IP

# Start server

ros2 run phone_sensors_bridge server --ros-args -p camera1_video_width:=720 -p camera1_video_height:=720

Open the webpage from your mobile device. The URL contains the server host IP where the node is running. It depends on your network, but most likely is https://<EXTRA_IP>:2000.

If you have multiple network interfaces, favour the fastest such as ethernet over wifi.

The page will prompt for permissions, then display the chosen camera

Published topics

| Topic | Type | Description |

|---|---|---|

time/device |

sensor_msgs/TimeReference |

Device system clock versus ROS time |

time/gnss |

sensor_msgs/TimeReference |

GNSS fix acquisition time versus device clock |

imu |

sensor_msgs/Imu |

Orientation (ENU quaternion), angular velocity and linear acceleration in the device frame |

gnss |

sensor_msgs/NavSatFix |

GPS fix: latitude, longitude, altitude with horizontal/vertical accuracy covariance |

gnss/odometry |

nav_msgs/Odometry |

GPS-derived velocity in the ENU frame; pose covariance is 1e9 (fuse twist only) |

camera1/image_raw/compressed |

sensor_msgs/CompressedImage |

Camera 1 JPEG stream |

camera2/image_raw/compressed |

sensor_msgs/CompressedImage |

Camera 2 JPEG stream |

camera1/camera_info |

sensor_msgs/CameraInfo |

Camera 1 calibration (only published when camera1_calibration_file is set) |

camera2/camera_info |

sensor_msgs/CameraInfo |

Camera 2 calibration (only published when camera2_calibration_file is set) |

usb/rx |

std_msgs/UInt8MultiArray |

Raw bytes received from the USB device (only active when usb_enabled is True) |

Subscribed topics

| Topic | Type | Description |

|---|---|---|

usb/tx |

std_msgs/UInt8MultiArray |

Raw bytes to forward to the USB device (only active when usb_enabled is True) |

Configuration

See the server configuration guide

Results

The current server is tested with Firefox and Chrome on Android.

Latency

The webpage includes a “Measure latency” button that runs 100 sequential WebSocket ping-pong round trips and reports one-way latency and clock offset using the NTP formula:

RTT = t2_client - t1_client

latency = RTT / 2

clock_offset = t_server - (t1_client + t2_client) / 2

Note: browser timestamps use the Performance API for sub-millisecond resolution, but precision is still reduced by browsers (100 µs floor in Firefox, up to 100 ms with fingerprinting resistance).

Example measurement over a local WiFi network when running two video feeds, IMU and GNSS:

Latency (N=100): mean=5.19 ms, min=1.50 ms, max=12.50 ms | Clock offset (ROS-client): mean=-293.10 ms, min=-300.00 ms, max=-285.00 ms

A negative clock offset means the ROS clock is behind the client (phone) clock, in this case by ~293 ms. A positive offset would mean the ROS clock is ahead. This offset is stable across samples (spread of ~15 ms), which indicates a consistent skew rather than jitter. If the parameter time_reference_frequency is strictly positive, then the time/device TimeReference topic reports the clock difference including network latency.

We observe the same clock offset in PlotJuggler expressed in seconds, in this case measured running only

We observe the same clock offset in PlotJuggler expressed in seconds, in this case measured running only time_reference_frequency:=100.0 and no video. The offset here is the difference in clock time plus network latency

File truncated at 100 lines see the full file

CONTRIBUTING

Repository Summary

| Checkout URI | https://github.com/vtalpaert/ros2-phone-sensors.git |

| VCS Type | git |

| VCS Version | main |

| Last Updated | 2026-03-18 |

| Dev Status | DEVELOPED |

| Released | UNRELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| phone_sensors_bridge | 0.0.0 |

| phone_sensors_bridge_examples | 0.0.0 |

README

Use your phone as a sensor bridge in ROS2

Turn your phone’s GPS, IMU and cameras into ROS2 sensors. Connect an Arduino over USB to close the control loop. No custom app, just a browser.

Sensor-only use case (e.g. VSLAM, localization): mount the phone on a robot or handheld rig. It streams camera, IMU and GPS into ROS2 topics, ready for robot_localization, ORB-SLAM3, or any visual-inertial pipeline.

Full mobile robot use case: connect an Arduino or Teensy to the phone over USB. The phone relays commands from ROS2 down to the microcontroller (motor drivers, servos, …) while simultaneously streaming sensors upward. Your robot only needs firmware for low-level actuation, the phone handles perception and communication.

The bridge relies on the mobile browser rather than a dedicated app. A webpage is served from the ROS2 node; opening it on the phone prompts for permissions and starts streaming over WebSockets.

This repository is inspired by a project I did with students as a TA called phone-imu.

Build

source /opt/ros/humble/setup.bash

rosdep install -i --from-path src --rosdistro humble -y --ignore-src

colcon build --packages-up-to phone_sensors_bridge

# Or build for development, but remember to clean and rebuild every time the JS files are changed

# colcon build --symlink-install --packages-up-to phone_sensors_bridge_examples --event-handlers console_direct+

phone_sensors_bridge usage

Quickstart

Run the following in a different terminal than the one used for building, otherwise the server might start from inside the build folder which will not contain the template and static folders for the webpage.

source install/setup.bash

# Ubuntu IP

EXTRA_IP=$(ip route get 8.8.8.8 | grep -oP 'src \K[^ ]+')

echo "My IP is $EXTRA_IP"

# Generate SSL certificates for local webserver

ros2 run phone_sensors_bridge generate_dev_certificates.sh $EXTRA_IP

# Start server

ros2 run phone_sensors_bridge server --ros-args -p camera1_video_width:=720 -p camera1_video_height:=720

Open the webpage from your mobile device. The URL contains the server host IP where the node is running. It depends on your network, but most likely is https://<EXTRA_IP>:2000.

If you have multiple network interfaces, favour the fastest such as ethernet over wifi.

The page will prompt for permissions, then display the chosen camera

Published topics

| Topic | Type | Description |

|---|---|---|

time/device |

sensor_msgs/TimeReference |

Device system clock versus ROS time |

time/gnss |

sensor_msgs/TimeReference |

GNSS fix acquisition time versus device clock |

imu |

sensor_msgs/Imu |

Orientation (ENU quaternion), angular velocity and linear acceleration in the device frame |

gnss |

sensor_msgs/NavSatFix |

GPS fix: latitude, longitude, altitude with horizontal/vertical accuracy covariance |

gnss/odometry |

nav_msgs/Odometry |

GPS-derived velocity in the ENU frame; pose covariance is 1e9 (fuse twist only) |

camera1/image_raw/compressed |

sensor_msgs/CompressedImage |

Camera 1 JPEG stream |

camera2/image_raw/compressed |

sensor_msgs/CompressedImage |

Camera 2 JPEG stream |

camera1/camera_info |

sensor_msgs/CameraInfo |

Camera 1 calibration (only published when camera1_calibration_file is set) |

camera2/camera_info |

sensor_msgs/CameraInfo |

Camera 2 calibration (only published when camera2_calibration_file is set) |

usb/rx |

std_msgs/UInt8MultiArray |

Raw bytes received from the USB device (only active when usb_enabled is True) |

Subscribed topics

| Topic | Type | Description |

|---|---|---|

usb/tx |

std_msgs/UInt8MultiArray |

Raw bytes to forward to the USB device (only active when usb_enabled is True) |

Configuration

See the server configuration guide

Results

The current server is tested with Firefox and Chrome on Android.

Latency

The webpage includes a “Measure latency” button that runs 100 sequential WebSocket ping-pong round trips and reports one-way latency and clock offset using the NTP formula:

RTT = t2_client - t1_client

latency = RTT / 2

clock_offset = t_server - (t1_client + t2_client) / 2

Note: browser timestamps use the Performance API for sub-millisecond resolution, but precision is still reduced by browsers (100 µs floor in Firefox, up to 100 ms with fingerprinting resistance).

Example measurement over a local WiFi network when running two video feeds, IMU and GNSS:

Latency (N=100): mean=5.19 ms, min=1.50 ms, max=12.50 ms | Clock offset (ROS-client): mean=-293.10 ms, min=-300.00 ms, max=-285.00 ms

A negative clock offset means the ROS clock is behind the client (phone) clock, in this case by ~293 ms. A positive offset would mean the ROS clock is ahead. This offset is stable across samples (spread of ~15 ms), which indicates a consistent skew rather than jitter. If the parameter time_reference_frequency is strictly positive, then the time/device TimeReference topic reports the clock difference including network latency.

We observe the same clock offset in PlotJuggler expressed in seconds, in this case measured running only

We observe the same clock offset in PlotJuggler expressed in seconds, in this case measured running only time_reference_frequency:=100.0 and no video. The offset here is the difference in clock time plus network latency

File truncated at 100 lines see the full file

CONTRIBUTING

Repository Summary

| Checkout URI | https://github.com/vtalpaert/ros2-phone-sensors.git |

| VCS Type | git |

| VCS Version | main |

| Last Updated | 2026-03-18 |

| Dev Status | DEVELOPED |

| Released | UNRELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| phone_sensors_bridge | 0.0.0 |

| phone_sensors_bridge_examples | 0.0.0 |

README

Use your phone as a sensor bridge in ROS2

Turn your phone’s GPS, IMU and cameras into ROS2 sensors. Connect an Arduino over USB to close the control loop. No custom app, just a browser.

Sensor-only use case (e.g. VSLAM, localization): mount the phone on a robot or handheld rig. It streams camera, IMU and GPS into ROS2 topics, ready for robot_localization, ORB-SLAM3, or any visual-inertial pipeline.

Full mobile robot use case: connect an Arduino or Teensy to the phone over USB. The phone relays commands from ROS2 down to the microcontroller (motor drivers, servos, …) while simultaneously streaming sensors upward. Your robot only needs firmware for low-level actuation, the phone handles perception and communication.

The bridge relies on the mobile browser rather than a dedicated app. A webpage is served from the ROS2 node; opening it on the phone prompts for permissions and starts streaming over WebSockets.

This repository is inspired by a project I did with students as a TA called phone-imu.

Build

source /opt/ros/humble/setup.bash

rosdep install -i --from-path src --rosdistro humble -y --ignore-src

colcon build --packages-up-to phone_sensors_bridge

# Or build for development, but remember to clean and rebuild every time the JS files are changed

# colcon build --symlink-install --packages-up-to phone_sensors_bridge_examples --event-handlers console_direct+

phone_sensors_bridge usage

Quickstart

Run the following in a different terminal than the one used for building, otherwise the server might start from inside the build folder which will not contain the template and static folders for the webpage.

source install/setup.bash

# Ubuntu IP

EXTRA_IP=$(ip route get 8.8.8.8 | grep -oP 'src \K[^ ]+')

echo "My IP is $EXTRA_IP"

# Generate SSL certificates for local webserver

ros2 run phone_sensors_bridge generate_dev_certificates.sh $EXTRA_IP

# Start server

ros2 run phone_sensors_bridge server --ros-args -p camera1_video_width:=720 -p camera1_video_height:=720

Open the webpage from your mobile device. The URL contains the server host IP where the node is running. It depends on your network, but most likely is https://<EXTRA_IP>:2000.

If you have multiple network interfaces, favour the fastest such as ethernet over wifi.

The page will prompt for permissions, then display the chosen camera

Published topics

| Topic | Type | Description |

|---|---|---|

time/device |

sensor_msgs/TimeReference |

Device system clock versus ROS time |

time/gnss |

sensor_msgs/TimeReference |

GNSS fix acquisition time versus device clock |

imu |

sensor_msgs/Imu |

Orientation (ENU quaternion), angular velocity and linear acceleration in the device frame |

gnss |

sensor_msgs/NavSatFix |

GPS fix: latitude, longitude, altitude with horizontal/vertical accuracy covariance |

gnss/odometry |

nav_msgs/Odometry |

GPS-derived velocity in the ENU frame; pose covariance is 1e9 (fuse twist only) |

camera1/image_raw/compressed |

sensor_msgs/CompressedImage |

Camera 1 JPEG stream |

camera2/image_raw/compressed |

sensor_msgs/CompressedImage |

Camera 2 JPEG stream |

camera1/camera_info |

sensor_msgs/CameraInfo |

Camera 1 calibration (only published when camera1_calibration_file is set) |

camera2/camera_info |

sensor_msgs/CameraInfo |

Camera 2 calibration (only published when camera2_calibration_file is set) |

usb/rx |

std_msgs/UInt8MultiArray |

Raw bytes received from the USB device (only active when usb_enabled is True) |

Subscribed topics

| Topic | Type | Description |

|---|---|---|

usb/tx |

std_msgs/UInt8MultiArray |

Raw bytes to forward to the USB device (only active when usb_enabled is True) |

Configuration

See the server configuration guide

Results

The current server is tested with Firefox and Chrome on Android.

Latency

The webpage includes a “Measure latency” button that runs 100 sequential WebSocket ping-pong round trips and reports one-way latency and clock offset using the NTP formula:

RTT = t2_client - t1_client

latency = RTT / 2

clock_offset = t_server - (t1_client + t2_client) / 2

Note: browser timestamps use the Performance API for sub-millisecond resolution, but precision is still reduced by browsers (100 µs floor in Firefox, up to 100 ms with fingerprinting resistance).

Example measurement over a local WiFi network when running two video feeds, IMU and GNSS:

Latency (N=100): mean=5.19 ms, min=1.50 ms, max=12.50 ms | Clock offset (ROS-client): mean=-293.10 ms, min=-300.00 ms, max=-285.00 ms

A negative clock offset means the ROS clock is behind the client (phone) clock, in this case by ~293 ms. A positive offset would mean the ROS clock is ahead. This offset is stable across samples (spread of ~15 ms), which indicates a consistent skew rather than jitter. If the parameter time_reference_frequency is strictly positive, then the time/device TimeReference topic reports the clock difference including network latency.

We observe the same clock offset in PlotJuggler expressed in seconds, in this case measured running only

We observe the same clock offset in PlotJuggler expressed in seconds, in this case measured running only time_reference_frequency:=100.0 and no video. The offset here is the difference in clock time plus network latency

File truncated at 100 lines see the full file

CONTRIBUTING

Repository Summary

| Checkout URI | https://github.com/vtalpaert/ros2-phone-sensors.git |

| VCS Type | git |

| VCS Version | main |

| Last Updated | 2026-03-18 |

| Dev Status | DEVELOPED |

| Released | UNRELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| phone_sensors_bridge | 0.0.0 |

| phone_sensors_bridge_examples | 0.0.0 |

README

Use your phone as a sensor bridge in ROS2

Turn your phone’s GPS, IMU and cameras into ROS2 sensors. Connect an Arduino over USB to close the control loop. No custom app, just a browser.

Sensor-only use case (e.g. VSLAM, localization): mount the phone on a robot or handheld rig. It streams camera, IMU and GPS into ROS2 topics, ready for robot_localization, ORB-SLAM3, or any visual-inertial pipeline.

Full mobile robot use case: connect an Arduino or Teensy to the phone over USB. The phone relays commands from ROS2 down to the microcontroller (motor drivers, servos, …) while simultaneously streaming sensors upward. Your robot only needs firmware for low-level actuation, the phone handles perception and communication.

The bridge relies on the mobile browser rather than a dedicated app. A webpage is served from the ROS2 node; opening it on the phone prompts for permissions and starts streaming over WebSockets.

This repository is inspired by a project I did with students as a TA called phone-imu.

Build

source /opt/ros/humble/setup.bash

rosdep install -i --from-path src --rosdistro humble -y --ignore-src

colcon build --packages-up-to phone_sensors_bridge

# Or build for development, but remember to clean and rebuild every time the JS files are changed

# colcon build --symlink-install --packages-up-to phone_sensors_bridge_examples --event-handlers console_direct+

phone_sensors_bridge usage

Quickstart

Run the following in a different terminal than the one used for building, otherwise the server might start from inside the build folder which will not contain the template and static folders for the webpage.

source install/setup.bash

# Ubuntu IP

EXTRA_IP=$(ip route get 8.8.8.8 | grep -oP 'src \K[^ ]+')

echo "My IP is $EXTRA_IP"

# Generate SSL certificates for local webserver

ros2 run phone_sensors_bridge generate_dev_certificates.sh $EXTRA_IP

# Start server

ros2 run phone_sensors_bridge server --ros-args -p camera1_video_width:=720 -p camera1_video_height:=720

Open the webpage from your mobile device. The URL contains the server host IP where the node is running. It depends on your network, but most likely is https://<EXTRA_IP>:2000.

If you have multiple network interfaces, favour the fastest such as ethernet over wifi.

The page will prompt for permissions, then display the chosen camera

Published topics

| Topic | Type | Description |

|---|---|---|

time/device |

sensor_msgs/TimeReference |

Device system clock versus ROS time |

time/gnss |

sensor_msgs/TimeReference |

GNSS fix acquisition time versus device clock |

imu |

sensor_msgs/Imu |

Orientation (ENU quaternion), angular velocity and linear acceleration in the device frame |

gnss |

sensor_msgs/NavSatFix |

GPS fix: latitude, longitude, altitude with horizontal/vertical accuracy covariance |

gnss/odometry |

nav_msgs/Odometry |

GPS-derived velocity in the ENU frame; pose covariance is 1e9 (fuse twist only) |

camera1/image_raw/compressed |

sensor_msgs/CompressedImage |

Camera 1 JPEG stream |

camera2/image_raw/compressed |

sensor_msgs/CompressedImage |

Camera 2 JPEG stream |

camera1/camera_info |

sensor_msgs/CameraInfo |

Camera 1 calibration (only published when camera1_calibration_file is set) |

camera2/camera_info |

sensor_msgs/CameraInfo |

Camera 2 calibration (only published when camera2_calibration_file is set) |

usb/rx |

std_msgs/UInt8MultiArray |

Raw bytes received from the USB device (only active when usb_enabled is True) |

Subscribed topics

| Topic | Type | Description |

|---|---|---|

usb/tx |

std_msgs/UInt8MultiArray |

Raw bytes to forward to the USB device (only active when usb_enabled is True) |

Configuration

See the server configuration guide

Results

The current server is tested with Firefox and Chrome on Android.

Latency

The webpage includes a “Measure latency” button that runs 100 sequential WebSocket ping-pong round trips and reports one-way latency and clock offset using the NTP formula:

RTT = t2_client - t1_client

latency = RTT / 2

clock_offset = t_server - (t1_client + t2_client) / 2

Note: browser timestamps use the Performance API for sub-millisecond resolution, but precision is still reduced by browsers (100 µs floor in Firefox, up to 100 ms with fingerprinting resistance).

Example measurement over a local WiFi network when running two video feeds, IMU and GNSS:

Latency (N=100): mean=5.19 ms, min=1.50 ms, max=12.50 ms | Clock offset (ROS-client): mean=-293.10 ms, min=-300.00 ms, max=-285.00 ms

A negative clock offset means the ROS clock is behind the client (phone) clock, in this case by ~293 ms. A positive offset would mean the ROS clock is ahead. This offset is stable across samples (spread of ~15 ms), which indicates a consistent skew rather than jitter. If the parameter time_reference_frequency is strictly positive, then the time/device TimeReference topic reports the clock difference including network latency.


PlotJuggler shows the same clock offset, expressed in seconds, in this case measured with only time_reference_frequency:=100.0 and no video. Here the offset is the clock difference plus the network latency.
