Repository Summary

| Field | Value |
|---|---|
| Checkout URI | https://github.com/ros-naoqi/pepper_moveit_config.git |
| VCS Type | git |
| VCS Version | master |
| Last Updated | 2017-01-18 |
| Dev Status | MAINTAINED |
| Released | RELEASED |
| Contributing | Help Wanted (-), Good First Issues (-), Pull Requests to Review (-) |

Packages

| Name | Version |
|---|---|
| pepper_moveit_config | 0.0.8 |

README

pepper_moveit_config
====================

This is a MoveIt! config package generated by the MoveIt! wizard. It requires a Pepper model, which you can get from https://github.com/ros-naoqi/pepper_robot/tree/master/pepper_description or from the binary package ros-indigo-pepper-description.

The moveit package must be run on a remote computer and not directly on your robot.

1 Compile the package

The pepper_moveit_config package doesn't need any compilation, so running rospack profile should be enough.

You're then ready to play with your Pepper.

2 Run MoveIt

Without a robot
---------------

You can run this MoveIt package either standalone, without any robot, or attached to a robot (real or simulated). For standalone execution:

roslaunch pepper_moveit_config demo.launch

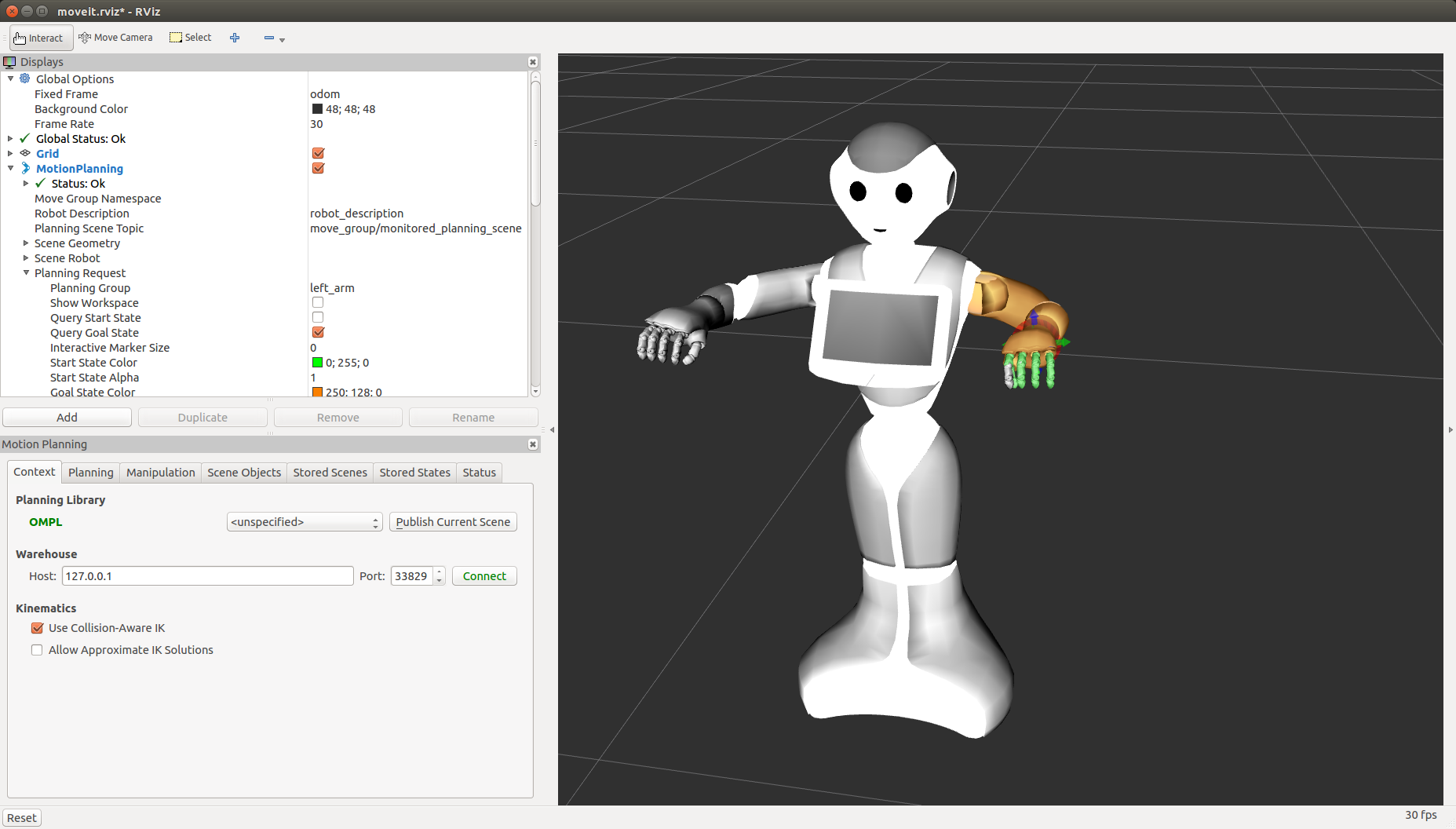

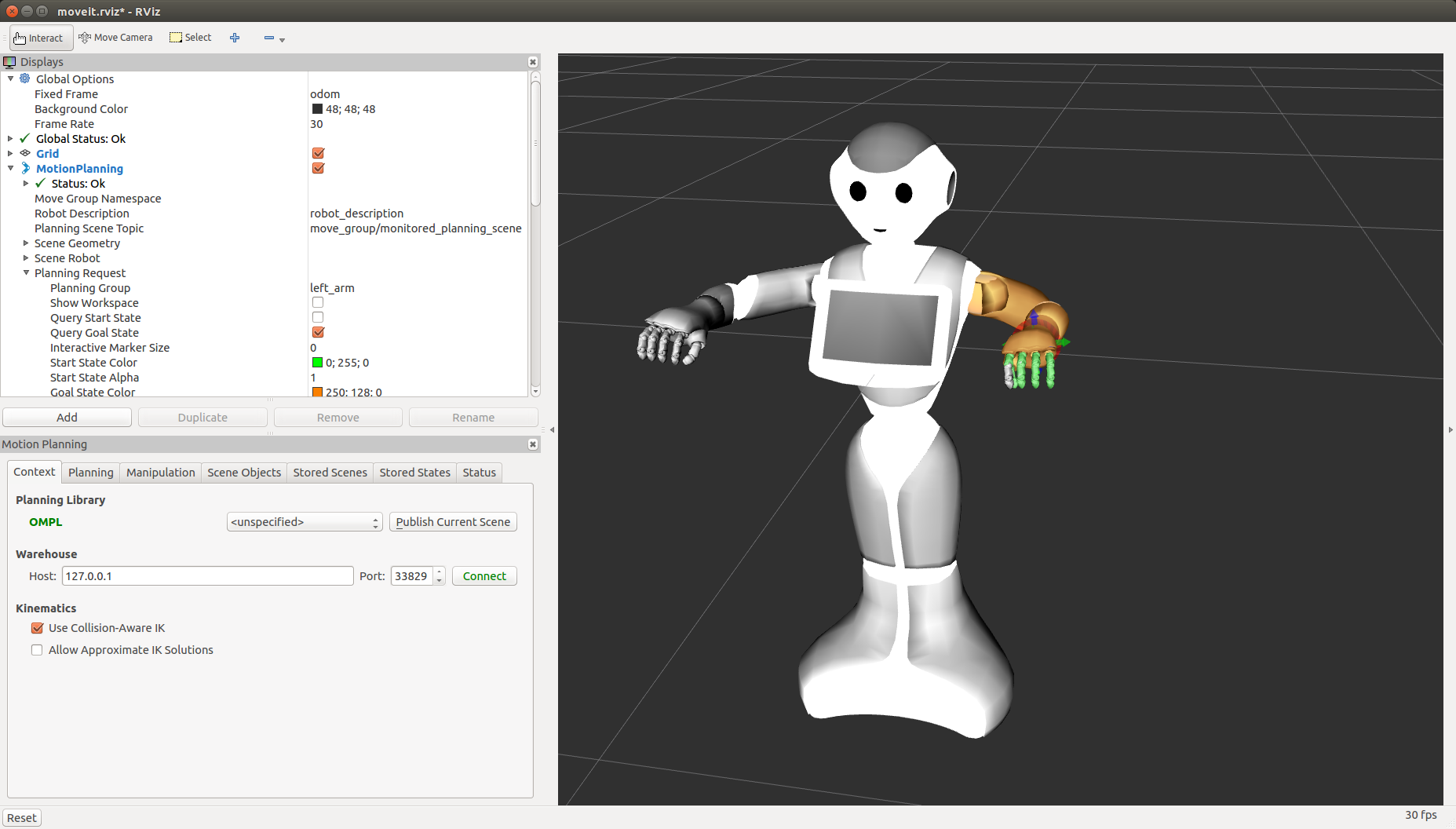

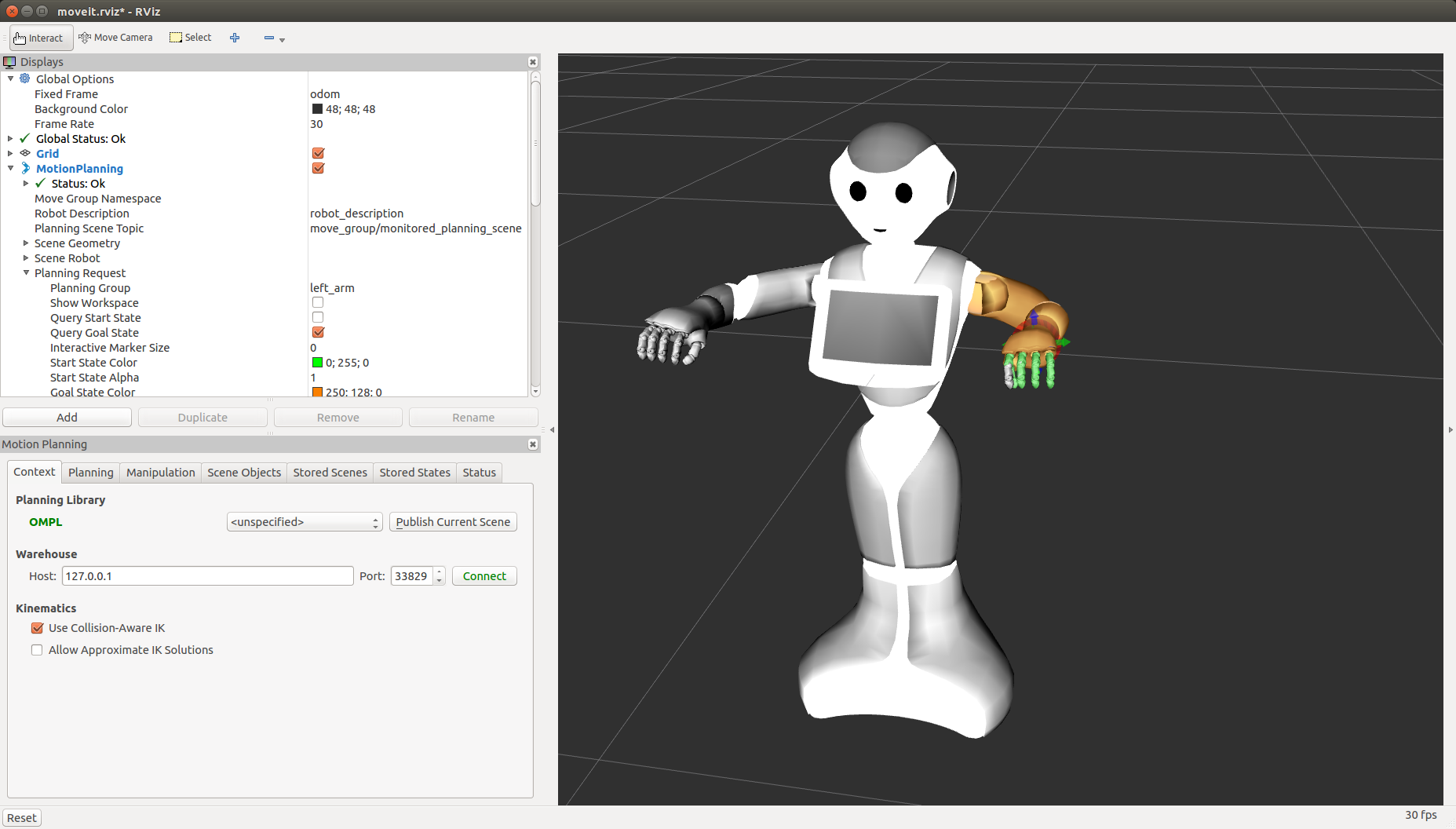

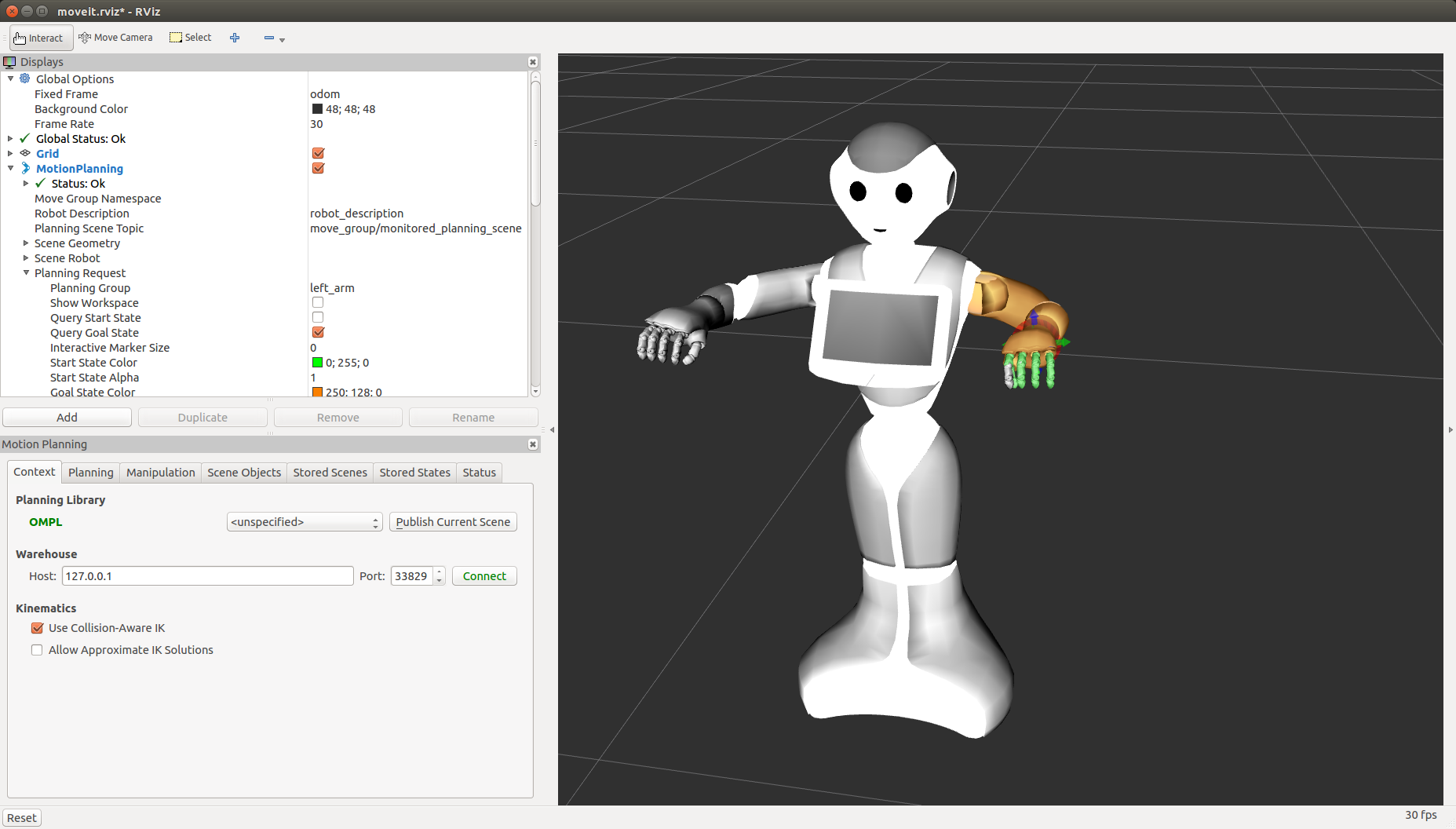

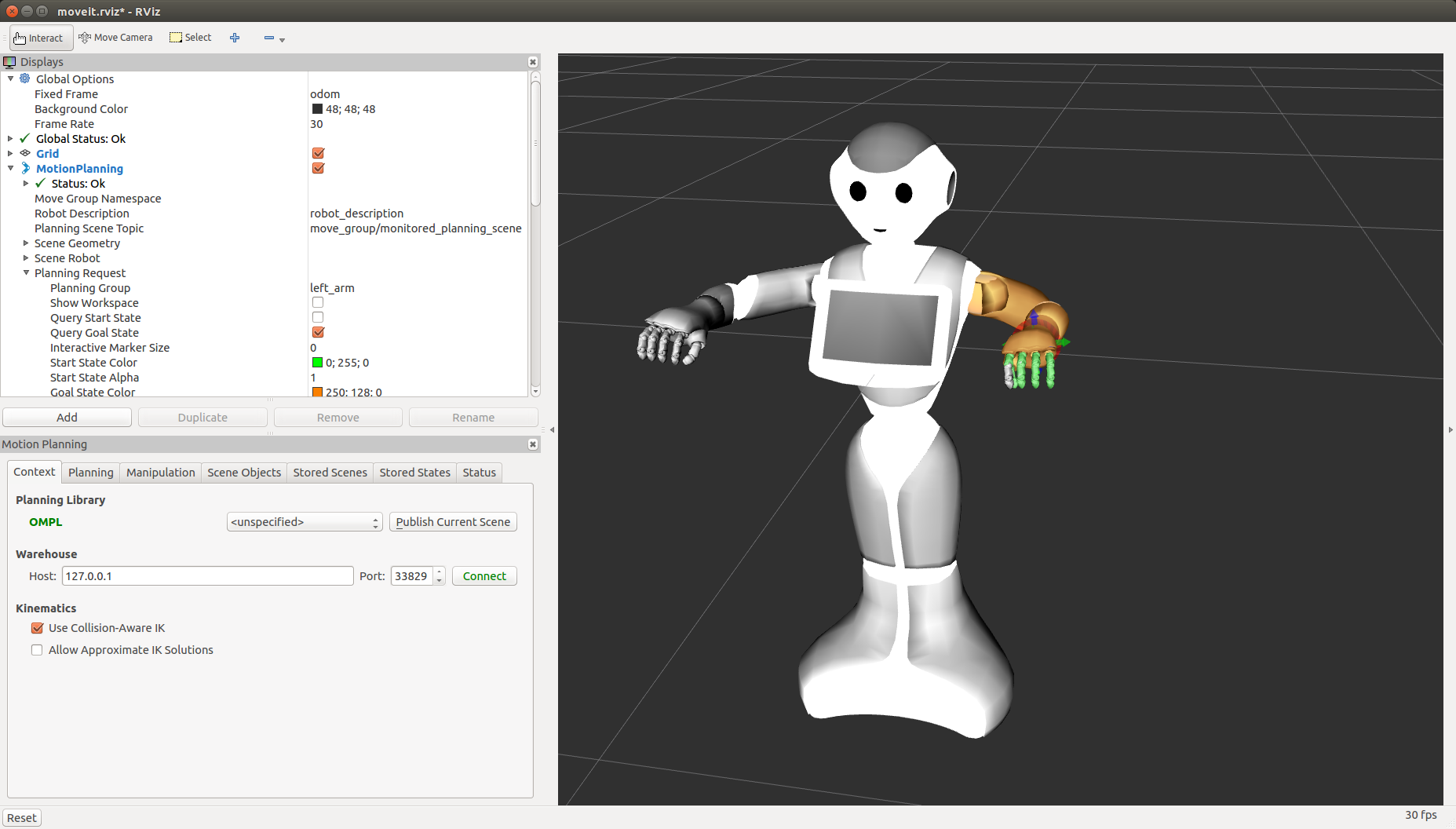

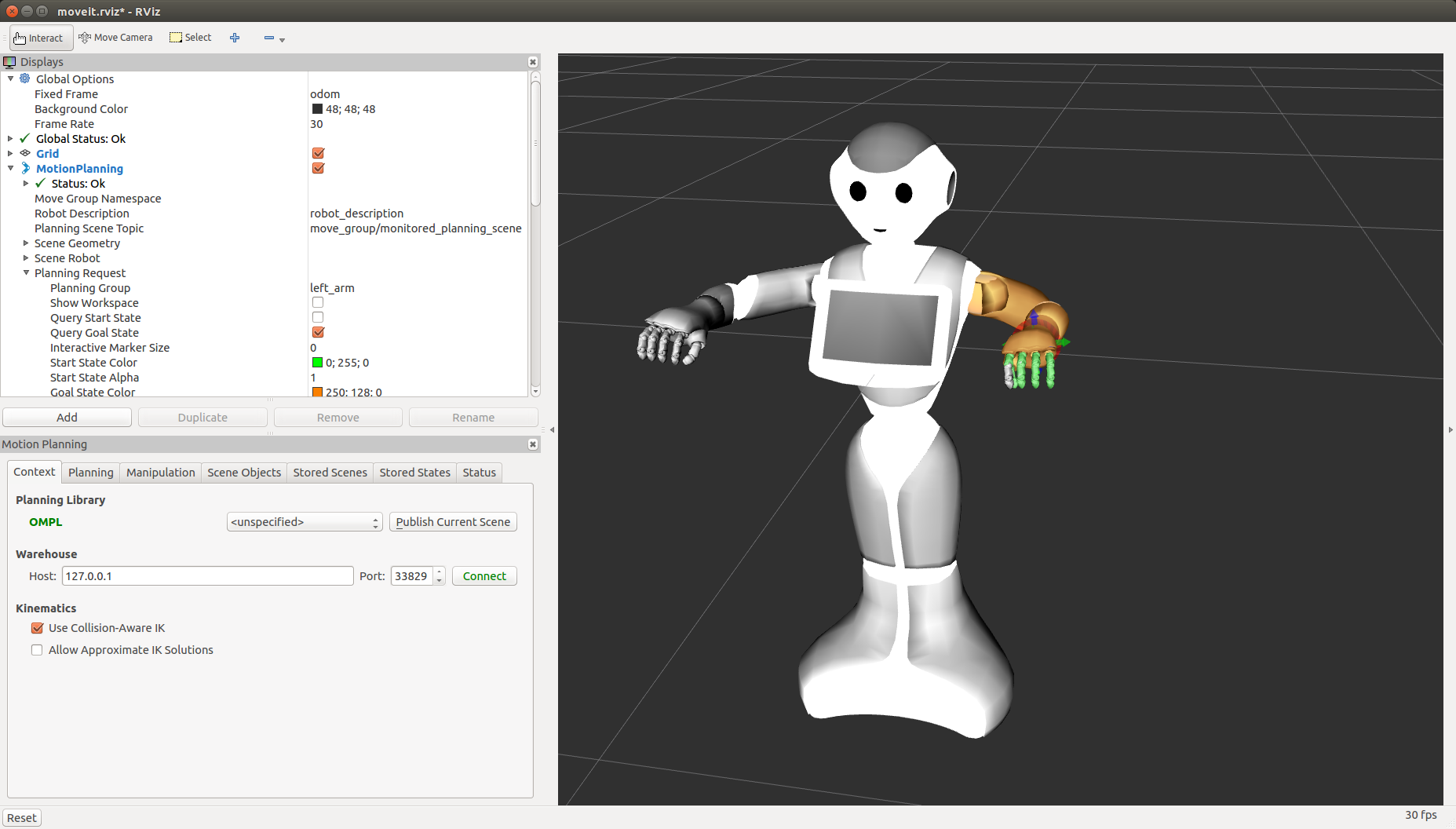

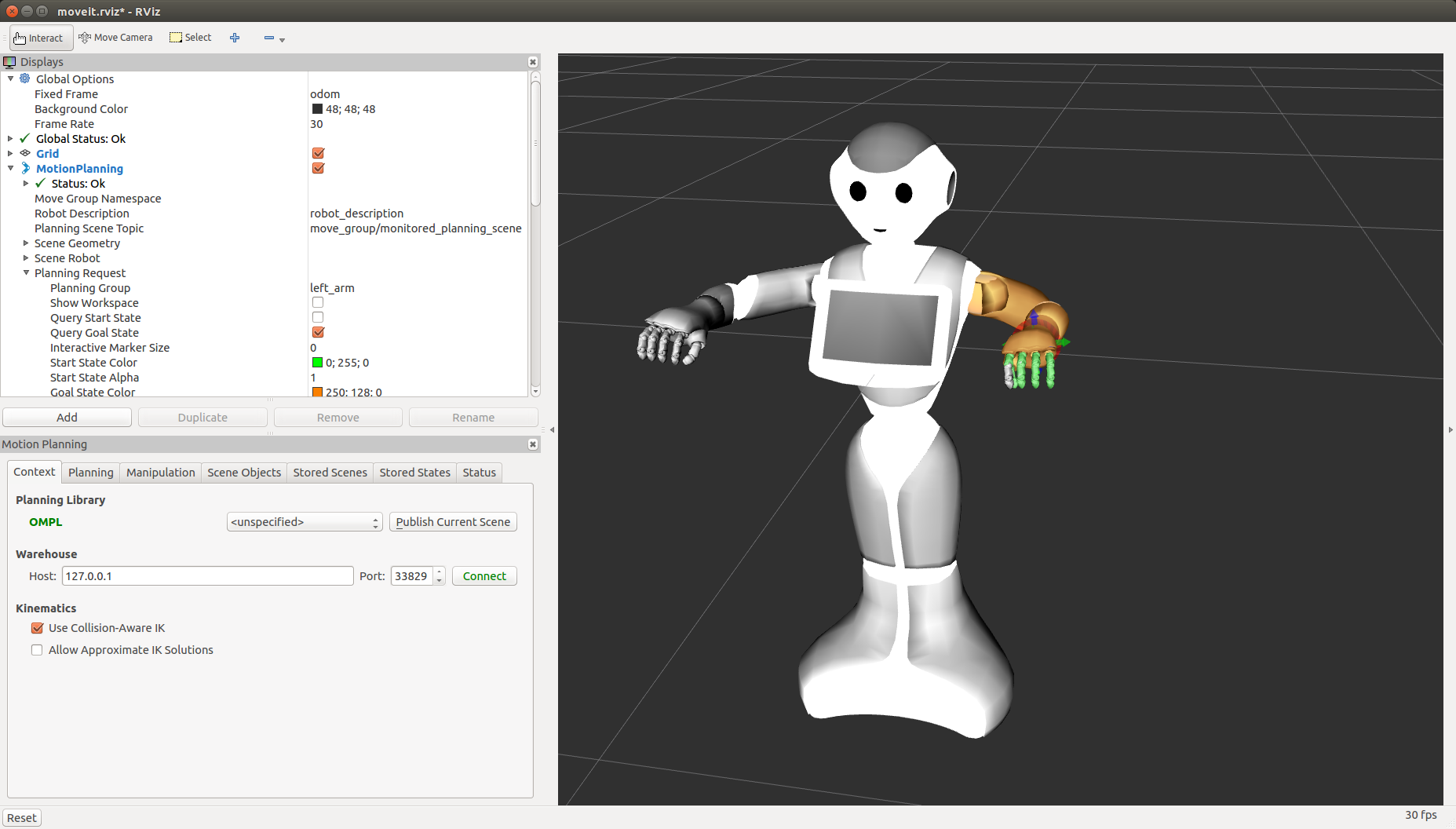

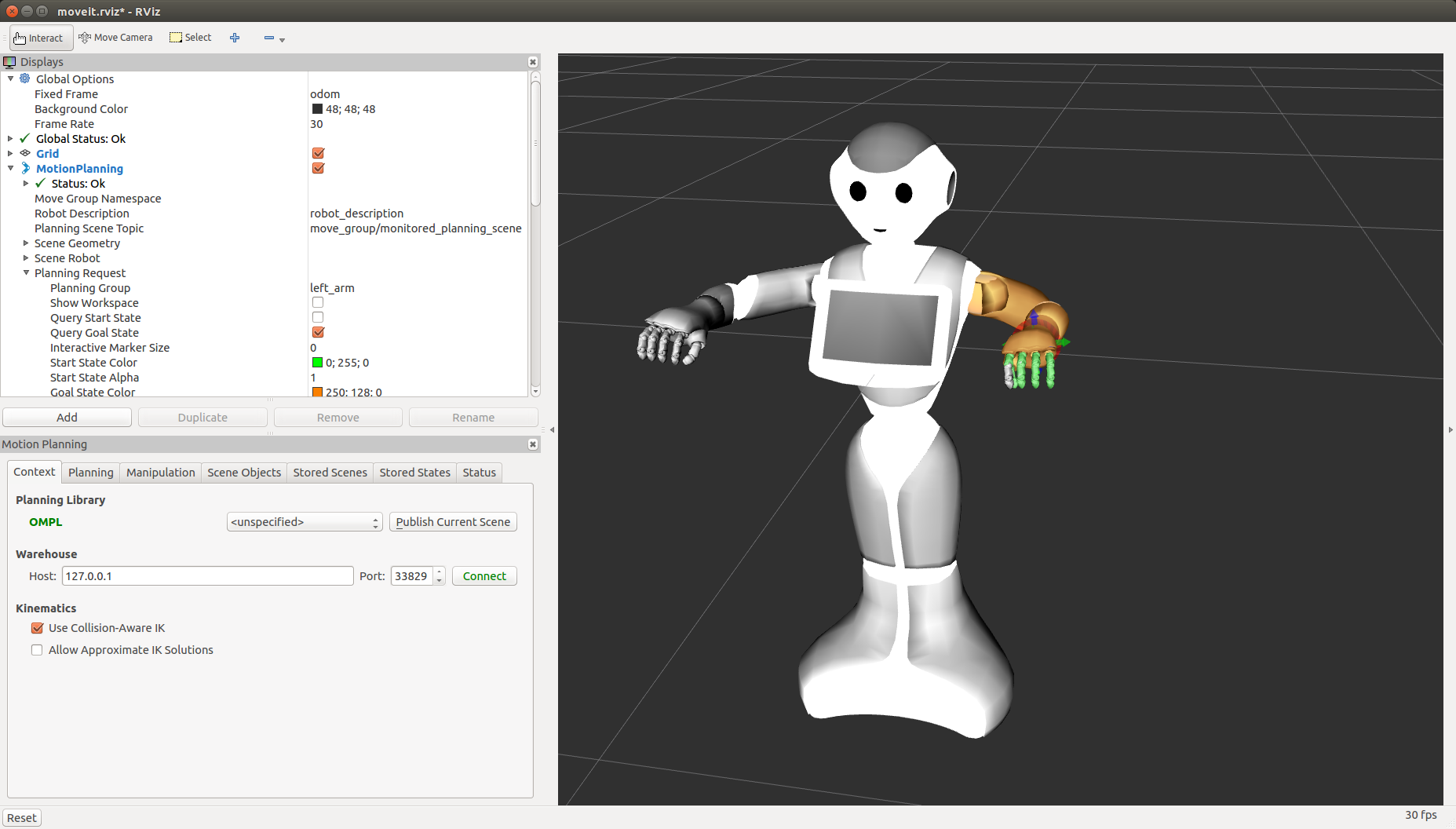

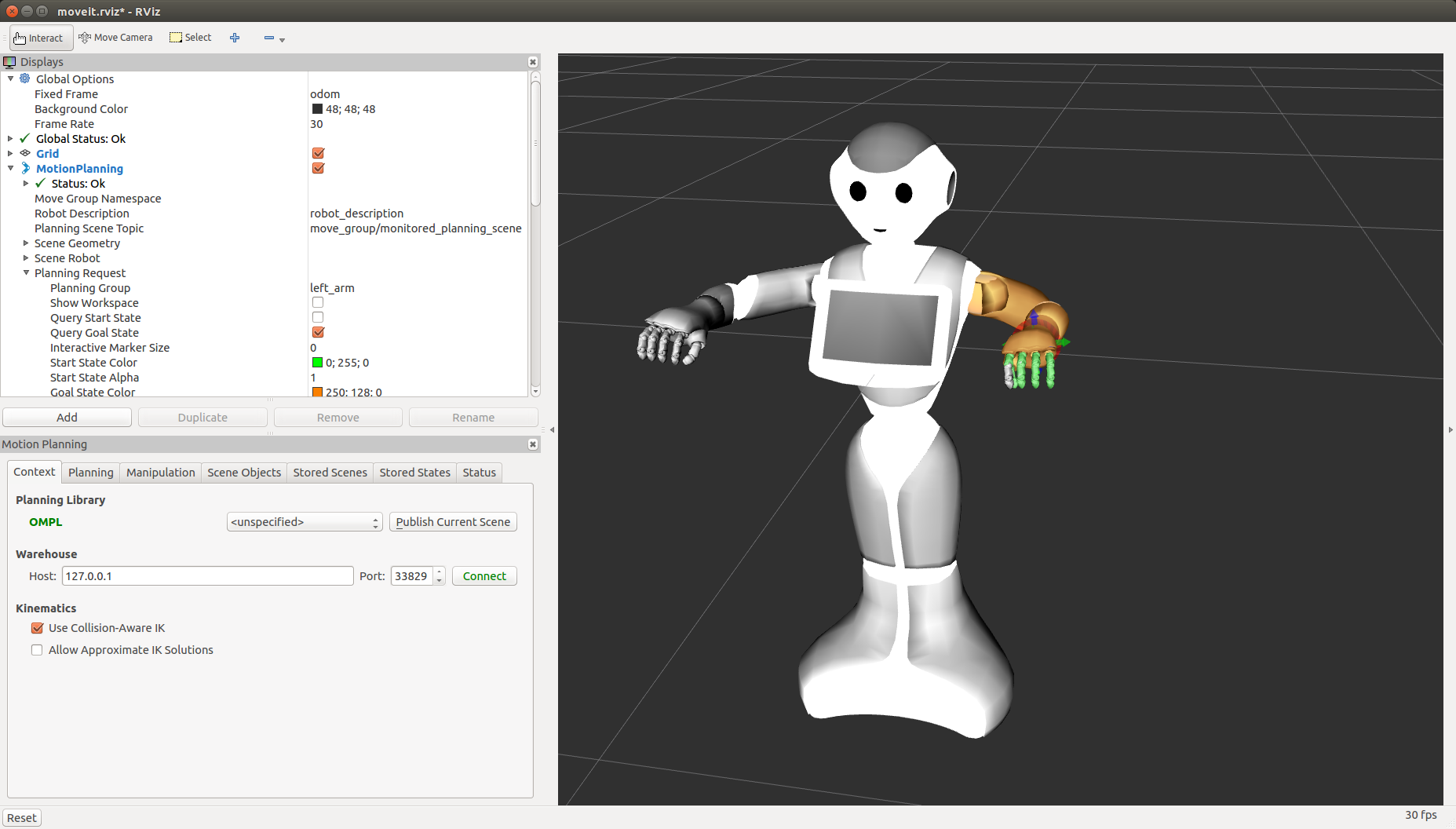

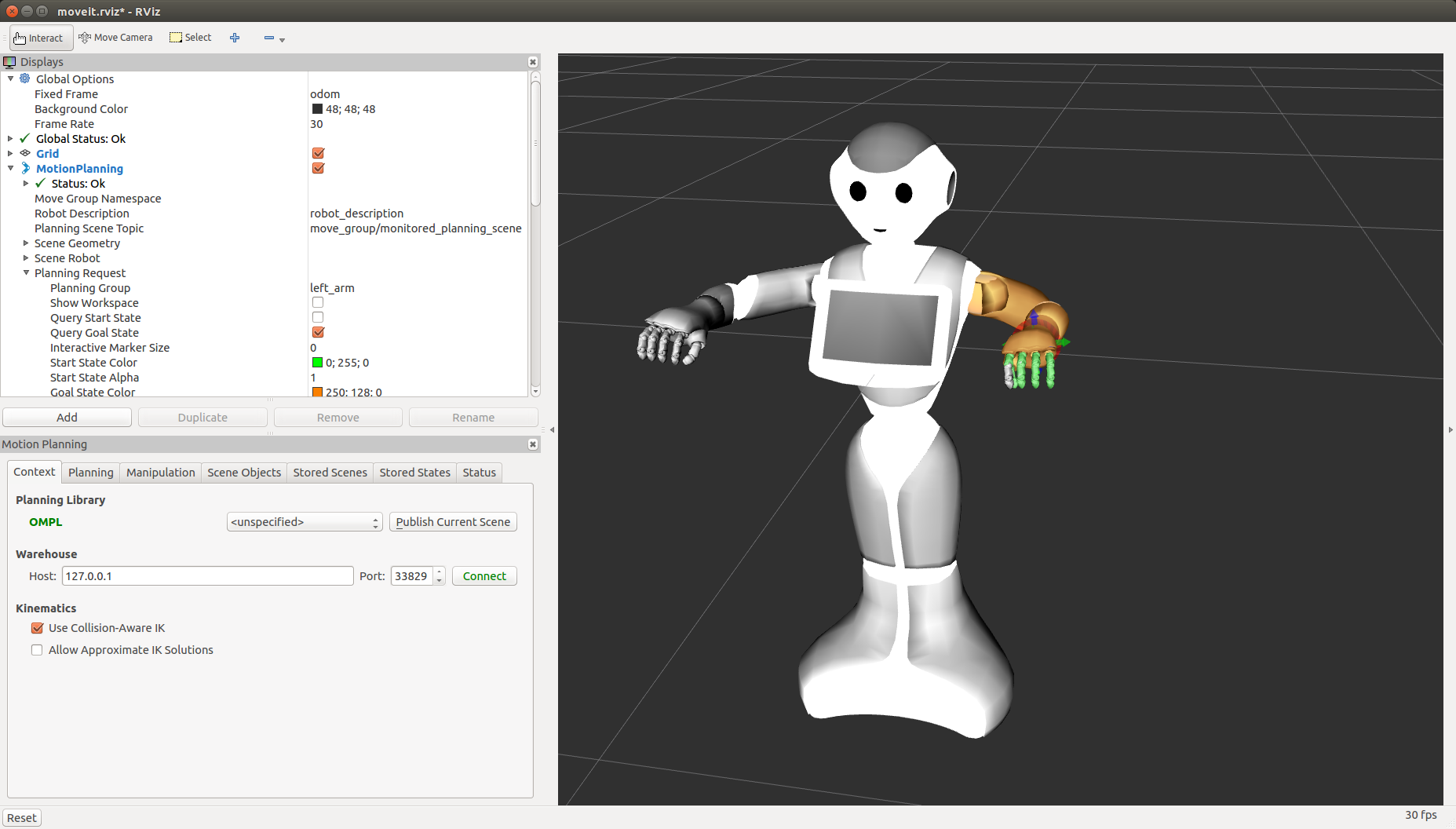

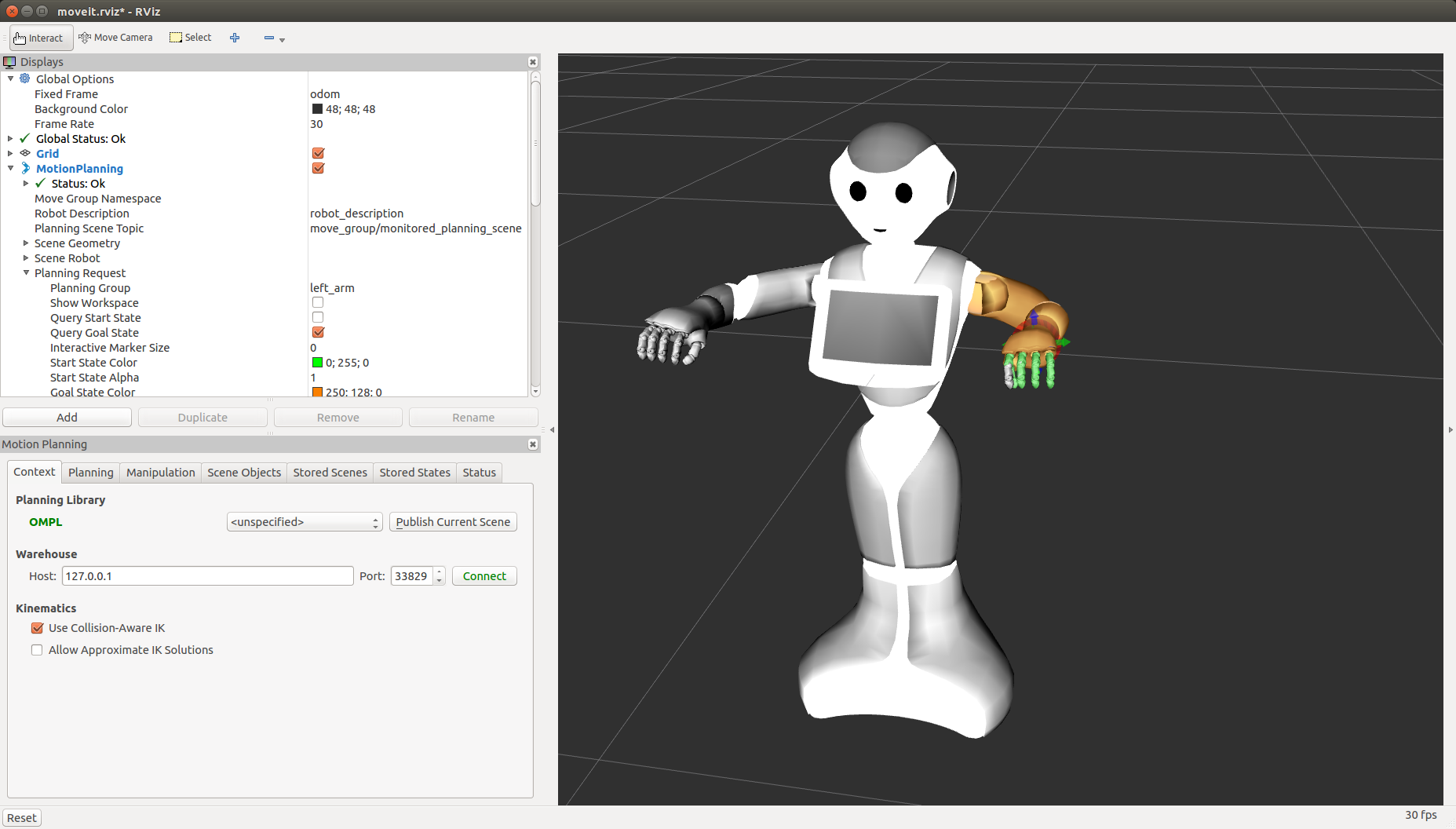

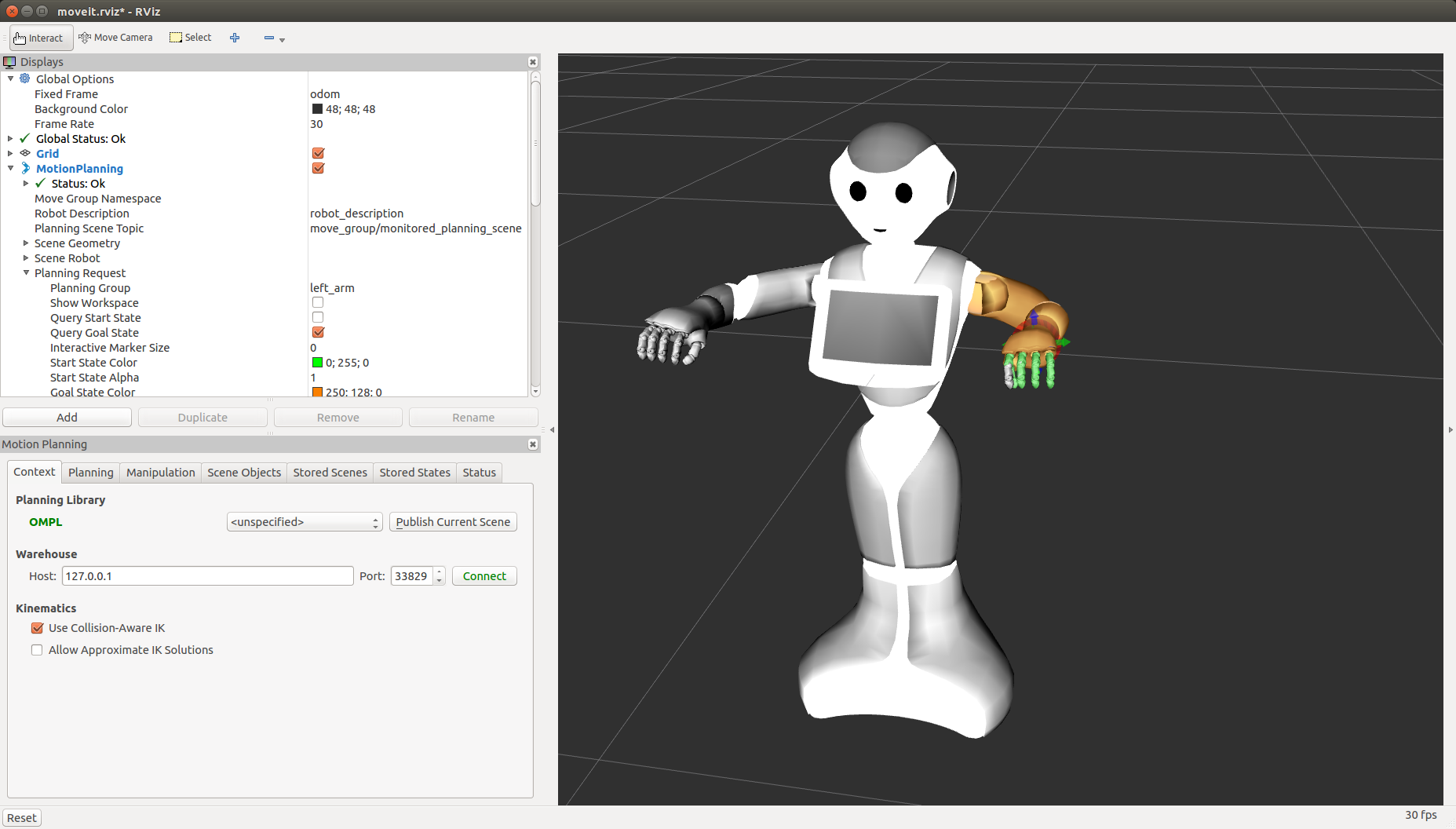

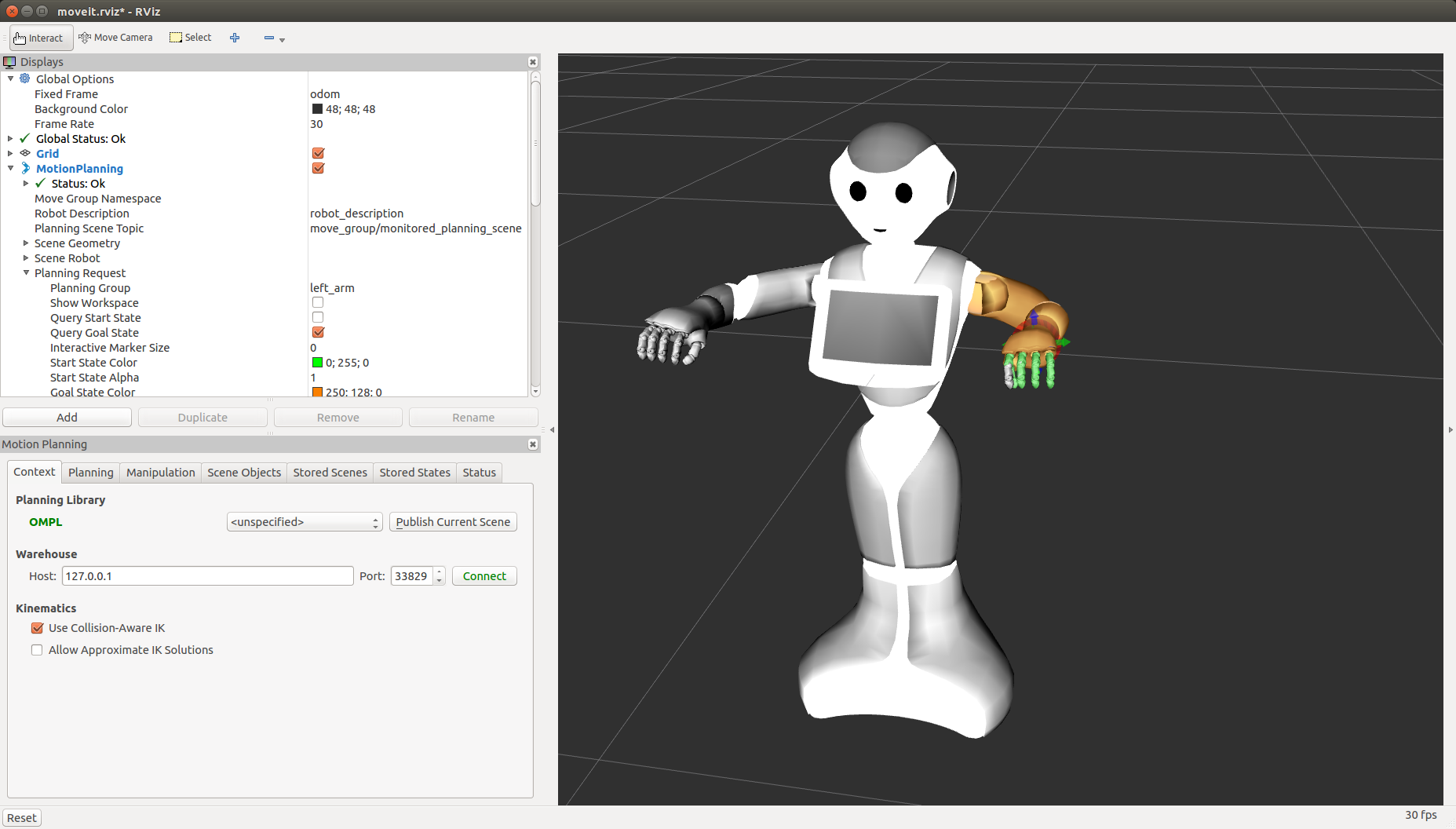

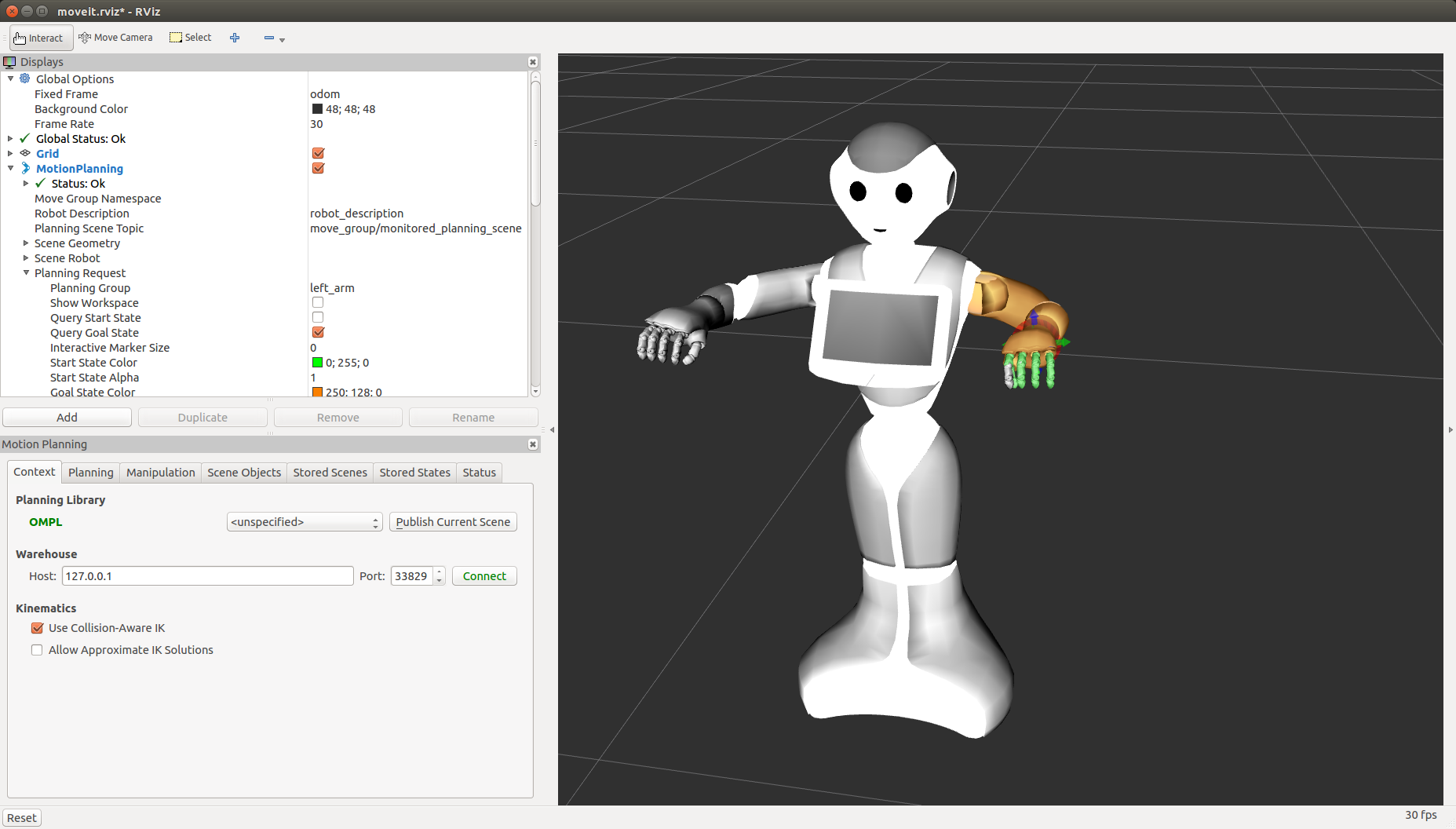

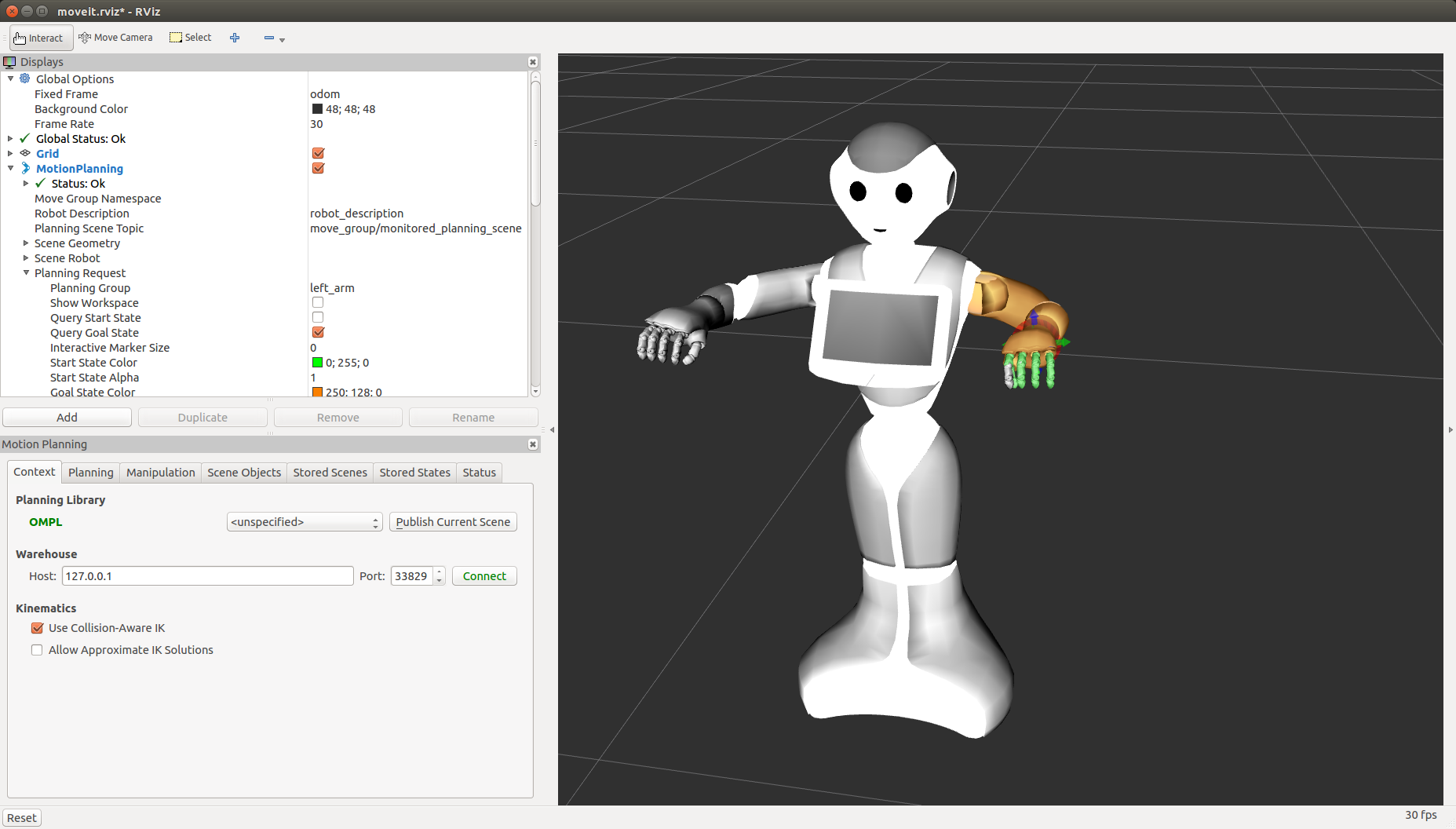

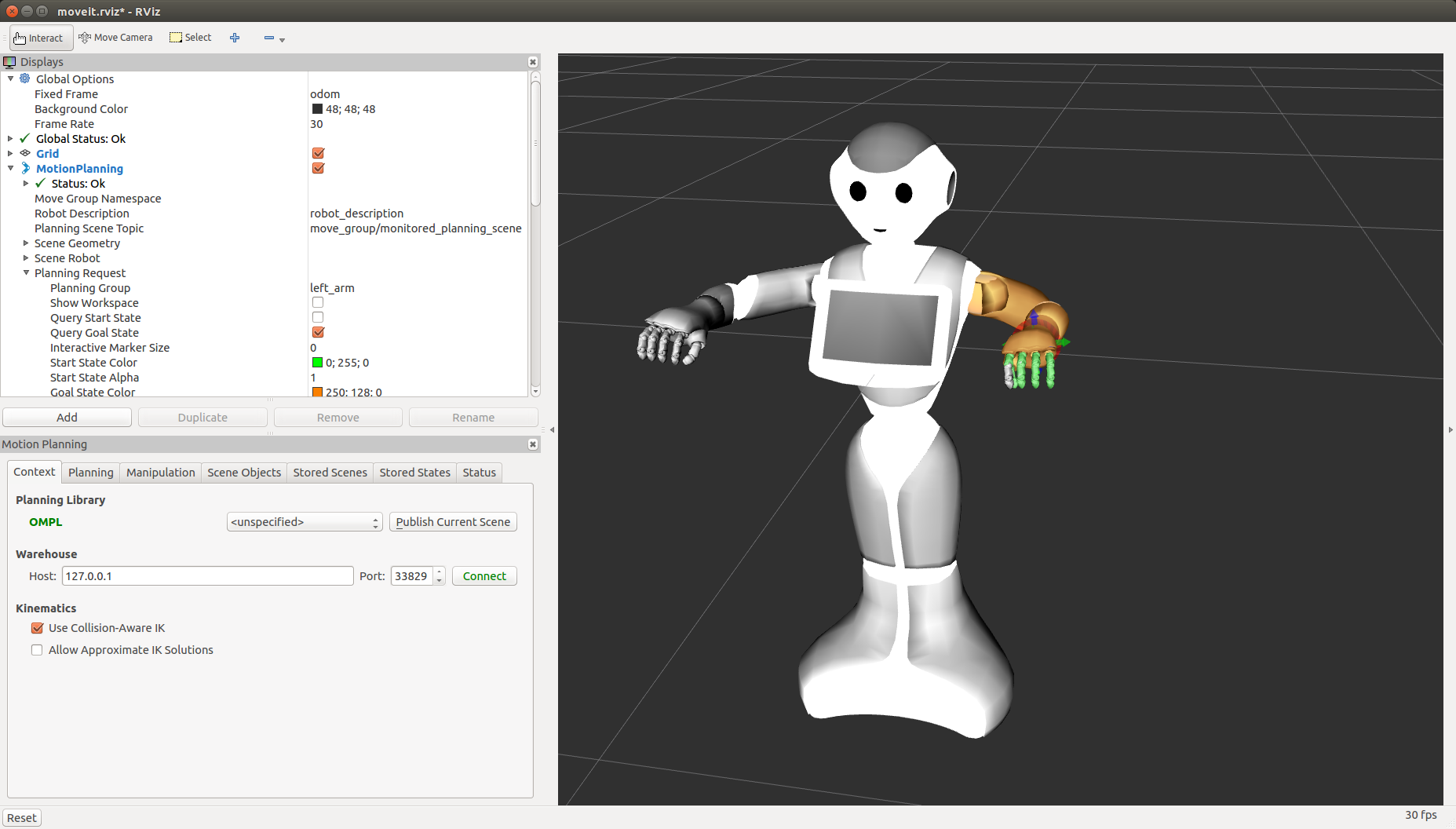

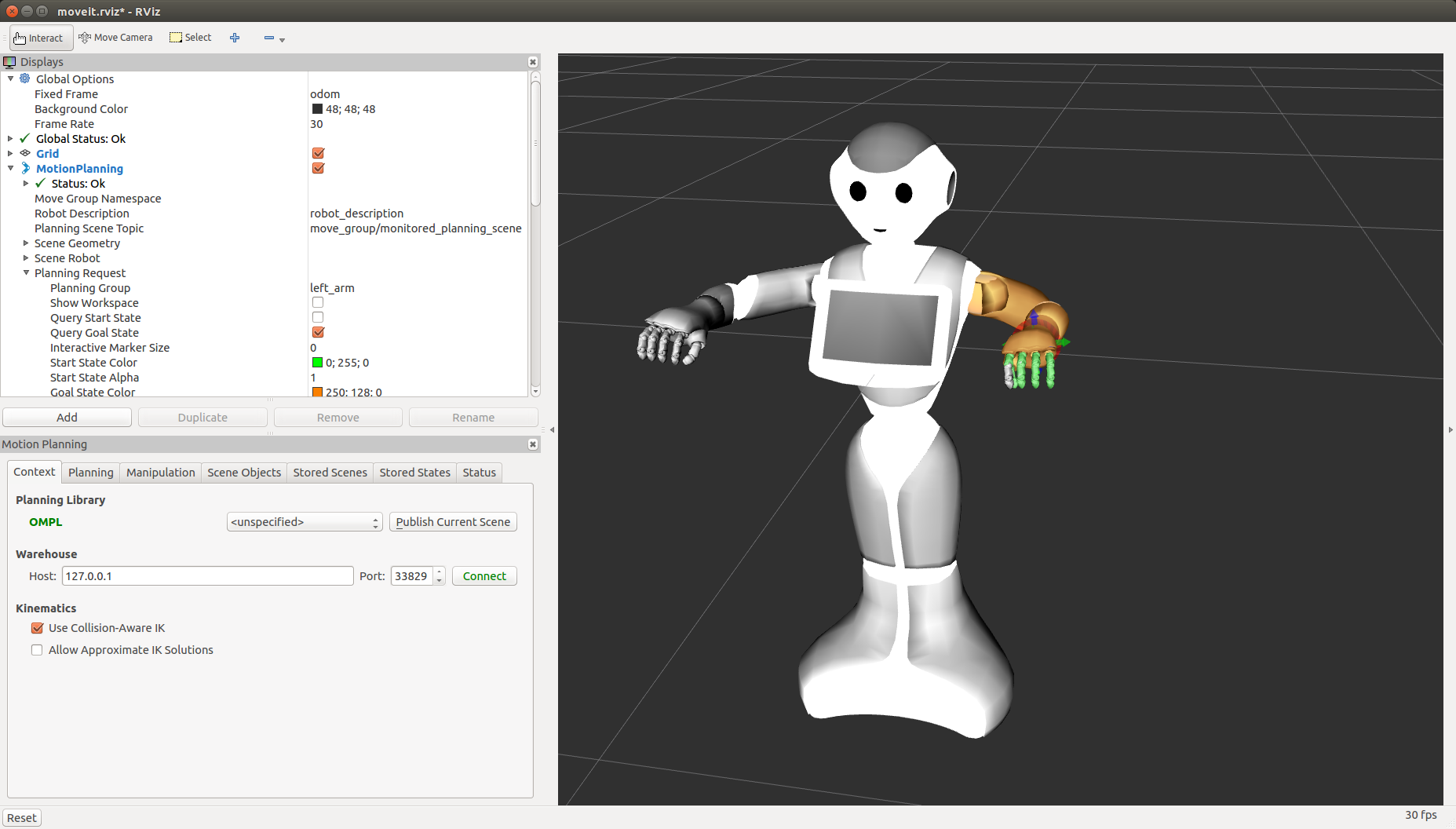

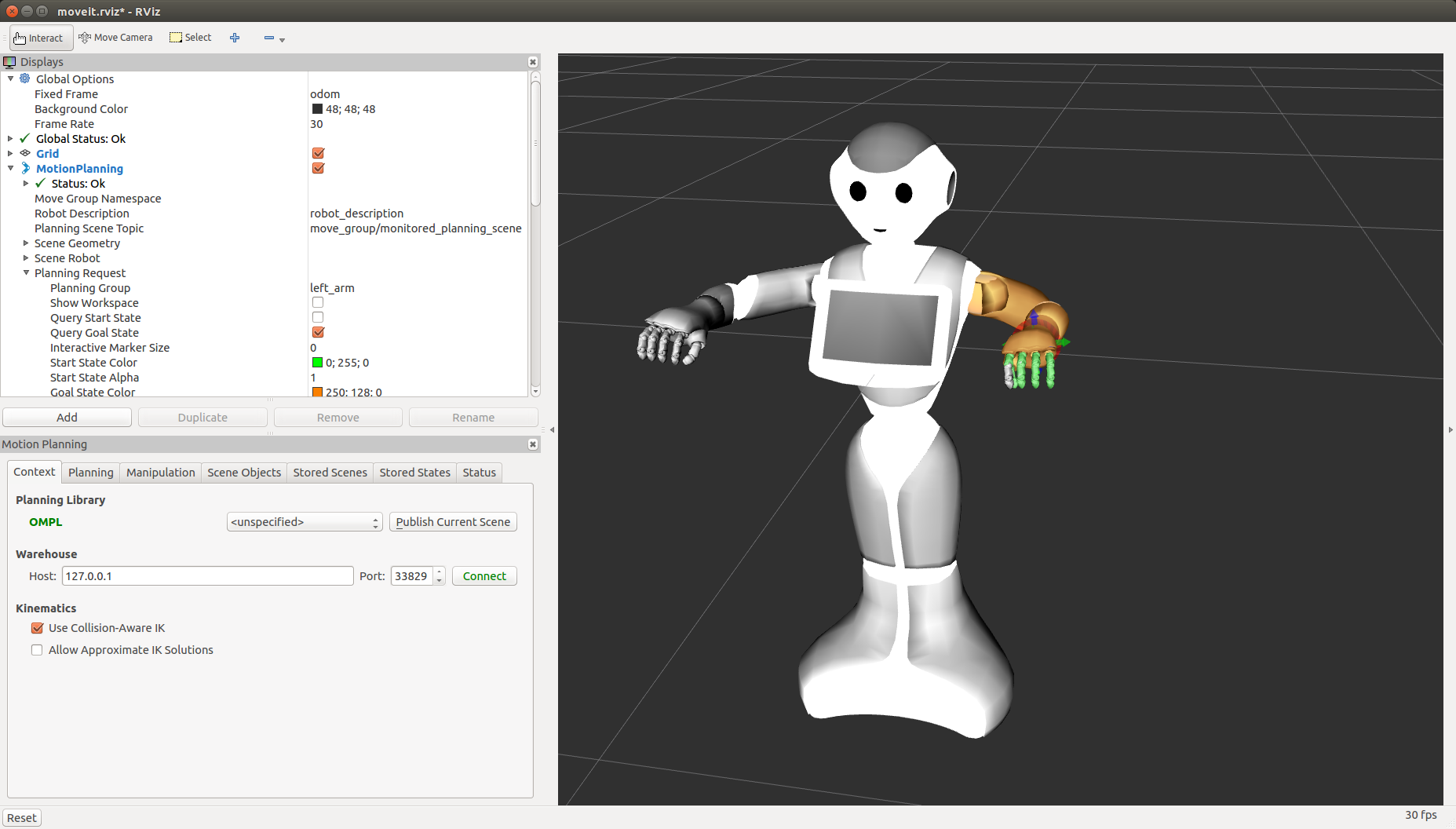

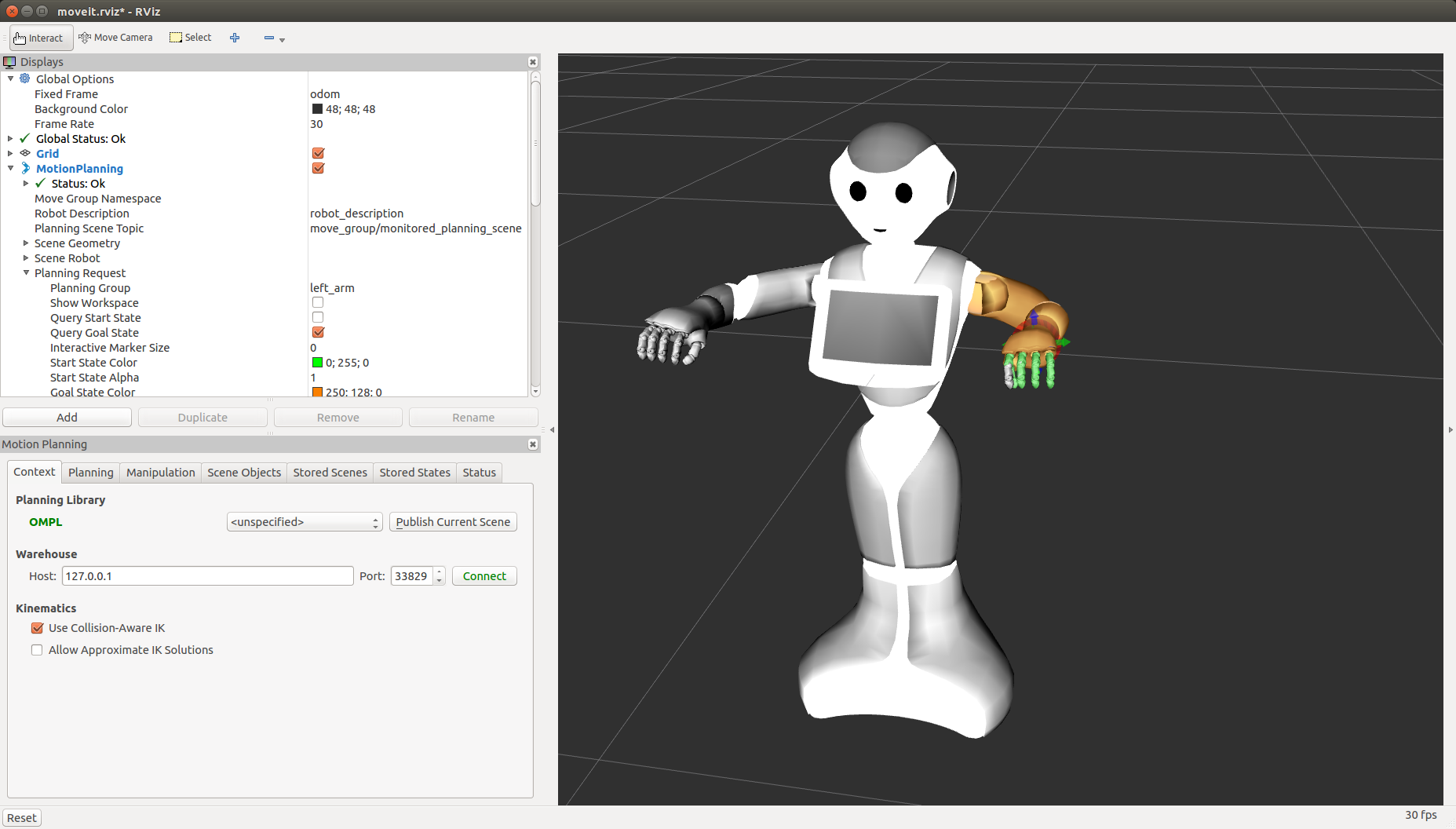

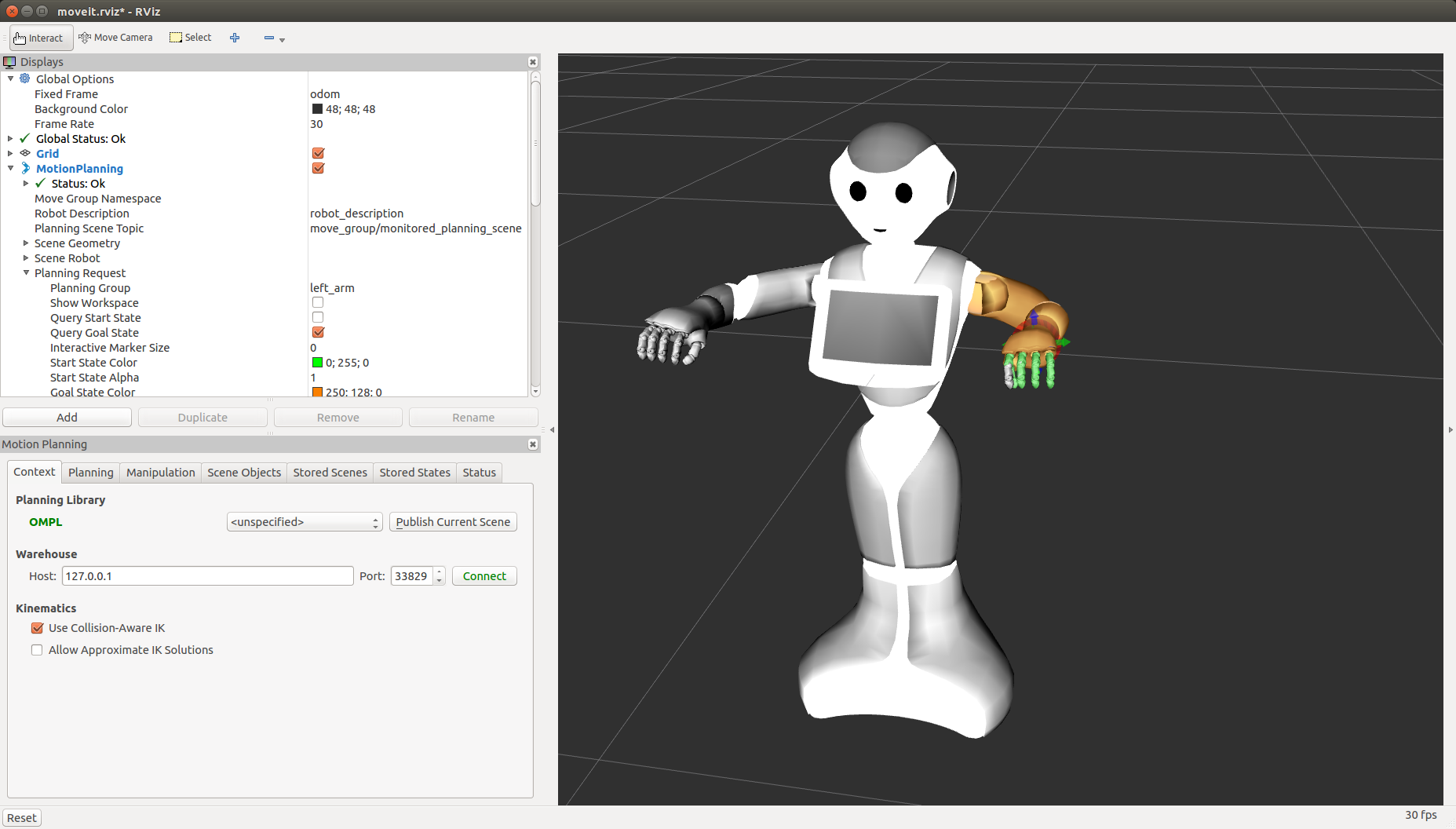

After launching the demo, you should see the robot displayed in the MoveIt! GUI.

Check the box Allow Approximate IK Solutions (and optionally, choose the Planning library), and you are ready to control the robot.

On a real robot

To use MoveIt! on a real robot, you need to instantiate ROS controllers on the robot. To do so:

First, set the NAO_IP environment variable to your robot's IP address:

export NAO_IP=<YOUR_ROBOT_IP>

Launch the DCM bringup:

roslaunch pepper_dcm_bringup pepper_bringup.launch

Wait until it is ready, then run:

roslaunch pepper_moveit_config moveit_planner.launch
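Because the bringup depends on NAO_IP, a launch script can check the variable before starting anything. The following is a minimal sketch, not part of pepper_moveit_config: the function name `check_nao_ip` and its messages are hypothetical, and it assumes a POSIX shell.

```shell
#!/bin/sh
# Hypothetical helper (not shipped with pepper_moveit_config):
# fail early when NAO_IP is unset or empty, otherwise report the target.
check_nao_ip() {
    if [ -z "${NAO_IP:-}" ]; then
        echo "NAO_IP is not set; export it before launching the bringup" >&2
        return 1
    fi
    echo "Using robot at ${NAO_IP}"
}
```

In a script, this could gate the first step, for example `check_nao_ip && roslaunch pepper_dcm_bringup pepper_bringup.launch`, so that the bringup is not started without a robot address.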

Using visual data from the robot's cameras

Visual data from the robot's cameras can be integrated into MoveIt, which is useful for obstacle detection. There are several ways to acquire visual data:

- using naoqi_driver

roslaunch naoqi_driver naoqi_driver.launch

- using pepper_sensors_py (get data from a single camera)

roslaunch pepper_sensors_py camera.launch

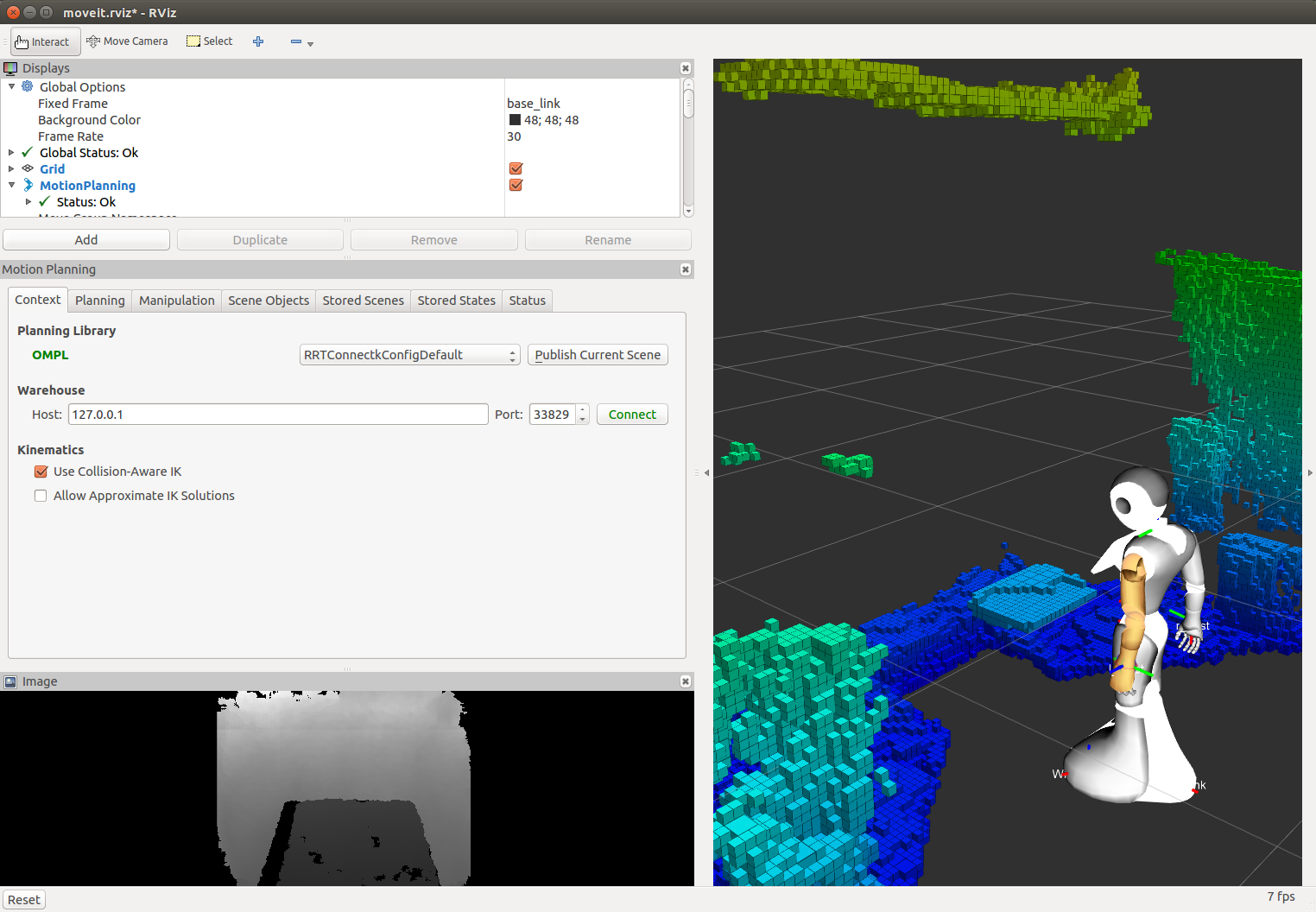

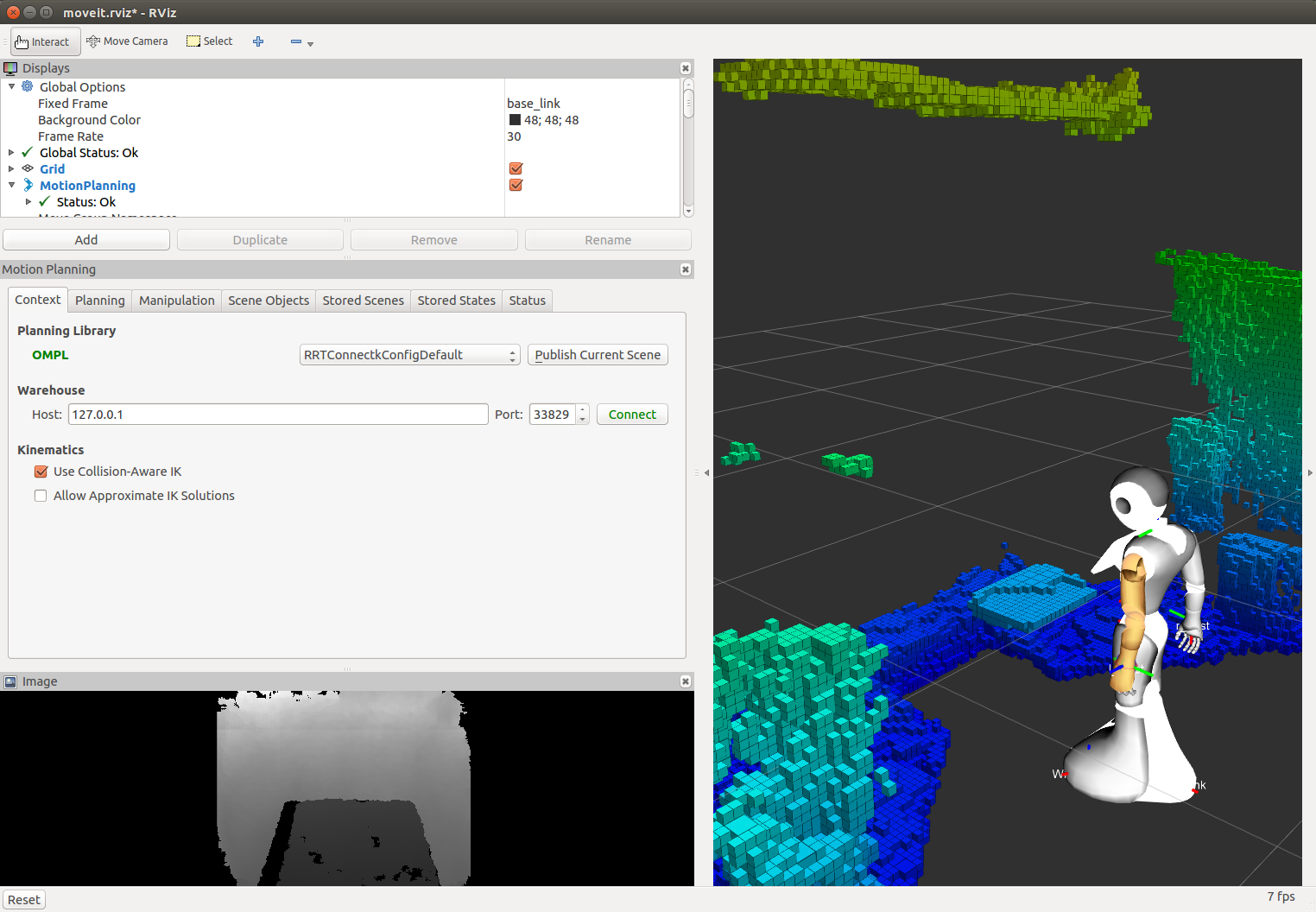

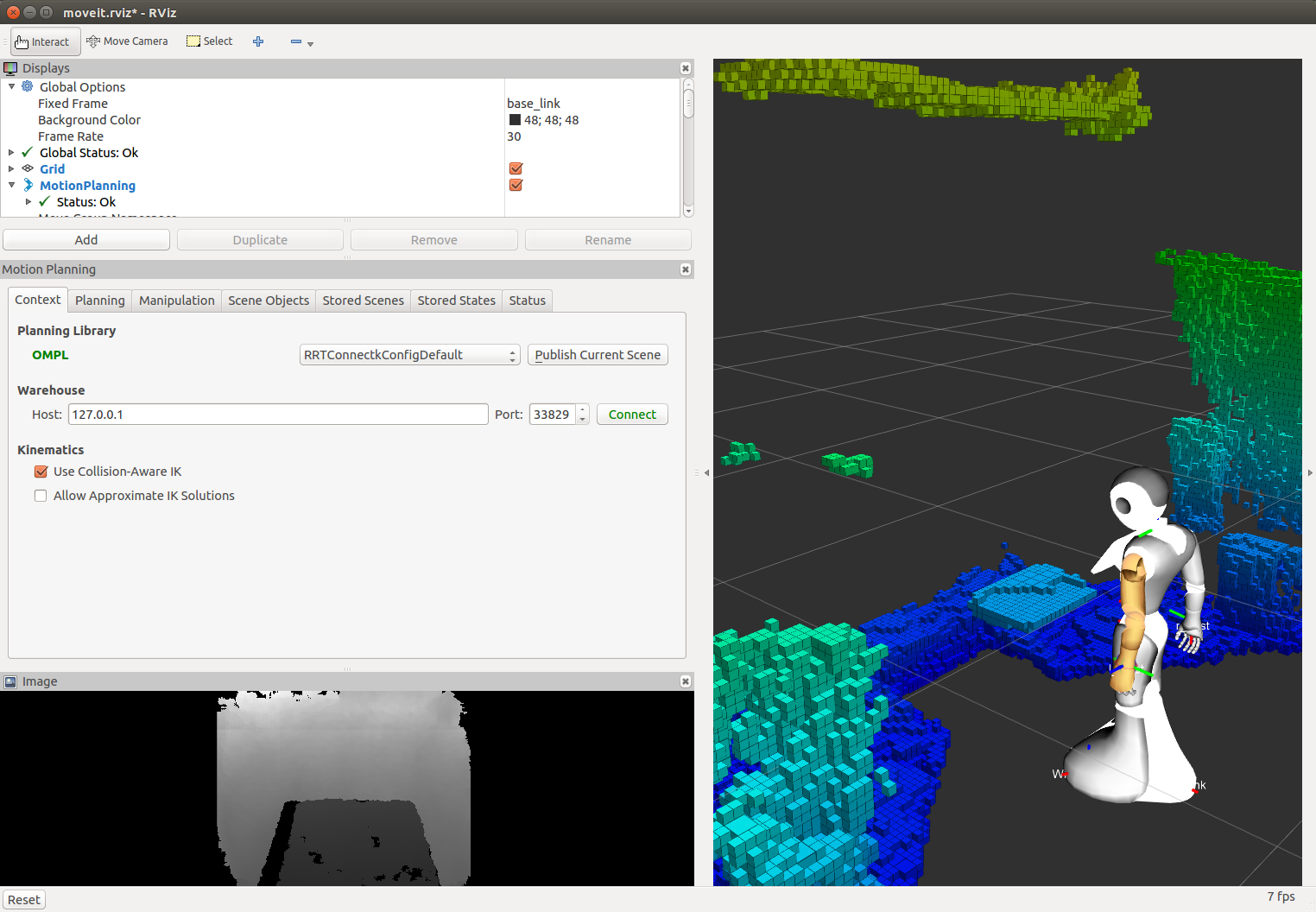

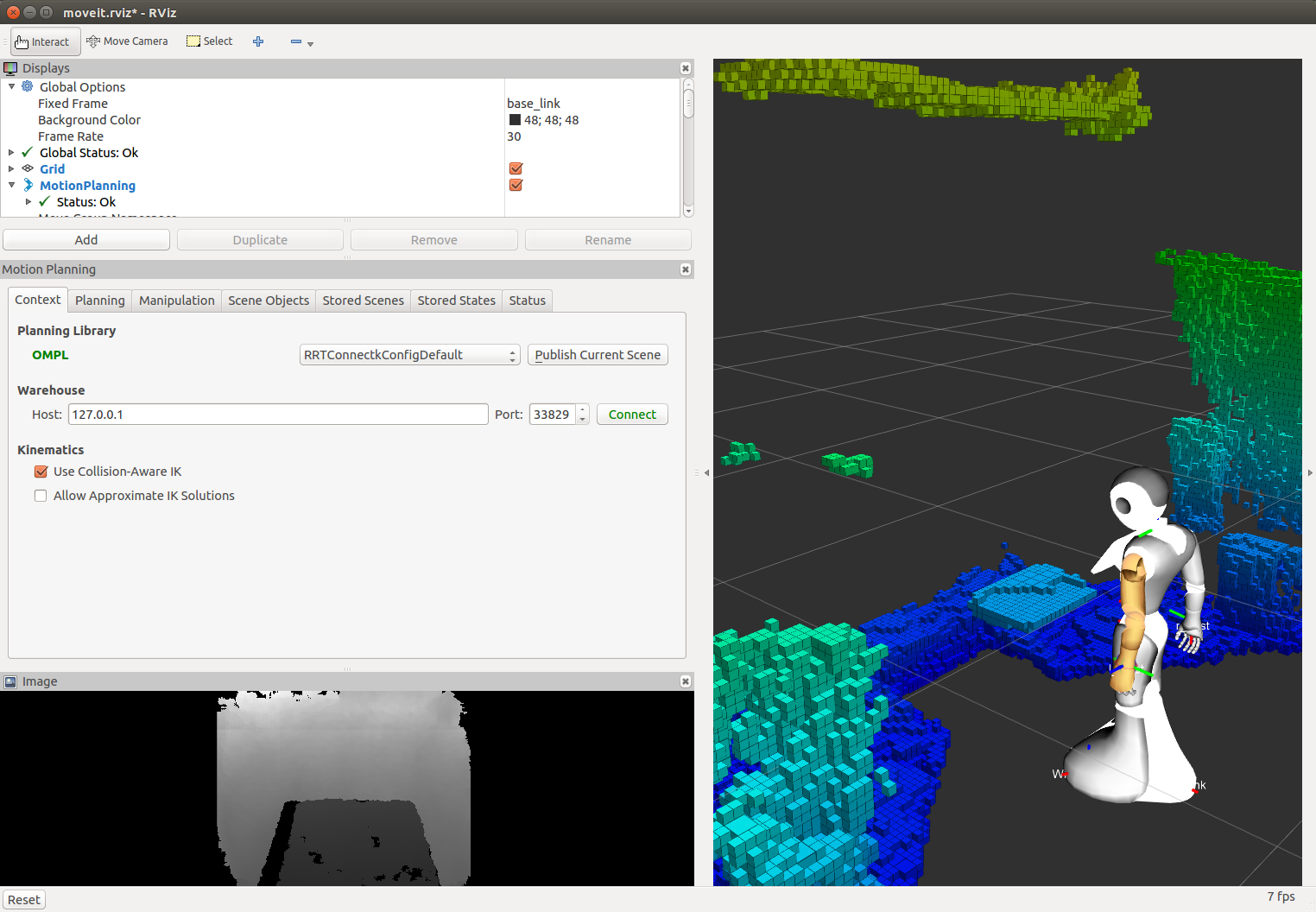

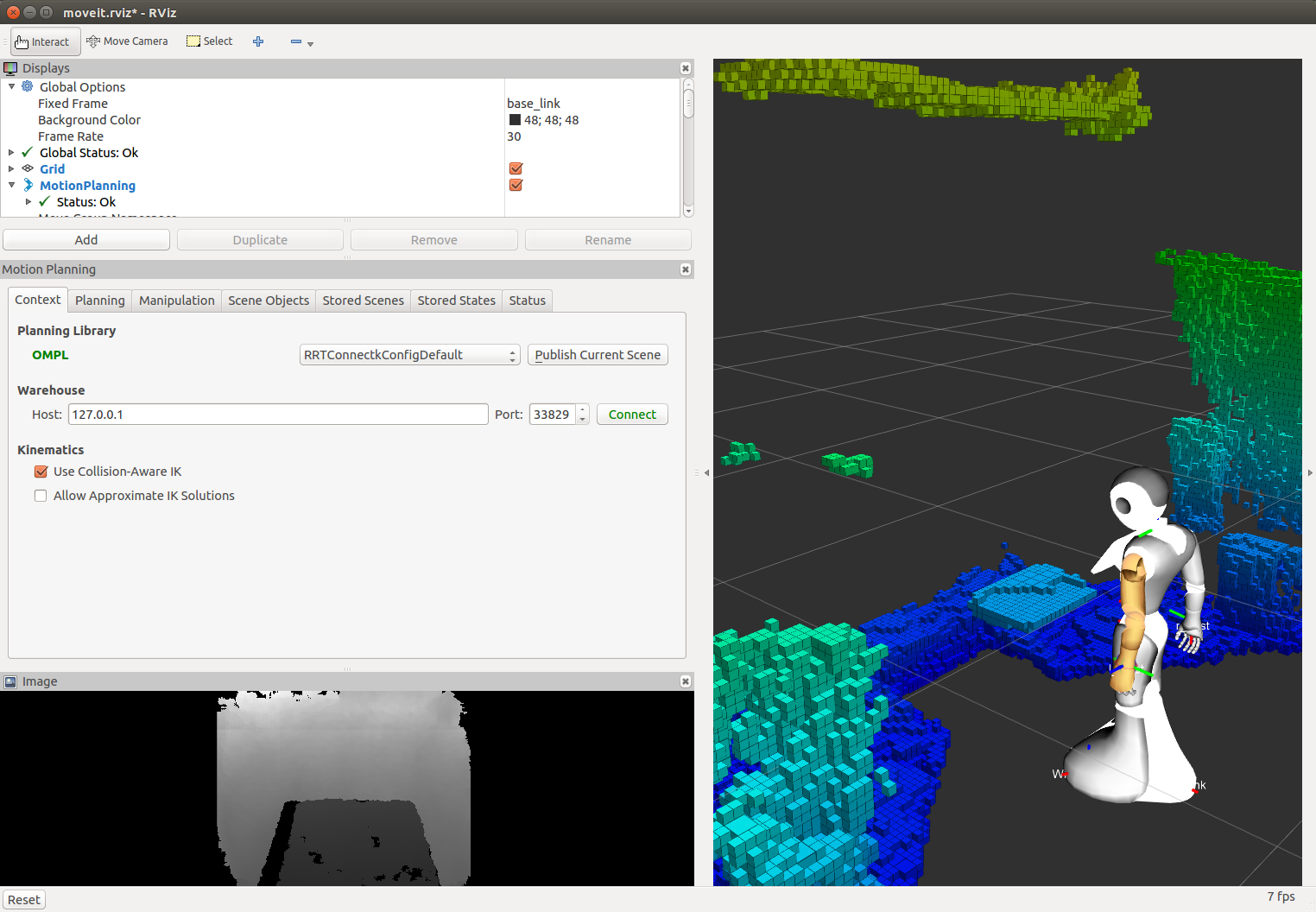

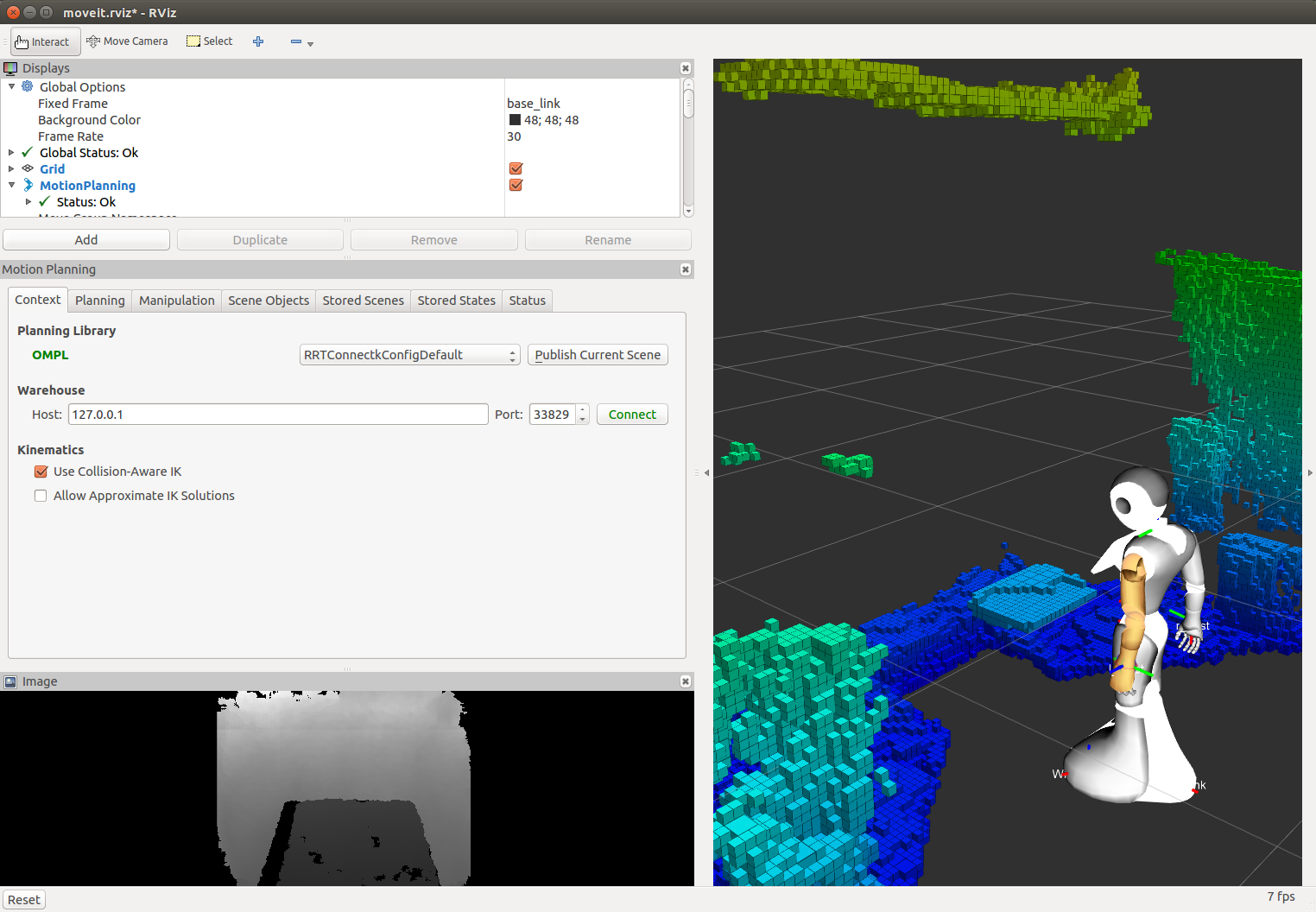

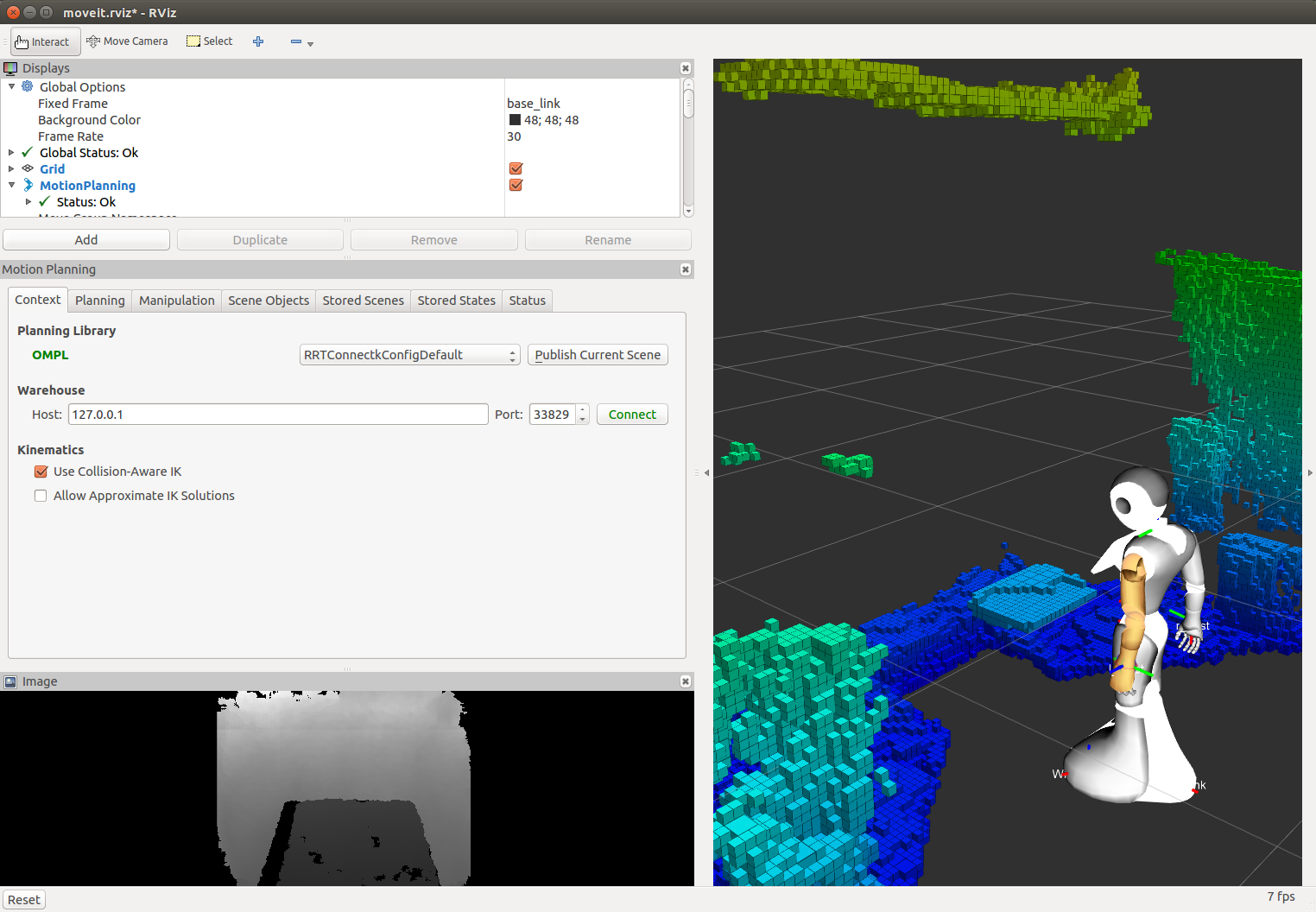

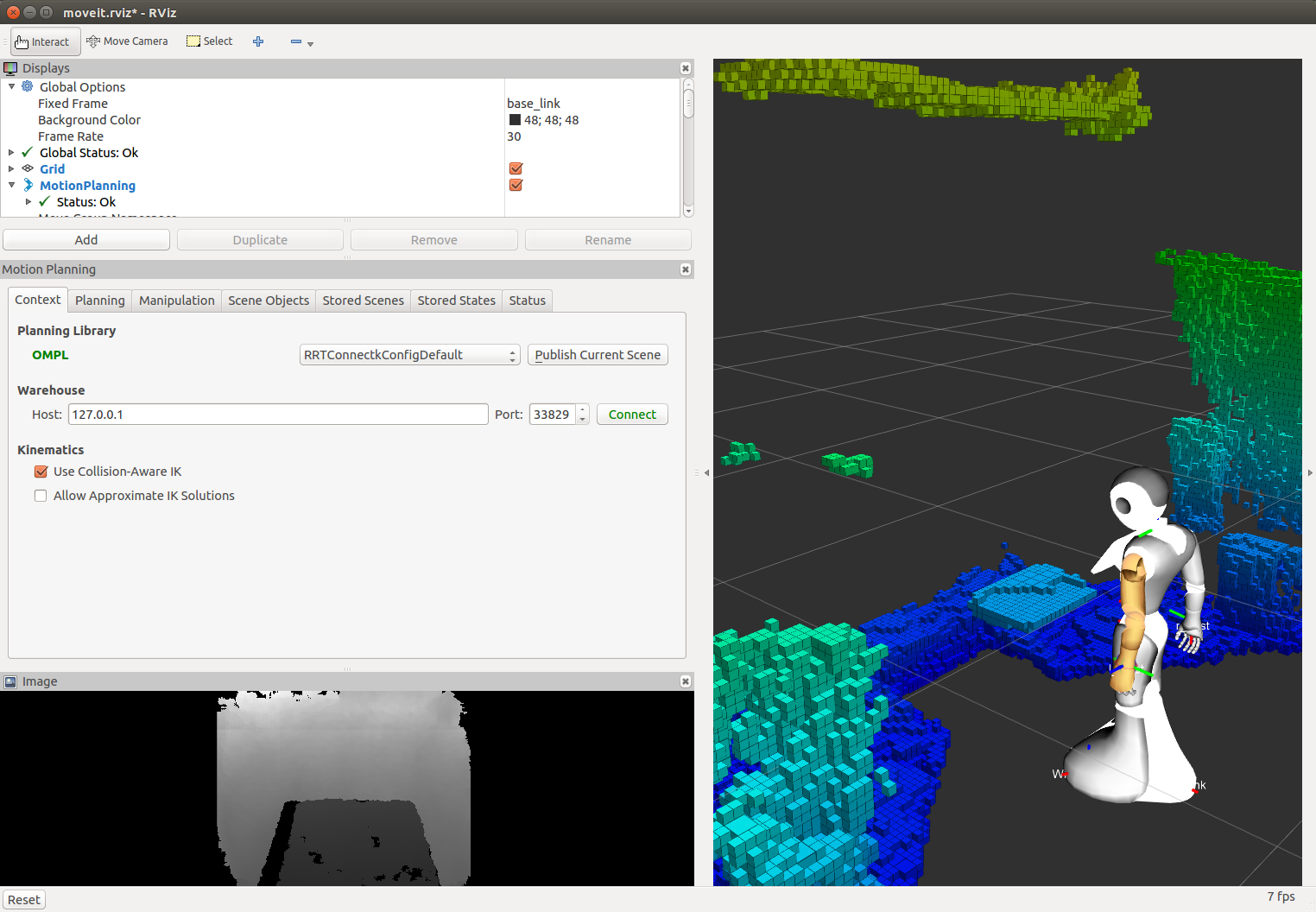

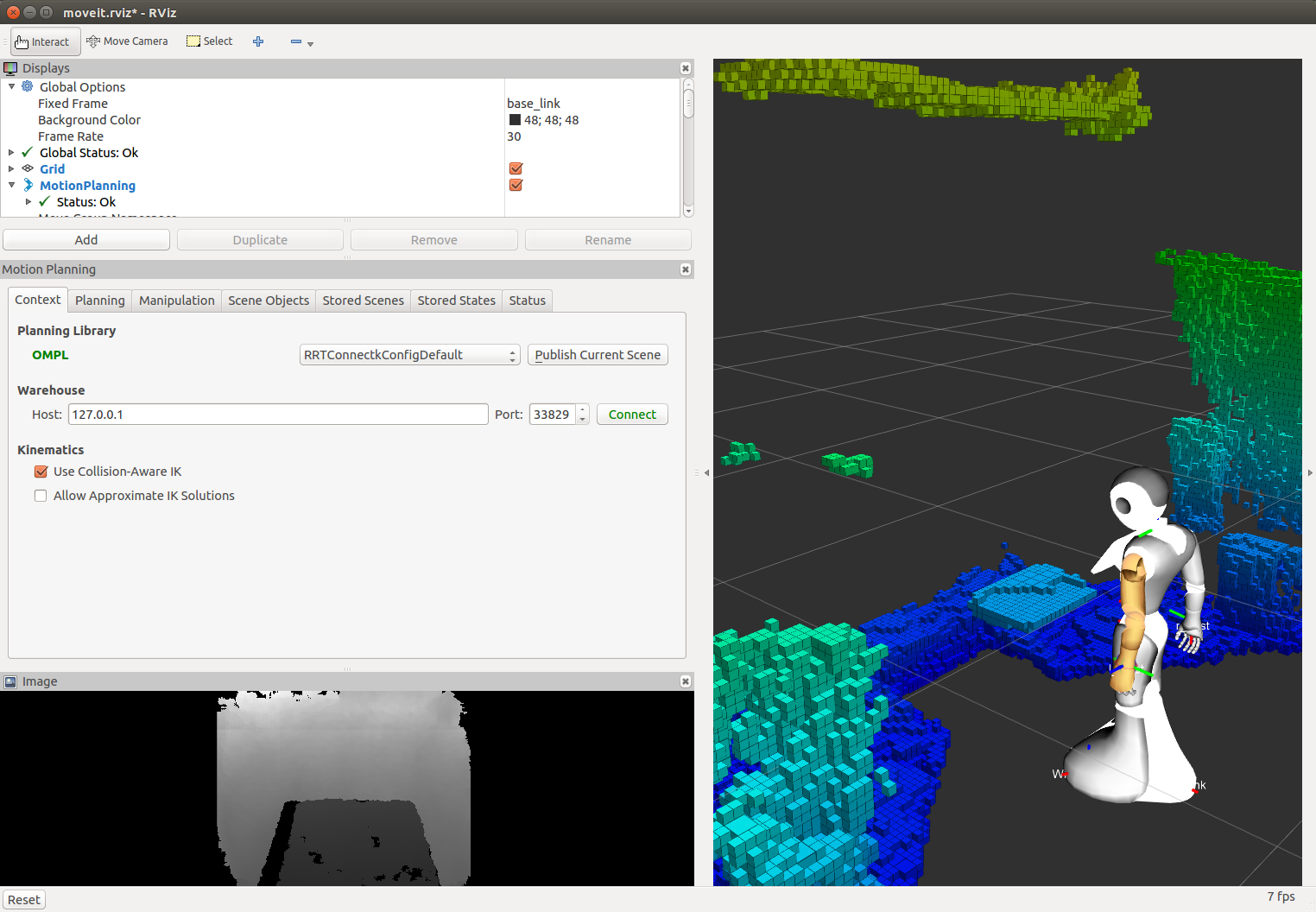

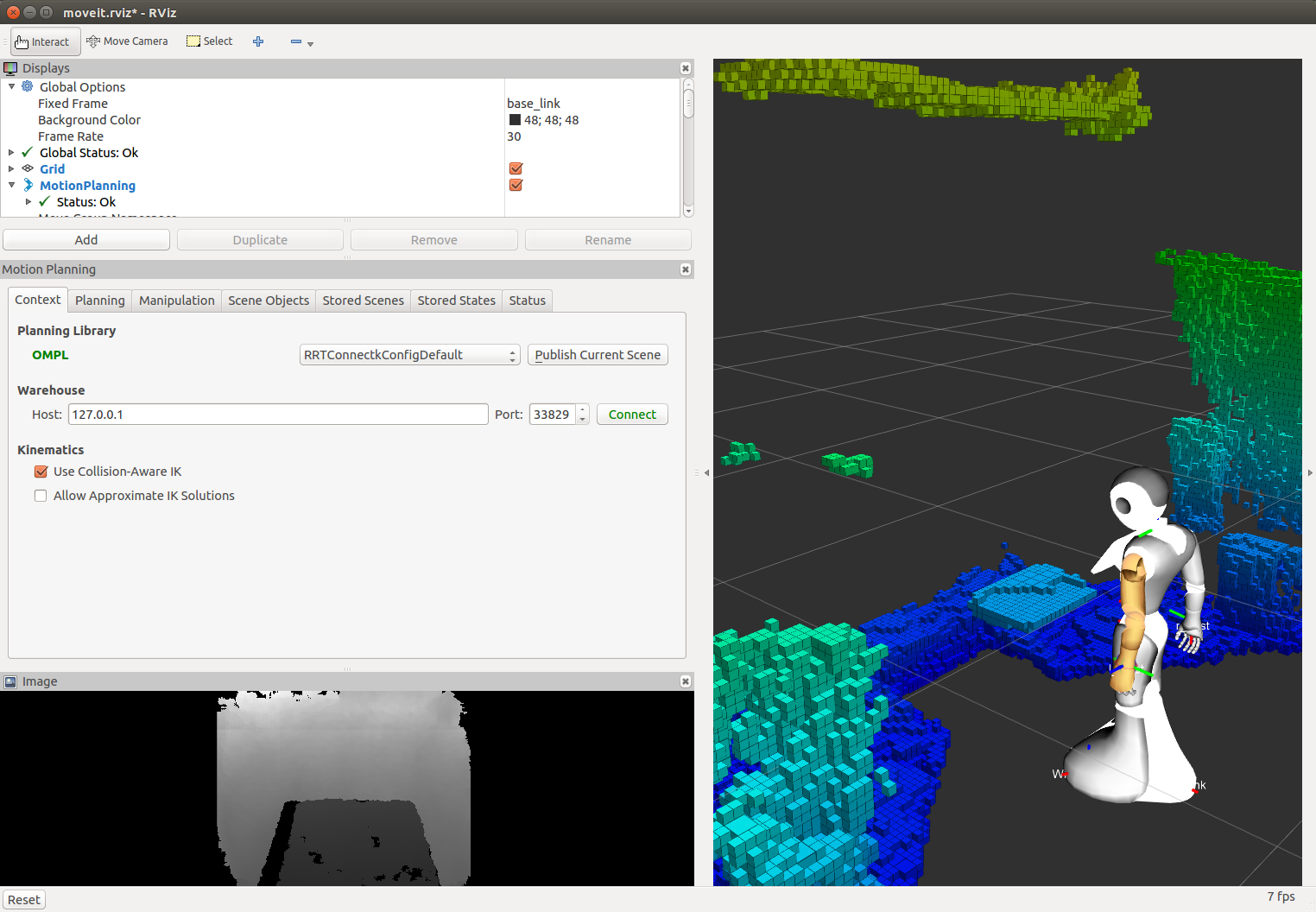

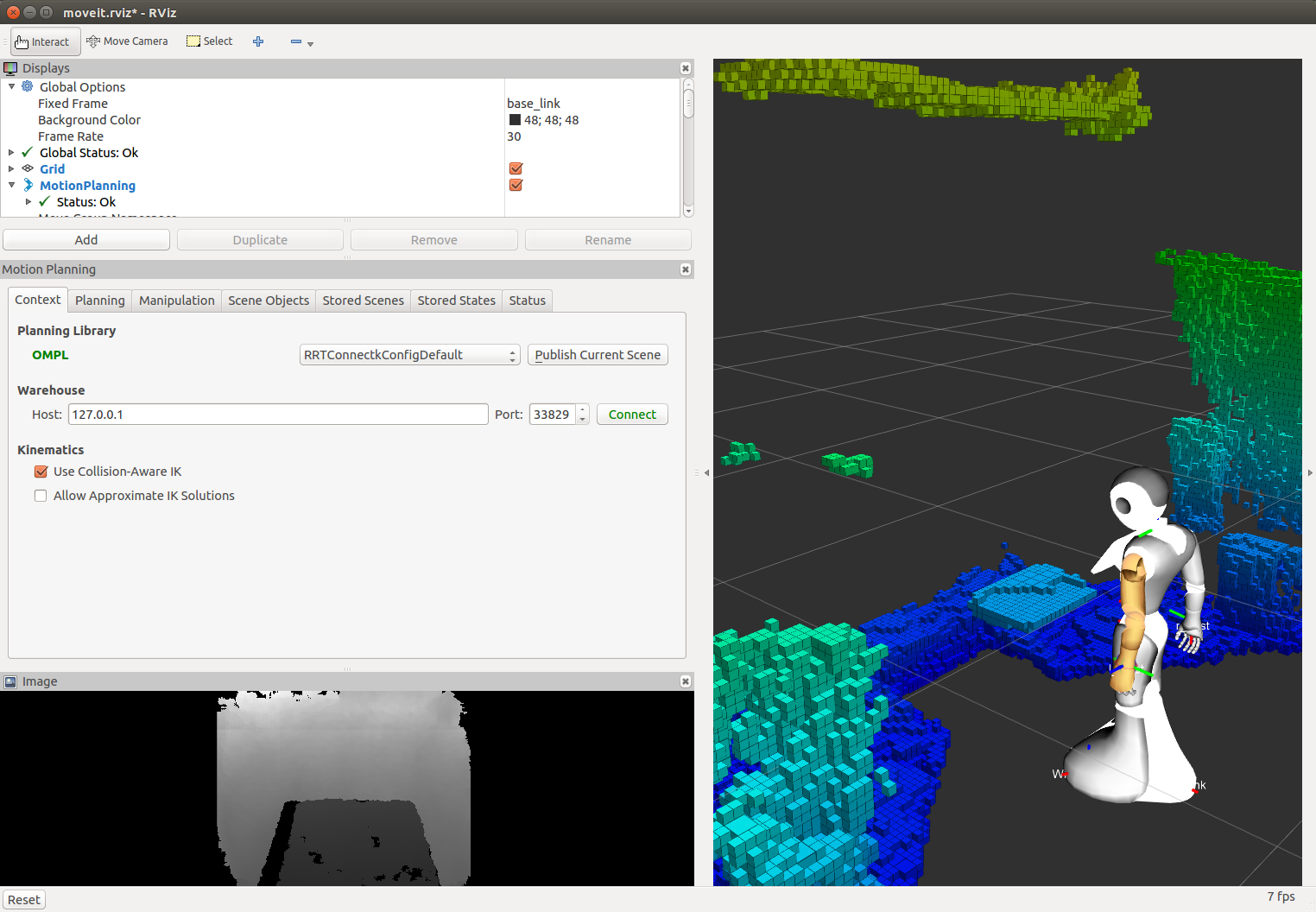

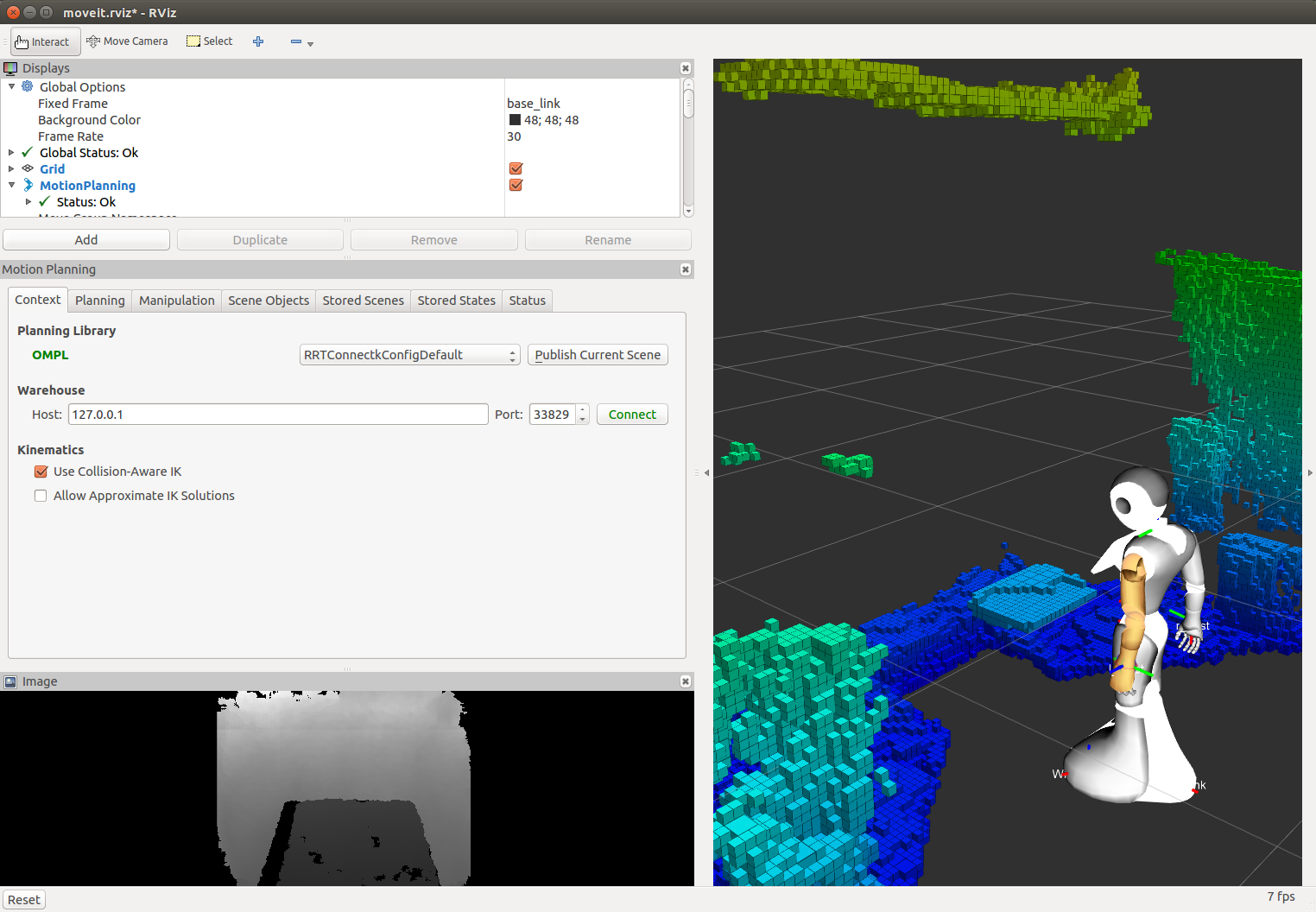

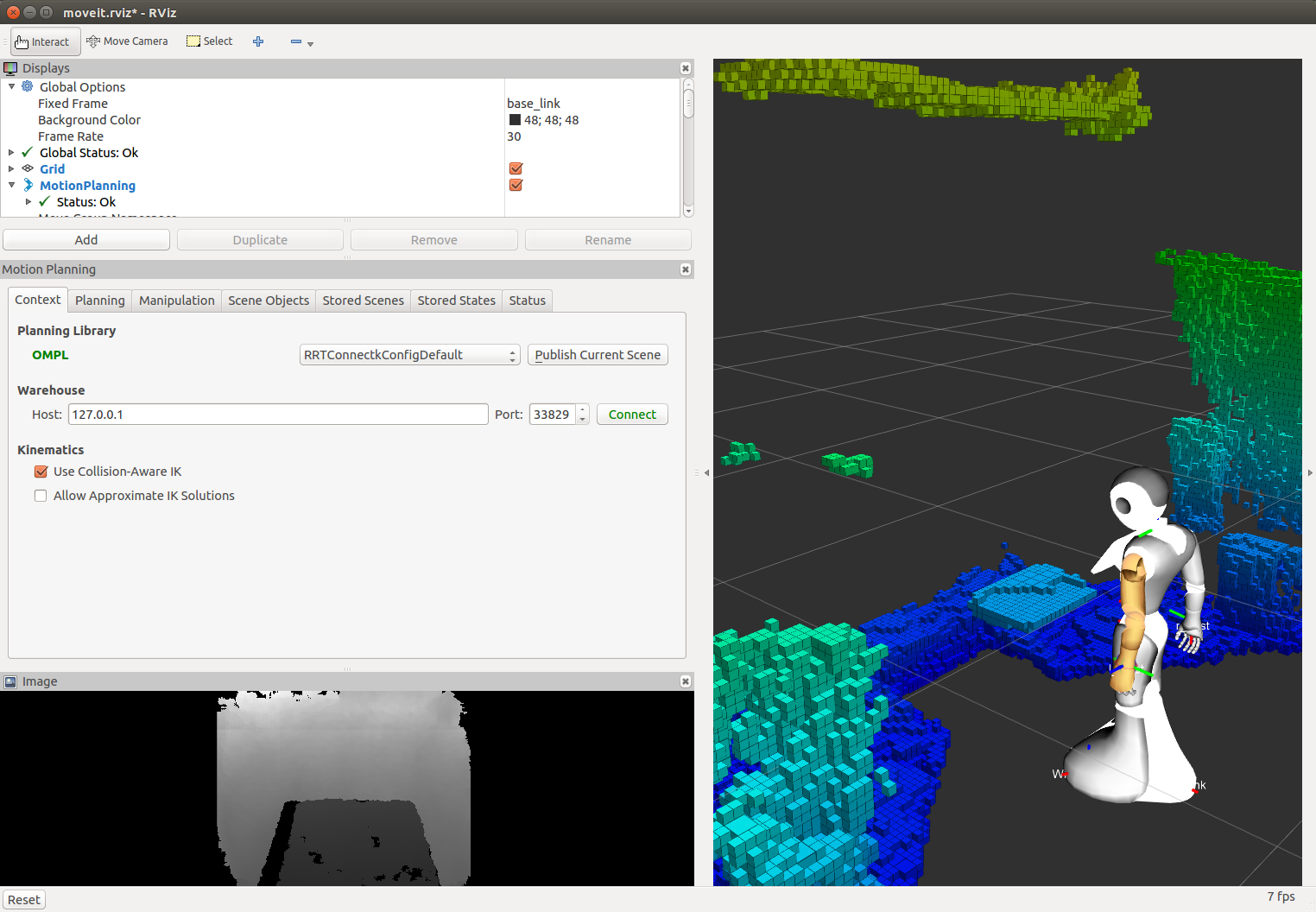

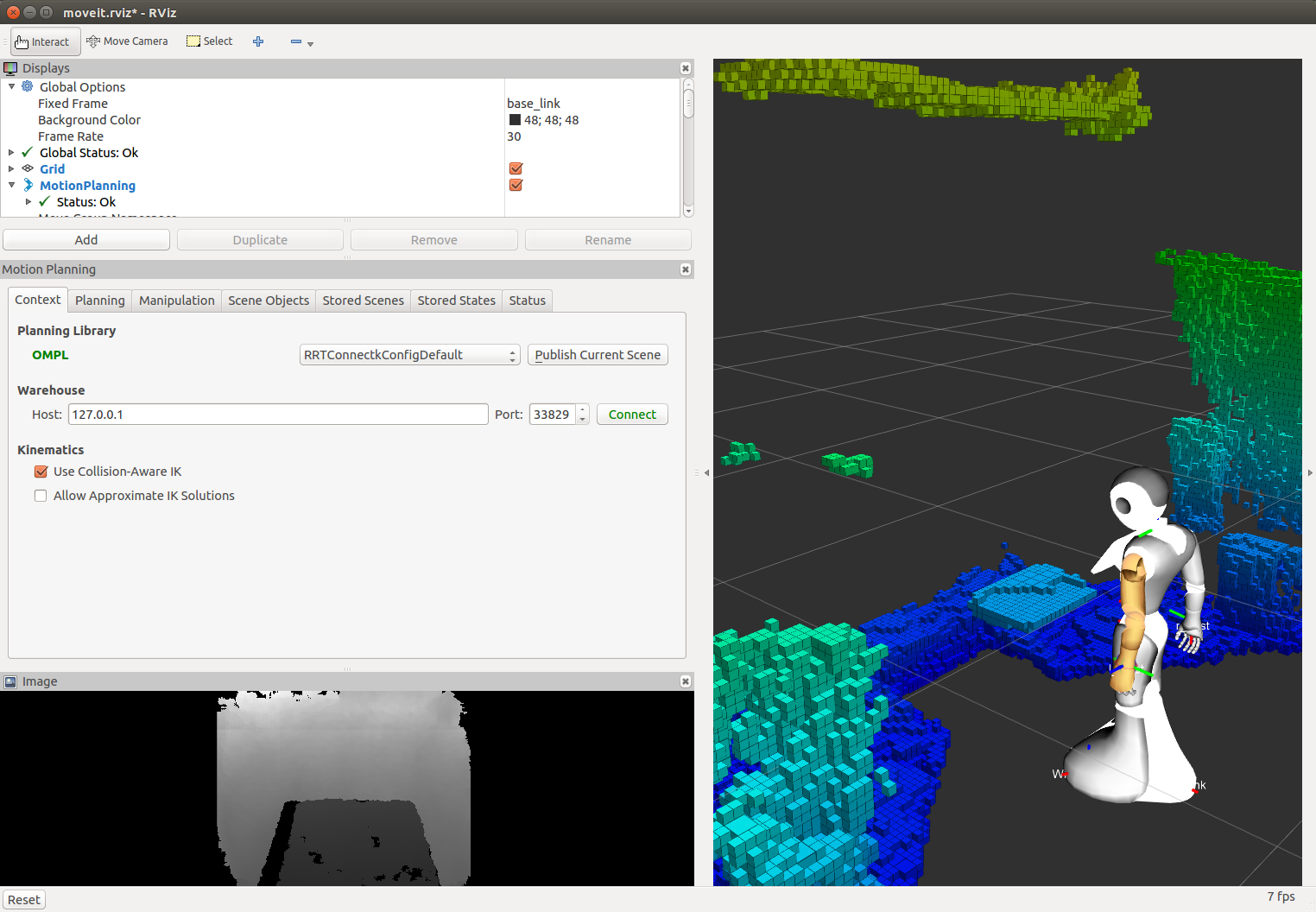

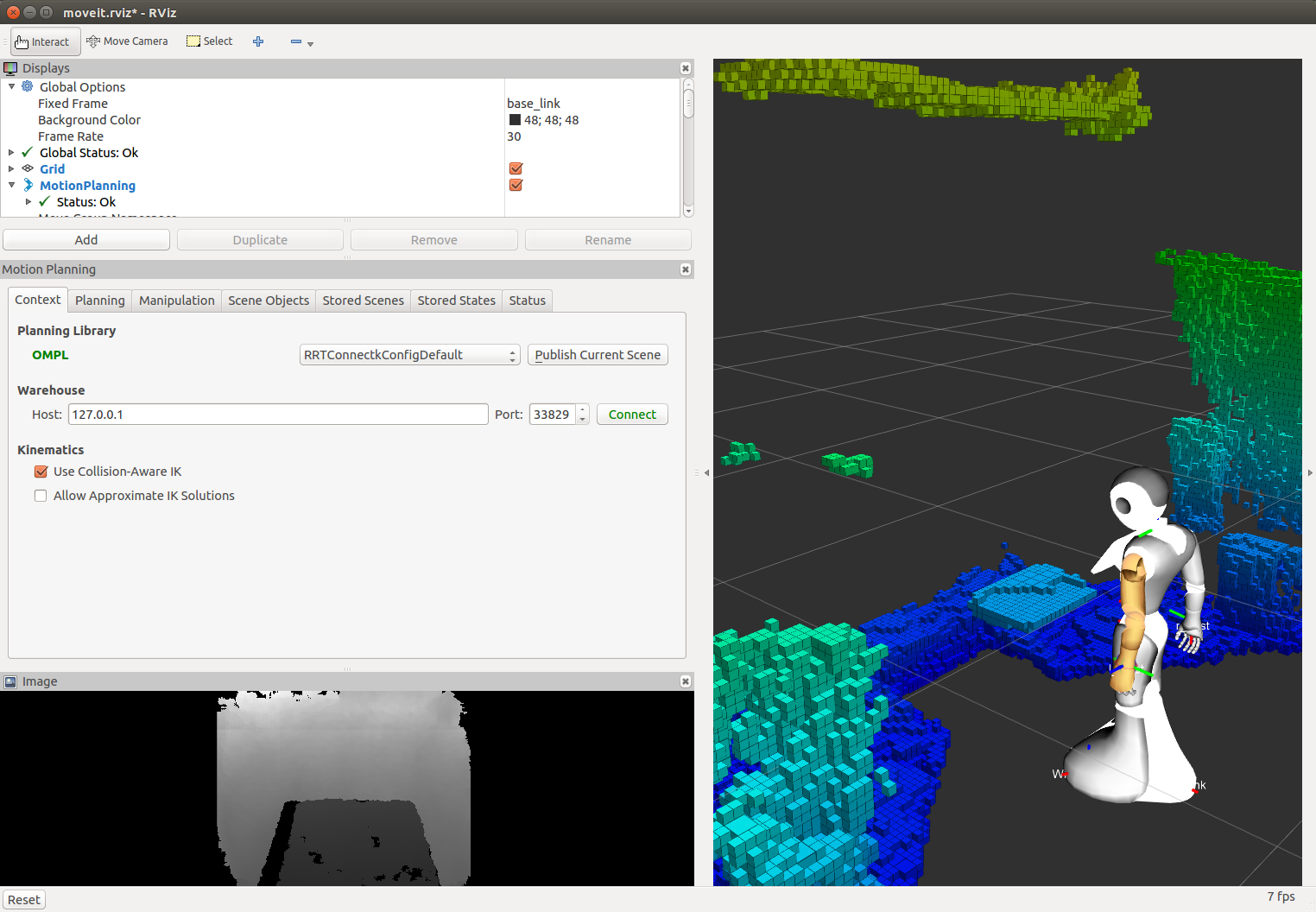

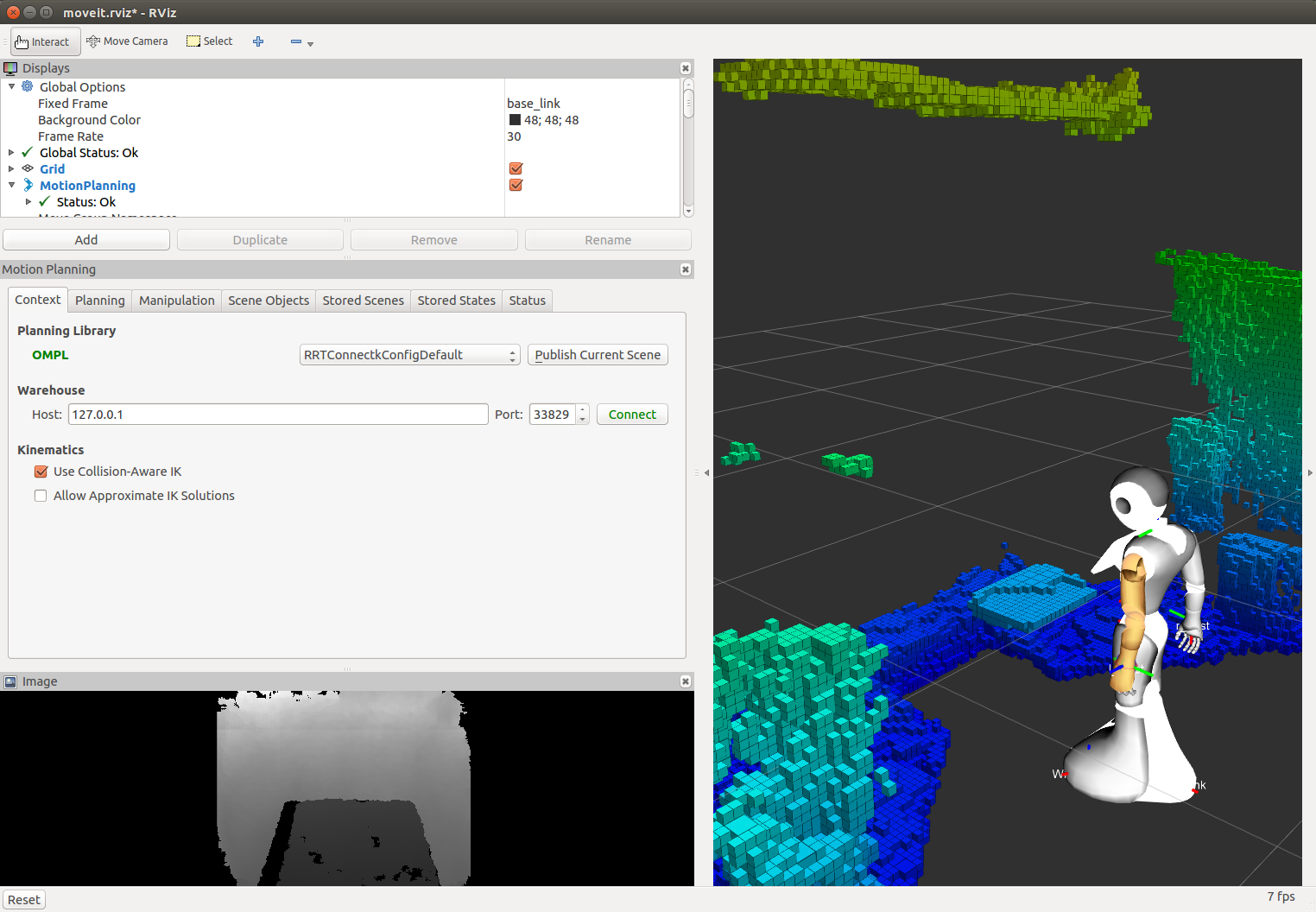

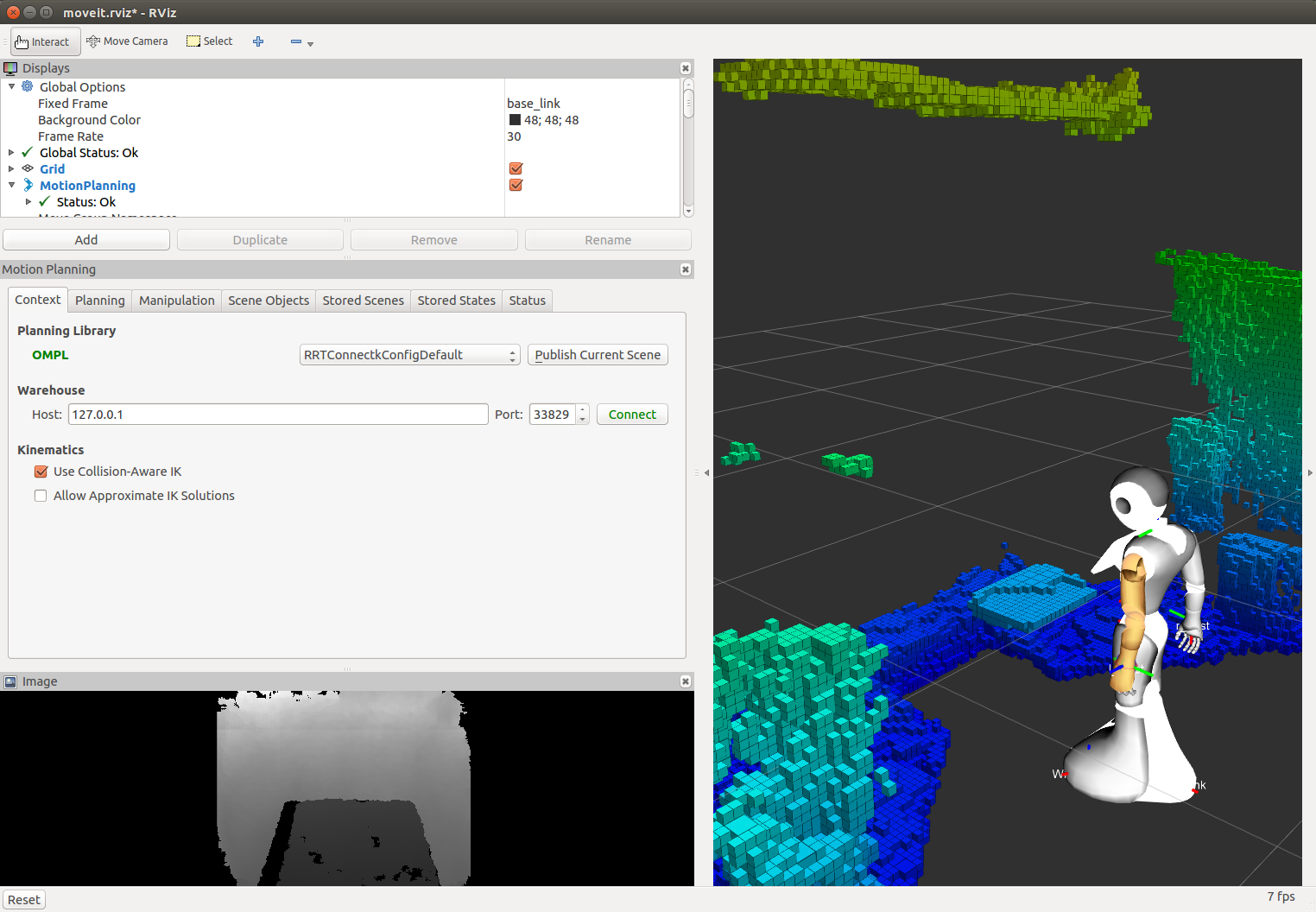

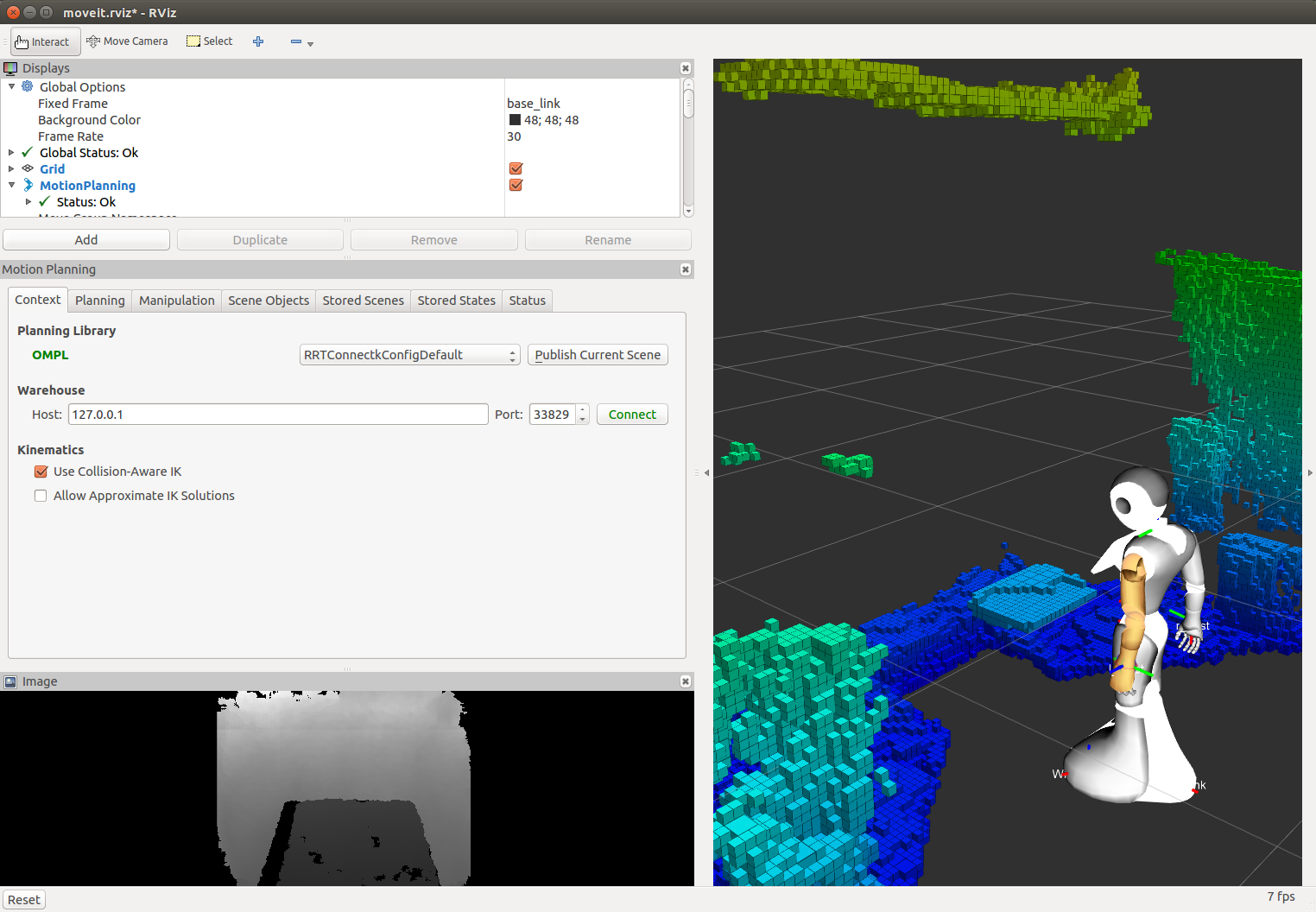

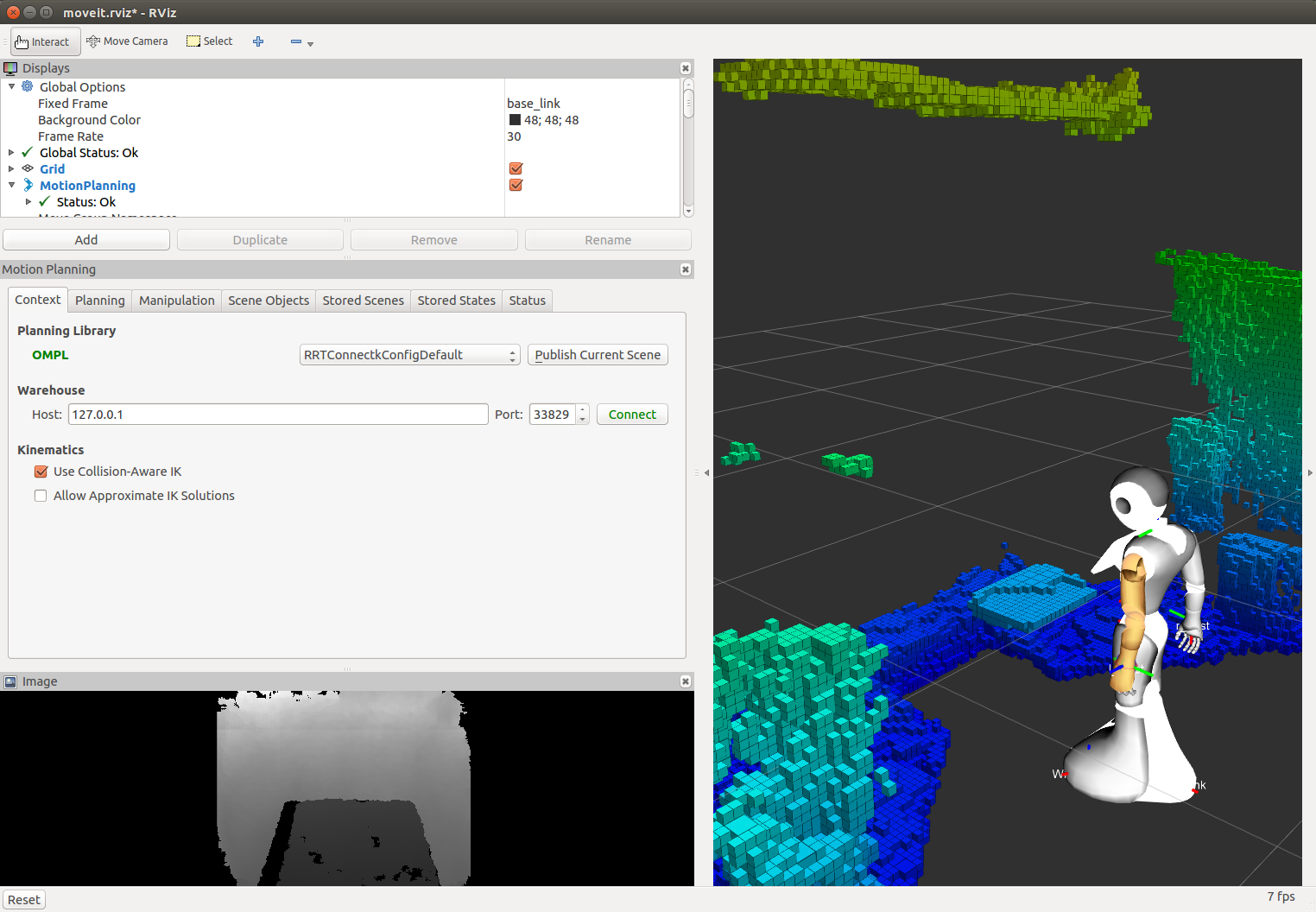

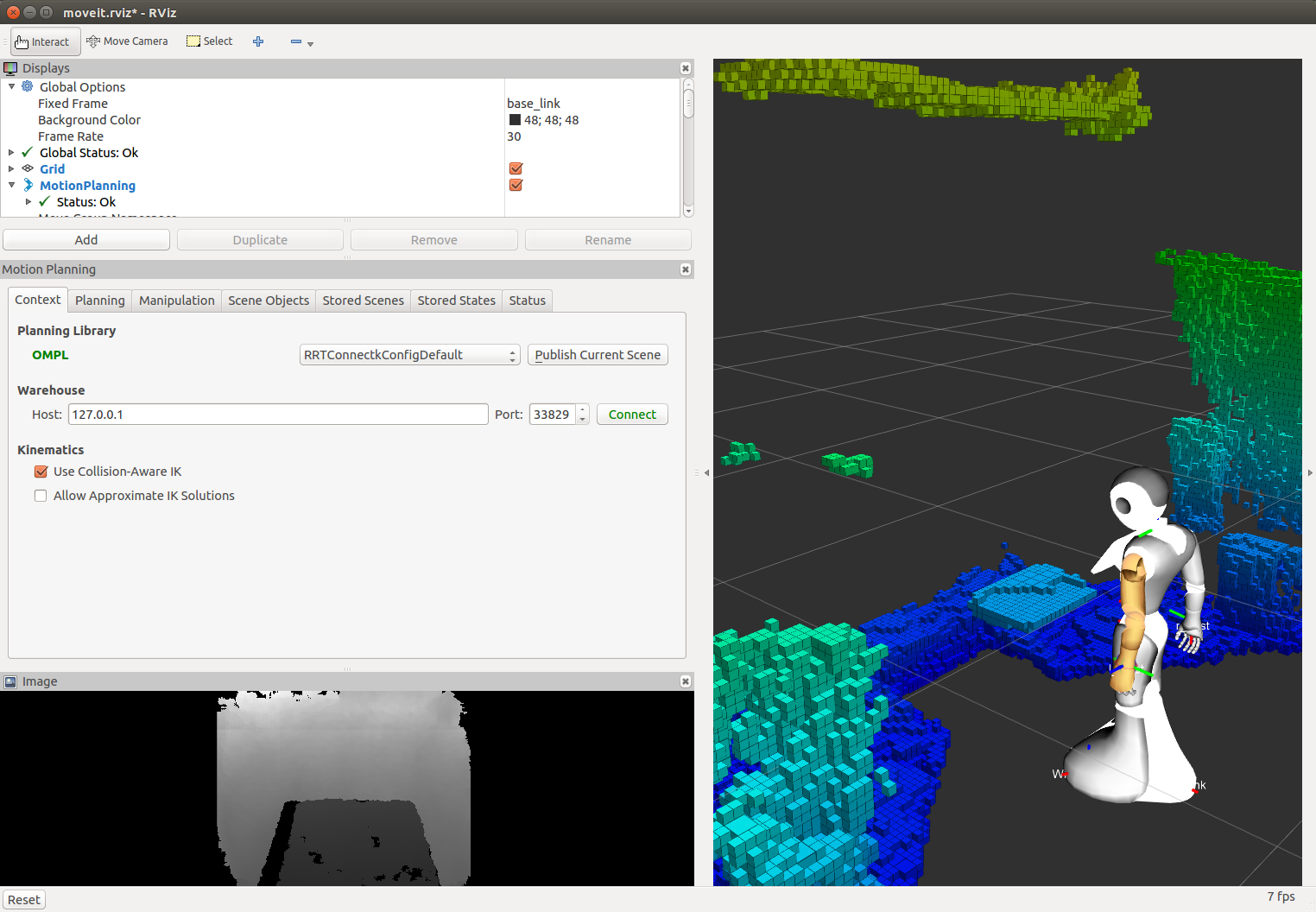

If you would like to do obstacle detection based on the Octomap library integrated in MoveIt, change the active camera to the depth camera through rqt_reconfigure. You can also tune acquisition parameters if needed:

rosrun rqt_reconfigure rqt_reconfigure

Once depth data are available, you can configure Octomap to represent the robot's space as a set of occupied and free voxels.

On a simulated robot (in Gazebo)

- To use MoveIt! with Gazebo simulation, you need:

File truncated at 100 lines see the full file


File truncated at 100 lines see the full file

CONTRIBUTING

Repository Summary

| Checkout URI | https://github.com/ros-naoqi/pepper_moveit_config.git |

| VCS Type | git |

| VCS Version | master |

| Last Updated | 2017-01-18 |

| Dev Status | MAINTAINED |

| Released | RELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| pepper_moveit_config | 0.0.8 |

README

pepper_moveit_config ===================

This is a MoveIt! config package generated by the MoveIt! wizard. It requires a Pepper model which you can get from here:

https://github.com/ros-naoqi/pepper_robot/tree/master/pepper_description or from the binary package : ros-indigo-pepper-description

The moveit package must be run on a remote computer and not directly on your robot.

1 Compile the package

pepper_moveit_config package doesn't need any compilation so running rospack profile should be enough.

And your ready to play with your Pepper

2 Run MoveIt

Without a robot -------------You can run this moveit package either unconnected to any robot or attached to a robot (real or simulated): For a standalone execution :

roslaunch pepper_moveit_config demo.launch

Once launching the demo, you should see the robot in MoveIt! GUI like this:

{width=”100.0%”}

{width=”100.0%”}

Check the box Allow Approximate IK Solutions (and optionally, choose the Planning library), and you are ready to control the robot.

On a real robot

To use MoveIt! on a real robot, you need to instanciate ROS controllers on the robot. To do so, you need:

First, set NAO_IP environment variable to your robot's IP address:

export NAO_IP=<YOUR_ROBOT_IP>

Launch the DCM bringup:

roslaunch pepper_dcm_bringup pepper_bringup.launch

Wait until it is ready, then run:

roslaunch pepper_moveit_config moveit_planner.launch

Using visual data from the robot's cameras

It is possible to integrate visual data from the robot's cameras into MoveIt that can be useful for obstacle detection. There are several ways to acquire visual data:

- using naoqi_driver

roslaunch naoqi_driver naoqi_driver.launch

- using pepper_sensors_py (get data from a single camera)

roslaunch pepper_sensors_py camera.launch

If you would like to do obstacle detection based on Octomap library

integrated in MoveIt, then change the active camera to the depth

camera through rqt_reconfigure. You can also tune acquisition

parameters if needed:

rosrun rqt_reconfigure rqt_reconfigure

Once depth data are available, you could configure Octomap to represents the robot's space as a set of occupied and free voxels.

{width=”100.0%”}

{width=”100.0%”}

On a simulated robot (in Gazebo)

- To use MoveIt! with Gazebo simulation, you need:

File truncated at 100 lines see the full file

CONTRIBUTING

Repository Summary

| Checkout URI | https://github.com/ros-naoqi/pepper_moveit_config.git |

| VCS Type | git |

| VCS Version | master |

| Last Updated | 2017-01-18 |

| Dev Status | MAINTAINED |

| Released | RELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| pepper_moveit_config | 0.0.8 |

README

pepper_moveit_config ===================

This is a MoveIt! config package generated by the MoveIt! wizard. It requires a Pepper model which you can get from here:

https://github.com/ros-naoqi/pepper_robot/tree/master/pepper_description or from the binary package : ros-indigo-pepper-description

The moveit package must be run on a remote computer and not directly on your robot.

1 Compile the package

pepper_moveit_config package doesn't need any compilation so running rospack profile should be enough.

And your ready to play with your Pepper

2 Run MoveIt

Without a robot -------------You can run this moveit package either unconnected to any robot or attached to a robot (real or simulated): For a standalone execution :

roslaunch pepper_moveit_config demo.launch

Once launching the demo, you should see the robot in MoveIt! GUI like this:

{width=”100.0%”}

{width=”100.0%”}

Check the box Allow Approximate IK Solutions (and optionally, choose the Planning library), and you are ready to control the robot.

On a real robot

To use MoveIt! on a real robot, you need to instanciate ROS controllers on the robot. To do so, you need:

First, set NAO_IP environment variable to your robot's IP address:

export NAO_IP=<YOUR_ROBOT_IP>

Launch the DCM bringup:

roslaunch pepper_dcm_bringup pepper_bringup.launch

Wait until it is ready, then run:

roslaunch pepper_moveit_config moveit_planner.launch

Using visual data from the robot's cameras

It is possible to integrate visual data from the robot's cameras into MoveIt that can be useful for obstacle detection. There are several ways to acquire visual data:

- using naoqi_driver

roslaunch naoqi_driver naoqi_driver.launch

- using pepper_sensors_py (get data from a single camera)

roslaunch pepper_sensors_py camera.launch

If you would like to do obstacle detection based on Octomap library

integrated in MoveIt, then change the active camera to the depth

camera through rqt_reconfigure. You can also tune acquisition

parameters if needed:

rosrun rqt_reconfigure rqt_reconfigure

Once depth data are available, you could configure Octomap to represents the robot's space as a set of occupied and free voxels.

{width=”100.0%”}

{width=”100.0%”}

On a simulated robot (in Gazebo)

- To use MoveIt! with Gazebo simulation, you need:

File truncated at 100 lines see the full file

CONTRIBUTING

Repository Summary

| Checkout URI | https://github.com/ros-naoqi/pepper_moveit_config.git |

| VCS Type | git |

| VCS Version | master |

| Last Updated | 2017-01-18 |

| Dev Status | MAINTAINED |

| Released | RELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| pepper_moveit_config | 0.0.8 |

README

pepper_moveit_config ===================

This is a MoveIt! config package generated by the MoveIt! wizard. It requires a Pepper model which you can get from here:

https://github.com/ros-naoqi/pepper_robot/tree/master/pepper_description or from the binary package : ros-indigo-pepper-description

The moveit package must be run on a remote computer and not directly on your robot.

1 Compile the package

pepper_moveit_config package doesn't need any compilation so running rospack profile should be enough.

And your ready to play with your Pepper

2 Run MoveIt

Without a robot -------------You can run this moveit package either unconnected to any robot or attached to a robot (real or simulated): For a standalone execution :

roslaunch pepper_moveit_config demo.launch

Once launching the demo, you should see the robot in MoveIt! GUI like this:

{width=”100.0%”}

{width=”100.0%”}

Check the box Allow Approximate IK Solutions (and optionally, choose the Planning library), and you are ready to control the robot.

On a real robot

To use MoveIt! on a real robot, you need to instanciate ROS controllers on the robot. To do so, you need:

First, set NAO_IP environment variable to your robot's IP address:

export NAO_IP=<YOUR_ROBOT_IP>

Launch the DCM bringup:

roslaunch pepper_dcm_bringup pepper_bringup.launch

Wait until it is ready, then run:

roslaunch pepper_moveit_config moveit_planner.launch

Using visual data from the robot's cameras

It is possible to integrate visual data from the robot's cameras into MoveIt that can be useful for obstacle detection. There are several ways to acquire visual data:

- using naoqi_driver

roslaunch naoqi_driver naoqi_driver.launch

- using pepper_sensors_py (get data from a single camera)

roslaunch pepper_sensors_py camera.launch

If you would like to do obstacle detection based on Octomap library

integrated in MoveIt, then change the active camera to the depth

camera through rqt_reconfigure. You can also tune acquisition

parameters if needed:

rosrun rqt_reconfigure rqt_reconfigure

Once depth data are available, you could configure Octomap to represents the robot's space as a set of occupied and free voxels.

{width=”100.0%”}

{width=”100.0%”}

On a simulated robot (in Gazebo)

- To use MoveIt! with Gazebo simulation, you need:

File truncated at 100 lines see the full file

CONTRIBUTING

Repository Summary

| Checkout URI | https://github.com/ros-naoqi/pepper_moveit_config.git |

| VCS Type | git |

| VCS Version | master |

| Last Updated | 2017-01-18 |

| Dev Status | MAINTAINED |

| Released | RELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| pepper_moveit_config | 0.0.8 |

README

pepper_moveit_config ===================

This is a MoveIt! config package generated by the MoveIt! wizard. It requires a Pepper model which you can get from here:

https://github.com/ros-naoqi/pepper_robot/tree/master/pepper_description or from the binary package : ros-indigo-pepper-description

The moveit package must be run on a remote computer and not directly on your robot.

1 Compile the package

pepper_moveit_config package doesn't need any compilation so running rospack profile should be enough.

And your ready to play with your Pepper

2 Run MoveIt

Without a robot -------------You can run this moveit package either unconnected to any robot or attached to a robot (real or simulated): For a standalone execution :

roslaunch pepper_moveit_config demo.launch

Once launching the demo, you should see the robot in MoveIt! GUI like this:

{width=”100.0%”}

{width=”100.0%”}

Check the box Allow Approximate IK Solutions (and optionally, choose the Planning library), and you are ready to control the robot.

On a real robot

To use MoveIt! on a real robot, you need to instanciate ROS controllers on the robot. To do so, you need:

First, set NAO_IP environment variable to your robot's IP address:

export NAO_IP=<YOUR_ROBOT_IP>

Launch the DCM bringup:

roslaunch pepper_dcm_bringup pepper_bringup.launch

Wait until it is ready, then run:

roslaunch pepper_moveit_config moveit_planner.launch

Using visual data from the robot's cameras

It is possible to integrate visual data from the robot's cameras into MoveIt that can be useful for obstacle detection. There are several ways to acquire visual data:

- using naoqi_driver

roslaunch naoqi_driver naoqi_driver.launch

- using pepper_sensors_py (get data from a single camera)

roslaunch pepper_sensors_py camera.launch

If you would like to do obstacle detection based on Octomap library

integrated in MoveIt, then change the active camera to the depth

camera through rqt_reconfigure. You can also tune acquisition

parameters if needed:

rosrun rqt_reconfigure rqt_reconfigure

Once depth data are available, you could configure Octomap to represents the robot's space as a set of occupied and free voxels.

{width=”100.0%”}

{width=”100.0%”}

On a simulated robot (in Gazebo)

- To use MoveIt! with Gazebo simulation, you need:

File truncated at 100 lines see the full file

CONTRIBUTING

Repository Summary

| Checkout URI | https://github.com/ros-naoqi/pepper_moveit_config.git |

| VCS Type | git |

| VCS Version | master |

| Last Updated | 2017-01-18 |

| Dev Status | MAINTAINED |

| Released | RELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| pepper_moveit_config | 0.0.8 |

README

pepper_moveit_config ===================

This is a MoveIt! config package generated by the MoveIt! wizard. It requires a Pepper model which you can get from here:

https://github.com/ros-naoqi/pepper_robot/tree/master/pepper_description or from the binary package : ros-indigo-pepper-description

The moveit package must be run on a remote computer and not directly on your robot.

1 Compile the package

pepper_moveit_config package doesn't need any compilation so running rospack profile should be enough.

And your ready to play with your Pepper

2 Run MoveIt

Without a robot -------------You can run this moveit package either unconnected to any robot or attached to a robot (real or simulated): For a standalone execution :

roslaunch pepper_moveit_config demo.launch

Once launching the demo, you should see the robot in MoveIt! GUI like this:

{width=”100.0%”}

{width=”100.0%”}

Check the box Allow Approximate IK Solutions (and optionally, choose the Planning library), and you are ready to control the robot.

On a real robot

To use MoveIt! on a real robot, you need to instanciate ROS controllers on the robot. To do so, you need:

First, set NAO_IP environment variable to your robot's IP address:

export NAO_IP=<YOUR_ROBOT_IP>

Launch the DCM bringup:

roslaunch pepper_dcm_bringup pepper_bringup.launch

Wait until it is ready, then run:

roslaunch pepper_moveit_config moveit_planner.launch

Using visual data from the robot's cameras

It is possible to integrate visual data from the robot's cameras into MoveIt that can be useful for obstacle detection. There are several ways to acquire visual data:

- using naoqi_driver

roslaunch naoqi_driver naoqi_driver.launch

- using pepper_sensors_py (get data from a single camera)

roslaunch pepper_sensors_py camera.launch

If you would like to do obstacle detection based on Octomap library

integrated in MoveIt, then change the active camera to the depth

camera through rqt_reconfigure. You can also tune acquisition

parameters if needed:

rosrun rqt_reconfigure rqt_reconfigure

Once depth data are available, you could configure Octomap to represents the robot's space as a set of occupied and free voxels.

{width=”100.0%”}

{width=”100.0%”}

On a simulated robot (in Gazebo)

- To use MoveIt! with Gazebo simulation, you need:

File truncated at 100 lines see the full file

CONTRIBUTING

Repository Summary

| Checkout URI | https://github.com/ros-naoqi/pepper_moveit_config.git |

| VCS Type | git |

| VCS Version | master |

| Last Updated | 2017-01-18 |

| Dev Status | MAINTAINED |

| Released | RELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| pepper_moveit_config | 0.0.8 |

README

pepper_moveit_config ===================

This is a MoveIt! config package generated by the MoveIt! wizard. It requires a Pepper model which you can get from here:

https://github.com/ros-naoqi/pepper_robot/tree/master/pepper_description or from the binary package : ros-indigo-pepper-description

The moveit package must be run on a remote computer and not directly on your robot.

1 Compile the package

pepper_moveit_config package doesn't need any compilation so running rospack profile should be enough.

And your ready to play with your Pepper

2 Run MoveIt

Without a robot -------------You can run this moveit package either unconnected to any robot or attached to a robot (real or simulated): For a standalone execution :

roslaunch pepper_moveit_config demo.launch

Once launching the demo, you should see the robot in MoveIt! GUI like this:

{width=”100.0%”}

{width=”100.0%”}

Check the box Allow Approximate IK Solutions (and optionally, choose the Planning library), and you are ready to control the robot.

On a real robot

To use MoveIt! on a real robot, you need to instanciate ROS controllers on the robot. To do so, you need:

First, set NAO_IP environment variable to your robot's IP address:

export NAO_IP=<YOUR_ROBOT_IP>

Launch the DCM bringup:

roslaunch pepper_dcm_bringup pepper_bringup.launch

Wait until it is ready, then run:

roslaunch pepper_moveit_config moveit_planner.launch

Using visual data from the robot's cameras

It is possible to integrate visual data from the robot's cameras into MoveIt that can be useful for obstacle detection. There are several ways to acquire visual data:

- using naoqi_driver

roslaunch naoqi_driver naoqi_driver.launch

- using pepper_sensors_py (get data from a single camera)

roslaunch pepper_sensors_py camera.launch

If you would like to do obstacle detection based on Octomap library

integrated in MoveIt, then change the active camera to the depth

camera through rqt_reconfigure. You can also tune acquisition

parameters if needed:

rosrun rqt_reconfigure rqt_reconfigure

Once depth data are available, you could configure Octomap to represents the robot's space as a set of occupied and free voxels.

{width=”100.0%”}

{width=”100.0%”}

On a simulated robot (in Gazebo)

- To use MoveIt! with Gazebo simulation, you need:

File truncated at 100 lines see the full file

CONTRIBUTING

Repository Summary

| Checkout URI | https://github.com/ros-naoqi/pepper_moveit_config.git |

| VCS Type | git |

| VCS Version | master |

| Last Updated | 2017-01-18 |

| Dev Status | MAINTAINED |

| Released | RELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| pepper_moveit_config | 0.0.8 |

README

pepper_moveit_config ===================

This is a MoveIt! config package generated by the MoveIt! wizard. It requires a Pepper model which you can get from here:

https://github.com/ros-naoqi/pepper_robot/tree/master/pepper_description or from the binary package : ros-indigo-pepper-description

The moveit package must be run on a remote computer and not directly on your robot.

1 Compile the package

pepper_moveit_config package doesn't need any compilation so running rospack profile should be enough.

And your ready to play with your Pepper

2 Run MoveIt

Without a robot -------------You can run this moveit package either unconnected to any robot or attached to a robot (real or simulated): For a standalone execution :

roslaunch pepper_moveit_config demo.launch

Once launching the demo, you should see the robot in MoveIt! GUI like this:

{width=”100.0%”}

{width=”100.0%”}

Check the box Allow Approximate IK Solutions (and optionally, choose the Planning library), and you are ready to control the robot.

On a real robot

To use MoveIt! on a real robot, you need to instanciate ROS controllers on the robot. To do so, you need:

First, set NAO_IP environment variable to your robot's IP address:

export NAO_IP=<YOUR_ROBOT_IP>

Launch the DCM bringup:

roslaunch pepper_dcm_bringup pepper_bringup.launch

Wait until it is ready, then run:

roslaunch pepper_moveit_config moveit_planner.launch

Using visual data from the robot's cameras

It is possible to integrate visual data from the robot's cameras into MoveIt that can be useful for obstacle detection. There are several ways to acquire visual data:

- using naoqi_driver

roslaunch naoqi_driver naoqi_driver.launch

- using pepper_sensors_py (get data from a single camera)

roslaunch pepper_sensors_py camera.launch

If you would like to do obstacle detection based on Octomap library

integrated in MoveIt, then change the active camera to the depth

camera through rqt_reconfigure. You can also tune acquisition

parameters if needed:

rosrun rqt_reconfigure rqt_reconfigure

Once depth data are available, you could configure Octomap to represents the robot's space as a set of occupied and free voxels.

{width=”100.0%”}

{width=”100.0%”}

On a simulated robot (in Gazebo)

- To use MoveIt! with Gazebo simulation, you need:

File truncated at 100 lines see the full file

CONTRIBUTING

Repository Summary

| Checkout URI | https://github.com/ros-naoqi/pepper_moveit_config.git |

| VCS Type | git |

| VCS Version | master |

| Last Updated | 2017-01-18 |

| Dev Status | MAINTAINED |

| Released | RELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| pepper_moveit_config | 0.0.8 |

README

pepper_moveit_config ===================

This is a MoveIt! config package generated by the MoveIt! wizard. It requires a Pepper model which you can get from here:

https://github.com/ros-naoqi/pepper_robot/tree/master/pepper_description or from the binary package : ros-indigo-pepper-description

The moveit package must be run on a remote computer and not directly on your robot.

1 Compile the package

pepper_moveit_config package doesn't need any compilation so running rospack profile should be enough.

And your ready to play with your Pepper

2 Run MoveIt

Without a robot -------------You can run this moveit package either unconnected to any robot or attached to a robot (real or simulated): For a standalone execution :

roslaunch pepper_moveit_config demo.launch

Once launching the demo, you should see the robot in MoveIt! GUI like this:

{width=”100.0%”}

{width=”100.0%”}

Check the box Allow Approximate IK Solutions (and optionally, choose the Planning library), and you are ready to control the robot.

On a real robot

To use MoveIt! on a real robot, you need to instanciate ROS controllers on the robot. To do so, you need:

First, set NAO_IP environment variable to your robot's IP address:

export NAO_IP=<YOUR_ROBOT_IP>

Launch the DCM bringup:

roslaunch pepper_dcm_bringup pepper_bringup.launch

Wait until it is ready, then run:

roslaunch pepper_moveit_config moveit_planner.launch

Using visual data from the robot's cameras

It is possible to integrate visual data from the robot's cameras into MoveIt that can be useful for obstacle detection. There are several ways to acquire visual data:

- using naoqi_driver

roslaunch naoqi_driver naoqi_driver.launch

- using pepper_sensors_py (get data from a single camera)

roslaunch pepper_sensors_py camera.launch

If you would like to do obstacle detection based on Octomap library

integrated in MoveIt, then change the active camera to the depth

camera through rqt_reconfigure. You can also tune acquisition

parameters if needed:

rosrun rqt_reconfigure rqt_reconfigure

Once depth data are available, you could configure Octomap to represents the robot's space as a set of occupied and free voxels.

{width=”100.0%”}

{width=”100.0%”}

On a simulated robot (in Gazebo)

- To use MoveIt! with Gazebo simulation, you need:

File truncated at 100 lines see the full file